The Scoring Reference

The Website AI Score is a composite measure of how reliably an AI system can crawl, parse, chunk, embed, and retrieve the content on a given URL. It is calculated from six independent signal categories, each targeting a distinct layer of the machine-readable infrastructure of a web page.

This is the complete reference document for the scoring system: what each signal measures, how it is weighted, what failure looks like at the page level, and what a validated fix produces.

1. Signal Category: Rendering (Weight: High)

What it measures. The difference in readable text content between the raw HTML returned by a standard HTTP GET request (what a no-JavaScript crawler sees) and the fully rendered page. Specifically: does the page deliver its core semantic payload (headings, body content, prices, product descriptions) in the raw server response, or does that content only appear after client-side JavaScript executes?

How it is scored. The engine makes two requests and calculates a content delta. Pages that deliver 90% or more of their readable content in the initial server response score in the Optimized range. A delta above 40% — where less than 60% of content is present pre-hydration — produces a failing score. The specific threshold depends on which content is missing: core entity information (product name, service description, pricing) missing from the initial response is weighted more severely than supplementary content.
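The delta calculation can be sketched as follows. This is a minimal illustration, not the engine's implementation: the word-level comparison, the function names, and the "Readable" label for the middle band are assumptions; only the 90% and 40% thresholds come from the text above.

```python
def content_delta(raw_text: str, rendered_text: str) -> float:
    """Fraction of rendered words that are absent from the raw server response.

    Assumption: whitespace-separated words stand in for "readable content".
    """
    rendered_words = rendered_text.split()
    if not rendered_words:
        return 0.0
    raw_words = set(raw_text.split())
    missing = sum(1 for word in rendered_words if word not in raw_words)
    return missing / len(rendered_words)


def rendering_band(delta: float) -> str:
    """Map a content delta to a band using the thresholds stated in the text."""
    if delta <= 0.10:   # 90% or more of the content is in the initial response
        return "Optimized"
    if delta > 0.40:    # less than 60% of the content is present pre-hydration
        return "Fail"
    return "Readable"   # assumed name for the middle band


if __name__ == "__main__":
    raw = "Acme Widget"
    rendered = "Acme Widget costs ten dollars"
    delta = content_delta(raw, rendered)
    print(f"delta={delta:.2f} band={rendering_band(delta)}")
```

The sketch ignores the heavier weighting of missing core entity information, which would need a way to tag which words belong to the product name, description, or price.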

What failure looks like. A Next.js application that fetches all of its content in the browser after the page loads, so the pre-rendered HTML contains only an empty shell. An Angular SPA that delivers only a root <app-root> element in its initial HTML. A React site where all text content is injected by useEffect() hooks that run post-mount.

The validated fix. Enable Server-Side Rendering or Static Site Generation on your framework. For Next.js: getServerSideProps or getStaticProps on page components in the Pages Router, or Server Components in the App Router. For Nuxt: universal rendering mode. After deployment, the re-audit should show the content delta collapsing to near zero, with the rendering signal moving from Fail to Pass.

2. Signal Category: Schema Validity (Weight: High)

What it measures. Three nested checks: presence of any structured data markup; type specificity of the schema in use; and nesting integrity of parent-child entity relationships.

How it is scored. Pages with zero schema markup score at the floor of this signal regardless of content quality. Pages with generic schema (Organization with only name and url populated) score in the low-Readable range. Pages with specific types (TechArticle, Product, Service) and populated high-signal properties (knowsAbout, sameAs, mentions, description) score in the Optimized range. Nesting integrity (whether child objects such as Offer and MerchantReturnPolicy are correctly attached to their parent Product entity) is a pass/fail gate that can cap the schema score even if type specificity is high.

What failure looks like. A SaaS homepage with no JSON-LD whatsoever. An e-commerce product page with a Product schema that has a name and image but no offers property, making the price machine-unreadable. A blog with Article schema where the author field contains a plain text string rather than a Person entity with an associated URL.
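The three nested checks might look roughly like this when applied to JSON-LD blocks that have already been parsed into dictionaries. The function names and the GENERIC_TYPES set are hypothetical, and the sketch simplifies in two ways: it treats Organization as generic regardless of which properties are populated, and it assumes each @type is a single string.

```python
# Assumption: an illustrative set of types treated as "generic".
GENERIC_TYPES = {"Thing", "Organization", "WebSite", "WebPage"}


def has_valid_offer(product: dict) -> bool:
    """Nesting check: a Product must carry at least one Offer with a price."""
    offers = product.get("offers")
    if offers is None:
        return False
    offer_list = offers if isinstance(offers, list) else [offers]
    return bool(offer_list) and all(
        isinstance(offer, dict)
        and offer.get("@type") in {"Offer", "AggregateOffer"}
        and ("price" in offer or "lowPrice" in offer)
        for offer in offer_list
    )


def schema_checks(blocks: list[dict]) -> dict:
    """Run the presence, type-specificity, and nesting checks on JSON-LD blocks."""
    types = [b.get("@type") for b in blocks if isinstance(b.get("@type"), str)]
    products = [b for b in blocks if b.get("@type") == "Product"]
    return {
        "present": bool(blocks),
        "specific": any(t not in GENERIC_TYPES for t in types),
        "nesting_ok": all(has_valid_offer(p) for p in products),
    }


if __name__ == "__main__":
    page = [{"@type": "Product", "name": "Example Widget", "image": "widget.png"}]
    print(schema_checks(page))  # the Product has no offers, so nesting fails
```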

The validated fix. Add a type-specific JSON-LD block to the page <head>. Validate using Google's Rich Results Test before re-auditing. The schema signal should move from Fail to Pass if the block validates and the nesting structure is intact.
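As an illustration of what a type-specific block with intact nesting could contain, the sketch below builds a Product with a nested Offer and MerchantReturnPolicy and wraps it in a script tag. The helper name, the sample values, and the 30-day return window are placeholders; the property names are standard Schema.org vocabulary.

```python
import json


def product_jsonld(name: str, description: str, price: str, currency: str) -> str:
    """Return a <script> tag holding a Product with a nested Offer (sample values)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock",
            "hasMerchantReturnPolicy": {
                "@type": "MerchantReturnPolicy",
                "returnPolicyCategory": "https://schema.org/MerchantReturnFiniteReturnWindow",
                "merchantReturnDays": 30,  # placeholder value
            },
        },
    }
    # Escape "</" so a string value cannot close the script element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(product_jsonld("Example Widget", "A sample product.", "49.00", "USD"))
```

Generating the block from a template function rather than hand-editing it keeps the parent-child structure identical across every product page.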

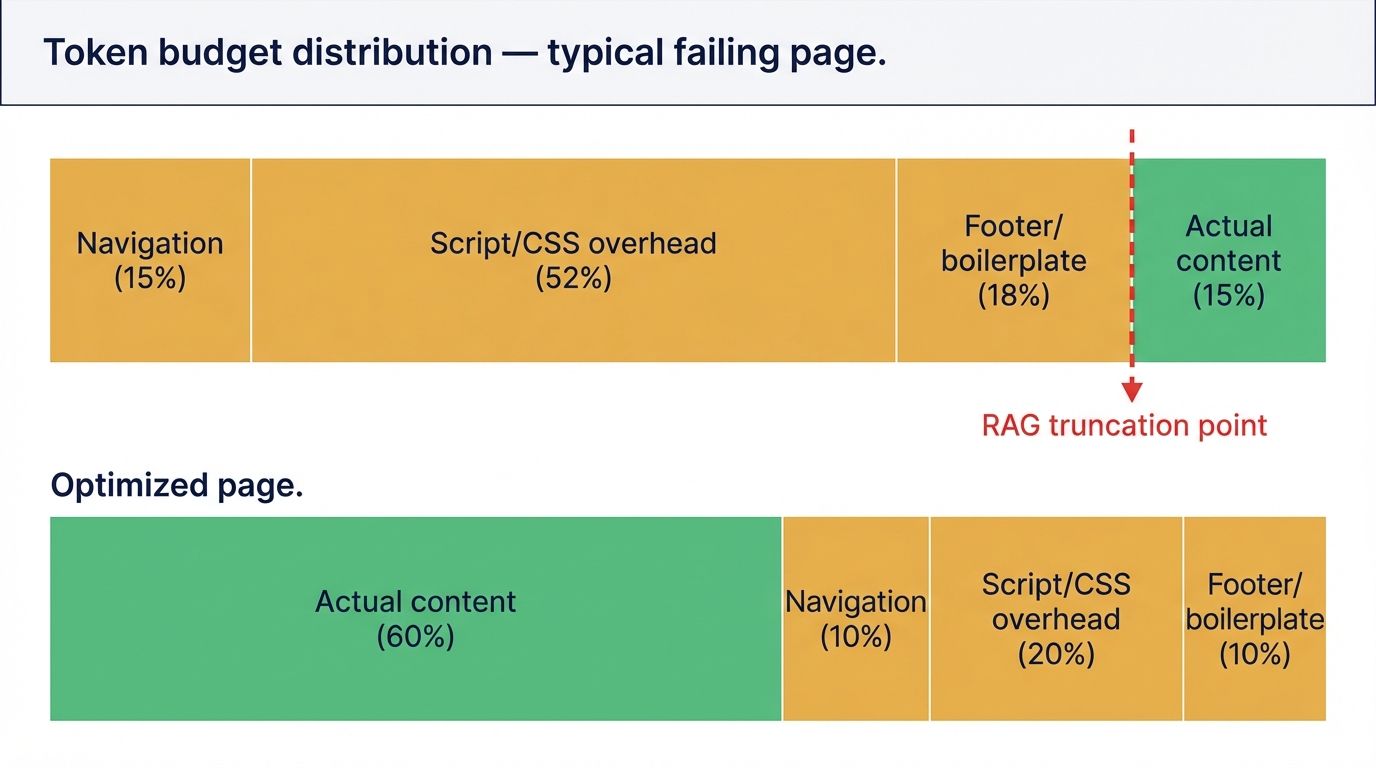

3. Signal Category: Token Efficiency (Weight: High)

What it measures. The ratio of semantically valuable tokens to structural noise tokens across the full HTML response. Structural noise includes: navigation markup, footer content, inline JavaScript that was not stripped server-side, decorative CSS class strings in the DOM, tracking pixel initialization code, and repeated boilerplate across page sections.

How it is scored. The engine strips defined noise categories and calculates the signal-to-noise ratio. A ratio above 0.65 (65% of tokens are semantic signal) scores in the Optimized range. A ratio below 0.30 scores as a Fail. Additionally, the engine checks the position of the first substantive semantic content — if the brand name, primary category, and primary value proposition do not appear within the first 100 tokens of readable content, a 100-Token Rule penalty is applied regardless of the overall ratio.
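A rough sketch of the two checks follows. The whitespace tokenizer, the function names, and the "Readable" middle band are assumptions; a real pipeline would use a model tokenizer and the engine's own noise categories. The 0.65, 0.30, and 100-token figures are taken from the text above.

```python
def signal_to_noise(semantic_tokens: int, total_tokens: int) -> float:
    """Share of tokens in the full HTML response that carry semantic signal."""
    return semantic_tokens / total_tokens if total_tokens else 0.0


def token_band(ratio: float) -> str:
    """Map a signal-to-noise ratio to a band using the stated thresholds."""
    if ratio > 0.65:
        return "Optimized"
    if ratio < 0.30:
        return "Fail"
    return "Readable"  # assumed name for the middle band


def passes_100_token_rule(readable_text: str, required_terms: list[str]) -> bool:
    """Check that every required term appears within the first 100 tokens.

    Assumption: tokens are whitespace-separated words, matched case-insensitively.
    """
    opening = " ".join(readable_text.split()[:100]).lower()
    return all(term.lower() in opening for term in required_terms)


if __name__ == "__main__":
    text = "Home Products Blog Contact Login " * 25 + "Acme is a billing platform."
    print(token_band(signal_to_noise(400, 2000)))
    print(passes_100_token_rule(text, ["Acme", "billing platform"]))
```

In the usage example, the repeated navigation labels fill the first 100 tokens, so the brand name and category arrive too late and the rule fails.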

What failure looks like. A marketing site serving 180KB of Tailwind CSS class strings, tracking scripts, and animation library code before a 400-word page body. A Next.js page where the first readable text an AI encounters is the site navigation: "Home / Products / Blog / Contact / Login."

The validated fix. For the ratio problem: serve different HTML to bot user-agents using middleware that strips non-semantic elements. For the 100-Token Rule failure: restructure the page template so the <main> content opens with a definition-style lede naming the entity, its category, and its primary function before any other content.

4. Signal Category: Entity Clarity (Weight: Medium-High)

What it measures. The degree to which the brand or organization represented on the page can be resolved as a distinct, unambiguous entity in a knowledge graph. Checks include: presence of sameAs properties in Organization schema linking to canonical external authority nodes; presence and validity of author disambiguation on content pages; and consistency between the entity name used on the page and the entity name registered in external reference databases.

How it is scored. Pages with no sameAs links score at the floor of this signal. Each verified sameAs reference to an authority node (Wikidata, LinkedIn company page, Crunchbase, Wikipedia) adds to the score. Three or more verified links score in the Optimized range. Author disambiguation is a secondary check: content pages where the author is linked to a verified Person entity receive a bonus to this signal.
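The sameAs count can be approximated with a hostname check, as in the sketch below. The AUTHORITY_DOMAINS set mirrors the four examples named above; the function names and the "Floor" and "Readable" labels are assumptions. The sketch only matches domains: it does not verify that a linked profile exists or belongs to the entity, which the scoring described above requires.

```python
from urllib.parse import urlparse

# The four authority nodes named in the text; a real list may be longer.
AUTHORITY_DOMAINS = {"wikidata.org", "linkedin.com", "crunchbase.com", "wikipedia.org"}


def authority_links(same_as: list[str]) -> list[str]:
    """Return the sameAs URLs whose host is an authority domain or a subdomain of one."""
    hits = []
    for url in same_as:
        host = (urlparse(url).hostname or "").lower()
        if any(host == d or host.endswith("." + d) for d in AUTHORITY_DOMAINS):
            hits.append(url)
    return hits


def entity_band(same_as: list[str]) -> str:
    """Band by number of authority links: none is the floor, three or more is Optimized."""
    count = len(authority_links(same_as))
    if count == 0:
        return "Floor"
    if count >= 3:
        return "Optimized"
    return "Readable"  # assumed name for the middle band


if __name__ == "__main__":
    links = [
        "https://www.wikidata.org/wiki/Q0000000",          # placeholder ID
        "https://www.linkedin.com/company/example-apex",   # placeholder slug
        "https://www.crunchbase.com/organization/example-apex",
    ]
    print(entity_band(links))
```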

What failure looks like. An organization schema block that lists the company name and website URL with no external corroboration. A multi-author blog where every article is attributed to "Admin." A brand whose name is a common English word or acronym with no disambiguation markup — a company called "Apex" with no sameAs links is indistinguishable from hundreds of other entities with the same name.

The validated fix. Create or claim profiles on LinkedIn (company page), Crunchbase, and Wikidata. Add their canonical URLs to your Organization schema's sameAs array. For content pages: replace generic author attribution with a Person entity linking to a verified author profile.

5. Signal Category: Crawl Access (Weight: Medium)

What it measures. Whether the page is accessible to AI crawlers as defined by the robots.txt policy, and whether the site has implemented the llms.txt standard. The signal distinguishes between intentional and unintentional blocking, and between blanket policies and selective user-agent rules.

How it is scored. A valid, accessible robots.txt that explicitly permits GPTBot, PerplexityBot, and ClaudeBot scores at the top of this signal. A site with no robots.txt scores in the mid-Readable range — technically accessible, but without the explicit permission signal that some crawlers treat as a positive indicator. A site blocking any major AI user-agent scores in the Fail range for this signal. Presence of a valid llms.txt file at the domain root applies a score bonus.

What failure looks like. A User-agent: * / Disallow: / rule deployed to production from a staging environment. A WordPress security plugin that added a blanket deny rule during hardening. A robots.txt last edited in 2021 with no rules for GPTBot, PerplexityBot, or ClaudeBot because those user-agents did not exist yet.

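The validated fix. Edit robots.txt to add a dedicated group with an explicit Allow: / rule for each major AI user-agent. Crawlers that follow the Robots Exclusion Protocol obey the most specific matching User-agent group, so these groups take precedence over a blanket User-agent: * rule wherever they sit in the file. Deploy an llms.txt file at the domain root. The re-audit should confirm that the crawl access signal moves from Fail to Pass.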
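One way to sanity-check a robots.txt before re-auditing is to parse it locally with Python's standard-library urllib.robotparser. The file contents, the /private/ path, and the example.com URLs below are illustrative assumptions, and the standard-library parser only approximates how any particular crawler interprets the file.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt: explicit groups for the three AI user-agents
# named in the text, plus a wildcard group for everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: *
Disallow: /private/
"""

AI_AGENTS = ["GPTBot", "PerplexityBot", "ClaudeBot"]


def allowed_agents(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Report, per user-agent, whether the given robots.txt permits fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    print(allowed_agents(ROBOTS_TXT, "https://example.com/pricing", AI_AGENTS))
```

Running the same function against the production file for each AI user-agent gives a quick local signal before waiting on a re-audit.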

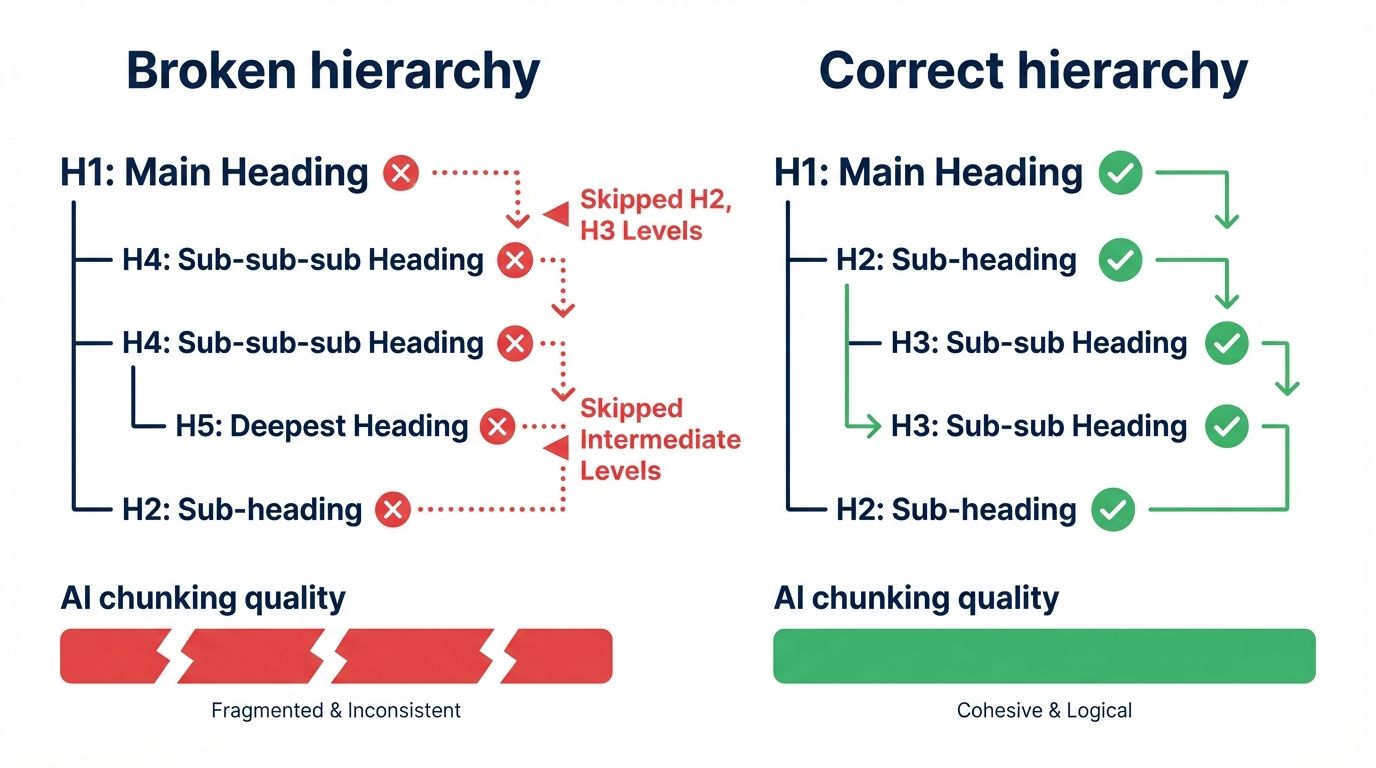

6. Signal Category: Semantic Structure (Weight: Medium)

What it measures. The integrity of the HTML heading hierarchy and the correct use of semantic HTML elements that AI chunking systems use as boundary and classification signals. Specific checks: heading tag sequence (H1 → H2 → H3 with no skipped levels); use of <article>, <section>, <main> as structural containers; and presence of <details> and <summary> tags for Q&A content patterns.

How it is scored. A page with a valid, unbroken heading hierarchy, correct semantic container usage, and at least one structured Q&A pattern using <details>/<summary> scores in the Optimized range. A page where headings skip from H1 to H4 scores in the Fail range for this signal. Each hierarchy break is a discrete penalty.
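The heading-sequence check can be sketched with the standard-library HTML parser. The class and function names are hypothetical, and treating a page that opens below H1 as a break is an assumption rather than something the scoring rules above state.

```python
import re
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (1 to 6) in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        if re.fullmatch(r"h[1-6]", tag):
            self.levels.append(int(tag[1]))


def hierarchy_breaks(html: str) -> list[tuple[int, int]]:
    """Return (previous_level, level) pairs where a heading skips a level.

    Moving back up the hierarchy (for example H3 to H2) is not a break.
    """
    collector = HeadingCollector()
    collector.feed(html)
    breaks = []
    previous = 0  # assumption: a page that opens below H1 counts as a break
    for level in collector.levels:
        if level > previous + 1:
            breaks.append((previous, level))
        previous = level
    return breaks


if __name__ == "__main__":
    page = "<main><h1>Pricing</h1><h4>Plans</h4><h2>FAQ</h2></main>"
    print(hierarchy_breaks(page))
```

Because each break is described as a discrete penalty, returning the list of breaks rather than a single boolean keeps the count available to whatever scoring step follows.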

What failure looks like. A page where the H1 is the page title, followed by H4 subheadings because the designer wanted small decorative text and used header tags to achieve it. A long-form article with a single H1 and no H2 or H3 structure, making it one undifferentiated semantic chunk from the AI's perspective.

The validated fix. Audit the heading sequence using a browser extension or the document outline view in accessibility tools. Replace any H4 or H5 tags used for styling with semantically correct alternatives — use CSS classes to control font size rather than heading level. Add <details>/<summary> markup to Q&A content.

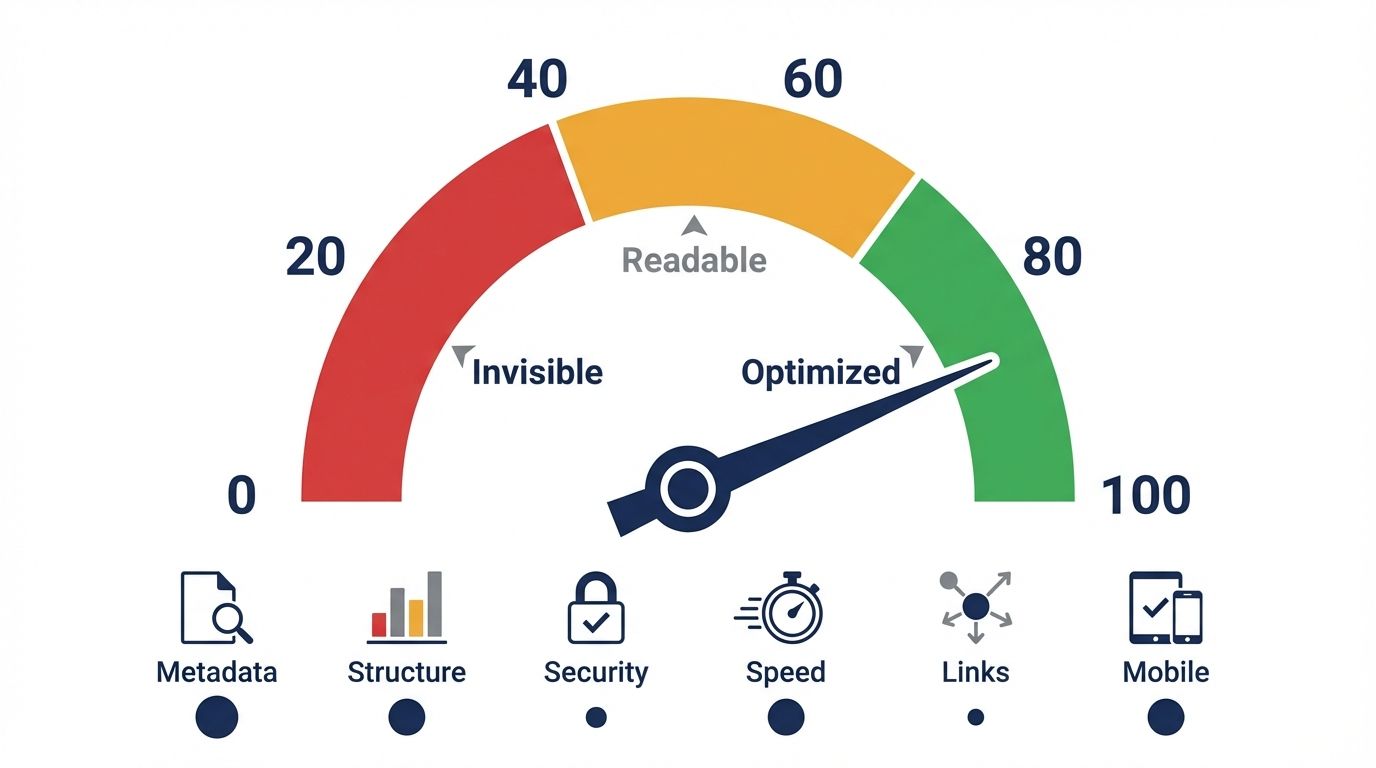

7. How the Signals Combine Into a Final Score

The six signals are not weighted equally, and the composite score is not a simple average.

Schema Validity, Token Efficiency, and Rendering carry the highest individual weights because they have the highest variance in our dataset — the difference between a passing and failing score in these categories has the most direct impact on whether an AI retrieval pipeline can produce a usable representation of the page.

Entity Clarity carries a medium-high weight and Crawl Access a medium weight. Semantic Structure is also weighted medium, the lowest tier, but it additionally acts as a cap: a page with excellent scores across the other five signals but a broken heading hierarchy will see its composite score capped below the Optimized threshold.
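To make the shape of the combination concrete, here is a sketch of a weighted sum with a cap. Every number in it is hypothetical: this document does not publish the actual weights, and using 85 as the Optimized threshold is an assumption. Only the ordering of the weights and the existence of the cap come from the description above.

```python
# Hypothetical weights, chosen only to respect the stated ordering
# (three High signals, then Medium-High, then Medium). They sum to 1.0.
HYPOTHETICAL_WEIGHTS = {
    "rendering": 0.22,
    "schema_validity": 0.22,
    "token_efficiency": 0.22,
    "entity_clarity": 0.14,
    "crawl_access": 0.10,
    "semantic_structure": 0.10,
}

OPTIMIZED_THRESHOLD = 85  # assumption: the threshold the cap refers to


def composite_score(signals: dict[str, float], hierarchy_intact: bool) -> float:
    """Weighted sum of 0-100 signal scores, capped when the heading hierarchy is broken."""
    weighted = sum(
        weight * signals[name] for name, weight in HYPOTHETICAL_WEIGHTS.items()
    )
    if not hierarchy_intact:
        # A broken hierarchy keeps the composite below the Optimized threshold.
        weighted = min(weighted, OPTIMIZED_THRESHOLD - 1)
    return round(weighted, 1)


if __name__ == "__main__":
    strong_page = {name: 95.0 for name in HYPOTHETICAL_WEIGHTS}
    print(composite_score(strong_page, hierarchy_intact=True))
    print(composite_score(strong_page, hierarchy_intact=False))
```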

A score of 71 or above clears the structural barriers to AI citation. A score of 85 or above indicates a page that AI systems will find reliable enough to use as a primary source. Scores at 95 and above are rare and typically require deliberate optimization of every signal category plus a GIST-compliant content strategy that is outside the scope of structural auditing alone.

Reference Sources

- Schema.org: Complete type library and property definitions. https://schema.org

- Google Rich Results Test: Validation tool for JSON-LD structured data. https://search.google.com/test/rich-results

- OpenAI GPTBot Documentation: User-agent specification and robots.txt guidance. https://platform.openai.com/docs/gptbot

- llms.txt Standard: Jeremy Howard's specification. https://llmstxt.org

- Aggarwal et al. (2023): Generative Engine Optimization. https://arxiv.org/abs/2311.09735

- GIST Algorithm: Fahrbach et al. (2025), NeurIPS. https://arxiv.org/abs/2405.18754