The GEO Protocol: How We Audit Content for LLM Readability

Executive Summary

The GEO Protocol is a proprietary content engineering framework designed to bridge the gap between "Human Readability" and "Machine Ingestibility." While traditional editorial standards focus on narrative flow and engagement, the GEO Protocol audits content for Token Efficiency, Entity Confidence, and Schema Validity. The goal is that every article we publish is not only read by humans but also accurately ingested, indexed, and cited by Large Language Models (LLMs) such as GPT-4 and Gemini, and by LLM-powered answer engines such as Perplexity.

Introduction: Why "Good Content" Is No Longer Enough

For the last decade of SEO, the mantra was simple: "Write high-quality content for humans." In the age of Generative AI, that advice is incomplete.

You can write the most engaging, emotionally resonant article in the world, but if it is structurally bloated or lacks semantic tagging, it can be invisible to the AI agents that increasingly control search discovery.

- Human Readability: Focuses on flow, voice, and emotion.

- Machine Ingestibility: Focuses on signal-to-noise ratio, structured data, and unambiguous facts.

Most websites fail the second test. They bury facts under layers of marketing fluff ("revolutionary," "game-changing"), confusing the AI's attention mechanism.

The Solution: We do not publish based on "gut feeling." We publish based on the GEO Protocol, the four-phase forensic audit that every piece of content on this site must pass before it goes live.

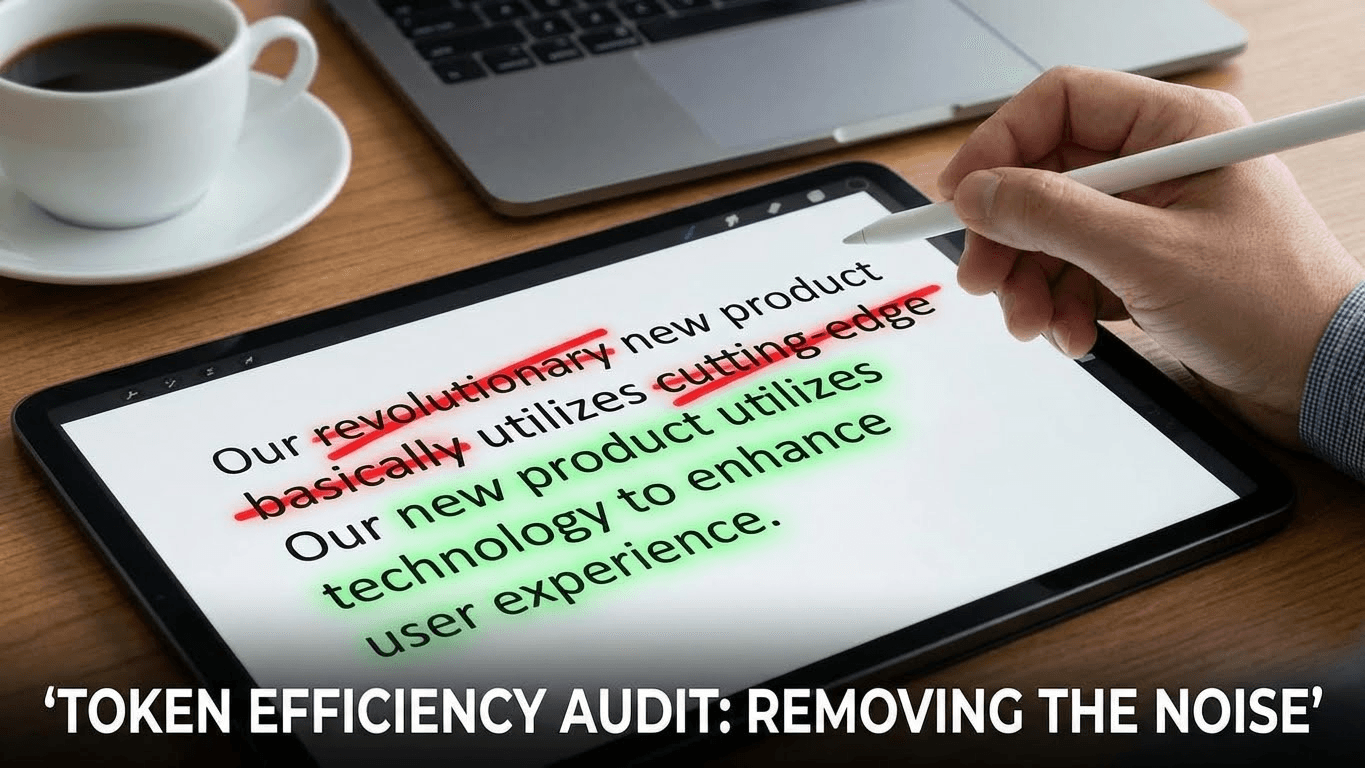

Phase 1: The "Token Efficiency" Audit (The Readability Check)

Large Language Models operate within a limited "context window," and every word on a page consumes one or more tokens of it. If an article is filled with fluff, the model has to spend part of that limited budget processing noise rather than signal.

The Concept: Signal-to-Noise Ratio. We treat words as expensive commodities. If a word does not add new data, it is a tax on the reader and the bot.

The Action: We ruthlessly strip adjectives and adverbs that do not convey specific information.

- Before (The Fluff): "This revolutionary, game-changing software tool is essentially designed to help you dramatically improve your workflow efficiency." (16 words / Low Signal)

- After (The GEO Standard): "This software improves workflow efficiency by automating repetitive tasks." (9 words / High Signal)

The Rule: If an adjective does not add data, delete it. Removing filler keeps the core concept prominent within the model's context window instead of diluting it with noise.
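The rule above can be approximated with a small script. This is a minimal sketch, not the protocol's actual tooling. The FILLER_WORDS list and the audit_sentence helper are hypothetical. The script also counts words with a regular expression instead of running a real LLM tokenizer, so its numbers are only a proxy for token cost.

```python
import re

# Hypothetical filler list for illustration; a real audit would maintain its own.
FILLER_WORDS = {
    "revolutionary", "game-changing", "essentially",
    "dramatically", "cutting-edge", "seamless",
}

# A word is a letter followed by letters, apostrophes, or hyphens,
# so "game-changing" counts as one word and punctuation is ignored.
WORD_PATTERN = re.compile(r"[A-Za-z][A-Za-z'-]*")


def audit_sentence(sentence: str) -> dict:
    """Flag filler words and report a crude signal-to-noise ratio.

    Word count is only a proxy: LLM tokenizers split text into sub-word units.
    """
    words = WORD_PATTERN.findall(sentence)
    flagged = [w for w in words if w.lower() in FILLER_WORDS]
    signal_words = len(words) - len(flagged)
    ratio = signal_words / len(words) if words else 0.0
    return {"words": len(words), "flagged": flagged, "signal_ratio": ratio}


before = ("This revolutionary, game-changing software tool is essentially "
          "designed to help you dramatically improve your workflow efficiency.")
after = "This software improves workflow efficiency by automating repetitive tasks."

for label, text in (("before", before), ("after", after)):
    print(label, audit_sentence(text))
```

A word list only catches fluff that someone has already named. Phrases like "designed to help you" in the Before example pass this check, so an editor still makes the final call on whether a word adds data.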

Phase 2: The "Hallucination" Firewall (The Accuracy Check)

AI models can "hallucinate" (make things up), particularly when the information they draw on is ambiguous or conflicting. If you say "Schema is good for SEO" without stating which Schema type or why, the AI might invent a reason that isn't true.

The Concept: Zero Ambiguity. We do not guess at ranking factors. We do not speculate on algorithm updates without evidence.

The Action: Every technical claim in our content goes through a rigorous sourcing policy:

- Cross-Reference: Claims are checked against Google Search Central documentation or the official Schema.org vocabulary.

- Verification: We rely on engineering proofs (like our Server Log Analysis) rather than third-party hearsay.
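The cross-referencing step can be partly automated as a pre-check. This is a minimal sketch, assuming each claim in a draft is recorded with the URL it cites. The Claim record, the unsourced_claims helper, and the host allowlist are hypothetical. The sketch covers only the cross-reference rule. Internal engineering proofs such as the Server Log Analysis would need a separate check. A matching host also says nothing about whether the cited page actually supports the claim, so a person still has to read the source.

```python
from dataclasses import dataclass
from typing import List, Optional
from urllib.parse import urlparse

# Assumed allowlist mirroring the two references named in the policy:
# Google Search Central (hosted on developers.google.com) and Schema.org.
TRUSTED_HOSTS = ("developers.google.com", "schema.org")


@dataclass
class Claim:
    text: str
    source_url: Optional[str] = None


def is_sourced(claim: Claim) -> bool:
    """True only if the claim cites a URL on a trusted host or one of its subdomains."""
    if not claim.source_url:
        return False
    host = (urlparse(claim.source_url).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in TRUSTED_HOSTS)


def unsourced_claims(claims: List[Claim]) -> List[str]:
    """Return the text of every claim that would block publication."""
    return [c.text for c in claims if not is_sourced(c)]


draft = [
    Claim("TechArticle is a more specific type than Article.",
          "https://schema.org/TechArticle"),
    Claim("Structured data can make a page eligible for rich results.",
          "https://developers.google.com/search/docs"),
    Claim("Schema is good for SEO."),  # no source at all
    Claim("Word count is a direct ranking factor.",
          "https://example.com/seo-rumors"),  # third-party hearsay
]

print(unsourced_claims(draft))
```

The suffix check accepts subdomains such as www.schema.org but rejects look-alike hosts such as schema.org.example.com.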

The Goal: To provide a "Clean Corpus" for AI training. When an agent cites us, it should not have to "think" or "verify"; it can treat the data as Ground Truth.

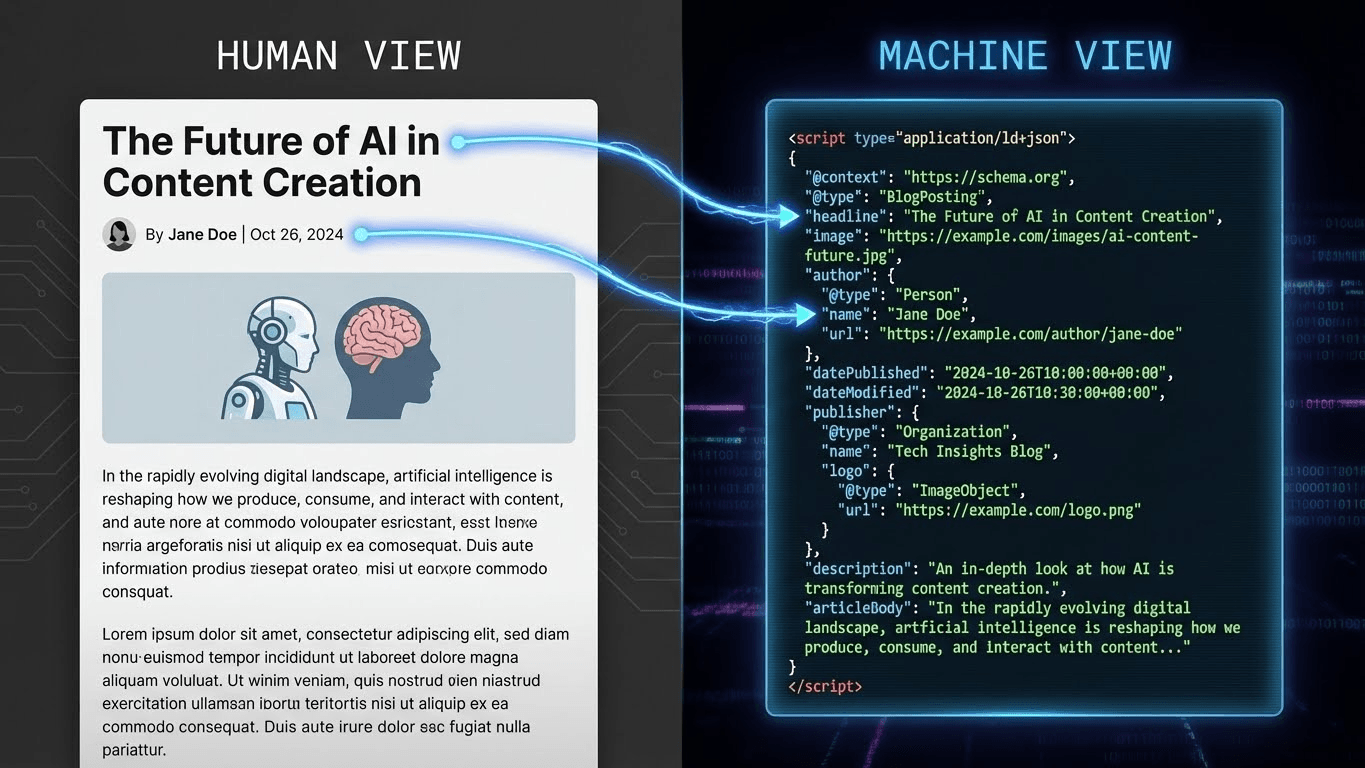

Phase 3: The "Digital Twin" Structure (The Schema Check)

To a human, an article is a collection of paragraphs. To a machine, it is an unstructured blob of text unless you provide a Digital Twin: a structured, machine-readable description of the same content.

The Concept: Schema Markup. We don't just write articles; we build Knowledge Graphs. An article is not "finished" until its underlying JSON-LD code explicitly defines what it is and who wrote it.

The Action: Our review process validates the code structure behind the content:

- Disambiguation: Do we use the about and mentions properties to link concepts to their Wikidata IDs?

- Attribution: Is the author field linked to a verified Person Entity?

- Type Safety: Are we using specific types like TechArticle (instead of the generic Article) or MedicalWebPage (instead of the generic WebPage)?
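The three checks above can be expressed as a small validator. This is a minimal sketch, assuming the JSON-LD is available as a Python dictionary before it is embedded in the page's script type="application/ld+json" tag. The article object, the check_schema helper, and the list of "generic" types are hypothetical. The profile URL and Wikidata IDs are placeholders, not real identifiers. The property names (about, mentions, author, sameAs) and the types (TechArticle, Person, Thing) are standard Schema.org vocabulary.

```python
import json

# Hypothetical example: the profile URL and Wikidata IDs are placeholders.
article = {
    "@context": "https://schema.org",
    "@type": "TechArticle",
    "headline": "The GEO Protocol: How We Audit Content for LLM Readability",
    "author": {
        "@type": "Person",
        "name": "Hristo Stanchev",
        "sameAs": "https://example.com/about/hristo-stanchev",  # placeholder
    },
    "about": [
        {"@type": "Thing", "name": "Knowledge graph",
         "sameAs": "https://www.wikidata.org/wiki/Q_PLACEHOLDER_1"},
    ],
    "mentions": [
        {"@type": "Thing", "name": "Schema.org",
         "sameAs": "https://www.wikidata.org/wiki/Q_PLACEHOLDER_2"},
    ],
}

# Types this sketch treats as "too generic" (an assumed policy).
GENERIC_TYPES = {"Thing", "CreativeWork", "Article", "WebPage"}
WIKIDATA_PREFIXES = ("https://www.wikidata.org/", "http://www.wikidata.org/")


def as_list(value):
    """JSON-LD allows a single value or an array; normalize to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def check_schema(doc: dict) -> list:
    """Return a list of problems; an empty list means all three checks pass."""
    problems = []

    # Type safety: reject the generic parent types.
    if doc.get("@type") in GENERIC_TYPES:
        problems.append(f"generic @type {doc.get('@type')!r}")

    # Attribution: author must be a Person with an @id or sameAs link.
    author = doc.get("author")
    if not isinstance(author, dict) or author.get("@type") != "Person":
        problems.append("author is not a Person entity")
    elif "@id" not in author and not as_list(author.get("sameAs")):
        problems.append("author Person has no @id or sameAs link")

    # Disambiguation: every about/mentions entity needs a Wikidata link.
    for prop in ("about", "mentions"):
        entities = as_list(doc.get(prop))
        if not entities:
            problems.append(f"no {prop} entities")
        for entity in entities:
            if not isinstance(entity, dict):
                problems.append(f"{prop} value {entity!r} is not an entity object")
                continue
            links = as_list(entity.get("sameAs")) + as_list(entity.get("@id"))
            if not any(str(link).startswith(WIKIDATA_PREFIXES) for link in links):
                problems.append(f"{prop} entity {entity.get('name')!r} lacks a Wikidata link")

    return problems


print(check_schema(article))
print(json.dumps(article, indent=2))
```

The validator returns problems instead of raising an error, so a reviewer sees every failing check in one pass.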

Phase 4: The Reviewer (The Human in the Loop)

Automated checks are powerful, but they lack nuance. The final step of the GEO Protocol is the human sign-off.

The Enforcer: This protocol is enforced by Hristo Stanchev, a specialist in Generative Engine Optimization.

The Clarification: In the world of entities, names can be ambiguous. While I share a name with a distinguished geographer, my domain is the digital geography of Knowledge Graphs and Semantic Web architecture. My role is to ensure that every piece of content here serves as a reliable node in that digital map.

When you see the "Reviewed by" badge, it means a human expert has verified the Entity Confidence, Token Efficiency, and Technical Accuracy of the piece.
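The sign-off step can be modeled as a publishing gate. This is a minimal sketch that assumes each automated phase reports a simple pass or fail. The AuditResult record and both helpers are hypothetical. One way to make the "Reviewed by" badge machine-readable is to use the Schema.org reviewedBy and lastReviewed properties. These are defined on WebPage, so they would belong on the page that hosts the article rather than on the TechArticle itself.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AuditResult:
    token_efficiency: bool          # Phase 1 passed
    sourcing: bool                  # Phase 2 passed
    schema: bool                    # Phase 3 passed
    reviewer: Optional[str] = None  # Phase 4: name of the human who signed off


def ready_to_publish(result: AuditResult) -> bool:
    """All automated phases must pass AND a human must have signed off."""
    automated_ok = result.token_efficiency and result.sourcing and result.schema
    return automated_ok and bool(result.reviewer)


def review_markup(result: AuditResult, reviewed_on: date) -> dict:
    """Build WebPage-level review properties for content that passed the gate."""
    if not ready_to_publish(result):
        raise ValueError("content has not passed every phase of the audit")
    return {
        "reviewedBy": {"@type": "Person", "name": result.reviewer},
        "lastReviewed": reviewed_on.isoformat(),
    }


draft = AuditResult(token_efficiency=True, sourcing=True, schema=True)
print(ready_to_publish(draft))  # False: no human sign-off yet

draft.reviewer = "Hristo Stanchev"
print(review_markup(draft, date(2024, 1, 15)))  # illustrative date
```

Keeping the human sign-off as a required field means automated checks alone can never mark a piece as ready.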

Conclusion: The "Verified" Promise

The internet is being flooded with AI-generated sludge. The only way to stand out is to be the Source of Truth.

When you read an article on this site, you aren't just reading "content." You are reading an engineered asset that has passed the GEO Protocol. It is concise enough for a bot, accurate enough for an engineer, and clear enough for a human.

This is the new standard for the AI Web.

References & Further Reading

- Google Search Central: Creating Helpful Content. The official guidelines on self-assessing content quality, which aligns with the "Human in the Loop" phase.

- Schema.org: TechArticle Type. The specific structured data definition we use to signal technical depth to search engines.

- Website AI Score: Token Efficiency Audit. The foundational technical guide that powers Phase 1 of this protocol.