Server Log Analysis: Tracking GPTBot Visits in Nginx/Apache

Definition

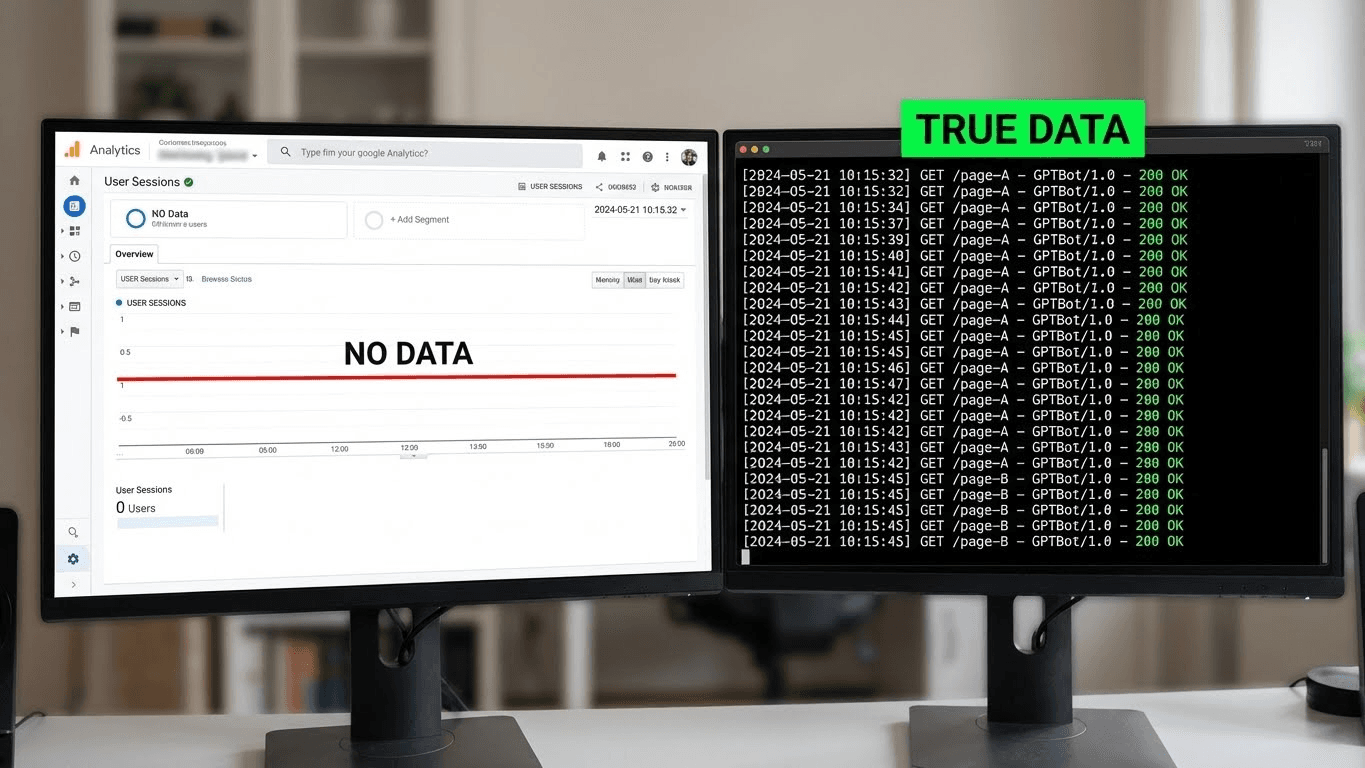

Server Log Analysis for AI is the practice of mining raw access logs (from Nginx, Apache, or CDN edges) to filter, verify, and quantify requests from AI User-Agents such as GPTBot or ClaudeBot. Client-side analytics (Google Analytics) rely on JavaScript execution, which most AI crawlers do not perform. Access logs are therefore the most reliable source for measuring the crawl frequency, depth, and status codes of AI ingestion attempts.

The Problem: The "Ghost Traffic" of AI

Marketing teams live in Google Analytics (GA4). They look for "Sessions" and "Users."

However, AI crawlers are not users. They are headless scripts.

- No JavaScript: Most bots grab the raw HTML and leave. They do not execute the GA4 tracking script, meaning they never trigger a "pageview" event in your dashboard.

- No Sessions: A bot might hit 5,000 pages in 2 minutes (Crawl) or 1 page once (RAG Retrieval). It does not have "Time on Site."

- Invisible Errors: If you blocked GPTBot by accident in your WAF, GA4 will show nothing. Any visibility you had in AI answers can quietly erode, and nothing in the dashboard tells you why.

The Reality:

You might have had 10,000 requests from OpenAI's crawlers this month, potentially feeding your product pages into future model training, while your analytics dashboard shows 0. To see the truth, you must go to the metal: the server logs.

The Solution: GREP and The User-Agent String

The solution is to bypass the frontend entirely and query the backend access logs. Every request made to your server is recorded with a timestamp, IP address, Status Code, and User-Agent.
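
To make those fields concrete, here is a minimal sketch that pulls them out of a single log line. It assumes the default "combined" log format that stock Nginx and Apache installs use; a custom `log_format` will shift the positions. The sample line (IP, path, byte count, simplified User-Agent) is made up for illustration, and `parse_log_line` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Illustrative only: a made-up request in the default "combined" log format.
sample='203.0.113.7 - - [12/Mar/2025:08:15:02 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"'

# parse_log_line (hypothetical helper): print the fields discussed above.
# Splitting on double quotes gives:
#   $1 = everything before the request, $2 = request line,
#   $3 = status and bytes, $4 = referer, $6 = User-Agent.
parse_log_line() {
  printf '%s\n' "$1" | awk -F'"' '{
    split($1, pre, " ")        # pre[1] = client IP, pre[4] = "[timestamp"
    split($2, req, " ")        # req[1] = method, req[2] = path
    split($3, res, " ")        # res[1] = status code, res[2] = bytes sent
    ts = pre[4]; sub(/^\[/, "", ts)
    print "ip=" pre[1]
    print "time=" ts
    print "path=" req[2]
    print "status=" res[1]
    print "agent=" $6
  }'
}

parse_log_line "$sample"
```

When the same line is split on whitespace instead, the path lands in field 7 and the status code in field 9, which is why `awk '{print $7}'` and `awk '{print $9}'` work on combined-format logs.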

We need to filter for the specific User-Agents of the major AI labs:

- OpenAI: GPTBot, ChatGPT-User, OAI-SearchBot

- Anthropic: ClaudeBot

- Common Crawl: CCBot

- Perplexity: PerplexityBot
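
A single pass over the log can tally all of these tokens at once. The sketch below assumes the combined log format, where the User-Agent is the last quoted field, and matches each token as a plain substring. `count_ai_bots` is a hypothetical helper name, and the token list simply mirrors the one above.

```shell
#!/usr/bin/env bash
# count_ai_bots (hypothetical helper): print "<token> <hits>" for each AI crawler token.
# Usage: count_ai_bots /var/log/nginx/access.log
count_ai_bots() {
  awk -F'"' -v bots="GPTBot ChatGPT-User OAI-SearchBot ClaudeBot CCBot PerplexityBot" '
    BEGIN { n = split(bots, name, " ") }
    NF < 2 { next }                       # skip blank or unquoted lines
    {
      ua = $(NF - 1)                      # last quoted field = User-Agent (assumed)
      for (i = 1; i <= n; i++)
        if (index(ua, name[i]) > 0) hits[name[i]]++
    }
    END { for (i = 1; i <= n; i++) printf "%s %d\n", name[i], hits[name[i]] }
  ' "$1"
}
```

Matching on the User-Agent field rather than the whole line avoids counting a human visitor who happens to request a URL containing one of the tokens.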

By analyzing these logs, we can answer three critical AEO questions:

- Crawl Frequency: How often is my Entity Home being re-fetched, and so how fresh can the AI's copy of it be?

- Status Health: Are bots getting 200 OK or 403 Forbidden?

- High-Value Targets: Which specific pages are they reading the most?
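
The first question, crawl frequency, comes down to counting a bot's requests per calendar day. A minimal sketch, assuming the combined log format (timestamp in whitespace field 4) and a hypothetical helper name `hits_per_day`; like plain grep, it matches the token anywhere in the line:

```shell
#!/usr/bin/env bash
# hits_per_day (hypothetical helper): print "<dd/Mon/yyyy> <hits>" for one bot token,
# in the order the days first appear in the log.
# Usage: hits_per_day GPTBot /var/log/nginx/access.log
hits_per_day() {
  awk -v bot="$1" '
    index($0, bot) > 0 {
      day = substr($4, 2, 11)             # "[12/Mar/2025:08:15:02" -> "12/Mar/2025"
      if (!(day in count)) order[++n] = day
      count[day]++
    }
    END { for (i = 1; i <= n; i++) print order[i], count[order[i]] }
  ' "$2"
}
```

A day that is missing from the output means the bot made no requests that day, which is worth noticing if it used to visit daily.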

Technical Implementation: Command Line Analysis

If you have SSH access to your server, you can extract this data in seconds with standard Linux tools (grep, awk, sort, uniq) or with a dedicated log analyzer such as GoAccess.

1. Identifying the Log Location

- Nginx: Usually /var/log/nginx/access.log

- Apache: Usually /var/log/apache2/access.log (Debian/Ubuntu) or /var/log/httpd/access_log (RHEL/CentOS)
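
Because these locations vary by distribution and configuration, a script can probe a list of candidates instead of hard-coding one. The sketch below is one way to do that; `first_readable_log` is a hypothetical helper name, and the paths in the usage comment are common defaults rather than guarantees.

```shell
#!/usr/bin/env bash
# first_readable_log (hypothetical helper): print the first candidate path that
# exists and is readable by the current user; return non-zero if none is.
first_readable_log() {
  for candidate in "$@"; do
    if [ -r "$candidate" ]; then
      printf '%s\n' "$candidate"
      return 0
    fi
  done
  return 1
}

# Usage (assumed default paths; adjust to your system):
#   log=$(first_readable_log /var/log/nginx/access.log \
#                            /var/log/apache2/access.log \
#                            /var/log/httpd/access_log) \
#     || echo "No readable access log found" >&2
```

If none of the candidates exists, the authoritative answer is in the server configuration: the `access_log` directive for Nginx or the `CustomLog` directive for Apache.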

2. The "Pulse Check" Command

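Run this command to count how many times GPTBot has hit your site in the current log file. With daily log rotation that is roughly "today"; otherwise, filter by date as well.

```bash
# Count GPTBot hits in the log file
grep -c "GPTBot" /var/log/nginx/access.log
```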

3. The "Crawl Depth" Analysis

This command lists the specific pages GPTBot is requesting, sorted by hit count. It shows where the crawler spends its requests, which is a reasonable proxy for the content it prioritizes on your site.

```bash
# Find top 20 pages crawled by GPTBot
grep "GPTBot" /var/log/nginx/access.log | awk '{print $7}' | sort | uniq -c | sort -rn | head -20
```

4. The "Health Check" (Status Codes)

Are you accidentally blocking them? This shows the HTTP status codes (200, 403, 404, 500) returned to the bot.

```bash
# Show status codes for GPTBot requests
grep "GPTBot" /var/log/nginx/access.log | awk '{print $9}' | sort | uniq -c
```

Interpreting the Output:

- 200: Success. The bot ate the content.

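- 403: Forbidden. Something on your side is refusing the bot, typically a WAF rule, a CDN bot-protection setting, or a deny rule in the server config. (robots.txt cannot cause a 403; a compliant bot that is disallowed there simply never requests the page.) You need to verify your firewall settings immediately.

- 401: Unauthorized. The page is behind a login.

- 500: Server Error. Your application failed while handling the bot's request. If these errors cluster during crawl bursts, the crawler may be overloading your site and a rate limit is worth considering.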
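
Once the status breakdown shows errors, the next step is finding which URLs produced them. A minimal sketch, assuming the combined log format (path in whitespace field 7, status in field 9); `blocked_paths` is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# blocked_paths (hypothetical helper): print "<status> <path> <hits>" for every
# 4xx/5xx response served to the given bot token, most frequent first.
# Usage: blocked_paths GPTBot /var/log/nginx/access.log
blocked_paths() {
  awk -v bot="$1" '
    index($0, bot) > 0 && $9 ~ /^[45][0-9][0-9]$/ { count[$9 " " $7]++ }
    END { for (key in count) print key, count[key] }
  ' "$2" | sort -k3,3nr -k1,1 -k2,2
}
```

A 403 on every path points to a site-wide block such as a WAF rule, while errors confined to a few paths suggest a problem with those specific pages.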

Comparison: Google Analytics vs. Server Logs

| Feature | Google Analytics (GA4) | Server Log Analysis |
| --- | --- | --- |
| Data Source | Client-Side JS (gtag.js) | Server-Side Text File |
| Tracks AI Bots? | No (bots rarely execute JS) | Yes (records every HTTP request) |
| Metric Focus | Human Engagement (Time/Clicks) | Technical Access (Hits/Bytes) |
| Reliability | Medium (blocked by ad blockers) | High (logs every request that reaches the server) |
| Setup Cost | Low (Copy-paste snippet) | Medium (SSH/Terminal access) |
| AEO Utility | Low | Critical |

Code Example: Automated Daily Report (Bash Script)

You don't want to SSH in every day. Use this simple bash script to generate a daily summary of AI activity, which cron can then email to you.

```bash
#!/bin/bash
# AI Bot Report Script
# Save as /usr/local/bin/ai-report.sh and add to cron

LOG_FILE="/var/log/nginx/access.log"
# Force English month names so the date matches the log's timestamp format
TODAY=$(LC_ALL=C date +%d/%b/%Y)
REPORT="/tmp/ai_report.txt"

echo "AI Bot Report for $TODAY" > "$REPORT"
echo "---------------------------------" >> "$REPORT"

# Loop through major bots
for bot in GPTBot ClaudeBot CCBot PerplexityBot; do
  # -F matches the timestamp prefix literally; -c counts matching lines
  COUNT=$(grep -F "[$TODAY:" "$LOG_FILE" | grep -c "$bot")
  echo "$bot Hits: $COUNT" >> "$REPORT"
done

echo "---------------------------------" >> "$REPORT"
echo "Top 5 Pages Crawled by GPTBot:" >> "$REPORT"
grep -F "[$TODAY:" "$LOG_FILE" | grep "GPTBot" | awk '{print $7}' | sort | uniq -c | sort -rn | head -5 >> "$REPORT"

# Output the report (or pipe to mail command)
cat "$REPORT"
```

Key Takeaways

- GA4 is Blind: Stop looking for AI traffic in your marketing dashboard. If it relies on JavaScript, it misses nearly all bot activity.

- Status Codes Matter: A thousand hits means nothing if the status code is 403. Always audit the result of the request, not just the volume.

- The "RAG Signal": A sudden spike in OAI-SearchBot (SearchGPT) traffic can precede a rise in human referrals. Treat it as a possible leading indicator of Share of Model growth, not a guarantee.

- Bot Segregation: Use grep to separate GPTBot (Training) from ChatGPT-User (Live Query). This tells you if you are being studied or being cited.

- CDN Logs: If you use Cloudflare, requests that are served from the edge cache or blocked at the edge never reach your origin server, so they will not appear in its logs. You must use Cloudflare Logpush or Analytics to see the edge hits.
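
The bot segregation takeaway can be sketched as a per-path comparison of the two OpenAI agents. This assumes the combined log format (path in whitespace field 7) and matches each token anywhere in the line, as grep would; `studied_vs_cited` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# studied_vs_cited (hypothetical helper): print
#   "<path> <GPTBot hits> <ChatGPT-User hits>"
# for every path requested by either agent, sorted by path.
# Usage: studied_vs_cited /var/log/nginx/access.log
studied_vs_cited() {
  awk '
    index($0, "GPTBot") > 0       { train[$7]++; seen[$7] = 1 }
    index($0, "ChatGPT-User") > 0 { live[$7]++;  seen[$7] = 1 }
    END { for (p in seen) printf "%s %d %d\n", p, train[p], live[p] }
  ' "$1" | LC_ALL=C sort
}
```

Paths with a high second number and a low third are being crawled but rarely fetched during live queries; the reverse pattern marks pages that users are actively asking about.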

References & Further Reading

- Nginx Documentation: Configuring Logging. Official guide on customizing access logs to capture User-Agents clearly.

- GoAccess: Real-time Web Log Analyzer. An open-source tool for visualizing server logs in the terminal. https://goaccess.io/

- OpenAI: Crawler IP Ranges. How to verify that a request claiming to be GPTBot is actually from OpenAI (and not a spoofer).