Blocking CCBot vs. GPTBot: A Granular Robots.txt Strategy

Definition

A Granular Robots.txt Strategy is the practice of selectively allowing or disallowing specific AI crawlers based on their downstream utility (Training vs. Retrieval) rather than applying a blanket "Block AI" directive. This approach distinguishes between Foundation Crawlers (like CCBot), which build open datasets used to train many LLMs, and Proprietary Crawlers (like GPTBot), which feed data to one vendor's commercial models. The distinction lets site owners balance Data Sovereignty with Answer Engine Visibility.

The Problem: The "Nuclear Option" Backfire

When website owners panic about their content being "stolen by AI," they often paste a blanket block into their robots.txt file.

Plaintext

User-agent: *
Disallow: /

Or they block the biggest name they know: CCBot.

This is a strategic error.
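To see how wide the blanket rule reaches, the file can be run through a parser. The sketch below uses Python's standard-library `urllib.robotparser`; the `allowed_agents` helper, the example.com URL, and the inclusion of Googlebot in the agent list are illustrative assumptions, not part of any vendor's tooling.

```python
from urllib.robotparser import RobotFileParser

# The blanket block from above, written without blank lines inside the group.
BLANKET_BLOCK = """\
User-agent: *
Disallow: /
"""

def allowed_agents(robots_txt, agents, url="https://example.com/pricing"):
    """Return {agent: bool} saying whether each agent may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

# Product tokens only; this parser does not understand full User-Agent headers.
AGENTS = ["CCBot", "GPTBot", "OAI-SearchBot", "ChatGPT-User", "Googlebot"]

result = allowed_agents(BLANKET_BLOCK, AGENTS)
for agent, ok in result.items():
    print(f"{agent:15} {'allowed' if ok else 'blocked'}")
```

Every agent in the list comes back blocked, including the retrieval bots and the classic search crawler, which is why the wildcard is a poor tool for an AI-specific policy.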

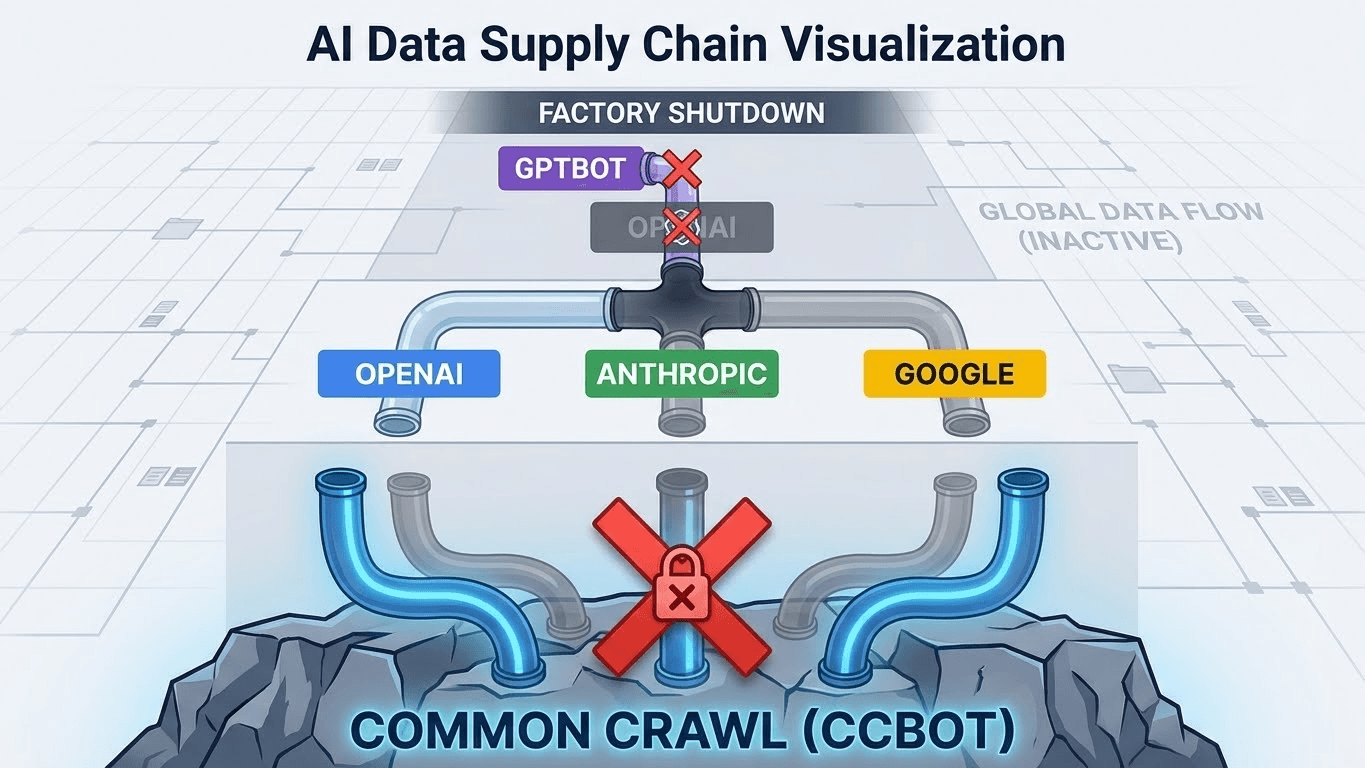

To understand why, you must understand the Data Supply Chain.

- CCBot (Common Crawl): This is a non-profit "Foundation Crawler." It crawls a large sample of the public web on a recurring schedule and publishes each snapshot as a publicly available dataset (WARC files).

  - Who uses it? Almost everyone, directly or indirectly. Filtered Common Crawl data made up a major part of GPT-3's training set, Google's C4 corpus is derived from it, and academic researchers rely on it heavily.

  - The Risk: If you block CCBot, your pages stop appearing in future Common Crawl snapshots (pages captured before the block remain in the existing archives). You aren't just blocking ChatGPT; you are opting out of a "Base Layer" of knowledge that models not yet invented may be trained on.

- GPTBot (OpenAI): This is a "Proprietary Crawler." It collects content that may be used to train OpenAI's own models (the GPT family).

  - The Risk: If you block GPTBot, you affect only OpenAI's training crawl. Anthropic (Claude) and Google (Gemini) run their own crawlers and are unaffected.

The Consequence of Blanket Blocking:

If you block CCBot, you effectively remove your own pages from the foundational training data of the next generation of AI. When a user asks a future model "Who is the leader in [Your Industry]?", the model may have few or no tokens associated with your brand beyond what third-party sites say about you, so it will either omit you or guess. You become a digital ghost.

The Solution: The "Surgical" Block

The optimal strategy for most commercial brands is Surgical Permissiveness.

You want to be in the Foundation (so models know you exist), but you may want to opt out of Proprietary Training (if you sell content) or Live Retrieval (if you want users to click through).

For AEO (Answer Engine Optimization), we generally recommend allowing Retrieval bots in every case and restricting Training bots only if you are protecting IP.

The 3-Tier Bot Taxonomy

To execute this, you must categorize bots in your robots.txt:

- Foundation Bots (High Risk to Block): CCBot. Blocking this undermines your long-term Entity Home authority across every model trained on Common Crawl.

- Training Bots (Business Decision): GPTBot, ClaudeBot, FacebookBot. These collect content to build products. If you sell data, block them. If you sell services, allow them (for visibility).

- Retrieval/Search Bots (Do Not Block): OAI-SearchBot, ChatGPT-User, PerplexityBot. These bots serve live answers rather than training runs. ChatGPT-User fetches your page in real time when a user's question calls for it, while OAI-SearchBot and PerplexityBot index pages so they can be surfaced and linked in search results. Blocking these is close to blocking a user from visiting your site.
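The taxonomy above can be sketched as a small lookup table plus a decision rule. This is a minimal illustration, assuming a single yes/no business flag (`sells_content`); the `Tier` enum, `BOT_TIERS` mapping, and `recommend` function are hypothetical names introduced here, and the bot list is only the one given in this article.

```python
from enum import Enum

class Tier(Enum):
    FOUNDATION = "foundation"   # open datasets such as Common Crawl
    TRAINING = "training"       # vendor-specific model training
    RETRIEVAL = "retrieval"     # live answers and search results

# Bot tokens and tiers exactly as listed in the taxonomy above.
BOT_TIERS = {
    "CCBot": Tier.FOUNDATION,
    "GPTBot": Tier.TRAINING,
    "ClaudeBot": Tier.TRAINING,
    "FacebookBot": Tier.TRAINING,
    "OAI-SearchBot": Tier.RETRIEVAL,
    "ChatGPT-User": Tier.RETRIEVAL,
    "PerplexityBot": Tier.RETRIEVAL,
}

def recommend(bot, sells_content):
    """Return 'allow', 'disallow', or 'review' for a bot token."""
    tier = BOT_TIERS.get(bot)
    if tier is None:
        return "review"        # unknown bot: decide manually
    if tier is Tier.TRAINING and sells_content:
        return "disallow"      # publishers protect their IP
    return "allow"             # foundation and retrieval stay open

for bot in BOT_TIERS:
    print(f"{bot:15} publisher={recommend(bot, True):9} saas={recommend(bot, False)}")
```

Only the Training tier changes with the business model; Foundation and Retrieval bots are allowed in both cases, which mirrors the recommendation in the text.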

Technical Implementation: The Granular File

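Do not rely on the default settings. A crawler with no matching rule is allowed to fetch everything, so an explicit group for each bot documents your stance and keeps applying if a wildcard rule is added later.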

Scenario A: The "Maximum Visibility" Strategy (Recommended for SaaS/Service)

You want every model to know who you are, and you want every RAG agent to cite you.

Plaintext

User-agent: CCBot
Allow: /

User-agent: GPTBot
Allow: /

User-agent: ChatGPT-User
Allow: /
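If the policy lives in code or configuration, a file like the one above can be generated rather than hand-edited. The following is a rough sketch, assuming a simple all-or-nothing policy per bot; `render_robots_txt` and `MAX_VISIBILITY` are hypothetical names, not an existing tool.

```python
def render_robots_txt(policy):
    """Render a robots.txt string from {user_agent_token: allowed_bool}.

    Each bot gets its own group, and groups are separated by one blank
    line. No blank line is placed between a User-agent line and its rule.
    """
    groups = []
    for agent, allowed in policy.items():
        rule = "Allow: /" if allowed else "Disallow: /"
        groups.append(f"User-agent: {agent}\n{rule}")
    return "\n\n".join(groups) + "\n"

# Scenario A: every listed bot may fetch everything.
MAX_VISIBILITY = {"CCBot": True, "GPTBot": True, "ChatGPT-User": True}

output = render_robots_txt(MAX_VISIBILITY)
print(output)
```

Flipping a single value to `False` turns that bot's group into `Disallow: /`, so the same function can produce a publisher-style file from a different policy dictionary.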

Scenario B: The "Data Sovereignty" Strategy (For Publishers)

You want to be in the foundation (so the AI knows you exist), but you refuse to let OpenAI train on your latest articles for free. Crucially, you still allow the "Search" bot so users can find you.

Plaintext

# 1. Allow the Foundation (Base Knowledge)
User-agent: CCBot
Allow: /

# 2. Block Proprietary Training (Protect IP)
User-agent: GPTBot
Disallow: /

# 3. Allow Live Retrieval (Get Traffic)
User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

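Note: OpenAI has split its bot definitions. GPTBot is for training. OAI-SearchBot is for ChatGPT's search features (originally launched as SearchGPT). ChatGPT-User handles fetches triggered by a user's request. This separation allows the exact granularity we need.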
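Before deploying a Scenario B file, it may be worth checking that it does what you expect. The sketch below feeds the file to Python's standard-library `urllib.robotparser`; the `check_access` helper and the example.com URL are illustrative assumptions. This parser's matching is simpler than what each vendor's crawler implements, so treat the result as a sanity check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

SCENARIO_B = """\
# 1. Allow the Foundation (Base Knowledge)
User-agent: CCBot
Allow: /

# 2. Block Proprietary Training (Protect IP)
User-agent: GPTBot
Disallow: /

# 3. Allow Live Retrieval (Get Traffic)
User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /
"""

def check_access(robots_txt, agents, url="https://example.com/articles/latest"):
    """Return {agent: bool} for whether each product token may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

# Pass bare product tokens; full User-Agent headers are not matched reliably
# by this parser.
access = check_access(
    SCENARIO_B, ["CCBot", "GPTBot", "OAI-SearchBot", "ChatGPT-User"]
)
for agent, ok in access.items():
    print(f"{agent:15} {'allowed' if ok else 'blocked'}")
```

Only GPTBot should come back blocked. Bots that have no group in the file, such as ClaudeBot in this scenario, fall through to the default and are allowed.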

Comparison: CCBot vs. GPTBot

| Feature | CCBot (Common Crawl) | GPTBot (OpenAI) |
| --- | --- | --- |
| Owner | Non-Profit Organization | OpenAI (Commercial) |
| Purpose | Archiving the Web (Open Data) | Training Proprietary Models |
| Downstream Usage | Open dataset available to any AI developer (OpenAI, Anthropic, Meta) | Used ONLY by OpenAI |
| Blocking Impact | Removes you from future snapshots of the "Global Base Layer" | Removes you from future OpenAI model training |
| Traffic Referral | Zero (It is an archive) | Low (It is a training scraper) |
| AEO Risk | Extreme (Total invisibility long-term) | Moderate (Invisible to GPT only) |

Code Example: The "AEO Safe" Robots.txt

Here is a modern robots.txt template that protects against Empty Shell issues (by allowing inspection) while managing crawler access.

Plaintext

# ==========================================
# FOUNDATION LAYER (Do Not Block for AEO)
# ==========================================
User-agent: CCBot
Allow: /

# ==========================================
# TRAINING LAYER (Block if you sell content)
# ==========================================
# If you are a Publisher, uncomment Disallow AND delete Allow.
# (Under RFC 9309, Allow wins when both rules match equally.)
User-agent: GPTBot
# Disallow: /
Allow: /

User-agent: ClaudeBot
# Disallow: /
Allow: /

# ==========================================
# RETRIEVAL LAYER (Never Block)
# ==========================================
# These bots drive traffic via citations
User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: PerplexityBot
Allow: /

# ==========================================
# SITEMAPS
# ==========================================
Sitemap: https://websiteaiscore.com/sitemap.xml
Sitemap: https://websiteaiscore.com/llms.txt

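Note: We included the llms.txt reference here, which we discussed in our guide on The /llms.txt Standard. The Sitemap directive is defined for sitemap files, so treat the llms.txt line as a non-standard hint that crawlers may ignore.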

Key Takeaways

- Common Crawl is the Root: CCBot is not just another crawler; it is the library of record for the AI age. Blocking it opts you out of every future snapshot for as long as the block stays in place, and with it the general knowledge of models trained on those snapshots.

- Granularity is Power: OpenAI split GPTBot (Training) and OAI-SearchBot (Search) for a reason. Use this distinction to protect your IP while keeping your traffic.

- Robots.txt is Law (for Reputable Bots): OpenAI, Anthropic, and Google all document that their crawlers honor robots.txt directives. The protocol is voluntary, however, so it does not stop scrapers that choose to ignore it.

- Audit Your WAF: Sometimes your robots.txt is perfect, but your Cloudflare/WAF is blocking "Unknown Bots" by default. Ensure CCBot is whitelisted in your firewall.

- Monitor with Logs: Use server logs to see if ChatGPT-User is visiting your specific high-value pages. If not, check your Share of Model metrics.
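For the log-monitoring takeaway, a rough sketch of counting AI crawler hits per page is shown below. It assumes your server writes the common "combined" access log format; the sample log lines, IP addresses, and simplified User-Agent strings are made up for illustration, and `count_ai_hits` is a hypothetical helper.

```python
import re
from collections import Counter

# Product tokens to look for inside the User-Agent header.
AI_AGENTS = ["CCBot", "GPTBot", "ClaudeBot", "OAI-SearchBot",
             "ChatGPT-User", "PerplexityBot"]

# Combined Log Format: ... "METHOD path HTTP/x" status bytes "referer" "user-agent"
LINE_RE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

def count_ai_hits(log_lines):
    """Count requests per (agent, path) for known AI crawler tokens."""
    hits = Counter()
    for line in log_lines:
        match = LINE_RE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        ua = match.group("ua").lower()
        for agent in AI_AGENTS:
            if agent.lower() in ua:
                hits[(agent, match.group("path"))] += 1
                break
    return hits

# Made-up sample lines; real User-Agent strings are longer.
SAMPLE_LOG = [
    '203.0.113.7 - - [01/Jan/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; ChatGPT-User/1.0)"',
    '203.0.113.8 - - [01/Jan/2025:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; ChatGPT-User/1.0)"',
    '198.51.100.4 - - [01/Jan/2025:11:00:00 +0000] "GET /blog/post HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.9 - - [01/Jan/2025:11:30:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0) Firefox/120.0"',
]

hits = count_ai_hits(SAMPLE_LOG)
for (agent, path), n in hits.most_common():
    print(f"{agent:15} {path:15} {n}")
```

A User-Agent header can be spoofed, so counts like these indicate likely crawler traffic rather than proving it; checking source IPs against each vendor's published ranges is a stronger test.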

References & Further Reading

- Common Crawl: CCBot Documentation. Official specifications for the Common Crawl bot and its user-agent string.

- OpenAI: Bot Names and User Agents. The official list distinguishing between GPTBot, ChatGPT-User, and OAI-SearchBot.

- Dark Visitors: AI Agent List. A database of active AI scrapers and their behaviors.