Token Efficiency: Audit Your Site's "Cost to Read"

Definition

Token Efficiency is the ratio of "Semantic Signal" (valuable information) to "Structural Noise" (HTML tags, CSS classes, script payloads) within a web page's source code. In the era of Generative AI, web performance has shifted from a metric of Time (Latency/TTFB) to a metric of Cost (Dollars per Token). Token Efficiency measures the economic burden your website places on an AI agent (like ChatGPT, ClaudeBot, or Perplexity) to ingest, process, and index your content. A low-efficiency site imposes a "Token Tax" that degrades retrieval accuracy, forces context window truncation, and ultimately lowers your Share of Model.

Executive Summary: The Economics of the AI Web

For the past twenty years, the entire discipline of Web Performance Optimization (WPO) has focused on Human Constraints.

- Bandwidth: We compress JPEG images and gzip assets because humans have limited data plans.

- Latency: We optimize Critical Rendering Paths because humans notice delays of a few hundred milliseconds.

- Interactivity: We ship massive JavaScript bundles (React, Vue) to create "app-like" experiences because humans crave responsiveness.

Today, we face a new consumer with entirely different physics: The AI Agent.

AI Agents (crawlers like GPTBot, ClaudeBot, and RAG retrieval systems) do not care about "Cumulative Layout Shift" (CLS). They do not care about "Interaction to Next Paint" (INP). They care about one metric above all else: Context Window Economy.

Every time an AI reads your website, it incurs a hard cost.

- Ingestion Cost: The model provider (OpenAI, Anthropic) pays for GPU compute to tokenize and embed your HTML.

- Context Cost: The model's context window is finite (e.g., 128k tokens), so every token of code you serve competes with your actual content for space.

If your website is wrapped in 5,000 lines of nested <div> tags, utility-first CSS classes, and redundant hydration state JSON, you are forcing the AI to "pay" for noise. Eventually, the RAG (Retrieval-Augmented Generation) pipeline will optimize its own costs by ignoring your page in favor of a competitor who provides the same information in a cleaner, cheaper format.

This is the "Token Tax." And if you do not audit it, you are pricing yourself out of the AI market.

Part 1: The Physics of Tokenization (Why Code is Expensive)

To understand why "Token Efficiency" is a valid engineering metric, we must look at how Large Language Models (LLMs) actually "read." They do not read words; they read Tokens.

The BPE Mechanism (Tiktoken)

Most modern models (including GPT-4o and Llama 3) use a tokenizer based on Byte-Pair Encoding (BPE). This algorithm is optimized for natural language (English prose), not for computer syntax.

- Clean Text Efficiency: The sentence "The quick brown fox" is efficiently compressed into 4 tokens. The tokenizer recognizes common words as single integers.

- HTML Inefficiency: The code <div class="text-lg ..."> is a disaster for BPE. The tokenizer breaks it into many small pieces, roughly:

- < (1 token)

- div (1 token)

- class (1 token)

- = (1 token)

- " (1 token)

- text (1 token)

- - (1 token)

- lg (1 token)

- ...and so on.

A simple HTML wrapper can easily cost 15-20 tokens while contributing zero semantic value. If your page has a list of 50 FAQs, and each FAQ is wrapped in complex HTML, you might be spending 1,000 tokens just to render the structure of the list, before the AI even reads the first answer.
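The overhead described above can be measured directly. The following is a minimal sketch, not a definitive benchmark: the FAQ sentence, the class names, and the helper `make_token_counter` are all made up for illustration. It uses tiktoken's `cl100k_base` encoding when the library is installed and loadable, and otherwise falls back to a crude regex split that only approximates a real BPE tokenizer.

```python
import re


def make_token_counter():
    """Return a function mapping text -> token count.

    Uses tiktoken's cl100k_base encoding when available; otherwise falls
    back to a rough regex split (an illustrative stand-in, not real BPE).
    """
    try:
        import tiktoken
        encoder = tiktoken.get_encoding("cl100k_base")
        return lambda text: len(encoder.encode(text, disallowed_special=()))
    except Exception:
        # Stand-in: every word and every punctuation mark counts as one token.
        return lambda text: len(re.findall(r"\w+|[^\w\s]", text))


count_tokens = make_token_counter()

# Hypothetical FAQ answer and a hypothetical utility-class wrapper around it.
answer = "Enterprise plans allow 10,000 requests per minute."
wrapped = (
    '<div class="faq-item p-6 rounded-lg shadow-md">'
    '<p class="text-gray-900 text-lg">' + answer + "</p></div>"
)

text_tokens = count_tokens(answer)
html_tokens = count_tokens(wrapped)
overhead = html_tokens - text_tokens  # tokens spent purely on markup

print(f"text: {text_tokens}, html: {html_tokens}, overhead: {overhead}")
print(f"overhead across 50 FAQ items: {overhead * 50}")
```

The absolute numbers depend on the tokenizer and on the markup, so treat them as relative indicators rather than exact costs.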

The "Haystack" Problem in RAG

In a RAG pipeline, the system retrieves your webpage, chunks it, and feeds it into the LLM to answer a user's question. This is often described as finding a "Needle in a Haystack."

Token Bloat increases the size of the Haystack without increasing the size of the Needle.

Scenario: A user asks, "What is the API rate limit for the Enterprise Plan?"

- Site A (Token Efficient): Returns a 500-token Markdown table.

- Result: The LLM reads it quickly. The "Signal Density" is high, so the answer is retrieved with high confidence.

- Site B (Token Bloated): Returns a 15,000-token raw HTML dump full of navigation links, scripts, and deep DOM nesting.

- Result: The LLM's attention is diluted. Research into the "Lost in the Middle" phenomenon shows that models retrieve specific facts less reliably when they are buried in the middle of a long context, and every token of noise makes the context longer. The AI might hallucinate or simply return "I don't know" because the signal-to-noise ratio was too low.

Part 2: The Three Sources of Token Bloat

Where are these wasted tokens coming from? Based on our audits of Fortune 500 sites, the "Token Tax" stems from three modern web development practices that are hostile to AI ingestion.

1. "Class-itis" (The Utility CSS Tax)

Utility-first CSS frameworks like Tailwind CSS have revolutionized frontend development speed. However, they transfer the complexity of styling from the CSS sheet to the HTML DOM.

The Code Reality:

HTML

<div>
  <p>Hello World</p>
</div>

The Token Audit:

- Text Content: "Hello World" (2 tokens).

- Structural Overhead: The class string alone is ~45 tokens.

- Ratio: 95% Noise / 5% Signal.

If this pattern repeats for every item in a product grid or FAQ accordion, you are wasting tens of thousands of tokens on styling instructions (colors, margins, shadows) that the AI explicitly ignores. The LLM does not care if your padding is p-6 or p-8; it only wants the text.

2. The Hydration State (The Invisible Killer)

This is often the most dangerous offender because it is invisible to the human eye.

As we detailed in our Client-Side Rendering (CSR) Audit, frameworks like Next.js, Nuxt, and Remix act as a double-edged sword. To make the page interactive, they inject a massive JSON blob at the bottom of the HTML (often labeled __NEXT_DATA__ or window.__INITIAL_STATE__).

The Redundancy:

This JSON blob is a complete duplicate of every piece of data on the page.

- HTML Layer: The server renders <h1>Product Price: $50</h1>.

- JSON Layer: The script tag contains {"product": {"price": 50, "name": "..."}}.

The AI View:

The crawler downloads the page. It sees the content twice.

- Bloat Factor: We frequently audit e-commerce product pages where the raw HTML is 50KB, and the Hydration JSON is 250KB. The JSON often contains data that isn't even rendered—user IDs, inventory codes, timestamps, and draft variations.

- Cost: You are paying to tokenize the same information twice, plus a massive amount of JSON syntax overhead ({, }, :, "), which is extremely token-expensive.
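To see how much of a page is hydration payload, one option is to measure the share of the source that sits inside the hydration script tag. The sketch below uses only the Python standard library and assumes a Next.js-style `<script id="__NEXT_DATA__">` tag. The `HydrationMeter` class, `hydration_share` function, and sample page are hypothetical, and the share is measured in characters as a rough proxy for tokens.

```python
import json
from html.parser import HTMLParser


class HydrationMeter(HTMLParser):
    """Sums the characters found inside hydration <script> tags."""

    # Script ids treated as hydration payloads (assumption: Next.js naming).
    HYDRATION_IDS = {"__NEXT_DATA__"}

    def __init__(self):
        super().__init__()
        self.in_hydration = False
        self.hydration_chars = 0

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("id") in self.HYDRATION_IDS:
            self.in_hydration = True

    def handle_endtag(self, tag):
        if tag == "script":
            self.in_hydration = False

    def handle_data(self, data):
        if self.in_hydration:
            self.hydration_chars += len(data)


def hydration_share(html):
    """Return the fraction of the page's characters inside hydration scripts."""
    meter = HydrationMeter()
    meter.feed(html)
    meter.close()
    return meter.hydration_chars / len(html) if html else 0.0


# Hypothetical page: the price appears once in the HTML and again in the JSON.
state = {"props": {"product": {"price": 50, "name": "Widget", "sku": "W-1", "draft": True}}}
sample_html = (
    "<html><body><h1>Product Price: $50</h1>"
    '<script id="__NEXT_DATA__" type="application/json">'
    + json.dumps(state)
    + "</script></body></html>"
)

share = hydration_share(sample_html)
print(f"Hydration payload: {share:.0%} of the page's characters")
```

State assigned inline (for example via `window.__INITIAL_STATE__ = ...`) has no id to match on, so it would need a separate check on the script body.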

3. DOM Depth (Div Soup)

Modern UI component libraries (like Material UI or Chakra UI) often solve layout problems by wrapping elements in divs.

- Structure: Section > Container > Grid > Col > Box > Card > CardBody > Text

- Token Cost: Each layer adds an opening tag (<div>) and a closing tag (</div>).

- Cumulative Effect: For a page with complex navigation, sidebars, and footers, the "Structural Skeleton" can account for 60% of the total token count.
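A quick way to quantify div soup is to count wrapper elements and track the deepest nesting level. This is a minimal standard-library sketch. The names `DepthMeter` and `measure_dom` are hypothetical, and it assumes reasonably well-formed markup (unclosed tags such as a bare `<li>` would inflate the depth).

```python
from html.parser import HTMLParser

# Elements that never have a closing tag, so they must not deepen the stack.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class DepthMeter(HTMLParser):
    """Tracks the maximum nesting depth and the number of <div> elements."""

    def __init__(self):
        super().__init__()
        self.depth = 0
        self.max_depth = 0
        self.div_count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "div":
            self.div_count += 1
        if tag in VOID_TAGS:
            return
        self.depth += 1
        self.max_depth = max(self.max_depth, self.depth)

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.depth > 0:
            self.depth -= 1


def measure_dom(html):
    """Return (max_depth, div_count) for an HTML string."""
    meter = DepthMeter()
    meter.feed(html)
    meter.close()
    return meter.max_depth, meter.div_count


soup = "<div><div><div><div><h3>Title</h3></div></div></div></div>"
flat = "<article><h3>Title</h3></article>"
print(measure_dom(soup))  # (5, 4)
print(measure_dom(flat))  # (2, 0)
```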

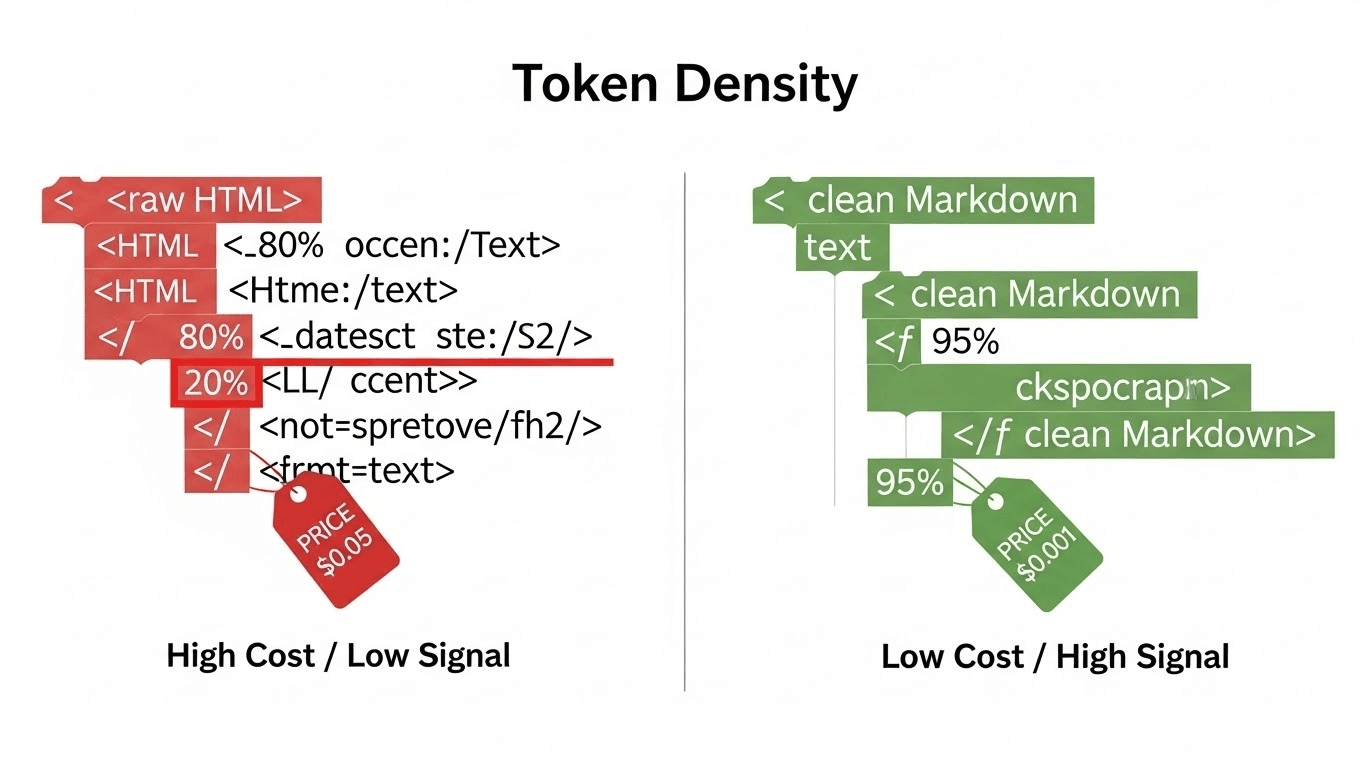

Part 3: The Metric – "Token Density"

You cannot fix what you do not measure. We introduce a new KPI for the AEO Era: Token Density.

The Formula

$$\text{Token Density} = \frac{\text{Content Tokens}}{\text{Total Source Tokens}} \times 100$$

- Content Tokens: The number of tokens in the visible, plain-text body of the page (the "Meat"). This is what the user reads.

- Total Source Tokens: The number of tokens in the raw HTML source code (the "Wrapper"). This is what the bot pays to ingest.
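As a worked example with made-up numbers, a page whose visible text is 1,200 tokens but whose raw source is 15,000 tokens scores:

$$\text{Token Density} = \frac{1{,}200}{15{,}000} \times 100 = 8\%$$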

Benchmarks

- Excellent (>50%): Documentation sites, Blogs using SSG (Static Site Generation), Raw Markdown, or sites implementing the /llms.txt Standard.

- Average (20-40%): Standard WordPress sites, clean E-commerce implementations.

- Critical Failure (<10%): Heavy SPAs (Single Page Apps), Enterprise Marketing sites with excessive tracking scripts, Tailwind-heavy landing pages with hydration blobs.

The "Critical Failure" Zone:

If your Token Density is under 10%, you are effectively serving spam to the AI. You are asking the model to dig through 90% garbage to find 10% value. In a competitive retrieval environment (like SearchGPT selecting which source to cite), the algorithm is economically incentivized to drop your page in favor of a denser source.

Part 4: The Audit Protocol – How to Measure Your Cost

Do not rely on "File Size" (KB). KB is a proxy for bandwidth, not compute. You must measure Tokens.

Here is the engineering protocol to audit your site's "Cost to Read" using Python and the tiktoken library (OpenAI's tokenizer, with the cl100k_base encoding used by GPT-4).

Prerequisite: Install the Library

Bash

pip install tiktoken requests beautifulsoup4

The Audit Script

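Save this script as audit_tokens.py. It sends the GPTBot User-Agent so that you receive the response your server returns to that User-Agent, including any server-side blocking or rendering differences. Crawlers can also be identified by IP address, so treat the result as a close approximation of what OpenAI sees.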

Python

import requests
import tiktoken
from bs4 import BeautifulSoup

# Assumed input price in USD per 1M tokens (approximate Gemini 1.5 Pro pricing;
# adjust to your provider's current rate).
PRICE_PER_MILLION_TOKENS = 2.50


def audit_token_density(url):
    print(f"Analyzing: {url} ...")

    # 1. Define the User-Agent (spoofing GPTBot).
    # This lets us receive whatever the server sends to the bot, including
    # bot-specific blocks or alternate rendering keyed on the User-Agent.
    headers = {
        'User-Agent': 'Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; GPTBot/1.0; +https://openai.com/gptbot'
    }

    try:
        # 2. Fetch the raw HTML (the payload)
        response = requests.get(url, headers=headers, timeout=10)
        response.raise_for_status()
        raw_html = response.text
    except requests.RequestException as e:
        print(f"Error fetching URL: {e}")
        return

    # 3. Extract the "content" (the signal)
    soup = BeautifulSoup(raw_html, 'html.parser')

    # Remove scripts, styles, and other non-text elements for the content
    # calculation. These are "noise" to the semantic meaning.
    for tag in soup(["script", "style", "svg", "noscript"]):
        tag.decompose()

    # Get text, strip whitespace
    text_content = soup.get_text(separator=' ', strip=True)

    # 4. Tokenize both (using GPT-4's encoding).
    # disallowed_special=() treats strings like "<|endoftext|>" as ordinary
    # text instead of raising an error if they appear in the page.
    encoder = tiktoken.get_encoding("cl100k_base")
    total_tokens = len(encoder.encode(raw_html, disallowed_special=()))
    content_tokens = len(encoder.encode(text_content, disallowed_special=()))

    # Avoid division by zero
    if total_tokens == 0:
        print("Error: No tokens found.")
        return

    # 5. Calculate metrics
    density = (content_tokens / total_tokens) * 100

    # Estimated cost for an AI to read your page 1,000 times
    cost_per_1k_visits = (total_tokens * 1000) / 1_000_000 * PRICE_PER_MILLION_TOKENS

    # 6. Output results
    print("--- Token Efficiency Report ---")
    print(f"Total Source Tokens (Cost): {total_tokens:,}")
    print(f"Useful Content Tokens (Value): {content_tokens:,}")
    print(f"Token Density Score: {density:.2f}%")
    print(f"Est. Ingestion Cost (1k hits): ${cost_per_1k_visits:.2f}")

    # 7. Diagnostic logic
    if density < 10:
        print("\n❌ CRITICAL FAIL: Site is <10% signal. RAG truncation likely.")
        print(" Action: Check for Hydration Bloat (__NEXT_DATA__) or heavy CSS classes.")
    elif density < 30:
        print("\n⚠️ WARNING: Low efficiency. Consider semantic flattening.")
    else:
        print("\n✅ PASS: High signal-to-noise ratio. Optimized for AI.")


# Run the audit
if __name__ == "__main__":
    # Replace with your target URL
    audit_token_density("https://websiteaiscore.com/blog/share-of-model-vs-rank-tracking")

How to Interpret the Data:

- Total Source Tokens > 50,000: This is a red flag for a single page. If your page is 50k tokens, it consumes ~40% of a standard 128k context window. RAG pipelines will often "summarize" or "truncate" this page before processing, leading to data loss.

- Density < 15%: This confirms that more than 85% of the tokens you serve are spent on structure rather than content.

Part 5: Optimization Strategies – Reducing the Cost

Once you have audited your site and identified the bloat, you need an engineering strategy to fix it. You do not need to redesign the visual experience for humans; you need to refactor the delivery mechanism for bots.

Strategy 1: The "Slim" Render (Dynamic Stripping)

Just as you "tree-shake" JavaScript to remove unused code for the browser, you must tree-shake your HTML for the bot.

- Technique: Use Middleware (Vercel Middleware, Cloudflare Workers, or Nginx) to detect the User-Agent.

- Logic:

- If User-Agent contains GPTBot, ClaudeBot, or PerplexityBot:

- Strip Attributes: Remove all style="...", class="...", data-*, and aria-* attributes using a regex or HTML processor. The bot does not have eyes; it does not need to know the text is text-gray-900.

- Nuke Scripts: Remove all <script> tags, especially the hydration state JSON and tracking pixels (Google Analytics, Meta Pixel). Bots don't execute them, but they still pay to tokenize them.

- Simplify: Serve semantic HTML only (<h1>, <p>, <table>, <ul>).

This single step can often increase Token Density from 10% to 60% without changing a single word of content.
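The stripping logic can be prototyped before committing to a particular middleware platform. Below is a framework-agnostic sketch using only the Python standard library. The names `is_ai_bot`, `SlimRenderer`, and `slim_html` are hypothetical, and a production version would live in whatever edge runtime you use. It uses an HTML parser rather than a regex, drops `<script>` and `<style>` elements with their contents, and removes `class`, `style`, `data-*`, and `aria-*` attributes. Comments and the doctype are also dropped as a side effect of this simple approach.

```python
from html import escape
from html.parser import HTMLParser

AI_BOT_MARKERS = ("GPTBot", "ClaudeBot", "PerplexityBot")
DROPPED_TAGS = {"script", "style"}          # removed together with their contents
DROPPED_ATTRS = {"class", "style"}          # removed by exact name
DROPPED_ATTR_PREFIXES = ("data-", "aria-")  # removed by prefix


def is_ai_bot(user_agent):
    """True if the User-Agent contains one of the listed bot names."""
    return any(marker.lower() in user_agent.lower() for marker in AI_BOT_MARKERS)


class SlimRenderer(HTMLParser):
    """Re-emits HTML without dropped tags and attributes."""

    def __init__(self):
        super().__init__()
        self.out = []
        self.skip_depth = 0  # > 0 while inside a dropped element

    def _keep(self, name):
        return name not in DROPPED_ATTRS and not name.startswith(DROPPED_ATTR_PREFIXES)

    def handle_starttag(self, tag, attrs):
        if tag in DROPPED_TAGS:
            self.skip_depth += 1
            return
        if self.skip_depth:
            return
        kept = "".join(
            f" {name}" if value is None else f' {name}="{escape(value, quote=True)}"'
            for name, value in attrs
            if self._keep(name)
        )
        self.out.append(f"<{tag}{kept}>")

    def handle_startendtag(self, tag, attrs):
        # Self-closing form such as <br/>: emit the tag once, no closing tag.
        if tag not in DROPPED_TAGS:
            self.handle_starttag(tag, attrs)

    def handle_endtag(self, tag):
        if tag in DROPPED_TAGS:
            self.skip_depth = max(0, self.skip_depth - 1)
        elif not self.skip_depth:
            self.out.append(f"</{tag}>")

    def handle_data(self, data):
        if not self.skip_depth:
            self.out.append(escape(data, quote=False))


def slim_html(html):
    """Return a stripped copy of the HTML intended for bot responses."""
    renderer = SlimRenderer()
    renderer.feed(html)
    renderer.close()
    return "".join(renderer.out)


page = (
    '<div class="p-6 text-gray-900" data-id="7"><h1 id="t">Price: $50</h1>'
    '<script>var x = 1;</script>'
    '<a href="/pricing" aria-label="Pricing">Plans</a></div>'
)
print(slim_html(page))
```

In a request handler, the idea would be to call `slim_html` on the rendered page only when `is_ai_bot(request_user_agent)` is true and serve the original HTML otherwise.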

Strategy 2: The "LLM-First" Sitemap

The ultimate optimization is to bypass HTML entirely. As we detailed in our guide on The /llms.txt Standard, you can offer a text-only version of your site.

- Implementation: Create a file at /llms.txt.

- Content: This file links to /docs/pricing.md instead of /pricing.

- Result: Agents that support the convention can fetch this file first and ingest the Markdown directly. Markdown is the "Native Language" of LLMs, with close to 100% Token Density.
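A file like this can be generated from a list of pages. The sketch below assumes the layout commonly described for /llms.txt (an H1 title, a blockquote summary, then H2 sections of Markdown links). The function `render_llms_txt`, the site name, and the example.com URLs are hypothetical placeholders.

```python
def render_llms_txt(site_name, summary, sections):
    """Render an llms.txt body: H1 title, blockquote summary, H2 link lists.

    `sections` maps a section heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site map pointing at Markdown versions of key pages.
llms_txt = render_llms_txt(
    "Example Co",
    "Plain-text versions of our most important pages.",
    {
        "Docs": [
            ("Pricing", "https://example.com/docs/pricing.md", "Plans and rate limits"),
            ("Features", "https://example.com/docs/features.md", "Product overview"),
        ]
    },
)
print(llms_txt)
```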

Strategy 3: Flattening the DOM

Refactor your component library to avoid "Div Soup."

Bad:

HTML

<div>
  <div>
    <div>
      <div>
        <h3>Title</h3>
      </div>
    </div>
  </div>
</div>

Good: Use modern CSS Grid/Flexbox to handle layout on the parent, removing the need for wrapper divs.

HTML

<article>
  <h3>Title</h3>
</article>

Strategy 4: Verifying the Gain

After implementing these changes, you must verify that the AI is actually seeing the lighter version.

- Use the Server Log Analysis technique we discussed previously.

- Check the response size field in your Nginx logs ($body_bytes_sent in the default combined format, or $bytes_sent).

- Goal: You should see that for requests from GPTBot, the average response size has dropped significantly (e.g., from 150KB to 20KB). This confirms your Dynamic Stripping is working.
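The log check can be scripted. This sketch assumes Nginx's default "combined" log format, where the response body size follows the status code. The function name `average_bytes_for_bot` and the sample log lines are made up for illustration.

```python
import re
from statistics import mean

# Nginx "combined" format:
# $remote_addr - $remote_user [$time_local] "$request" $status
# $body_bytes_sent "$http_referer" "$http_user_agent"
LINE_RE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "[^"]*" (?P<status>\d{3}) (?P<bytes>\d+) '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def average_bytes_for_bot(log_lines, bot_marker="GPTBot"):
    """Average response size of successful requests from a given bot."""
    sizes = []
    for line in log_lines:
        match = LINE_RE.match(line)
        if match and match["status"] == "200" and bot_marker in match["agent"]:
            sizes.append(int(match["bytes"]))
    return mean(sizes) if sizes else None


# Hypothetical log excerpt: two bot requests and one browser request.
GPTBOT_UA = ("Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); "
             "compatible; GPTBot/1.0; +https://openai.com/gptbot")
sample_log = [
    f'203.0.113.5 - - [10/Jan/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 20480 "-" "{GPTBOT_UA}"',
    f'203.0.113.5 - - [10/Jan/2025:10:00:05 +0000] "GET /features HTTP/1.1" 200 18432 "-" "{GPTBOT_UA}"',
    '198.51.100.7 - - [10/Jan/2025:10:00:09 +0000] "GET /pricing HTTP/1.1" 200 153600 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

average = average_bytes_for_bot(sample_log)
print(f"Average GPTBot response size: {average} bytes")
```

Running the same function on logs from before and after the change gives the two averages to compare.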

Part 6: Strategic Advantage – The "Cheapest Source" Wins

Why does this matter for your bottom line? It comes down to the RAG Selection Bias.

AI search engines (SearchGPT, Perplexity) operate on tight inference budgets. When they have to answer a query like "Compare HubSpot vs. Salesforce Pricing," they might retrieve 10 potential sources.

- Source A: 100k tokens (Bloated HTML, Hydration JSON).

- Source B: 5k tokens (Clean Semantic HTML).

The system is economically and computationally incentivized to process Source B.

- Speed: It has 20x fewer tokens to process.

- Accuracy: It has less noise to confuse the attention mechanism.

- Cost: It saves the search engine money.

By optimizing Token Efficiency, you are effectively lowering the "Gas Fee" for AI to interact with your brand. The lower the fee, the higher the transaction volume.

This correlates directly with your Share of Model. The easier you are to read, the more often you will be read, and the more often you will be cited.

Part 7: Conclusion & Action Plan

The era of "Human-First" web development is ending. We are entering the "Hybrid Era," where your website must serve two masters: the visual human and the textual robot.

Your 7-Day Engineering Action Plan:

- Run the Audit: Use the audit_tokens.py script provided above to test your Top 10 organic landing pages.

- Identify the Bloat: If your density is <15%, manually inspect the source code. Is it Tailwind classes? Is it __NEXT_DATA__?

- Implement Middleware: Configure your CDN (Cloudflare/Vercel) to serve a stripped HTML version to the GPTBot User-Agent.

- Publish /llms.txt: Create a Markdown map of your most critical content (Pricing, Features, About Us).

- Monitor Logs: Watch your server logs to confirm that bot payload sizes are decreasing.

Token Efficiency is not just a technical cleanup task; it is a Distribution Strategy. In an AI world, the leanest signal wins.
