Client-Side Rendering (CSR) vs. AI: The "Empty Shell" Audit

Definition

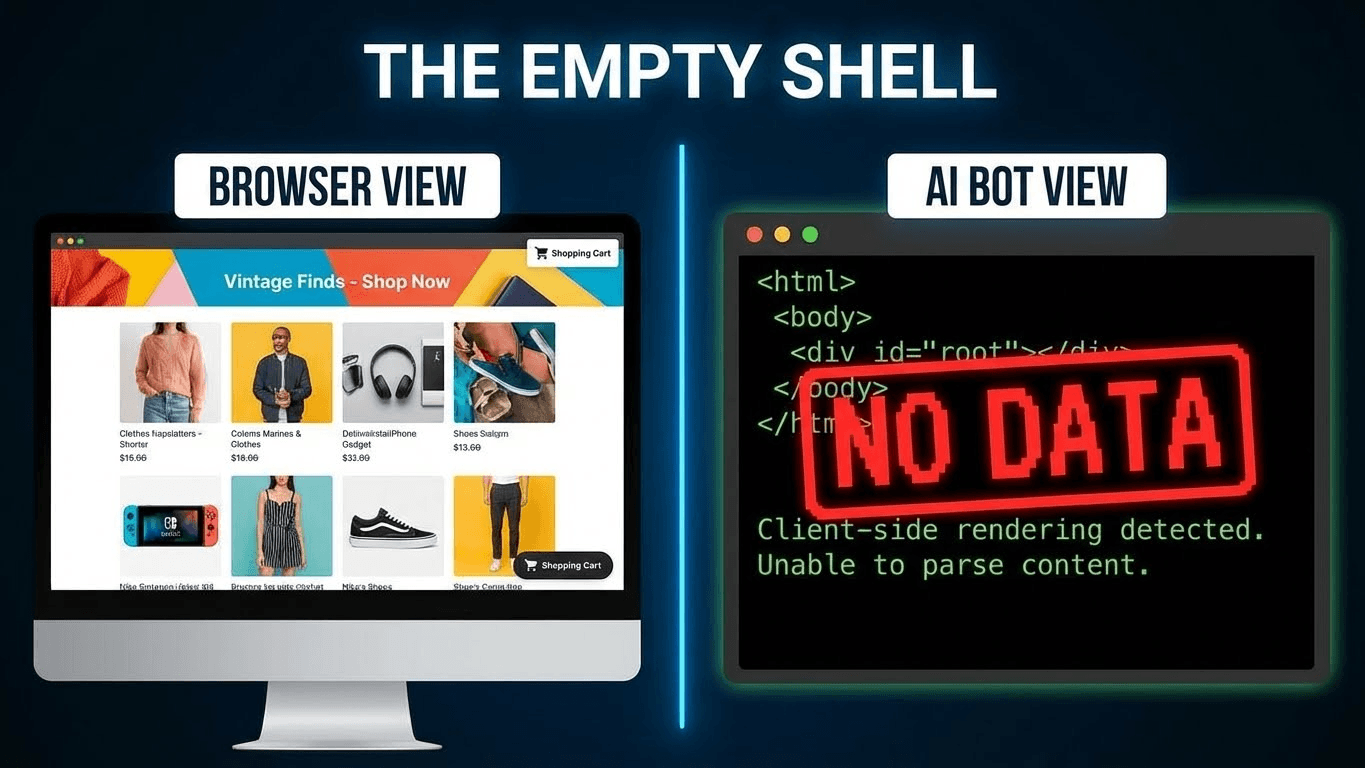

The "Empty Shell" effect is a critical crawl failure where an AI agent or search bot receives a blank or near-empty HTML document (often containing only a root <div>) because the page content relies entirely on client-side JavaScript execution. Unlike Googlebot, which has a dedicated rendering queue to execute scripts, most RAG (Retrieval-Augmented Generation) agents and LLM crawlers (such as GPTBot, ClaudeBot, and Common Crawl's CCBot) typically do not execute JavaScript. As a result, Client-Side Rendered (CSR) websites are effectively invisible to the AI ecosystem, regardless of their visual quality for human users.

Part 1: The Mechanics of Invisibility

To understand why your modern React application is invisible to ChatGPT, we have to look at the economics of web crawling.

For the last decade, web development has drifted toward a "Client-First" architecture. Frameworks like React, Vue, and Angular popularized the Single Page Application (SPA). In this model, the server’s job is easy: send a tiny HTML skeleton and a massive bundle of JavaScript. The browser (the Client) does the hard work of executing that JavaScript to fetch data, build the DOM, and paint the pixels.

The Human Experience:

- Request Page.

- Receive blank HTML.

- Browser spins (Loading...) while downloading 2MB of JS.

- JS executes, fetches JSON data from an API.

- Content Appears.

Total time: ~0.8 seconds. The user barely notices the "flicker."

The AI Experience (The "View Source" Trap):

- Request Page.

- Receive blank HTML.

- Stop.

Why does the AI stop? Why doesn't it wait for the JavaScript?

The Economics of RAG vs. Googlebot

Google has invested heavily in its Web Rendering Service (WRS), a massive headless Chromium farm that executes JavaScript for the pages it indexes. It essentially "views" your page like a human.

AI crawlers (GPTBot, ClaudeBot, PerplexityBot) generally do not do this.

The most likely reason is Compute Cost vs. Token Value.

- Standard Crawl (HTTP GET): Costs fractions of a penny. It downloads text strings. It is fast, lightweight, and scalable to billions of pages.

- Headless Render (Puppeteer/Selenium): Can cost roughly 10x to 50x more compute. It requires allocating CPU and RAM to run a browser instance, parse and execute JavaScript, and wait for the network to go idle.

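For a RAG agent answering a query in real time, waiting 3 seconds for a React app to fetch its data and render is unacceptable because it breaks the latency budget. For a company crawling training data, that same render cost, multiplied across billions of pages, is hard to justify.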

Therefore, most RAG pipelines default to Text-Only Extraction. They download the raw HTTP response. If that response is <div></div>, that is exactly what gets indexed. To the AI, your website is quite literally an empty shell.
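As a rough illustration of text-only extraction, the shell function below reads raw HTML, drops <script> and <style> blocks, strips the remaining tags, and prints whatever text is left. It is a sketch under simple assumptions (lowercase tags, no entity decoding). It is not how any specific crawler parses pages, and the function name visible_text is hypothetical.

```bash
#!/usr/bin/env bash
# Rough approximation of "text-only extraction": read raw HTML on stdin,
# drop <script>/<style> blocks and all tags, print the remaining text.
# Heuristic only: tag matching is case-sensitive and entities are not decoded.
visible_text() {
  # Join all lines into one record so blocks spanning lines are removed too.
  tr '\r\n' '  ' | awk '
    # Remove every span from open_tag up to and including close_tag.
    function strip(s, open_tag, close_tag,   out, i, j) {
      out = ""
      while ((i = index(s, open_tag)) > 0) {
        out = out substr(s, 1, i - 1)
        s = substr(s, i)
        j = index(s, close_tag)
        if (j == 0) return out          # unterminated block: drop the rest
        s = substr(s, j + length(close_tag))
      }
      return out s
    }
    {
      t = strip($0, "<script", "</script>")
      t = strip(t, "<style", "</style>")
      gsub(/<[^>]*>/, " ", t)           # remaining tags become spaces
      gsub(/[ \t]+/, " ", t)            # collapse runs of whitespace
      sub(/^ /, "", t); sub(/ $/, "", t)
      print t
    }'
}

# Usage (hypothetical URL):
#   curl -s https://yourdomain.com/pricing | visible_text
```

Run against a CSR page, this typically prints an empty line. Run against an SSR or SSG page, it prints the headings, prices, and body copy that a text-only pipeline could actually index.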

Part 2: The Audit – How to See What the Bot Sees

Many developers miss this because they audit their site using Chrome's "Inspect Element" tool.

"Inspect Element" is a lie. It shows you the current state of the DOM after JavaScript has run. It shows you the human view. To audit for AEO (Answer Engine Optimization), you must look at the Raw HTTP Response.

The "View Source" Test

Open your most important product page. Right-click and select "View Page Source" (or Ctrl+U).

- Do you see your product description text?

- Do you see your pricing numbers?

- Do you see your Entity Home data?

If you see only <script> tags and a root div, you have failed the audit.

The Command Line Audit (Spoofing the Agent)

For a more precise test, audit your site by acting like a bot. Open your terminal and use curl to fetch your page while masquerading as GPTBot.

```bash
# Spoofing GPTBot to check for an Empty Shell
curl -A "Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.0; +https://openai.com/gptbot)" https://yourdomain.com/pricing
```

Analyze the Output:

- Pass: You see <h1>Enterprise Pricing</h1> and <table>...</table> in the terminal output.

- Fail: You see an empty root <div></div> followed by <script> tags or a wall of minified JavaScript.
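You can wrap the check in a small function so it runs across several pages without anyone reading terminal output. This is a sketch. The helper name gptbot_audit is hypothetical. The expected phrases are whatever text must appear on the page, for example a heading, a price, and a product description. A plain substring match on the raw HTML is a deliberately crude stand-in for "the content is there."

```bash
#!/usr/bin/env bash
# GPTBot user-agent string (check OpenAI's crawler documentation for the
# current version; only the "GPTBot" token matters for UA-based routing).
GPTBOT_UA='Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.0; +https://openai.com/gptbot)'

# gptbot_audit URL PHRASE...
# Fetches URL once with the GPTBot user agent (curl never executes JavaScript)
# and prints PASS or FAIL for each expected phrase found, or not, in the raw HTML.
# Returns 1 if any phrase is missing, 0 otherwise.
gptbot_audit() {
  local url=$1; shift
  local html status=0 phrase
  # -s hides the progress meter, -L follows redirects.
  html=$(curl -s -L -A "$GPTBOT_UA" "$url") || { echo "FAIL fetch $url"; return 1; }
  echo "Fetched ${#html} characters from $url"
  for phrase in "$@"; do
    if printf '%s' "$html" | grep -qF -- "$phrase"; then
      echo "PASS  $phrase"
    else
      echo "FAIL  $phrase"
      status=1
    fi
  done
  return "$status"
}

# Usage (hypothetical URL and phrases):
#   gptbot_audit https://yourdomain.com/pricing "Enterprise Pricing" "<table"
```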

Part 3: Architectural Solutions

If you identify an "Empty Shell" problem, you cannot fix it with meta tags or robots.txt. This is a fundamental architectural flaw in how your server delivers data. You must shift the rendering workload from the Client back to the Server.

Here are the three architectural patterns that solve this, ranked by AI effectiveness.

1. Static Site Generation (SSG) – The "Gold Standard"

- Best For: Marketing pages, Blogs, Documentation, "About Us" pages.

- Frameworks: Next.js (Static Exports), Gatsby, Hugo, Astro.

How it works:

With SSG, your build step (e.g., on Vercel or Netlify) renders each page once during deployment and writes an .html file for every route on your site. When a user or bot requests a page, the server or CDN simply hands over that pre-built file.

Why AI Loves It:

- Near-Zero Latency: There is no database query and no rendering at request time, so the "Time to First Byte" (TTFB) is typically as low as your CDN or file server can deliver.

- Perfect Fidelity: The HTML is fully formed text, so there is no render step that can time out before the content appears.

- Token Efficiency: Content-focused SSG frameworks (such as Astro or Hugo) can ship little or no client-side JavaScript. As a result, more of the HTML a crawler downloads is actual content rather than script, which raises its token density.
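A static export can still contain empty shells if some routes fetch their content only in the browser. A quick post-build check is to list the HTML files whose app mount point is empty. The sketch below assumes that the export lands in a directory such as out/ (the Next.js default for static exports) and that the app mounts into a div with the id root, app, or __next. The function name and the id list are assumptions, so adjust them to your framework.

```bash
#!/usr/bin/env bash
# find_empty_shells [DIR]
# Lists .html files under DIR (default: out) whose app mount point is empty,
# e.g. <div id="root"></div>. Heuristic: grep is line-based, so it only catches
# the common single-line pattern, and it assumes the ids root, app, or __next.
find_empty_shells() {
  local dir=${1:-out}
  # -r: recurse, -l: print only matching file names, -E: extended regex.
  # "|| true" keeps "no empty shells found" from looking like an error.
  grep -rlE --include='*.html' \
    '<div id="(root|app|__next)"[^>]*>[[:space:]]*</div>' "$dir" || true
}

# Usage after the build step; fail the build if anything is listed:
#   shells=$(find_empty_shells out)
#   [ -z "$shells" ] || { echo "Empty shells:"; echo "$shells"; exit 1; }
```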

2. Server-Side Rendering (SSR) – The "Dynamic Standard"

- Best For: Pricing pages with real-time currency conversion, Inventory feeds, User-specific dashboards.

- Frameworks: Next.js (getServerSideProps), Nuxt (asyncData), Remix.

How it works:

When a request hits your server, the server queries the database, renders the React/Vue components into an HTML string, and sends that string to the client.

Why AI Loves It:

Like SSG, the initial response contains the full content. The AI does not need to execute JS to see the price.

- Trade-off: It is slower than SSG because the server has to "think" for every request. If your database is slow, your TTFB increases, which can eat into your crawl budget.
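To see whether server-side rendering is pushing TTFB up, you can have curl report time to first byte through its --write-out variable time_starttransfer. The sketch below compares that figure to a threshold. The 0.5-second default is an arbitrary placeholder, not a published crawler limit, and the function name ttfb_check is hypothetical.

```bash
#!/usr/bin/env bash
# ttfb_check URL [MAX_SECONDS]
# Prints the time to first byte reported by curl and returns 1 if it exceeds
# MAX_SECONDS (default 0.5, an arbitrary placeholder). Returns 2 if curl fails.
ttfb_check() {
  local url=$1 max=${2:-0.5} ttfb
  # -o /dev/null discards the body; -w prints only the timing variable.
  ttfb=$(curl -s -o /dev/null -w '%{time_starttransfer}' "$url") || return 2
  echo "TTFB ${ttfb}s for $url (limit ${max}s)"
  # Shell arithmetic is integer-only, so awk does the floating-point comparison.
  awk -v t="$ttfb" -v m="$max" 'BEGIN { exit !(t <= m) }'
}

# Usage (hypothetical URL): ttfb_check https://yourdomain.com/pricing 0.8
```

Run it a few times, because a single TTFB sample can be skewed by cold caches or network noise.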

3. Dynamic Rendering – The "Legacy Patch"

- Best For: Massive legacy React/Angular apps that cannot be rewritten in Next.js/Nuxt.

- Tools: Prerender.io, Rendertron (Google has since deprecated Rendertron).

How it works:

This is "Cloaking for Good." Your server runs middleware that checks the User-Agent of the incoming request.

- If User-Agent is Human (Chrome/Safari): Serve the standard Client-Side React app.

- If User-Agent is Bot (Googlebot/GPTBot/Slackbot): Proxy the request to a headless browser service (like Prerender.io), which executes the JS, takes a snapshot of the rendered HTML, and serves that static snapshot to the bot.
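The routing decision in this middleware comes down to a user-agent match. The sketch below expresses only that decision as a shell function, which you could use to unit-test the bot list you plan to configure. It is not middleware itself. The function name route_for_ua is hypothetical, and the token list (Googlebot, GPTBot, ClaudeBot, PerplexityBot, Slackbot) is an illustrative assumption to adapt.

```bash
#!/usr/bin/env bash
# route_for_ua USER_AGENT
# Prints "prerender" when the user agent contains a known crawler token and
# "spa" otherwise. Matching is case-insensitive; the token list is illustrative.
route_for_ua() {
  local ua
  # Lowercase the UA portably (no bash 4 ${var,,} needed).
  ua=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
  case "$ua" in
    *googlebot*|*gptbot*|*claudebot*|*perplexitybot*|*slackbot*)
      echo prerender ;;
    *)
      echo spa ;;
  esac
}
```

Any user agent that falls through to spa receives the client-side bundle. A crawler missing from the list therefore silently gets the empty shell, which is why the list needs regular maintenance.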

Why AI Accepts It:

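It works: the bot gets the HTML it needs. However, it introduces "cache lag." If you update a price, the human sees it instantly, but the bot sees the cached snapshot until the cache expires. This can lead to Brand Hallucinations, where the AI quotes an outdated price. Google itself now describes dynamic rendering as a workaround rather than a long-term solution and recommends server-side rendering, static rendering, or hydration instead.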

Part 4: Comparative Analysis of Rendering Modes

| Feature | Client-Side (CSR) | Server-Side (SSR) | Static (SSG) | Dynamic Rendering |
|---|---|---|---|---|
| Initial HTML | Empty (<div>) | Full Content | Full Content | Full Content (for bots) |
| JS Execution | Client (Browser) | Server | Build Time | Third-Party Service |
| AI Visibility | Zero (Invisible) | High | Maximum | High |
| Latency (TTFB) | Fast | Medium | Instant | Slow (First hit) |
| Compute Cost | Low (User pays) | High (You pay) | Low (Build once) | Medium (Service fee) |
| Maintenance | Easy | Moderate | Moderate | High (Middleware config) |

Part 5: A Technical Case Study – The "Invisible SaaS"

Let’s look at a hypothetical disaster scenario to illustrate the stakes.

The Company: "CloudFlow," a B2B SaaS platform.

The Move: They migrated their marketing site from WordPress (SSR) to a "modern" React SPA (CSR) to improve transition animations and user experience.

The Result:

- Traffic Drop: Organic traffic dipped slightly, but not catastrophically (Googlebot eventually rendered the JS).

- The Invisible Crisis: Six months later, marketing noticed that when users asked ChatGPT, "What is the best workflow tool for enterprise?", CloudFlow was never mentioned. Even when asked specifically about CloudFlow, ChatGPT replied: "I don't have specific pricing or feature details for CloudFlow."

The Diagnosis:

The Head of AEO ran a curl audit.

```bash
curl https://cloudflow.io/features
```

Output:

```html
<div>Loading features...</div>
```

Because CloudFlow’s feature list was locked behind a JS bundle, the AI crawlers (which had recently re-crawled the site) effectively "forgot" everything about the product. Their copy of the page, and therefore the AI training data, contained only the "Loading..." text.

The Fix:

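CloudFlow didn't need to scrap React. They migrated to Next.js and used Incremental Static Regeneration (ISR). ISR serves pre-built static HTML and regenerates individual pages in the background after a set revalidation interval, so content updates do not require a full rebuild.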

- The /features and /pricing pages were pre-built as static HTML.

- The /dashboard remained CSR (since bots don't log in).

The Outcome:

Within 3 weeks of the next crawl cycle, Perplexity and ChatGPT began citing CloudFlow’s features again. Their Share of Model metrics rebounded from near-zero to 45%.

Part 6: Key Takeaways for the CTO

- JavaScript is an Accessibility Barrier for AI: Just as you optimize for screen readers, you must optimize for "Token Readers." If content requires a click, scroll, or script execution to appear, it effectively does not exist in the vector database.

- Server-Side is the Safe Side: For any page that is public-facing (Marketing, Blog, Docs, Pricing), SSR or SSG is mandatory. Reserve CSR strictly for private, logged-in user states.

- Audit with curl, not Chrome: Your eyes deceive you. The only truth is the raw HTTP response. Make curl audits a part of your CI/CD pipeline.

- HTML Structure Matters: Once you enable SSR, ensure your HTML is semantically rich. As discussed in our HTML Formatting Guide, clean tags (<table>, <article>) help the AI parse the content you just made visible.

- Performance = Ingestibility: Static Site Generation (SSG) is not just faster for humans; it is also cheaper for AI crawlers. Low-latency, high-fidelity text pages are the cheapest for ingestion pipelines to fetch and parse, because nearly every downloaded byte is usable content.
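One way to make the curl audit part of a CI/CD pipeline is a script that fetches each public route without executing JavaScript and fails the pipeline when an expected phrase is missing. The sketch assumes a hypothetical routes file with one "URL|expected phrase" pair per line. The file format, the function name ci_empty_shell_gate, and the choice of the GPTBot user agent are all illustrative.

```bash
#!/usr/bin/env bash
# ci_empty_shell_gate ROUTES_FILE
# Each line of ROUTES_FILE: URL|expected phrase (blank lines and # comments skipped).
# Fetches every URL with a GPTBot user agent, reports routes whose raw HTML
# lacks the phrase, and returns non-zero if any failed so the CI job fails.
ci_empty_shell_gate() {
  local ua='Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.0; +https://openai.com/gptbot)'
  local url phrase html failures=0
  # "|| [ -n "$url" ]" also processes a last line without a trailing newline.
  while IFS='|' read -r url phrase || [ -n "$url" ]; do
    case "$url" in ''|'#'*) continue ;; esac
    # </dev/null keeps curl from touching the routes file on stdin.
    html=$(curl -s -L -A "$ua" "$url" </dev/null)
    if printf '%s' "$html" | grep -qF -- "$phrase"; then
      echo "ok    $url"
    else
      echo "EMPTY $url (missing: $phrase)"
      failures=$((failures + 1))
    fi
  done < "$1"
  echo "$failures route(s) failed"
  [ "$failures" -eq 0 ]
}

# Example routes file (hypothetical URLs and phrases):
#   https://yourdomain.com/pricing|Enterprise Pricing
#   https://yourdomain.com/features|Workflow automation
# In the CI job: ci_empty_shell_gate routes.txt || exit 1
```

Listing only public routes, such as marketing, docs, and pricing, keeps the gate aligned with the rule that CSR is reserved for logged-in pages.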

References & Further Reading

- Google Search Central: Understand the JavaScript SEO Basics. Google's own guide on the limitations of JS-heavy sites and the processing resources required to render them.

- Vercel / Next.js: Rendering Fundamentals. Deep technical documentation on the architectural differences between Client-Side, Server-Side, and Static rendering.

- Prerender.io: How Dynamic Rendering Works. A comprehensive guide to the middleware approach for serving static snapshots to crawlers.

- OpenAI: GPTBot Documentation. Technical specs for OpenAI's crawler, including its user-agent string and how to allow or block it via robots.txt.