Imagine spending thousands of dollars on a beautiful, modern website. It has interactive product carousels, dynamic content that loads as you scroll, and a sleek, single-page application (SPA) design. To a human visitor, it is a masterpiece of user experience (UX). But to the most powerful artificial intelligence models on the planet—the ones powering the future of search—it might as well be a blank page.

This is the alarming reality of the "AI Readability Gap." As the digital landscape shifts from traditional Search Engine Optimization (SEO) to Generative Engine Optimization (GEO), a new and dangerous problem has emerged: technical blindness.

Your website might be perfectly optimized for a human's eyes and formatted for a 2015-era Google crawler, but it is failing the test that matters most for the next decade: being readable by Large Language Models (LLMs) like ChatGPT, Claude, and Gemini. If these "Answer Engines" cannot parse your code, they cannot recommend your brand.

This article will dissect why this phenomenon is happening, the catastrophic consequences for your brand's visibility, and the specific technical solutions you must implement to ensure your digital presence survives the transition to AI-first search.

The Great Disconnect: Human vs. Machine Perception

The core of the problem lies in a fundamental misunderstanding of how humans and machines consume web content. We often assume that what we see in our web browser is what the bot sees. In the era of static HTML, this was largely true. In the era of the modern web, it is a dangerous fallacy.

A human "reads" a fully rendered webpage. This is the final product after your browser has downloaded the HTML, fetched the CSS styles, and executed the JavaScript files that build the page layout and populate the text.

An AI crawler, by default, often "reads" only the raw initial HTML response from your server. For many modern websites built with heavy JavaScript frameworks like React, Angular, or Vue, that initial HTML is little more than a hollow shell: a container waiting for JavaScript to fetch and populate the actual content.

- Human Readability: Relies on visual layout, interactivity, colors, and fully rendered content.

- LLM Readability: Relies on the raw HTML source code, text density, semantic structure, and structured data protocols.

If your content only exists after JavaScript runs, you are effectively serving a blank plate to the AI. The crawler requests your URL, receives a nearly empty file, and moves on. The image below illustrates this dramatic disconnect between the rich experience you think you are providing and the empty code the AI is actually receiving.

The "View Source" vs. "Inspect Element" Trap

To understand this technically, one must distinguish between "View Source" and "Inspect Element." When a developer checks a site, they often use "Inspect Element," which shows the DOM (Document Object Model) after the browser has done the hard work of building the page.

However, many AI bots operate closer to "View Source"—they look at the raw file delivered by the server. If your product descriptions, pricing, and unique selling propositions are injected dynamically via JavaScript, they do not exist in the "View Source" code. To the AI, your "Best CRM for Small Business" landing page is just a generic loading spinner.
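To make the "View Source" view concrete, here is a minimal Python sketch that takes the raw HTML a server returns and reports which of your key phrases never appear in its text. It assumes a crawler that does not run JavaScript; the function names (`visible_text`, `missing_phrases`) and the sample markup are illustrative and not taken from any particular crawler.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text nodes, ignoring anything inside <script> or <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Text inside <script>/<style> is code, not content, so it is skipped.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data)


def visible_text(raw_html):
    """Return the text a non-rendering crawler could read, whitespace-normalized."""
    parser = VisibleTextExtractor()
    parser.feed(raw_html)
    parser.close()
    return " ".join(" ".join(parser.chunks).split())


def missing_phrases(raw_html, phrases):
    """Return the phrases that do NOT appear in the raw HTML's text."""
    text = visible_text(raw_html).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


# Hypothetical client-side-rendered page: the headline only exists inside a script.
shell = (
    '<html><body><div id="root"></div>'
    '<script>render("Best CRM for Small Business")</script></body></html>'
)
# Hypothetical server-rendered page: the headline is in the markup itself.
rendered = (
    "<html><body><h1>Best CRM for Small Business</h1>"
    "<p>Plans from $29 per month.</p></body></html>"
)

print(missing_phrases(shell, ["Best CRM for Small Business"]))
print(missing_phrases(rendered, ["Best CRM for Small Business", "$29"]))
```

In the first case the headline is reported as missing even though a human visitor would see it after the script runs. In the second case nothing is missing.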

The JavaScript Barrier: The "Render Budget" Problem

You might be thinking, "Doesn't Google render JavaScript? Won't AI models eventually do the same?" The answer is complex. While Google has the capability to render JavaScript, doing so is computationally expensive. It requires significantly more processing power (CPU cycles), memory, and time than simply crawling static HTML.

Because of this, search engines and AI companies operate with a strict "render budget." They cannot afford to execute the heavy JavaScript code for every single page on the internet instantly. They must prioritize.

As outlined in the official Google Search Central documentation on JavaScript SEO basics, the rendering process is often deferred. Googlebot may crawl the raw HTML immediately but queue the rendering for later. Google notes that a page may wait in that queue for only a few seconds, but that it can take longer.

For real-time AI models that need instant answers to user queries, this delay is unacceptable. If an LLM is scanning the web to answer a user's specific question right now, and your site's content is locked behind a JavaScript execution queue, the AI will simply skip your site. It is a matter of efficiency and economics. The AI chooses the path of least resistance: the static, text-heavy site that requires zero rendering time.

The Economics of AI Crawling

We must also consider the cost. OpenAI, Google, and Perplexity are burning billions of dollars in compute costs. Rendering a JavaScript-heavy page costs significantly more than parsing a static HTML page. As these companies look to optimize their own margins, they will inevitably deprioritize sites that are "expensive" to read. If your site requires a supercomputer to render just to find out the price of a pair of shoes, you are creating economic friction that will result in your exclusion from the index.

Unstructured Chaos: The "HTML Soup" Dilemma

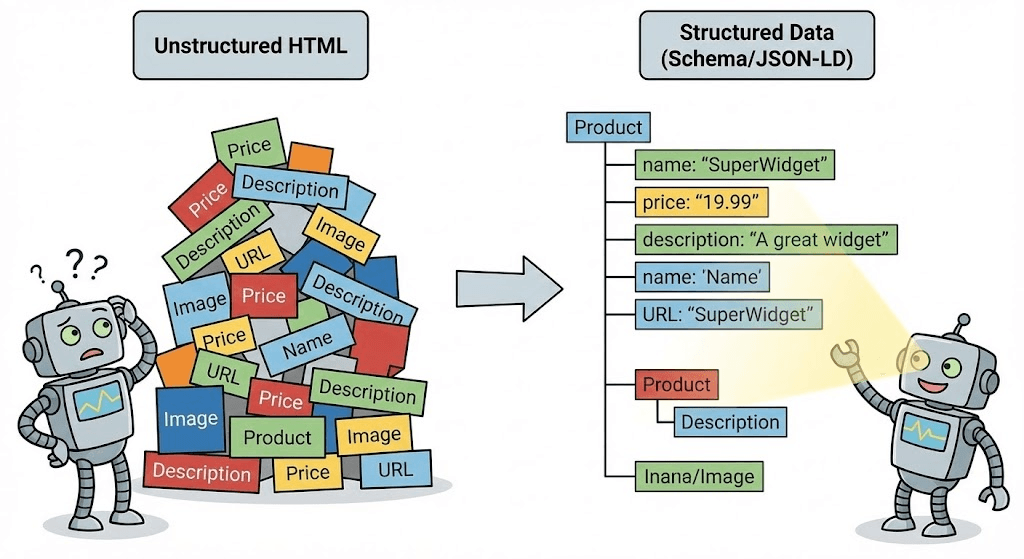

The problem isn't limited to JavaScript. It also stems from the quality and structure of your HTML. Even if the AI can see your text, it might not understand it.

LLM-based search systems typically represent text as "vector embeddings": numerical representations of text that capture meaning and context. To build these accurately, they benefit from the semantic structure of your HTML, which supplies cues about hierarchy and importance.

If your website is a messy "soup" of generic <div> and <span> tags with no meaningful hierarchy, the AI struggles to parse the information. A human visualizes hierarchy through font size and bold text; a machine needs semantic tags like <h1>, <article>, <header>, and <footer> to understand the relationship between different pieces of content.
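One rough way to see how "soupy" a page is: count its semantic tags against its generic ones. The Python sketch below does that with the standard library. The two tag sets and the `tag_profile` name are assumptions chosen for illustration, and the counts are a conversation starter rather than a score any AI system is known to compute.

```python
from collections import Counter
from html.parser import HTMLParser

# Illustrative tag sets; adjust them to your own markup conventions.
SEMANTIC_TAGS = {
    "header", "nav", "main", "article", "section", "aside", "footer",
    "h1", "h2", "h3", "h4", "h5", "h6", "table", "figure",
}
GENERIC_TAGS = {"div", "span"}


class TagCounter(HTMLParser):
    """Counts how many times each opening tag appears."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        self.counts[tag] += 1


def tag_profile(raw_html):
    """Summarize semantic vs. generic tag usage in a piece of HTML."""
    counter = TagCounter()
    counter.feed(raw_html)
    counter.close()
    semantic = sum(n for tag, n in counter.counts.items() if tag in SEMANTIC_TAGS)
    generic = sum(n for tag, n in counter.counts.items() if tag in GENERIC_TAGS)
    return {"semantic": semantic, "generic": generic, "h1": counter.counts["h1"]}


# Two hypothetical versions of the same product snippet.
soup = "<div><div><span>Acme CRM</span></div><div><span>$29</span></div></div>"
structured = "<main><article><h1>Acme CRM</h1><p>$29</p></article></main>"

print(tag_profile(soup))
print(tag_profile(structured))
```

Both snippets carry the same words, but only the second one tells a parser which text is the headline and where the main content starts.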

Without this structure, your content is a jumbled mess of words. The AI cannot easily distinguish a product name from a navigation link, or a price from a phone number. Borrowing a term from language modeling, this raises the "perplexity" of your page, a rough measure of how uncertain the model is about the text it is reading. A model that is unsure what your page says is less likely to trust it, and content it does not trust is unlikely to be cited.

The Consequences of Being Invisible

The consequences of technical blindness are far more severe than just losing a few organic clicks. In the AI era, invisibility can actively damage your brand's reputation and revenue stream.

1. Hallucinations and Brand Damage

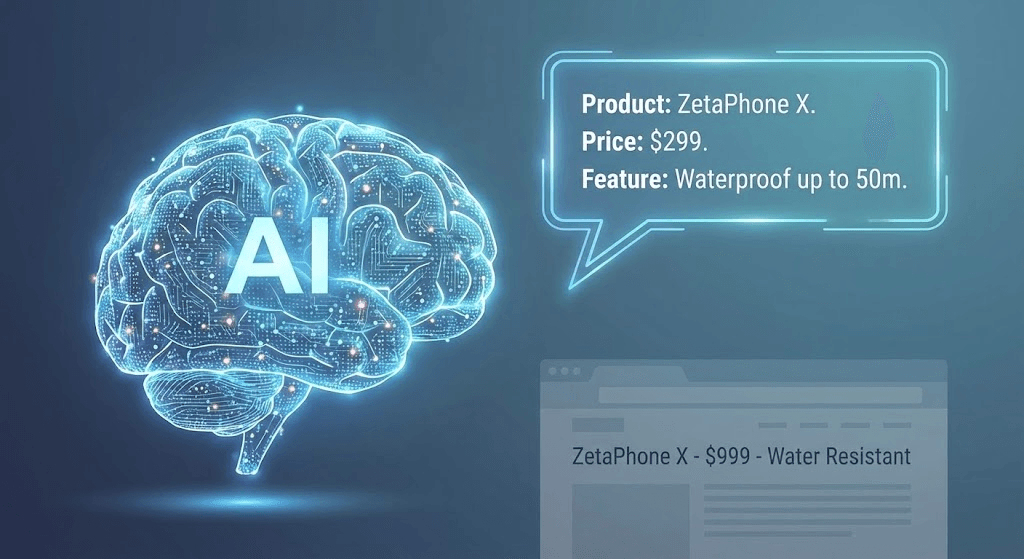

LLMs are designed to be helpful above all else. If a user asks a question about your product, the AI will try to provide an answer. If it cannot "read" your website to find the correct, real-time information (e.g., your current pricing, return policy, or feature list), it won't simply say "I don't know."

Instead, it may hallucinate. It will confidently invent an answer based on outdated data from its training set, probabilistic guesses, or information found on third-party aggregator sites.

This is a brand safety nightmare. Imagine an AI telling a potential customer that your product costs $299 when it actually costs $999, or that it has a feature you discontinued three years ago. This misinformation is delivered with the absolute authority of an omniscient AI, and the user is likely to believe it, leading to customer support disputes and eroded trust.

2. Losing the "Share of Model" (SoM)

The new metric of success is no longer just "Share of Voice" or "Traffic"; it is "Share of Model" (SoM). This refers to the frequency with which your brand is cited or recommended in AI-generated responses.
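There is no single agreed formula for SoM, but one simple way to put a number on it is the fraction of sampled AI answers that mention your brand. The Python sketch below assumes you have already collected a list of answer texts for a set of prompts; the `share_of_model` name, the brand names, and the sample answers are all hypothetical.

```python
def share_of_model(responses, brand):
    """Fraction of responses that mention the brand (case-insensitive substring match)."""
    if not responses:
        return 0.0
    needle = brand.lower()
    mentions = sum(1 for text in responses if needle in text.lower())
    return mentions / len(responses)


# Hypothetical answers collected for the prompt "What is the best CRM for a small business?"
sample_answers = [
    "For small teams, Acme CRM and Beta CRM are good picks.",
    "Beta CRM is the most popular option.",
    "Consider Acme CRM for its pricing.",
    "Gamma Suite is a solid choice.",
]

for name in ("Acme CRM", "Beta CRM", "Gamma Suite"):
    print(name, share_of_model(sample_answers, name))
```

A substring match is deliberately crude: it ignores misspellings, whether the mention is positive, and where in the answer the brand appears. Treat it as a starting point for tracking a trend over time.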

When a user asks a conversational question like, "What is the best CRM for a small business?", the AI will provide a synthesized answer, often recommending one or two top brands. This is the "Zero-Click" future. If your content is invisible to the crawler, you are not in the running for this recommendation. You won't just lose the click; you will lose the entire conversation. You are effectively being erased from the primary interface through which future consumers will discover products.

3. Competitor Espionage and Displacement

While you struggle with heavy JavaScript and unstructured code, your competitors may be optimizing for AI. If a competitor has a clean, static, text-heavy site with excellent schema markup, the AI will prioritize their content.

This leads to a phenomenon where the AI might answer a query about your industry using their data. Even worse, if the AI cannot read your value proposition, it cannot defend your brand against competitors. If a user asks, "Why is Brand X better than Brand Y?" and the AI can read Brand Y's site but not Brand X's, the answer will be heavily biased toward Brand Y.

The Litmus Test: Are You Invisible?

How can you tell if your website suffers from technical blindness? You do not need to be a developer to perform a preliminary audit. There is a simple diagnostic test you can perform in seconds.

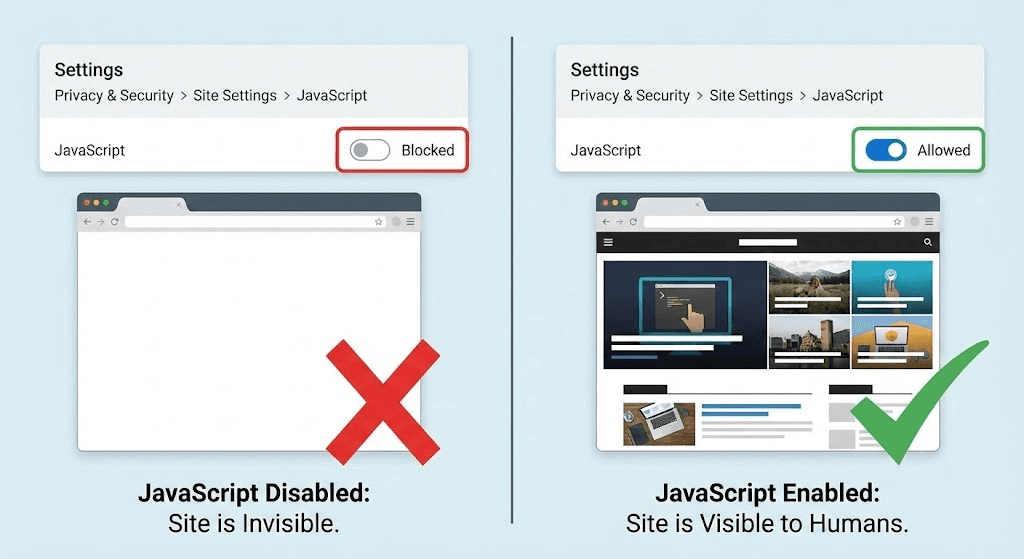

Since many crawlers (and potentially LLM tools that fetch pages in real time to answer a question) do not execute JavaScript by default, you can simulate their view by disabling JavaScript in your browser.

- Open your website in Google Chrome or your preferred browser.

- Go to Settings: Navigate to Privacy and security > Site Settings > JavaScript.

- Disable JavaScript: Select "Don't allow sites to use JavaScript."

- Refresh your website.

What do you see?

If your site displays a blank white screen, a broken layout, or is missing key content like product descriptions or articles, then you are largely invisible to AI crawlers. If the text remains visible and readable, even if the fancy animations are gone, you are in a much better position.
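If you would rather script this check than click through browser settings, the sketch below approximates it in Python: fetch the raw HTML without running any JavaScript, strip the markup, and count the words that remain. The 50-word threshold, the `readability-check/0.1` user agent string, and the function names are arbitrary assumptions, and the regex-based stripping is a crude heuristic, not a full HTML parser.

```python
import re
import urllib.request

# Matches whole <script>...</script> and <style>...</style> blocks.
_SCRIPT_OR_STYLE = re.compile(r"<(script|style)\b.*?</\1\s*>", re.IGNORECASE | re.DOTALL)
_TAG = re.compile(r"<[^>]+>")


def fetch_raw_html(url, user_agent="readability-check/0.1"):
    """Download the initial HTML response only; no JavaScript is executed."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def visible_word_count(raw_html):
    """Count words left after removing script/style blocks and all tags."""
    without_code = _SCRIPT_OR_STYLE.sub(" ", raw_html)
    text = _TAG.sub(" ", without_code)
    return len(text.split())


def looks_like_empty_shell(raw_html, min_words=50):
    """Heuristic: very little text in the raw HTML suggests client-side rendering."""
    return visible_word_count(raw_html) < min_words


# Hypothetical single-page-app shell: a title, an empty root element, and a script.
shell = (
    "<html><head><title>Shop</title></head>"
    '<body><div id="root"></div><script src="/app.js"></script></body></html>'
)
print(visible_word_count(shell), looks_like_empty_shell(shell))
```

To run it against a live page, pass the result of `fetch_raw_html("https://example.com/")` (substituting your own URL) to `looks_like_empty_shell`. A low word count is a prompt to look closer, not proof of a problem: a short landing page can legitimately have little text.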

The Solution: Bridging the Readability Gap

The solution is not to abandon modern web technologies or return to the design aesthetics of 1999. Instead, you must adapt your architecture for a dual audience: providing a rich experience for humans and a structured, pre-rendered experience for bots.

Server-Side Rendering (SSR) and Prerendering

The most effective technical fix is to ensure that your server sends a fully populated HTML document to the crawler in the initial request. This is known as Server-Side Rendering (SSR).

Instead of sending an empty shell and a pile of JavaScript, your server executes the code first, generates the final HTML, and sends that complete page to the bot. This eliminates the need for the crawler to expend its precious "render budget" on your site. It gets the content instantly, in a format it can immediately parse and understand.
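Stripped of any framework, the idea looks like the Python sketch below: the server builds the complete HTML string, content included, before anything is sent. The product fields, the `render_product_page` name, and the `/static/app.js` path are placeholders for illustration. In practice, your framework's own SSR mode would do this work for you.

```python
from html import escape


def render_product_page(product):
    """Build the full HTML document on the server, with the content already in it."""
    name = escape(product["name"])
    description = escape(product["description"])
    price = escape(product["price"])
    features = "\n".join(
        f"        <li>{escape(item)}</li>" for item in product["features"]
    )
    return f"""<!doctype html>
<html lang="en">
<head><title>{name}</title></head>
<body>
  <main>
    <article>
      <h1>{name}</h1>
      <p>{description}</p>
      <p>Price: {price}</p>
      <ul>
{features}
      </ul>
    </article>
  </main>
  <!-- Scripts can still add interactivity after the content has arrived. -->
  <script src="/static/app.js" defer></script>
</body>
</html>"""


# Hypothetical product record, e.g. loaded from your database.
page = render_product_page({
    "name": "Acme CRM",
    "description": "A CRM built for small teams.",
    "price": "$29 per month",
    "features": ["Contact management", "Import & export"],
})
print(page)
```

The key property is that the headline, description, and price are present in the very first response, so a crawler that never runs the script still gets the full content.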

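If moving to full SSR is too complex for your current tech stack, Dynamic Rendering is an alternative. This involves detecting if the visitor is a bot (like Googlebot or GPTBot) and serving them a pre-rendered, static HTML version of the page, while serving the heavy JavaScript version to human users. Two cautions apply: Google's own documentation now describes dynamic rendering as a workaround rather than a long-term solution, and the pre-rendered version must contain the same content humans see, or it risks being treated as cloaking.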
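The bot-detection step can be sketched in Python as a simple user-agent check. The token list below is illustrative and incomplete (Googlebot and GPTBot are named above; ClaudeBot and PerplexityBot are added as further examples), and the `is_known_bot` and `choose_variant` names are assumptions. User-agent strings can also be spoofed, so treat this as routing logic, not security.

```python
# Lowercase substrings that identify some well-known crawlers (illustrative, not exhaustive).
BOT_TOKENS = ("googlebot", "gptbot", "claudebot", "perplexitybot")


def is_known_bot(user_agent):
    """Return True if the User-Agent header contains a known crawler token."""
    ua = (user_agent or "").lower()
    return any(token in ua for token in BOT_TOKENS)


def choose_variant(user_agent):
    """Pick which version of the page to serve for this request."""
    return "prerendered" if is_known_bot(user_agent) else "client_app"


# Example User-Agent values; the exact strings real crawlers send may differ.
gptbot_ua = "Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; GPTBot/1.0; +https://openai.com/gptbot"
browser_ua = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"

print(choose_variant(gptbot_ua))
print(choose_variant(browser_ua))
```

Your web server or middleware would call something like `choose_variant` on each request and return either the cached static HTML or the normal JavaScript application.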

The New Language: Schema Markup (JSON-LD)

If SSR is the delivery mechanism, Structured Data (using the Schema.org vocabulary and the JSON-LD format) is the language of LLMs. Implementing it is arguably the single most important step you can take to communicate with AI.

Think of Schema as a direct API for your content. It allows you to explicitly tell the AI, "This string of text is a product name," "This number is a price," "This date is an event start time," and "This URL identifies the author." You are no longer relying on the AI to guess the meaning of your HTML soup; you are feeding it hard-coded facts.

By implementing comprehensive structured data, you are essentially creating a machine-readable version of your website that exists alongside the human-readable one. This is the key to "grounding" LLMs in your truth. As explained in the Google Search Central guide to structured data, this markup is what allows search engines to categorize and index content with high precision.
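As a minimal sketch of what that machine-readable version can look like, the Python function below builds a Schema.org `Product` with a nested `Offer` and wraps it in the `<script type="application/ld+json">` tag that goes into your page's HTML. The `product_json_ld` name, the product values, and the example.com URL are placeholders. Check the Schema.org and Google documentation for the properties your content type actually needs.

```python
import json


def product_json_ld(name, description, price, currency, url):
    """Return a JSON-LD <script> tag describing a product and its price."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
        },
    }
    # Escape "</" so a value can never close the surrounding <script> tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical product values for illustration.
tag = product_json_ld(
    name="Acme CRM",
    description="A CRM built for small teams.",
    price="29.00",
    currency="USD",
    url="https://example.com/acme-crm",
)
print(tag)
```

Generating the tag from the same data that renders the visible page helps keep the two versions in agreement, so the price a human sees and the price a machine reads cannot drift apart.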

Semantic HTML: The Foundation

Finally, you must return to the basics of Semantic HTML. Every tag on your site should tell the truth about what is inside it.

- Use <nav> for navigation, not <div>.

- Use <article> for your main content, not <div>.

- Use <table> for tabular data (LLMs are exceptionally good at reading tables), not a grid of divs.

This semantic clarity helps the AI's "attention mechanism" focus on the parts of your page that actually contain the answer to the user's query.
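For the table case in particular, here is a small Python sketch that turns rows of data into a real `<table>` with a caption and header cells, rather than a grid of divs. The `render_table` name and the pricing data are hypothetical.

```python
from html import escape


def render_table(caption, headers, rows):
    """Render tabular data as a semantic HTML table with a caption and header row."""
    head = "".join(f'<th scope="col">{escape(h)}</th>' for h in headers)
    body = "\n".join(
        "    <tr>" + "".join(f"<td>{escape(str(cell))}</td>" for cell in row) + "</tr>"
        for row in rows
    )
    return (
        "<table>\n"
        f"  <caption>{escape(caption)}</caption>\n"
        f"  <thead>\n    <tr>{head}</tr>\n  </thead>\n"
        f"  <tbody>\n{body}\n  </tbody>\n"
        "</table>"
    )


# Hypothetical pricing data.
pricing_table = render_table(
    "Plans",
    ["Plan", "Price"],
    [["Starter", "$29"], ["Pro", "$99"]],
)
print(pricing_table)
```

The header cells tell a parser that "$99" is a price belonging to the "Pro" plan, a relationship that a grid of styled divs leaves implicit.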

Conclusion

The web is undergoing a seismic shift. We are moving from a "search-and-click" ecosystem to an "ask-and-answer" one. In this new reality, your website is not just a storefront for humans; it is a data source for machines.

If your site is technically invisible due to heavy JavaScript, client-side rendering, or poor HTML structure, you are writing yourself out of the future of digital discovery. You cannot afford to ignore the "AI Readability Gap."

By embracing technical solutions like Server-Side Rendering and treating Schema markup as a fundamental requirement, you can ensure your brand is not just visible, but truly understood by the AI models that will define the next decade of commerce. The future belongs to those who write for the machine as eloquently as they write for the human.

References

- Google's Official Stance on JavaScript: A comprehensive overview of how Google processes JavaScript and the implications of the "render queue."

  - Source: Google Search Central

  - Link: https://developers.google.com/search/docs/crawling-indexing/javascript/javascript-seo-basics

- The Guide to Structured Data: The definitive documentation on how to implement JSON-LD to help machines understand your content.

  - Source: Google Search Central

  - Link: https://developers.google.com/search/docs/appearance/structured-data/intro-structured-data

- Overview of AI Crawlers: Technical documentation regarding OpenAI's web crawler, GPTBot, and how it accesses web content.

  - Source: OpenAI Platform Documentation

  - Link: https://platform.openai.com/docs/gptbot