Definition

The /llms.txt standard is a proposed web convention, similar in spirit to robots.txt, that gives Large Language Models (LLMs) a curated, Markdown-formatted index of a website's most valuable content. Unlike a traditional sitemap.xml, which lists URLs for crawling, an llms.txt file is designed to guide AI agents to clean, token-efficient text files, free of the HTML boilerplate that confuses ingestion pipelines.

The Problem: The High Cost of HTML Noise

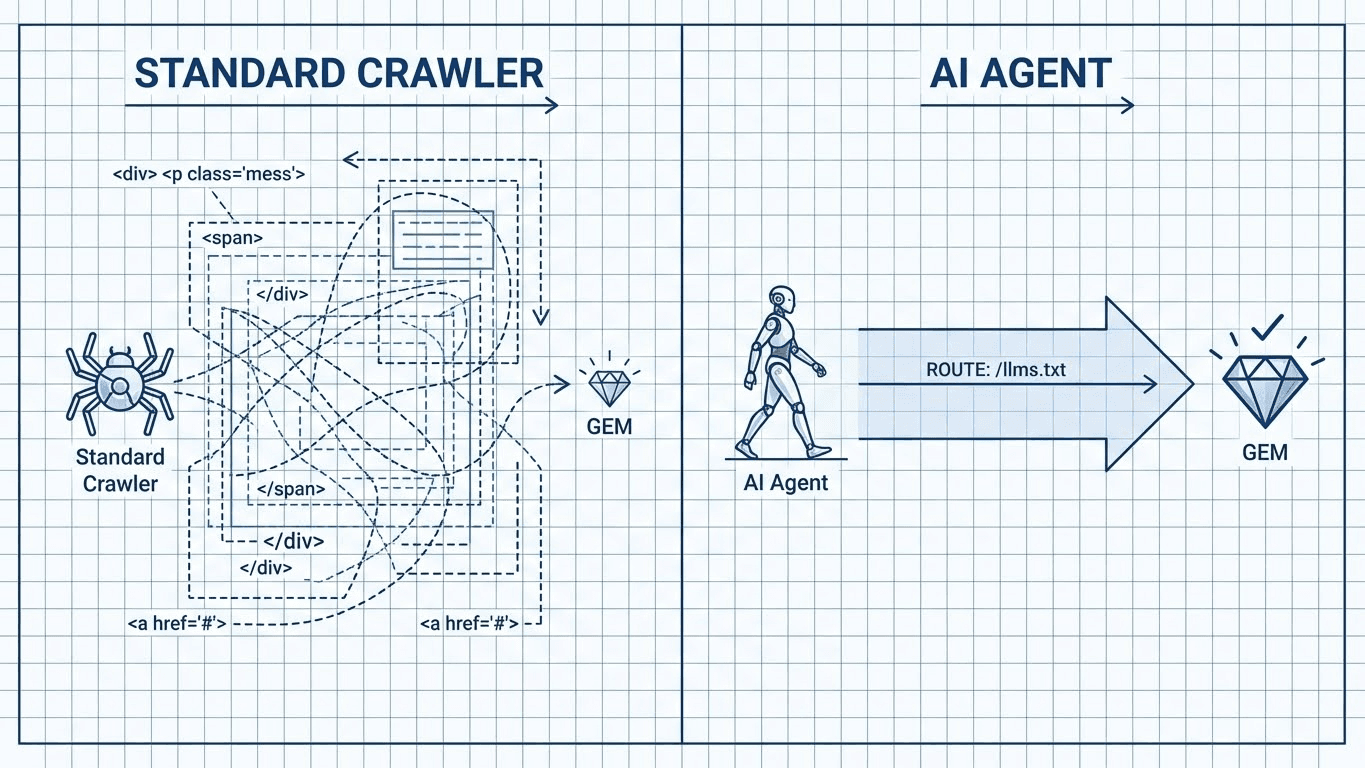

For the past 20 years, we have optimized websites for the Document Object Model (DOM). We built complex hierarchies of divs, scripts, and styles to render visual interfaces for humans.

For an AI crawler, this visual layer is toxic waste.

When a crawler or agent fetches your landing page on behalf of an LLM (like GPT-4 or Claude), the model has to burn valuable context-window tokens processing your navigation bar, your footer links, your tracking pixels, and your CSS classes.

- Token Waste: A standard webpage might be 50 KB of code for 2 KB of actual text, which means roughly 96% of what the model reads is markup.

- Hallucination Risk: As we discussed in our guide on the Chunking Mismatch, messy HTML increases the chance of the ingestion "guillotine" slicing your content in the wrong place.
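To put a number on the token-waste point above, here is a minimal sketch that compares the visible text of a page against its total size. It uses only Python's standard library; the `VisibleTextExtractor` class, the `text_ratio` helper, and the sample page are illustrative assumptions, and bytes are only a rough proxy for tokens.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text that is not inside <script> or <style> elements."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text found outside script/style blocks.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def text_ratio(html: str) -> float:
    """Return visible-text bytes divided by total page bytes."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks)
    total = len(html.encode("utf-8"))
    return len(text.encode("utf-8")) / total if total else 0.0


# Hypothetical page used only for illustration.
sample_page = (
    "<html><head><style>.nav{color:red}</style>"
    "<script>track('pageview')</script></head>"
    "<body><nav><a href='/'>Home</a></nav>"
    "<article><p>Our pricing starts at $10.</p></article></body></html>"
)
print(f"{text_ratio(sample_page):.0%} of the bytes are visible text")
```

Running this against your own templates gives a quick signal of how much of each page is content versus markup. Note that it still counts navigation text such as "Home" as visible text, so the real signal is usually lower than the ratio suggests.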

If you rely solely on standard crawling, you are asking the AI to dig for gold in a landfill. The /llms.txt standard solves this by handing the AI a map directly to the gold vault.

The Solution: The Markdown Sitemap Strategy

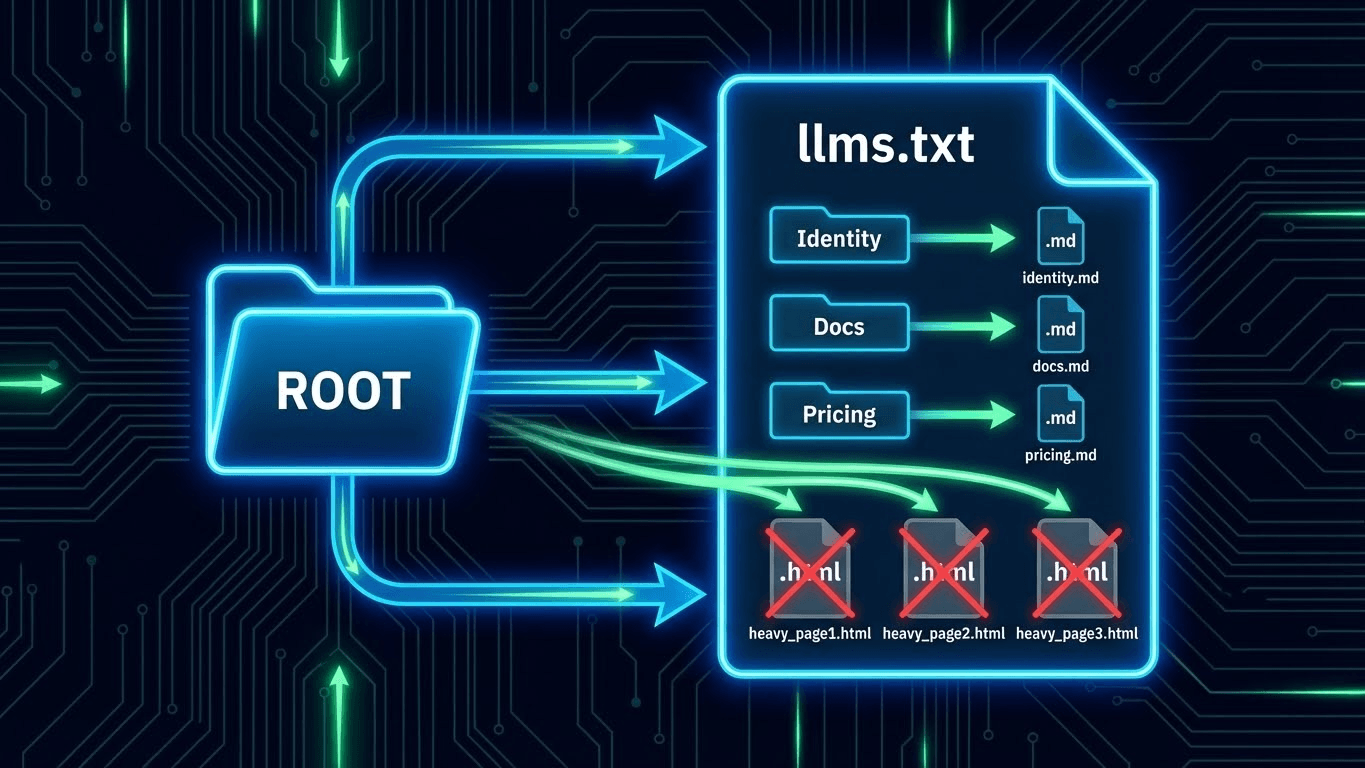

The core philosophy of the /llms.txt standard is "Text-First Delivery."

Instead of forcing the AI to scrape your HTML pages, you provide a text file at the root of your domain (yourdomain.com/llms.txt). This file lists your core entities and documentation, but crucially, it points to Markdown (.md) or plain text versions of those pages if they exist.

Why Markdown?

Markdown is the native tongue of LLMs. It represents structure (# H1, **Bold**, - List) without the overhead of HTML tags. By serving Markdown, you maximize Information Density—ensuring every token the AI reads adds semantic value.

Technical Implementation: Building Your File

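A robust /llms.txt file follows a specific hierarchy: a single H1 with the site or project name, a short blockquote summary, and H2 sections that each contain a list of links. It is not just a list of links; it is a Semantic Table of Contents.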

Step 1: File Location & Permissions

Place the file at the root: https://example.com/llms.txt.

Ensure your robots.txt allows access to it.

- Note: This does not replace sitemap.xml (which search engines such as Google and Bing use to discover URLs). This is an additive layer for Answer Engine Optimization.
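One way to confirm that robots.txt does not block the file is to run your rules through Python's standard `urllib.robotparser`. This is a minimal sketch: the `is_allowed` helper, the `ExampleBot` user agent, and the sample rules are assumptions for illustration, so substitute your real robots.txt and the crawler names you care about.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check whether the given robots.txt rules let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical rules: block the admin area, explicitly allow llms.txt.
sample_robots = """\
User-agent: *
Disallow: /admin/
Allow: /llms.txt
"""

print(is_allowed(sample_robots, "ExampleBot", "https://example.com/llms.txt"))
print(is_allowed(sample_robots, "ExampleBot", "https://example.com/admin/users"))
```

A blanket `Disallow: /` rule would make the first check fail, which is the most common way an llms.txt file ends up unreachable.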

Step 2: The Structure

The file should be divided into sections that mirror your Entity Home structure. Use H2 headers within the text file to group content.

Recommended Sections:

- Core Identity: Who you are (Link to your Entity Home).

- Product Documentation: Deep technical specs (Link to .md files).

- Pricing & Policies: The "Truth" data to prevent Brand Hallucinations.
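If your pages already live in a CMS or a content inventory, the sectioned structure described above can be generated rather than hand-written. The sketch below is one possible approach, not an official tool: the `Link` dataclass, the `render_llms_txt` function, and the example.com URLs are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Link:
    title: str
    url: str
    note: str = ""


def render_llms_txt(site_name: str, summary: str, sections: dict[str, list[Link]]) -> str:
    """Render an H1 title, a blockquote summary, and one H2 section per group."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for link in links:
            entry = f"- [{link.title}]({link.url})"
            if link.note:
                entry += f": {link.note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical content inventory mirroring the recommended sections.
sections = {
    "Core Identity": [
        Link("Entity Home", "https://example.com/company-profile.md", "Who we are."),
    ],
    "Product Documentation": [
        Link("API Reference", "https://example.com/docs/api.md", "Technical specs."),
    ],
    "Pricing & Policies": [
        Link("Pricing", "https://example.com/pricing.md"),
    ],
}
print(render_llms_txt("Example Co", "Curated pages for AI ingestion.", sections))
```

Generating the file from data keeps it in sync with the site: when a page is added or retired, the index changes in the same deploy.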

Step 3: The "Concise" Flag

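The proposal gives special meaning to a section titled "Optional": links listed there are secondary and can be skipped when a shorter context is needed. Many sites also use it to point to a "concise" summary, a single file (commonly named llms-full.txt) that merges your key content for rapid ingestion. Keeping that file under roughly 10,000 tokens is a practical target rather than a limit set by the proposal.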
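A merged summary file with a token budget could be assembled roughly like this. The sketch assumes a crude heuristic of about four characters per token for English text; the `estimate_tokens` and `build_concise_file` names are hypothetical, and a real tokenizer for your target model would give more accurate counts.

```python
def estimate_tokens(text: str) -> int:
    """Very rough estimate: about 4 characters per token (an assumption)."""
    return max(1, len(text) // 4)


def build_concise_file(documents: list[tuple[str, str]], budget: int = 10_000) -> str:
    """Merge (title, body) pairs in priority order until the budget is spent."""
    parts = []
    used = 0
    for title, body in documents:
        section = f"# {title}\n\n{body.strip()}\n"
        cost = estimate_tokens(section)
        if used + cost > budget:
            break  # remaining documents are lower priority; leave them out
        parts.append(section)
        used += cost
    return "\n".join(parts)


# Hypothetical documents, listed from most to least important.
documents = [
    ("Company Profile", "Founded to measure how AI systems read websites."),
    ("Pricing", "Plans are billed monthly. See the pricing page for tiers."),
]
print(build_concise_file(documents))
```

Because documents are added in priority order, the pages you most want quoted accurately (identity, pricing, policies) should come first in the list.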

Comparison: sitemap.xml vs. llms.txt

| Feature | sitemap.xml (Traditional) | llms.txt (The New Standard) |
| --- | --- | --- |
| Target Audience | Googlebot, Bingbot | ChatGPT, Claude, Perplexity |
| File Format | XML (Extensible Markup Language) | Markdown (Human/AI Readable) |
| Content Goal | Indexing (Find the URL) | Ingestion (Read the Content) |
| Link Destination | HTML Pages (Heavy) | Markdown/Text Files (Light) |
| Context | URL + Last Modified Date | Title + Description + Context |

Code Example: A Perfect /llms.txt File

Here is a boilerplate you can deploy today. Copy this structure, modify the links, and upload it to your root directory.

```markdown
# Website AI Score - LLM Sitemap

> This file provides a curated list of pages optimized for AI ingestion.

## Core Identity

- [Entity Home](https://websiteaiscore.com/company-profile.md): Definitive data on our founding, mission, and leadership.
- [AI Score Tool](https://websiteaiscore.com/tools/ai-score.md): Documentation on our proprietary scoring methodology.

## Technical Guides (AEO)

- [Search Everywhere Strategy](https://websiteaiscore.com/blog/the-aeo-playbook.md): Full technical playbook for AEO.
- [Token Optimization](https://websiteaiscore.com/blog/context-window-economy.md): How to optimize context windows.
- [Rank Tracking vs Share of Model](https://websiteaiscore.com/blog/share-of-model.md): New metrics for 2025.

## Pricing & Legal

- [Pricing Tiers](https://websiteaiscore.com/pricing.md): Current pricing tables (Anchored Data).
- [Terms of Service](https://websiteaiscore.com/legal/tos.txt): Plain text legal terms.

## Optional

- [Full Concise Summary](https://websiteaiscore.com/llms-full.txt): A single text file containing all above content merged for RAG.
```

Developer Note: If you do not have a CMS that generates .md files automatically, you can point these links to your standard HTML pages. However, ensure those HTML pages are strictly formatted with semantic tags (<article>, <table>) as per our HTML Formatting Guide to ensure they are parsed correctly.
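Before uploading, it can help to check that the file parses the way a consumer is likely to read it. The following is a minimal sketch of such a check, assuming the layout used in the example above (one H1, a blockquote summary, H2 sections with link lists); the `parse_llms_txt` function and the sample text are hypothetical, and real consumers may parse the file differently.

```python
import re

# Matches "- [Title](url)" with an optional ": note" suffix.
LINK_RE = re.compile(
    r"^-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^)\s]+)\)(?::\s*(?P<note>.*))?$"
)


def parse_llms_txt(text: str) -> dict:
    """Extract the title, summary, and per-section links from llms.txt text."""
    result = {"title": None, "summary": None, "sections": {}}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("# ") and result["title"] is None:
            result["title"] = line[2:].strip()
        elif line.startswith("> ") and result["summary"] is None:
            result["summary"] = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            result["sections"][current] = []
        elif current is not None:
            match = LINK_RE.match(line)
            if match:
                result["sections"][current].append(match.groupdict())
    return result


# Hypothetical file content used only for illustration.
sample = """# Example Co

> Curated pages for AI ingestion.

## Docs

- [Pricing](https://example.com/pricing.md): Current pricing tables.
- [Terms](https://example.com/legal/tos.txt)
"""

parsed = parse_llms_txt(sample)
print(parsed["title"], list(parsed["sections"]))
```

An empty section or a missing title in the parsed result is a quick hint that a heading level or link format is off.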

Key Takeaways

- Reduce Token Cost: llms.txt creates a friction-free path for AI crawlers by pointing them to clean text instead of noisy HTML.

- Control the Context: By curating this list, you signal which pages the AI should prioritize, steering it away from low-value "tag" or "category" pages.

- Markdown is King: Whenever possible, serve content in Markdown format to maximize ingestion speed and accuracy.

- Parallel Infrastructure: This does not replace sitemap.xml; it runs parallel to it specifically for the Search Everywhere ecosystem.

- Future Proofing: Agents like Perplexity are already prioritizing sites that make data retrieval easy. This is a low-effort, high-reward signal of technical competence.

References & Further Reading

- llms.txt Proposal: The Unofficial Standard for AI Crawlers. Documentation on the emerging convention for text-first delivery. Link: https://llmstxt.org/

- OpenAI Documentation: Optimizing Context Windows. Best practices for feeding data to GPT models, emphasizing token efficiency.

- Google Search Central: Robots.txt Specifications. The foundational standard for crawler directives that inspired llms.txt.