For twenty years, "bounce rate" was the metric of doom. If a human visitor landed on your page and left within three seconds, you failed. You tweaked your design, improved your load times, and polished your headlines to keep them there.

But today, in the era of Generative Engine Optimization (GEO), there is a new, invisible bounce rate that is far more dangerous: Context Truncation.

When an AI crawler like GPTBot or a Retrieval-Augmented Generation (RAG) system processes your website, it doesn't read like a human. It reads in "chunks." It has a limited "attention span" governed by a token budget. If your core value proposition, your pricing, or your unique answer is buried in paragraph four, underneath a fluffy introduction about the history of your industry, it may effectively not exist as far as the model is concerned.

The system may truncate the text, underweight the middle, and fall back on your competitor's better-optimized content, or "hallucinate" an answer outright. The underweighting isn't just a theory; it is a documented limitation known as the "Lost-in-the-Middle" effect.

In this guide, we will break down the physics of the "Context Window Economy," why AI models struggle to read linear narratives, and how you can engineer your content using the "Inverted Pyramid for Vectors" to ensure your brand is never truncated again.

The Physics of "Lost-in-the-Middle"

To understand why your content is failing to appear in AI answers, you first have to understand how a Large Language Model (LLM) "reads."

Humans read linearly. We appreciate a narrative arc: a hook, an introduction, a rising action, and a conclusion. We are taught to tell stories.

LLMs, by contrast, read via an Attention Mechanism. They ingest a long sequence of text (converted into "tokens") and weigh how relevant each token is to the others, including the user's query. That attention, however, is not evenly distributed across the document.

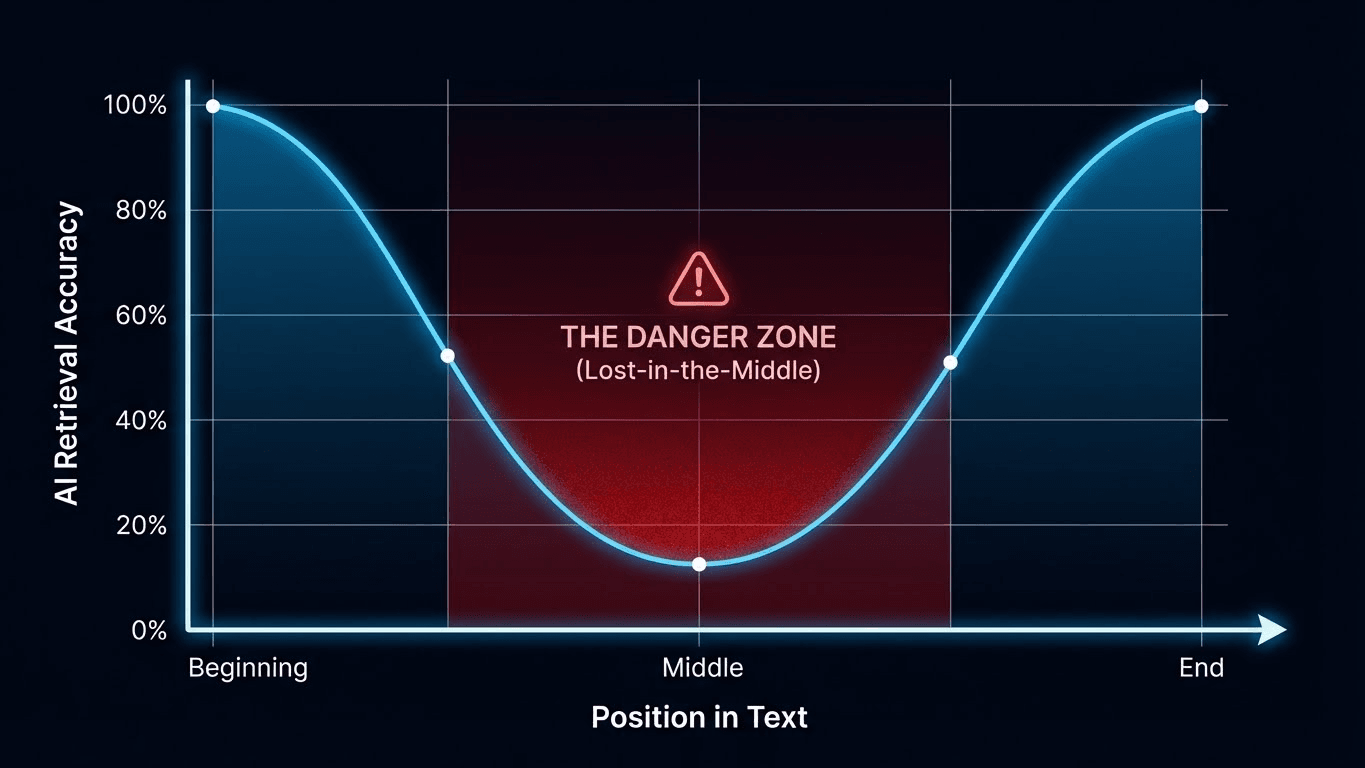

The U-Shaped Curve

In a landmark paper titled "Lost in the Middle: How Language Models Use Long Contexts" (Liu et al., 2023), researchers documented a striking limitation in the LLMs they tested. When a model is given a long context, it is most accurate at using information placed at the very beginning (Primacy Bias) or the very end (Recency Bias).

But for information located in the middle? Performance drops sharply.

If your article is 2,000 words long, and the specific answer to the user's question is hidden around word 800, the probability of the AI successfully extracting that answer falls. In the paper's multi-document question-answering tests, accuracy for at least one model dropped by more than 20% when the relevant passage sat in the middle of the context rather than at its edges. The model has, in effect, a blind spot in the center of its context window.

The "Render Budget" vs. The "Token Budget"

We often talk about the "Render Budget" (the computational cost of executing JavaScript). Now, you must also worry about the Token Budget.

Many RAG-style AI search systems (the category that includes products like Perplexity and Google's AI Overviews) retrieve content from Vector Databases. When your content is indexed, it is often chopped into smaller "chunks" (e.g., 512 tokens per chunk) so that each chunk can be embedded and stored efficiently.

- The Cut-Off: If your introductory storytelling takes up 600 tokens, and the vector chunk size is 512, your actual answer is pushed to "Chunk 2."

- The Failure Mode: If the retrieval system pulls "Chunk 1" because it contains your Title and H1 (high relevance), it will see no answer inside. It may then discard your site entirely rather than fetching "Chunk 2."
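The cut-off described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular search product chunks pages: it assumes fixed-size chunks with no overlap, and it uses whitespace-separated words as a stand-in for real tokens. The function names are hypothetical.

```python
def chunk_tokens(tokens, chunk_size=512):
    """Split a token list into consecutive fixed-size chunks (no overlap)."""
    return [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]


def chunk_index_of(tokens, phrase_tokens, chunk_size=512):
    """Return the 0-based index of the chunk where the phrase starts, or None."""
    n = len(phrase_tokens)
    for start in range(len(tokens) - n + 1):
        if tokens[start:start + n] == phrase_tokens:
            return start // chunk_size
    return None


# Assumption: one whitespace-separated word counts as one token.
intro = ["story"] * 600                      # 600 "tokens" of introduction
answer = "starts at $29 per user".split()    # the fact the reader wants
page = intro + answer

num_chunks = len(chunk_tokens(page))
answer_chunk = chunk_index_of(page, answer)
print(num_chunks, answer_chunk)
```

With a 600-token introduction and a 512-token chunk size, the answer starts in the chunk with index 1, which is "Chunk 2" in the wording above. Many real pipelines add overlap between chunks, which softens the problem but does not remove it.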

The Solution: Inverted Pyramid Optimization for Vectors

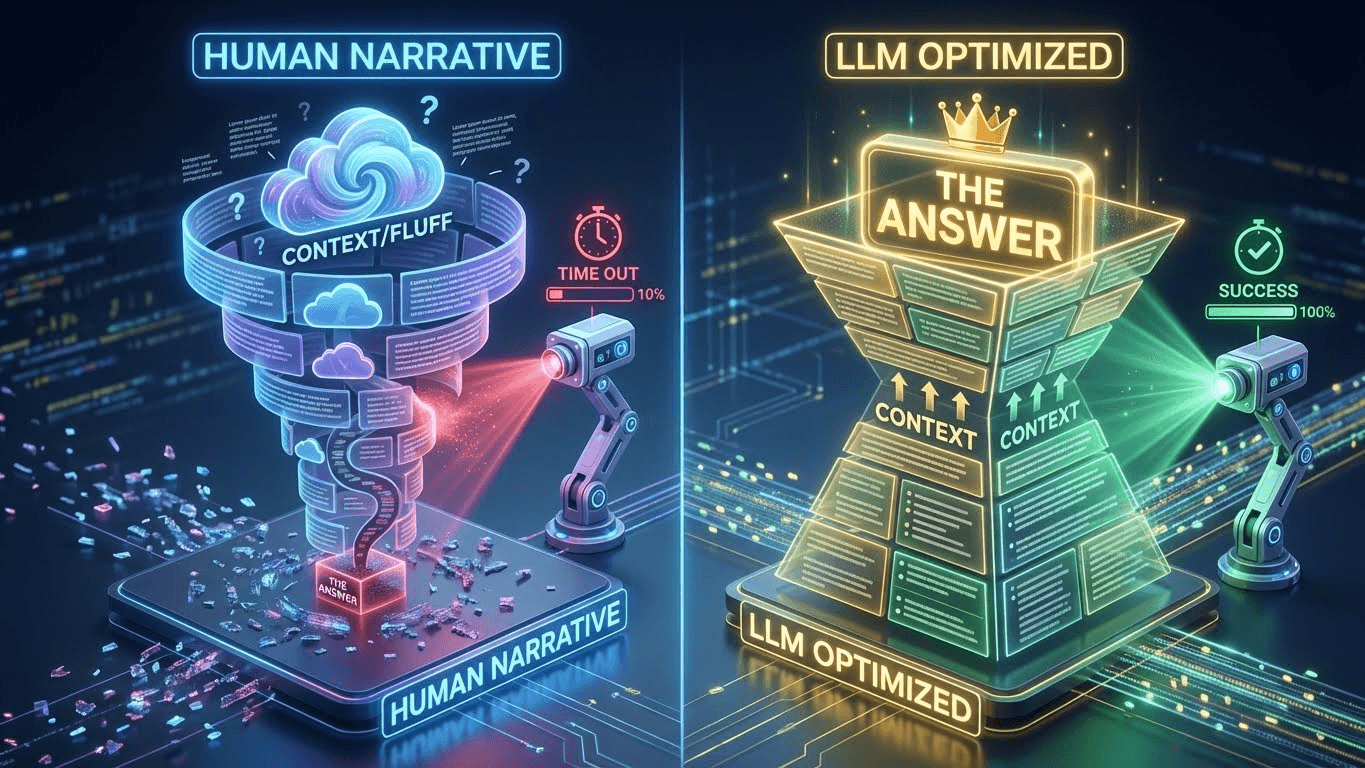

So, how do you fix this? You must fundamentally change the way you write for the web. You must move from "Storytelling" to "Semantic Front-Loading."

This strategy is what we call Inverted Pyramid Optimization for Vectors.

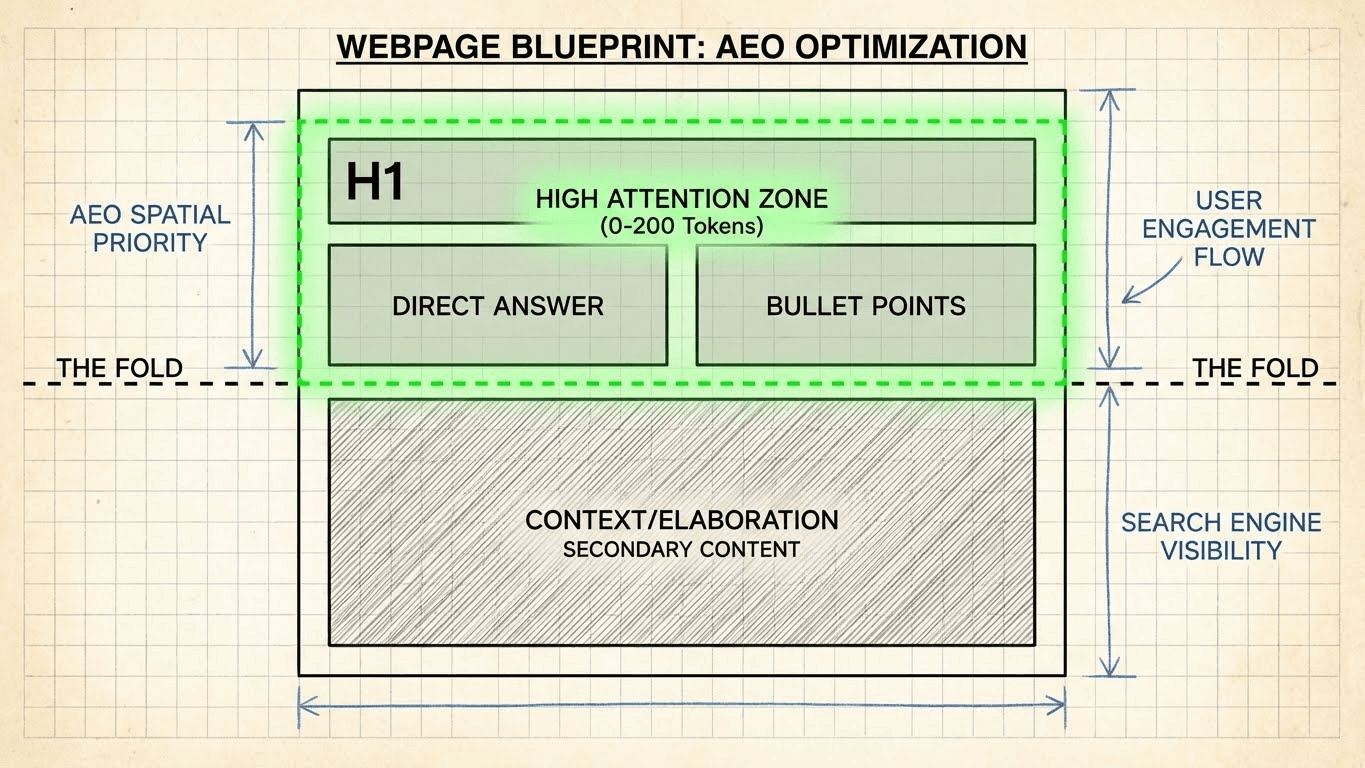

Journalists have used the Inverted Pyramid for a century: Who, what, where, when, and why must appear in the first paragraph. In GEO, we take this further. We need to place the Definitive Answer, the Core Entities, and the Key Definitions within the first 200 tokens (approximately 150 words).

Why 200 Tokens?

The first 200 tokens are the "VIP Section" of your content.

- Snippet Generation: AI summaries such as Google's AI Overview appear to draw heavily on a page's opening text, although Google does not document a fixed cutoff.

- Primacy Bias: As per the "Lost-in-the-Middle" research, LLMs use information at the start of their context more reliably than information in the middle.

- Vector Chunking: This ensures your topic and your answer stay in the same "chunk," maximizing your "retrieval probability."
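To check a draft against the 200-token target, a rough script is enough. The sketch below assumes the same rule of thumb used above (200 tokens is about 150 English words, so about 0.75 words per token); a real tokenizer will give different counts. The function names and the product name `ExampleCRM` are placeholders.

```python
WORDS_PER_TOKEN = 0.75  # assumption: rough rule of thumb for English text


def estimate_tokens(text):
    """Estimate the token count of a text from its word count."""
    return round(len(text.split()) / WORDS_PER_TOKEN)


def lead_window(text, token_budget=200):
    """Return roughly the first `token_budget` tokens of the text, as words."""
    word_budget = int(token_budget * WORDS_PER_TOKEN)
    return " ".join(text.split()[:word_budget])


def answer_in_lead(text, answer, token_budget=200):
    """True if the answer phrase appears inside the lead window (case-insensitive)."""
    return answer.lower() in lead_window(text, token_budget).lower()


# Two hypothetical drafts of the same page.
storytelling = "filler " * 800 + "The best CRM is ExampleCRM."
front_loaded = "The best CRM is ExampleCRM. " + "filler " * 800

print(answer_in_lead(storytelling, "ExampleCRM"))
print(answer_in_lead(front_loaded, "ExampleCRM"))
```

The storytelling draft fails the check because its answer sits after 800 words of filler; the front-loaded draft passes.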

The "Fact-First" Architecture

Every article or landing page you publish should follow this strict hierarchy:

- H1: The Direct Question or Topic.

- The "TL;DR" Block: A 2-3 sentence direct answer or summary.

- The Evidence: Data tables or bullet points supporting the answer.

- The Context: The detailed explanation (formerly your introduction).

Side-by-Side: Old SEO vs. New GEO

Let’s look at a concrete example to see the difference. Imagine you are selling software and your article targets the query: "What is the best CRM for a 5-person real estate team?"

Here is how a traditional SEO introduction compares to a GEO-optimized introduction.

🔴 The Old Way: Storytelling (Bad for AI)

H1: Best CRM for Real Estate in 2025

Intro: When running a small business, efficiency is everything. I remember when my uncle started his real estate agency back in 2010, he used sticky notes to track every lead. It was a nightmare. But today, technology has changed the game. There are so many options on the market, from Salesforce to HubSpot, that it can be overwhelming to choose. In this guide, we will explore the history of CRMs, why you need one, and eventually, which one is right for your specific needs... (The answer is buried 800 words later).

- AI Analysis:

- Token Waste: The first 100 tokens are about "my uncle" and "sticky notes."

- Entity Density: Low. It mentions generic brands but no specific features or differentiators.

- Result: The AI classifies this as a "generic blog post." It likely truncates the read before finding the recommendation.

🟢 The New Way: Semantic Front-Loading (Perfect for AI)

H1: Best CRM for Small Real Estate Teams (3-10 Agents)

Direct Answer: The best CRM for small real estate teams in 2025 is [Your Product], followed by HubSpot and Pipedrive.

Key Takeaways:

- Top Pick: [Your Product] (Best for automated follow-ups).

- Pricing: Starts at $29/user/month.

- Critical Feature: Native MLS Integration and SMS automation.

Context: For small teams of under 10 agents, enterprise tools like Salesforce are often too complex. This guide compares the top 3 options based on Price-to-Value, MLS Connectivity, and Ease of Use.

- AI Analysis:

- Token Efficiency: The answer is in the very first sentence.

- Entity Salience: Explicitly links "Real Estate" to "MLS Integration" and "SMS automation" immediately.

- Structure: Uses bullets (LLMs excel at parsing list structures) to define attributes.

- Result: The AI extracts the snippet: "The best CRM for small real estate teams is [Your Product] because of its native MLS integration." You win the citation.

Technical Implementation: The data-nosnippet Trick

Advanced GEO practitioners can use HTML attributes to steer snippet generation toward the right content.

Using data-nosnippet

Google supports the data-nosnippet attribute on <span>, <div>, and <section> elements. You can wrap your "fluff" (the story about your uncle) in a <span> carrying this attribute.

- Effect: It tells Google, "Do not use this text for the snippet."

- Result: Google has to build the description from the rest of your content (your actual data), which raises the density of helpful content in what gets shown. Other crawlers are not obliged to honor the attribute.
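To see what is left once the marked text is excluded, you can simulate the filtering yourself. The sketch below uses Python's standard `html.parser` and is only an approximation of the idea, not Google's implementation: it assumes well-formed HTML with properly closed elements, and the class name `SnippetTextExtractor` is hypothetical.

```python
from html.parser import HTMLParser

VOID_TAGS = {"br", "img", "hr", "input", "meta", "link"}  # elements with no end tag


class SnippetTextExtractor(HTMLParser):
    """Collect page text, skipping anything inside a data-nosnippet element."""

    def __init__(self):
        super().__init__()
        self.stack = []   # one bool per open element: is it inside nosnippet?
        self.parts = []   # text fragments that remain eligible for a snippet

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        inherited = self.stack[-1] if self.stack else False
        self.stack.append(inherited or "data-nosnippet" in dict(attrs))

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.stack:
            self.stack.pop()

    def handle_data(self, data):
        excluded = self.stack[-1] if self.stack else False
        if not excluded and data.strip():
            self.parts.append(data.strip())


html = (
    "<h1>Best CRM for Small Real Estate Teams</h1>"
    "<p><span data-nosnippet>My uncle tracked leads on sticky notes.</span> "
    "Pricing starts at $29/user/month.</p>"
)
parser = SnippetTextExtractor()
parser.feed(html)
parser.close()
snippet_text = " ".join(parser.parts)
print(snippet_text)
```

The story about the uncle drops out, and only the heading and the pricing sentence remain as snippet candidates.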

The <summary> Tag

For FAQ sections, use the HTML <details> and <summary> tags.

- Why: This creates a clean "Question/Answer" pair in the DOM (Document Object Model).

- LLM Benefit: A scraper can easily isolate the text inside a <summary> element, which typically holds the question or a high-level label, and pair it with the answer that follows inside the same <details> block.
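A short parser shows why this markup is easy to consume. The following is a minimal sketch using Python's standard `html.parser`; it assumes flat (non-nested) <details> blocks, and `FaqExtractor` is a hypothetical name, not a real scraper's API.

```python
from html.parser import HTMLParser


class FaqExtractor(HTMLParser):
    """Pair each <summary> (question) with the rest of its <details> (answer)."""

    def __init__(self):
        super().__init__()
        self.pairs = []           # list of (question, answer) tuples
        self.in_details = False
        self.in_summary = False
        self.question = []
        self.answer = []

    def handle_starttag(self, tag, attrs):
        if tag == "details":
            self.in_details, self.question, self.answer = True, [], []
        elif tag == "summary" and self.in_details:
            self.in_summary = True

    def handle_endtag(self, tag):
        if tag == "summary":
            self.in_summary = False
        elif tag == "details" and self.in_details:
            self.pairs.append((" ".join(self.question), " ".join(self.answer)))
            self.in_details = False

    def handle_data(self, data):
        text = data.strip()
        if not text or not self.in_details:
            return
        (self.question if self.in_summary else self.answer).append(text)


html = (
    "<details><summary>Does it integrate with MLS?</summary>"
    "<p>Yes, MLS integration is native.</p></details>"
)
faq = FaqExtractor()
faq.feed(html)
faq.close()
print(faq.pairs)
```

Because the question and answer boundaries are explicit in the markup, the extractor needs no heuristics to decide where one ends and the other begins.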

Optimizing for the Vector Space

Finally, we must discuss Vector Proximity.

When your content is ingested, it is turned into numbers. As a toy illustration, "Real Estate" might be converted to the vector [0.82, 0.11] and "CRM" to [0.81, 0.12]; real embeddings have hundreds or thousands of dimensions. Because these vectors are close, the system treats the two phrases as related.

However, if you use vague language, you drift away in vector space.

- Vague: "Our tool helps you sell more houses." (Vector: Generic Sales).

- Precise: "Our Real Estate CRM features IDX Integration to capture Zillow Leads." (Vector: Highly Specific Niche).
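"Close" in vector space is usually measured with cosine similarity. The sketch below applies it to the two toy vectors quoted above; the third vector, standing in for a vague "generic sales" phrase, is invented purely for contrast and does not come from any real embedding model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


real_estate = [0.82, 0.11]     # toy vector from the text
crm = [0.81, 0.12]             # toy vector from the text
generic_sales = [0.20, 0.90]   # hypothetical vector for a vague phrase

close_score = cosine_similarity(real_estate, crm)
far_score = cosine_similarity(real_estate, generic_sales)
print(close_score, far_score)
```

The first pair scores very near 1.0, while the vague phrase scores far lower against the same target. That gap is what "drifting away in vector space" means in practice.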

The Action Plan:

- Identify your Core Entity: (e.g., "Real Estate CRM").

- Identify Attribute Entities: (e.g., "IDX," "MLS," "Zillow," "Drip Campaigns").

- Front-Load the Cluster: Ensure these words appear together in the first paragraph. This creates a "heavy" gravity well in the vector space, making it much harder for the AI to misinterpret your page's topic.
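The action plan can be turned into a quick self-check. This is a rough sketch under simple assumptions: paragraphs are separated by blank lines, and an entity counts as present if its text appears anywhere in the first paragraph (plain substring matching, so a short entity like "MLS" could match inside a longer word). The function name is hypothetical.

```python
def entity_coverage(page_text, entities):
    """Return (fraction of entities found, missing entities) for the first paragraph."""
    if not entities:
        return 1.0, []
    first_paragraph = page_text.strip().split("\n\n")[0].lower()
    missing = [e for e in entities if e.lower() not in first_paragraph]
    found = len(entities) - len(missing)
    return found / len(entities), missing


page = (
    "Our Real Estate CRM features IDX Integration to capture Zillow Leads.\n\n"
    "Later sections cover MLS sync and drip campaigns."
)
entities = ["Real Estate CRM", "IDX", "MLS", "Zillow", "Drip Campaigns"]

coverage, missing = entity_coverage(page, entities)
print(coverage, missing)
```

Here three of the five entities appear in the opening paragraph; "MLS" and "Drip Campaigns" only show up later, so they are the ones to pull forward.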

Conclusion: Don't Bury the Lede

The era of "reading for pleasure" on the commercial web is over. Robots are your primary audience, and they are impatient, expensive to run, and prone to forgetting the middle of the story.

To survive the Context Window Economy, you must be ruthless with your structure.

- Audit your top 10 pages.

- Read the first 150 words.

- Do you answer the user's question?

If the answer is "No," you are not just boring the user; you are invisible to the machine. Flip the pyramid. Front-load the value. Make sure that even if the AI stops reading after 200 tokens, it knows exactly who you are and why you matter.

References

- The "Lost in the Middle" Paper: Liu, N. F., et al. (2023). Lost in the Middle: How Language Models Use Long Contexts. This Stanford/Berkeley/Samaya paper documents the U-shaped accuracy curve in the LLMs it tested.

- Source: arXiv

- Link: https://arxiv.org/abs/2307.03172

- Tokenization and Context Windows: A technical explanation of how LLMs process text chunks and the limits of context windows.

- Source: OpenAI Developer Platform

- Link: https://platform.openai.com/tokenizer

- Google Search Central on Snippets: Documentation on how to use data-nosnippet to control what Google displays.

- Source: Google Search Central

- Link: https://developers.google.com/search/docs/crawling-indexing/special-tags

- Vector Embeddings Guide: An overview of how text is converted to numbers and why semantic proximity matters.

- Source: Pinecone Learning Center

- Link: https://www.pinecone.io/learn/vector-embeddings/