Definition

Table Optimization for LLMs is the process of structuring tabular data in a format that minimizes token usage while maximizing semantic clarity for Large Language Models. Specifically, it involves choosing between Markdown Tables (pipe-delimited syntax) and HTML Tables (<table> tags) based on the complexity of the data, to ensure that RAG (Retrieval-Augmented Generation) pipelines can ingest, parse, and cite the data without hallucination or truncation.

The Problem: The "Token Tax" of HTML Tables

For decades, we have used the standard HTML <table> element to display data. It offers rich styling, merged cells (rowspan/colspan), and accessibility features.

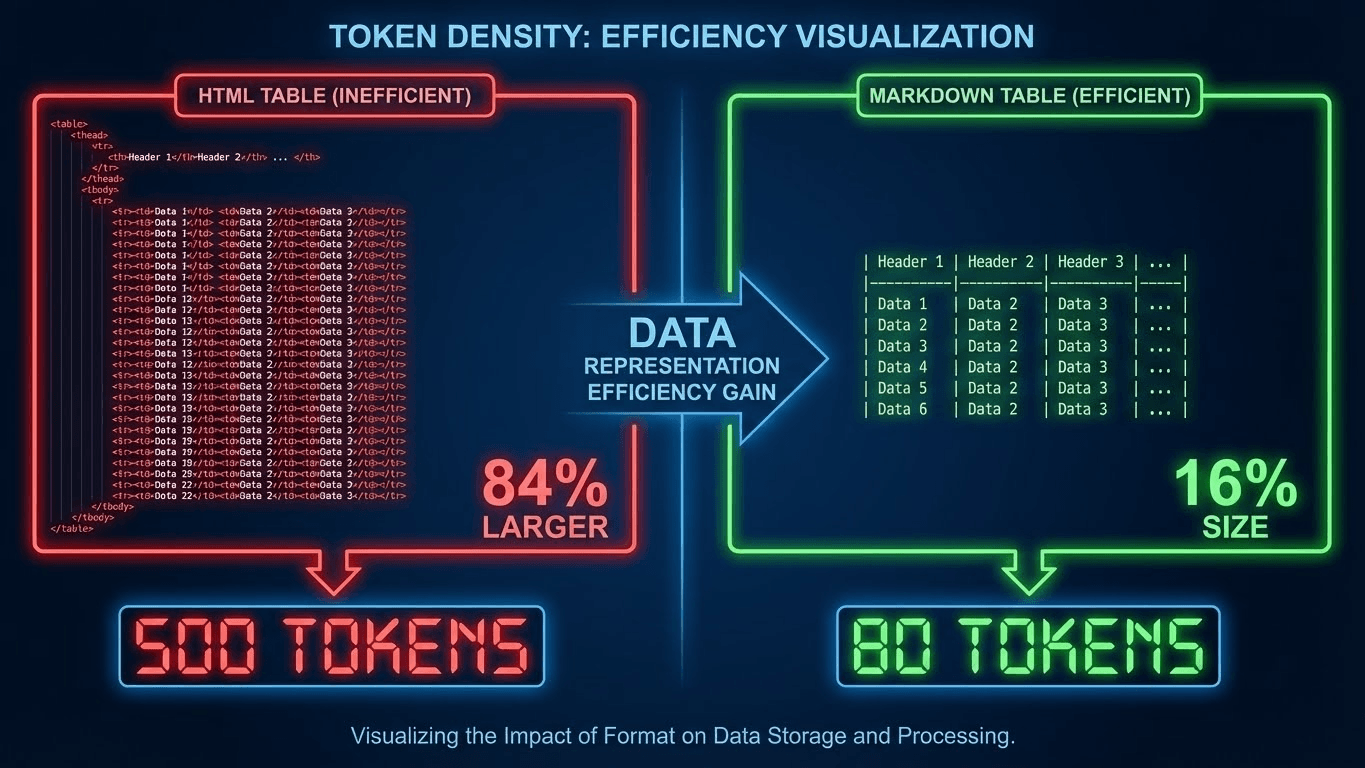

However, for an AI ingestion pipeline, standard HTML tables impose a massive "Token Tax."

Consider a simple 3x3 table.

- HTML: Requires <table>, <thead>, and <tbody> wrappers, a <tr>...</tr> pair for every row, and an opening and closing <th> or <td> tag for every single cell.

- Markdown: Requires only pipes | and hyphens -.
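To make the difference concrete, here is a minimal sketch that renders the same 3x3 data both ways and compares their size. The sample rows and the `rough_token_count` helper are assumptions for illustration: the helper counts words and punctuation marks as a crude stand-in for a real tokenizer, so treat the ratio as indicative only.

```python
import re

# Hypothetical sample data: one header row plus two data rows, three columns.
ROWS = [
    ["Feature", "Basic", "Pro"],
    ["Users", "1", "5"],
    ["Price", "$10", "$50"],
]

def to_html(rows):
    """Render rows as a minimal HTML table (no attributes, no whitespace)."""
    head = "".join(f"<th>{c}</th>" for c in rows[0])
    body = "".join(
        "<tr>" + "".join(f"<td>{c}</td>" for c in r) + "</tr>" for r in rows[1:]
    )
    return f"<table><thead><tr>{head}</tr></thead><tbody>{body}</tbody></table>"

def to_markdown(rows):
    """Render rows as a GFM pipe table."""
    lines = ["| " + " | ".join(rows[0]) + " |"]
    lines.append("| " + " | ".join("---" for _ in rows[0]) + " |")
    lines += ["| " + " | ".join(r) + " |" for r in rows[1:]]
    return "\n".join(lines)

def rough_token_count(text):
    """Crude proxy, NOT a real tokenizer: each word and each
    punctuation character counts as one token."""
    return len(re.findall(r"\w+|[^\w\s]", text))

html, md = to_html(ROWS), to_markdown(ROWS)
html_tokens = rough_token_count(html)
md_tokens = rough_token_count(md)

print(f"HTML:     {len(html)} chars, ~{html_tokens} tokens")
print(f"Markdown: {len(md)} chars, ~{md_tokens} tokens")
print(f"Ratio:    {html_tokens / md_tokens:.1f}x")
```

For real measurements, swap the proxy for the tokenizer your target model actually uses; the gap also widens once the HTML carries attributes.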

The Impact on RAG:

- Context Window Waste: An HTML table can use several times as many tokens as the equivalent Markdown (roughly 3x-5x as a rule of thumb; the exact ratio depends on the tokenizer and on how many attributes the markup carries). If you have a long pricing sheet, the HTML version might push your chunk over the limit, causing the "Guillotine Effect" (truncation).

- Parsing Failure: RAG splitters that cut on character counts and separators (like LangChain's RecursiveCharacterTextSplitter) are not table-aware, so they often fail to chop HTML tables cleanly. They might cut a table in the middle of a <tr>, leaving the AI with a list of orphaned <td> values (e.g., "$50") with no header context (e.g., "Price").

- Hallucination: When an LLM sees a broken HTML structure, it may try to "auto-complete" the missing tags, which can misalign columns and attribute a value to the wrong header.
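The parsing failure described above is easy to reproduce. The sketch below uses a deliberately naive fixed-size splitter (`split_fixed`, a hypothetical stand-in for any structure-unaware splitter, not LangChain's actual algorithm) on a made-up pricing table, then flags every chunk that contains data cells but no header cells.

```python
def split_fixed(text, chunk_size):
    """Naive splitter: cut every chunk_size characters, ignoring structure."""
    return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]

def is_orphaned(chunk):
    """A chunk is orphaned if it has data cells but no header cells."""
    return "<td>" in chunk and "<th>" not in chunk

# Hypothetical pricing table; the header row appears only once, at the top.
html_table = (
    "<table><thead><tr><th>Plan</th><th>Price</th></tr></thead><tbody>"
    + "".join(f"<tr><td>Tier {n}</td><td>${n * 10}</td></tr>" for n in range(1, 9))
    + "</tbody></table>"
)

chunks = split_fixed(html_table, chunk_size=120)
orphaned = [c for c in chunks if is_orphaned(c)]

for i, chunk in enumerate(chunks):
    status = "ORPHANED" if is_orphaned(chunk) else "ok"
    print(f"chunk {i} [{status}]: {chunk[:50]}...")

print(f"{len(orphaned)} of {len(chunks)} chunks lost their header context")
```

Only the first chunk keeps the "Plan" and "Price" headers; later chunks hold prices that a retriever can return with nothing to say what they mean.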

The Solution: The "Markdown-First" Strategy

The solution is to default to Markdown Tables for all data fed to LLMs, reserving HTML only for complex, merged-cell structures that Markdown cannot handle.

Rule 1: Use Markdown for Standard Data

For most use cases (roughly 90% as a rule of thumb: pricing tiers, feature comparisons, spec sheets), use standard GitHub-Flavored Markdown (GFM).

- Why: It is token-efficient and familiar to LLMs, because Markdown is common in the web and code-repository text that models are typically trained on.

Rule 2: Use Semantic HTML for Complex Data

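If your table requires rowspan or colspan (e.g., a financial report where "Q1" spans three months), Markdown breaks: GFM table syntax has no way to merge cells. In this specific case, use Semantic HTML, but strip every attribute that does not carry structure. Keep rowspan and colspan; remove class, id, and style.

- Bad: <table> and cell tags carrying class, id, or style attributes.

- Good: <table> (clean, raw tags only, plus any rowspan/colspan the layout needs).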
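One way to do this cleanup is sketched below with Python's standard `html.parser` module. The `TableAttributeStripper` class and the sample markup are hypothetical; the sketch assumes you want to keep only `rowspan` and `colspan`, and it drops HTML comments as a side effect.

```python
from html import escape
from html.parser import HTMLParser

# Attributes that carry table structure and must survive the cleanup.
KEEP_ATTRS = {"rowspan", "colspan"}

class TableAttributeStripper(HTMLParser):
    """Re-emit HTML, dropping every attribute not listed in KEEP_ATTRS."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.parts = []

    def handle_starttag(self, tag, attrs):
        kept = "".join(
            f' {name}="{escape(value or "", quote=True)}"'
            for name, value in attrs
            if name in KEEP_ATTRS
        )
        self.parts.append(f"<{tag}{kept}>")

    def handle_startendtag(self, tag, attrs):
        # Self-closing tags such as <br/> are emitted as a bare start tag.
        self.handle_starttag(tag, attrs)

    def handle_endtag(self, tag):
        self.parts.append(f"</{tag}>")

    def handle_data(self, data):
        # Re-escape text so characters like "&" and "<" stay valid HTML.
        self.parts.append(escape(data, quote=False))

def strip_table_attributes(html):
    parser = TableAttributeStripper()
    parser.feed(html)
    parser.close()
    return "".join(parser.parts)

# Hypothetical input: styling attributes mixed with one structural colspan.
raw = (
    '<table class="pricing" id="t1" style="width:100%">'
    '<tr><th colspan="3" class="hdr">Q1</th></tr>'
    "<tr><td>Jan</td><td>Feb</td><td>Mar</td></tr>"
    "</table>"
)
cleaned = strip_table_attributes(raw)
print(cleaned)
```

The `class`, `id`, and `style` attributes disappear, while `colspan="3"` stays so the model can still tell that "Q1" covers all three month columns.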

Rule 3: The "Flattening" Technique

If you have nested tables (tables inside tables), flatten them. Markdown has no syntax for a table inside a cell, and LLMs do not reliably parse nested grids. Break each nested structure into separate flat tables, each under its own H2 heading.
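As an illustration of flattening, the sketch below takes a hypothetical nested structure (each plan row embeds its own "limits" sub-table) and emits two flat Markdown tables under separate H2 headings. The data, the `md_table` helper, and the section names are all assumptions made for the example.

```python
# Hypothetical nested data: each plan row embeds its own "limits" sub-table.
plans = [
    {"Plan": "Basic", "Price": "$10",
     "limits": [("API calls", "1,000"), ("Seats", "1")]},
    {"Plan": "Pro", "Price": "$50",
     "limits": [("API calls", "50,000"), ("Seats", "5")]},
]

def md_table(header, rows):
    """Render a header and rows as a GFM pipe table."""
    lines = [
        "| " + " | ".join(header) + " |",
        "| " + " | ".join(":---" for _ in header) + " |",
    ]
    lines += ["| " + " | ".join(row) + " |" for row in rows]
    return "\n".join(lines)

def flatten(plans):
    """Return two flat sections instead of one table-inside-a-table."""
    parent_rows = [[p["Plan"], p["Price"]] for p in plans]
    # Repeat the parent key ("Plan") on every child row so each row
    # still makes sense if a splitter separates it from its neighbours.
    child_rows = [
        [p["Plan"], name, value] for p in plans for name, value in p["limits"]
    ]
    return (
        "## Plans\n\n" + md_table(["Plan", "Price"], parent_rows)
        + "\n\n## Plan Limits\n\n"
        + md_table(["Plan", "Limit", "Value"], child_rows)
    )

flat = flatten(plans)
print(flat)
```

Repeating the plan name in the second table is the key design choice: it replaces the visual nesting with an explicit key that survives chunking.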

Technical Implementation: Converting Your Tables

To implement this, you typically need a "Transformer" step in your RAG pipeline or a change in your CMS output.

The Conversion Logic

If you are generating static Markdown content for an llms.txt file, use a library like Turndown (JS) or pandas (Python) to convert HTML tables to Markdown strings.

Python Example (Pandas):

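```python
import pandas as pd

# Read every <table> on the page into a list of DataFrames.
# read_html needs an HTML parser installed (lxml, or bs4 + html5lib).
dfs = pd.read_html("https://example.com/pricing")

# Convert the first table to a Markdown string.
# to_markdown relies on the optional "tabulate" package.
markdown_table = dfs[0].to_markdown(index=False)

print(markdown_table)
```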
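If adding pandas to the pipeline is too heavy, a dependency-free converter is possible for simple tables. The following is a minimal sketch using only the standard library; `SimpleTableParser` and `html_table_to_markdown` are hypothetical names, and the code assumes a single table with one header row and no rowspan or colspan.

```python
from html.parser import HTMLParser

class SimpleTableParser(HTMLParser):
    """Collect the cell text of every <tr> (assumes no merged cells)."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.rows = []
        self._row = None   # cells of the row currently being read
        self._cell = None  # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("th", "td") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("th", "td") and self._cell is not None:
            # Collapse whitespace and escape pipes, which would split a cell.
            text = " ".join("".join(self._cell).split()).replace("|", "\\|")
            self._row.append(text)
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None

def html_table_to_markdown(html):
    """Convert a simple HTML table to a GFM pipe table string."""
    parser = SimpleTableParser()
    parser.feed(html)
    parser.close()
    if not parser.rows:
        return ""
    header, *body = parser.rows  # first row is treated as the header
    lines = [
        "| " + " | ".join(header) + " |",
        "| " + " | ".join(":---" for _ in header) + " |",
    ]
    lines += ["| " + " | ".join(row) + " |" for row in body]
    return "\n".join(lines)

sample = (
    "<table><thead><tr><th>Feature</th><th>Basic</th><th>Pro</th></tr></thead>"
    "<tbody><tr><td>Users</td><td>1</td><td>5</td></tr></tbody></table>"
)
converted = html_table_to_markdown(sample)
print(converted)
```

Tables with merged cells should not go through this path; route them to the cleaned-HTML format from Rule 2 instead.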

Comparison: Markdown vs. HTML for RAG

| Feature | Markdown Tables (\|) | HTML Tables (<table>) |
| :--- | :--- | :--- |
| Token Efficiency | High (Minimal syntax) | Low (Heavy tag overhead) |
| Parsing Reliability | High (Clean semantic chunks) | Medium (Prone to "orphan" tags) |
| Complex Layouts | No (Cannot merge cells) | Yes (Supports rowspan/colspan) |
| LLM Preference | Native (GPT-4 prefers this) | Secondary (Understands, but costly) |
| Best For | Pricing, Specs, Lists | Financial Reports, Calendars |

Code Example: The Optimized Table Format

Here is how your data should look in your /llms.txt file or API payload.

❌ AVOID (Heavy HTML):

```html
<table>
  <thead>
    <tr>
      <th>Feature</th>
      <th>Basic</th>
      <th>Pro</th>
    </tr>
  </thead>
  <tbody>
    <tr>
      <td>Users</td>
      <td>1</td>
      <td>5</td>
    </tr>
  </tbody>
</table>
```

✅ USE (Clean Markdown):

```markdown
| Feature | Basic | Pro |
| :--- | :--- | :--- |
| Users | 1 | 5 |
| Price | $10 | $50 |
| Support | Email | 24/7 |
```

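Note: In this Markdown format, every value sits on the same line as its row label and in the same position as its column header, so the AI can match values to headers without reconstructing nested tags. This supports precise data retrieval and avoids the Adjacency Optimization issues of HTML, where a value and its header can end up many tags apart.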

Key Takeaways

- Count Your Tokens: Before feeding a table to an AI, ask: "Can this be Markdown?" If yes, convert it.

- Strip the Styles: If you must use HTML, strip all class, id, and style attributes. The AI only wants the data, not the CSS.

- Avoid Merged Cells: Whenever possible, un-merge cells. Repeat the data in each cell (e.g., instead of spanning "Q1" across 3 rows, write "Q1 - Jan", "Q1 - Feb", "Q1 - Mar"). This aids Data Anchoring.

- Test the Split: Run your tables through a chunking visualizer. If the splitter cuts your table header off from the data rows, your RAG pipeline is broken.

- LLM-First Indexing: Use this formatting specifically for your llms.txt file to ensure maximum ingestibility.
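The "Test the Split" takeaway can also be automated. Below is a minimal sketch of a table-aware chunker that repeats the header in every chunk, along with the check a chunking visualizer would let you do by eye. Both function names are hypothetical, and the code assumes a well-formed GFM table whose first two lines are the header and the separator.

```python
def chunk_markdown_table(table, max_rows):
    """Split a GFM table into chunks of at most max_rows data rows,
    repeating the header and separator lines at the top of every chunk."""
    lines = [ln for ln in table.splitlines() if ln.strip()]
    header, separator, rows = lines[0], lines[1], lines[2:]
    return [
        "\n".join([header, separator, *rows[i:i + max_rows]])
        for i in range(0, len(rows), max_rows)
    ]

def every_chunk_has_header(chunks, header):
    """Return True only if each chunk starts with the table header."""
    return all(chunk.splitlines()[0] == header for chunk in chunks)

table = """| Feature | Basic | Pro |
| :--- | :--- | :--- |
| Users | 1 | 5 |
| Price | $10 | $50 |
| Support | Email | 24/7 |"""

chunks = chunk_markdown_table(table, max_rows=2)
for chunk in chunks:
    print(chunk, end="\n\n")

print("Headers intact:", every_chunk_has_header(chunks, "| Feature | Basic | Pro |"))
```

Repeating two short header lines per chunk costs a few tokens, but it means no retrieved chunk ever contains values without their column names.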

References & Further Reading

- LangChain Documentation: Text Splitters. How recursive character splitting affects table integrity.

- OpenAI Cookbook: Data Formatting for RAG. Best practices for structuring data for GPT models.