You have done everything right. You performed the rigorous keyword research, you wrote the authoritative guide, and you marked it up with valid Schema.org. To a human user, your page looks perfect. The design is clean, the "hero" images are stunning, and the copy flows beautifully from one section to the next.

But when you ask ChatGPT, Perplexity, or a custom RAG (Retrieval-Augmented Generation) agent a specific question based on your content, it fails. It says "I don't know," or worse, it "hallucinates" an answer or pulls one from a competitor's site whose content is objectively worse than yours.

Why?

The answer lies in the invisible, violent process of Ingestion.

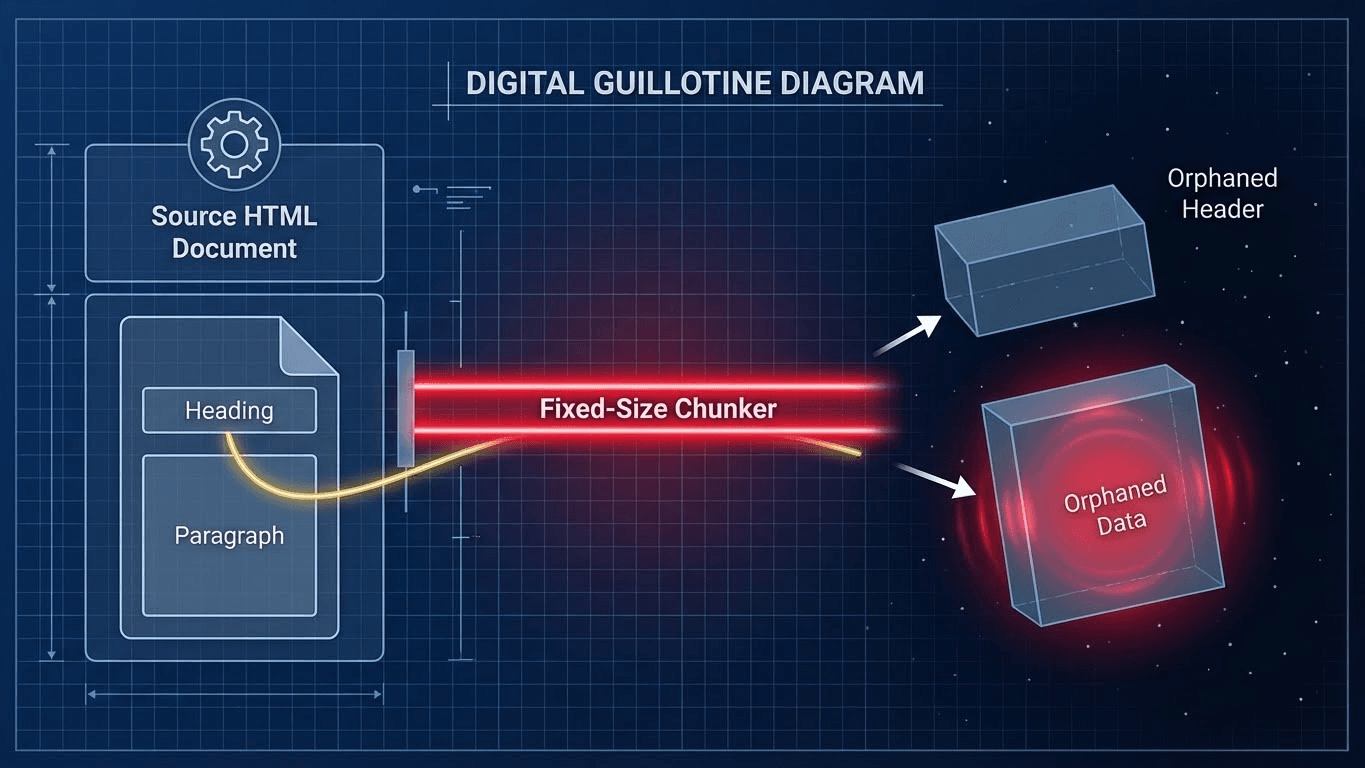

Before an AI can read your content, it must "eat" it. To do this, many RAG pipelines chop your elegant, cohesive HTML document into tiny, fixed-size pieces called "chunks." If your formatting doesn't anticipate where the knife falls, you are serving the AI broken data.

We call this phenomenon the RAG Chunking Mismatch, and it is one of the most common, and least visible, reasons why high-quality content fails to surface in Answer Engines. It is a silent killer because it doesn't show up as an error in Google Search Console or PageSpeed Insights. Your site is technically healthy, but semantically broken.

In this deep-dive technical breakdown, we will dissect the mechanics of this failure, explain the "Guillotine Effect" of vector ingestion, and introduce a new strategic framework for 2025: Chunk-Aware Formatting.

The Physics of Failure: How Vector Databases "Eat" Your Site

To understand the problem, you must first understand the architecture of the modern search engine. We are no longer in the era of simple keyword matching. We are in the era of Vector Search.

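When an AI crawler (such as OpenAI's GPTBot, PerplexityBot, or Bingbot) fetches your URL, the pipeline that indexes the page for retrieval typically does not store it as one long, continuous scroll. A whole page makes a poor retrieval unit: embedding models accept only a limited amount of input, and a single vector averaged over many topics tends to match no specific question well. Instead, the ingestion pipeline runs your text through a Chunker.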

The "Fixed-Size" Trap

Many RAG pipelines, especially those running on default library settings, use "Fixed-Size Chunking." This method is popular because it is cheap, fast, and scalable. The system simply counts a specific number of tokens (e.g., 500 tokens) or characters (e.g., 1,000 characters) and makes a cut. Then it counts another 500 and cuts again, sometimes repeating a small overlap of tokens from the end of the previous chunk.

This process is blind. It does not care about your paragraphs, your logic, your carefully placed H2 tags, or your narrative arc. It acts like a guillotine dropping at regular intervals.

This mechanical segmentation creates a massive risk: Semantic Severance.
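To make the guillotine concrete, here is a minimal fixed-size chunker in Python. It is a sketch under stated assumptions, not any vendor's pipeline: tokens are approximated as whitespace-separated words (real pipelines count tokens with the embedding model's tokenizer), and the function name fixed_size_chunks and its chunk_size and overlap parameters are hypothetical.

```python
def fixed_size_chunks(text: str, chunk_size: int = 500, overlap: int = 0) -> list[str]:
    """Cut text into windows of `chunk_size` tokens, ignoring all structure.

    Tokens are approximated as whitespace-separated words (an assumption:
    production pipelines count tokens with the embedding model's tokenizer).
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    tokens = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + chunk_size]))
        if start + chunk_size >= len(tokens):
            break  # this window already reached the end of the text
    return chunks


# The pricing example as extracted text (heading, alt text, price), with a
# deliberately tiny budget so the cut is visible.
page = "Enterprise Pricing Tier Team meeting in a conference room $50 / month / user"
for chunk in fixed_size_chunks(page, chunk_size=9):
    print(chunk)
# Enterprise Pricing Tier Team meeting in a conference room
# $50 / month / user
```

Run on the pricing example with a 9-word budget, the cut falls right after the image's alt text, and the price lands in its own chunk with no label. A real 500-token budget behaves the same way on longer pages; it simply cuts less often.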

The Semantic Schism: A Practical Example

Consider a standard product landing page. You have a Section Heading (<h2>), a large visual element (Hero Image), and a specific data point (Price).

Your HTML Structure:

```html
<h2>Enterprise Pricing Tier</h2>
<img src="hero-meeting.jpg" alt="Team meeting in a conference room">
<div></div>
<p>$50 / month / user</p>
```

To a human eye, the connection is obvious. The "$50" refers to the "Enterprise Pricing Tier" just above it. The brain bridges the gap instantly.

But if the content above this block has already used up most of the 500-token budget, a Fixed-Size Chunker might make its cut right after the image:

- Chunk A (The Label): Contains the words "Enterprise Pricing Tier" and the image alt text "Team meeting..."

- Chunk B (The Data): Contains the number "$50 / month / user" and the footer text.

This is the Semantic Schism.

1. The Retrieval Failure: When a user asks "What is the Enterprise Pricing?", the retriever searches the Vector Database for the chunks whose embeddings sit closest to the query. It finds Chunk A, because Chunk A is about "Enterprise" and "Pricing." However, Chunk A contains no answer, only the header. The pipeline hands this chunk to the LLM, which reads it, sees no price, and outputs: "I'm sorry, I couldn't find specific pricing information."

2. The Vector Drift: Chunk B is unlikely to be retrieved at all. Why? Because "$50 / month / user," isolated from its context, carries no signal about what is being priced. In the high-dimensional vector space, Chunk B's embedding drifts away from the concept of "Software Pricing" and lands near generic concepts like "Cost" or "Money." It becomes "Orphaned Data": a fact with no parent.
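The Semantic Schism can be reproduced with a toy retriever. Production systems compare dense embeddings; this sketch deliberately swaps in bag-of-words cosine similarity with a small, hypothetical stop-word list, so every score can be checked by hand. Chunk B's footer text is also invented for the example.

```python
import math
import re
from collections import Counter

# Hypothetical stop-word list for this toy example only.
STOP_WORDS = {"what", "is", "the", "a", "in", "of"}


def bag_of_words(text: str) -> Counter:
    """Lowercase word counts, ignoring punctuation and stop words."""
    words = re.findall(r"[a-z0-9$]+", text.lower())
    return Counter(w for w in words if w not in STOP_WORDS)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors (0.0 if either is empty)."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values()))
    norm *= math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


chunks = {
    "A (label)": "Enterprise Pricing Tier. Team meeting in a conference room",
    "B (data)": "$50 / month / user. Footer: About us, Contact, Privacy",  # invented footer
}
query = bag_of_words("What is the Enterprise pricing?")
scores = {name: cosine(query, bag_of_words(text)) for name, text in chunks.items()}
for name, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {score:.2f}")
# A (label): 0.53
# B (data): 0.00
```

Chunk A wins the query even though it holds no price, and Chunk B scores zero because nothing in it says what is being priced. A dense embedding model would give Chunk B a low score rather than exactly zero, but the ranking problem is the same.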

Strategic Framework: Chunk-Aware Formatting

We cannot force OpenAI, Google, or Perplexity to change their ingestion pipelines, and we usually cannot even see them. Fixed-size chunking is likely to remain common for the foreseeable future because it is cheap and fast.

However, we can engineer our content to survive the guillotine.

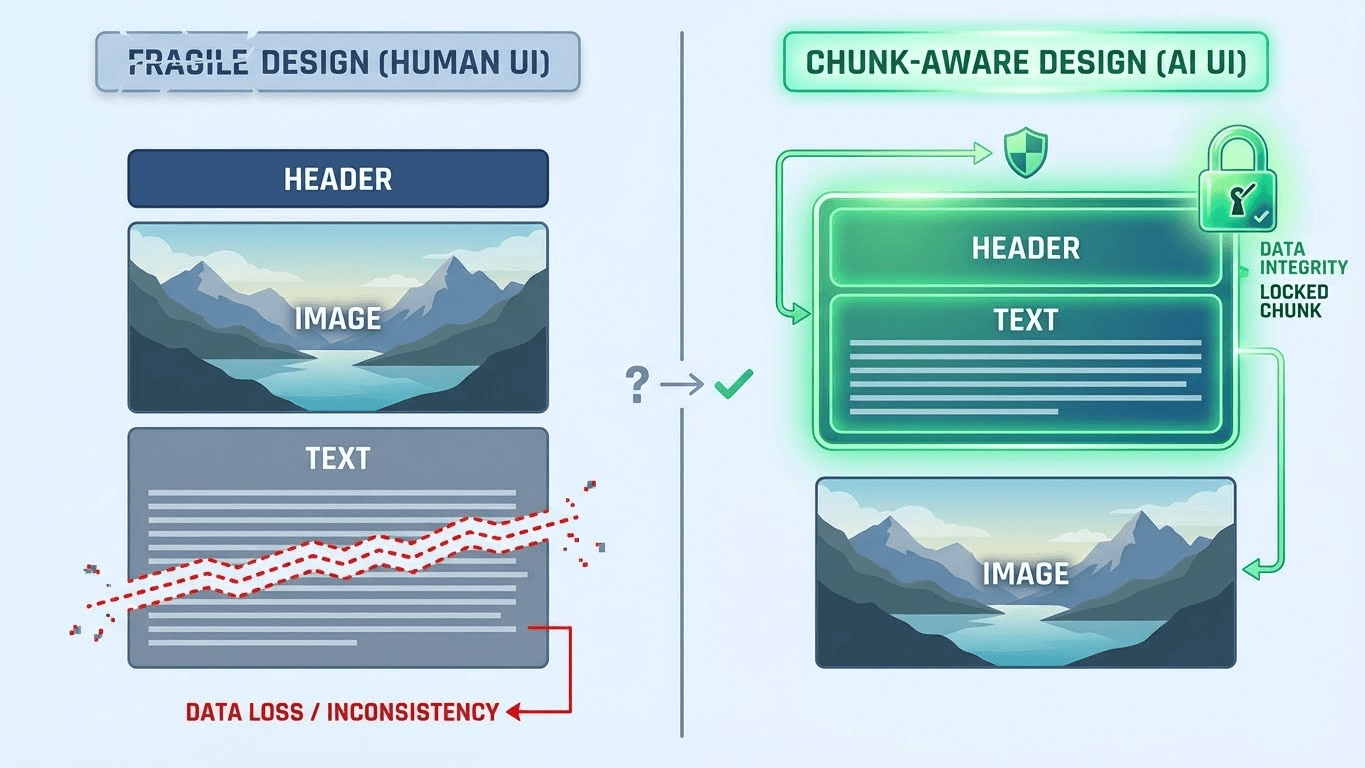

We call this discipline Chunk-Aware Formatting. It is the practice of aligning your HTML structure, your writing style, and your visual layout with the logic of ingestion. It moves beyond "Human Readability" and prioritizes "Machine Ingestibility."

Here are the three pillars of Chunk-Aware Formatting.

Strategy 1: The DOM Shield (Semantic Grouping)

More sophisticated RAG pipelines are gradually moving toward "DOM-Aware Chunking." Instead of blindly counting characters, these smarter parsers use the HTML structure, such as heading tags and HTML5 sectioning tags, to identify logical boundaries.

If you use generic <div> tags for everything (a common habit in modern React/Next.js development), you offer no clues to the parser. It sees a sea of divs and falls back to character counting.

But if you wrap your content in specific semantic tags, you signal to the chunker: "Keep this together."

The Fix: Wrap every distinct Question/Answer pair or logical topic in a <section> or <article> tag.

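The Logic: Structure-aware splitters choose cut points from the markup. LangChain's HTMLHeaderTextSplitter, for example, splits a page at the header tags you configure (h1, h2, and so on) and attaches each header's text to the chunks beneath it as metadata, so a section's heading travels with its body. A custom parser can treat <section> and <article> boundaries the same way, preferring to cut between them rather than through them.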

Bad (Fragile):

```html
<div>
  <h2>How does the API work?</h2>
</div>
<div>
  <img src="api-diagram.png" alt="Diagram of the API request flow">
</div>
<div>
  <p>The API functions by sending a POST request...</p>
</div>
```

Good (Chunk-Aware):

```html
<section>
  <h2>How does the API work?</h2>
  <p>The API functions by sending a POST request...</p>
  <img src="api-diagram.png" alt="Diagram of the API request flow">
</section>
```

By grouping the header and the text inside a <section>, you increase the probability that they stay in the same "digestible" unit.
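A structure-aware splitter that honors section boundaries can be sketched with Python's standard-library html.parser. This is a hypothetical illustration, not LangChain's implementation: SectionCollector and section_chunks are invented names, word counts stand in for tokens, and the splitter keeps each top-level <section> or <article> whole unless it exceeds the budget, in which case it falls back to fixed-size cuts.

```python
from html.parser import HTMLParser


class SectionCollector(HTMLParser):
    """Gathers the text of each top-level <section>/<article> into one string."""

    GROUP_TAGS = {"section", "article"}

    def __init__(self):
        super().__init__()
        self.depth = 0     # how many grouping tags are currently open
        self.current = []  # text pieces of the open top-level group
        self.groups = []   # finished groups, one string each
        self.loose = []    # text found outside any group

    def handle_starttag(self, tag, attrs):
        if tag in self.GROUP_TAGS:
            self.depth += 1
        elif tag == "img":
            alt = dict(attrs).get("alt")
            if alt:
                self._add(alt)  # some extractors keep alt text, so keep it here too

    def handle_endtag(self, tag):
        if tag in self.GROUP_TAGS and self.depth > 0:
            self.depth -= 1
            if self.depth == 0:  # a top-level group just closed
                self.groups.append(" ".join(self.current))
                self.current = []

    def handle_data(self, data):
        if data.strip():
            self._add(data.strip())

    def _add(self, text):
        (self.current if self.depth else self.loose).append(text)


def section_chunks(html: str, max_words: int = 500) -> list[str]:
    """Keep each section whole; split only sections longer than max_words."""
    parser = SectionCollector()
    parser.feed(html)
    parser.close()
    # Simplification: loose text becomes one trailing chunk, out of document order.
    units = parser.groups + ([" ".join(parser.loose)] if parser.loose else [])
    chunks = []
    for unit in units:
        words = unit.split()
        for start in range(0, len(words), max_words):
            chunks.append(" ".join(words[start:start + max_words]))
    return chunks


page = """
<section>
  <h2>How does the API work?</h2>
  <p>The API functions by sending a POST request...</p>
  <img src="api-diagram.png" alt="Diagram of the API request flow">
</section>
<section>
  <h2>Enterprise Pricing Tier</h2>
  <p>$50 / month / user</p>
</section>
"""
for chunk in section_chunks(page):
    print(chunk)
# How does the API work? The API functions by sending a POST request... Diagram of the API request flow
# Enterprise Pricing Tier $50 / month / user
```

Each section comes out as one chunk with its heading, answer, and alt text together, and the price never loses its label. A fuller version would keep text outside any section in document order instead of collecting it at the end.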

Strategy 2: Vector-Ready Writing (Noun-Heavy Syntax)

The most powerful fix requires no code at all—only a shift in your editorial style. You must stop relying on Contextual Pronouns.

In traditional human writing, we are taught to avoid repetition. We use pronouns like "It," "They," "This," and "These" to flow smoothly from one sentence to the next.

Standard Prose: "The Tesla Model 3 is the most popular EV in California. It has a range of 350 miles and it charges in 20 minutes."

If the chunker cuts between sentence 1 and sentence 2, the second chunk becomes:

Chunk 2: "It has a range of 350 miles and it charges in 20 minutes."

To a retrieval system, "It" carries no entity. Keyword indexes often discard it as a stop word, and an embedding model has nothing in this chunk to resolve it to. The vector embedding for this text will not point to "Tesla." It will point to generic "Range" or "Charging." For a query about the Tesla Model 3, this data is effectively lost.

The Rule of Self-Contained Blocks: Write every paragraph as if it is the only paragraph the AI will ever see. Repetitive? Yes. Necessary? Absolutely.

Vector-Ready Prose: "The Tesla Model 3 is the most popular EV in California. The Tesla Model 3 Long Range has a range of 350 miles and the Model 3 charges in 20 minutes."

Now, if Chunk 2 is isolated, it still carries the full semantic weight of the entity "Tesla Model 3." It can be indexed, retrieved, and cited independently of the previous sentence.
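Part of this editorial rule can be automated. The sketch below flags sentences that open with a context-dependent pronoun, since a chunk boundary between sentences would leave them orphaned. The pronoun list and the function name flag_orphan_sentences are illustrative assumptions, the sentence splitter is deliberately naive, and each flag is a prompt for an editor rather than an automatic rewrite.

```python
import re

# Illustrative list; extend it to match your own style guide.
CONTEXT_PRONOUNS = {"it", "its", "they", "their", "this", "these", "that", "those"}


def flag_orphan_sentences(text: str) -> list[str]:
    """Return sentences that open with a pronoun and so depend on earlier context."""
    # Naive split on ., ! or ? followed by whitespace (an assumption: it will
    # mis-split abbreviations such as "e.g.").
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        first_word = re.match(r"[A-Za-z]+", sentence)
        if first_word and first_word.group(0).lower() in CONTEXT_PRONOUNS:
            flagged.append(sentence)
    return flagged


standard = ("The Tesla Model 3 is the most popular EV in California. "
            "It has a range of 350 miles and it charges in 20 minutes.")
vector_ready = ("The Tesla Model 3 is the most popular EV in California. "
                "The Tesla Model 3 Long Range has a range of 350 miles "
                "and the Model 3 charges in 20 minutes.")
print(flag_orphan_sentences(standard))      # ['It has a range of 350 miles and it charges in 20 minutes.']
print(flag_orphan_sentences(vector_ready))  # []
```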

Strategy 3: Adjacency Optimization (Closing the Gap)

Web designers love whitespace. They love "breathing room." They love placing a massive 800px high-resolution image or a dynamic ad slot between the "Problem" header and the "Solution" paragraph.

For RAG, this distance is fatal.

Every pixel of vertical height comes from something in the HTML: image tags and their alt text, captions, spacer divs, script loaders, ad containers. Depending on how the pipeline converts HTML to text, much of this ends up as tokens. If you place 300 tokens of "fluff" (images, ads, spacers) between the Question and the Answer, you statistically increase the probability of a chunk boundary landing right in that gap.
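The claim that filler raises the odds of a split can be made concrete with a simple model. The assumptions here are ours, not a description of any real crawler: fixed-size chunks with no overlap, and a chunk grid whose alignment relative to the heading is equally likely to be any of its chunk_size positions. The hypothetical helper split_probability counts, over every alignment, how often the heading's last token and the answer's first token land in different chunks.

```python
def split_probability(gap_tokens: int, chunk_size: int) -> float:
    """Share of chunk-grid alignments that put a heading and its answer in different chunks.

    Illustrative model (assumption): non-overlapping fixed-size chunks, token i
    lands in chunk (i + offset) // chunk_size, and every offset in
    range(chunk_size) is equally likely.
    """
    heading_end = 0                              # index of the heading's last token
    answer_start = heading_end + gap_tokens + 1  # first token of the answer
    separated = 0
    for offset in range(chunk_size):
        heading_chunk = (heading_end + offset) // chunk_size
        answer_chunk = (answer_start + offset) // chunk_size
        if heading_chunk != answer_chunk:
            separated += 1
    return separated / chunk_size


print(split_probability(200, 500))  # image block between header and answer
print(split_probability(5, 500))    # header and answer nearly adjacent
```

Under this model the risk for a gap of g tokens and a chunk size of C is min(g + 1, C) / C, so it grows linearly with the filler until the gap fills a whole chunk. With C = 500, a 200-token image block separates header and answer in 201 of 500 alignments (about 40%), while a 5-token gap does so in 6 of 500 (about 1%).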

The Action: Keep headers and their immediate body text physically adjacent in the code.

The Tactic: Place images after the core answer paragraph, not between the header and the text.

Fragile Layout:

- H2: "What is the return policy?"

- [Large Image of a Happy Customer] (Consumes 200 tokens of HTML/Alt text)

- P: "You can return items within 30 days."

Robust Layout:

- H2: "What is the return policy?"

- P: "You can return items within 30 days."

- [Large Image of a Happy Customer]

By locking the H2 and the P together, you make it far more likely that they enter the vector database as a single, unbroken unit of knowledge.

The Future of Ingestion: From Parsing to Understanding

We are moving toward Agentic Parsing, where future AI agents will intelligently "read" a page to decide how to chunk it based on meaning rather than math. Tools are emerging that use "Vision-Language Models" to "see" the page layout and understand that the text below the image belongs to the header above it.

But until that technology is universal, cheap, and scalable enough for every AI system (from Google to Perplexity to Apple Intelligence) to use, most of your content will still pass through fixed-size chunkers.

The brands that win the "Answer Engine" race in 2025 will not be the ones with the prettiest prose or the most expensive design. They will be the ones that write in Atomic Units of Information—self-contained, noun-heavy, and semantically grouped blocks of data that survive the guillotine.

Your Audit Checklist for Retrieval Safety:

- Check your DOM: Are you using <section> and <article> tags to group logic, or is it just a soup of divs?

- Check your Pronouns: Scan the first sentence of every paragraph. Eliminate "It," "They," and "This." Replace them with the actual Brand or Product Name.

- Check your Adjacency: Are you putting huge images or ad blocks between your questions and your answers? Move them down.
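The first and third checks can be roughed out with the standard library. This hypothetical RetrievalAudit parser counts <div> tags against <section> and <article> tags and, for each <h2>, counts the words of text and image alt text that sit before the next <p>. Word counts stand in for tokens, the sample markup and alt text are invented, and the thresholds you act on are your own call.

```python
from collections import Counter
from html.parser import HTMLParser


class RetrievalAudit(HTMLParser):
    """Counts grouping tags and measures heading-to-answer gaps (a rough sketch)."""

    def __init__(self):
        super().__init__()
        self.tag_counts = Counter()  # every start tag seen, by name
        self.gaps = {}               # h2 text -> words before the next <p>
        self._in_h2 = False
        self._heading = None         # h2 still waiting for its answer paragraph
        self._gap_words = 0

    def handle_starttag(self, tag, attrs):
        self.tag_counts[tag] += 1
        if tag == "h2":
            # A new heading replaces one that never found a <p> (not reported).
            self._in_h2, self._heading, self._gap_words = True, "", 0
        elif tag == "p" and self._heading is not None:
            self.gaps[self._heading] = self._gap_words
            self._heading = None  # answer found; stop counting
        elif tag == "img" and self._heading is not None:
            # Alt text is one of the things that can fill the gap.
            self._gap_words += len((dict(attrs).get("alt") or "").split())

    def handle_endtag(self, tag):
        if tag == "h2":
            self._in_h2 = False

    def handle_data(self, data):
        if self._in_h2:
            self._heading = (self._heading + " " + data.strip()).strip()
        elif self._heading is not None:
            self._gap_words += len(data.split())


# Hypothetical "fragile" markup: an image between the question and its answer.
fragile = """
<div><h2>What is the return policy?</h2></div>
<div><img src="happy-customer.jpg" alt="Smiling customer unboxing a parcel at home"></div>
<div><p>You can return items within 30 days.</p></div>
"""
audit = RetrievalAudit()
audit.feed(fragile)
audit.close()
print(audit.tag_counts["div"], audit.tag_counts["section"] + audit.tag_counts["article"])  # 3 0
print(audit.gaps)  # {'What is the return policy?': 7}
```

A nonzero gap is the signal to move the image or ad below the answer, and a page with many divs and no sectioning tags is the "soup of divs" the first check describes.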

Don't let the chunker kill your traffic. Format for the machine.

References & Further Reading

- LangChain Documentation: HTMLHeaderTextSplitter. Explains how LangChain splits HTML at header tags and attaches the header hierarchy to each chunk as metadata.

- Pinecone Learning Center: Chunking Strategies for Large Language Models. A technical deep dive into fixed-size vs. semantic chunking and the trade-offs of each method.

- Facebook AI Research (Lewis et al., NeurIPS 2020): Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. The foundational paper that introduced RAG, pairing a neural retriever with a sequence-to-sequence generator.

- Google Search Central: Semantic HTML and Google Search. Official guidance on how semantic tags help Google understand page structure.