For the last 30 years, web development has focused on the human experience. We obsessed over CSS transitions, responsive layouts, font kerning, and the visual hierarchy of the homepage. We built the web to be looked at.

But in 2025, the consumer of your website is no longer just a human with a mouse; increasingly, it is a machine with an API call.

AI agents, crawlers, and Large Language Models (LLMs) do not "look" at your website. They ingest it. They strip away the pretty CSS, ignore the images, and devour the raw text and code to understand who you are, what you sell, and whether you can be trusted.

If your website is optimized only for humans, you are effectively asking these AI agents to read a book in a language they don't speak. You are creating friction. And in the world of computing, friction leads to exclusion.

To succeed in Generative Engine Optimization (GEO), you need to provide these agents with a "Digital Passport"—a set of files that explicitly tells them:

- Access: "You are allowed to be here" (robots.txt).

- Meaning: "This is what I am" (Schema.org).

- Context: "Here is the summary of my data" (llms.txt).

Most websites either hand-code these files, which invites syntax errors, or, worse, ignore them entirely. That ends today.

We are launching the GEO Asset Generator, a free tool that instantly builds this technical infrastructure for you. But before you generate the files, you need to understand exactly why they are the difference between being cited by ChatGPT and being ignored.

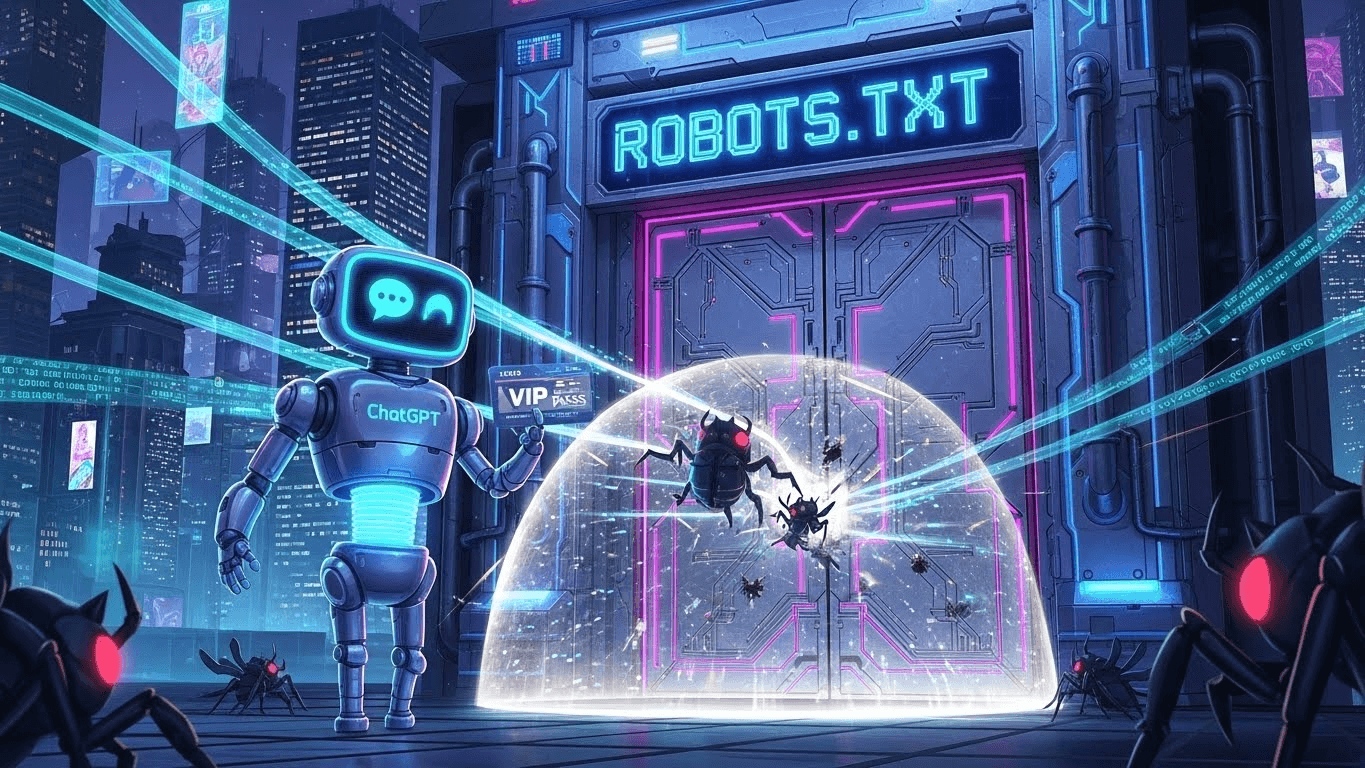

Part 1: Robots.txt — The Gatekeeper of the AI Era

The humble robots.txt file has existed since 1994. For decades, it was a simple "Keep Out" sign for annoying scrapers. Today, it is the sophisticated control room for your AI strategy.

The "Block Everything" Panic

When OpenAI introduced GPTBot, its web crawler, in 2023, many webmasters panic-blocked it in their robots.txt, fearing copyright theft. That concern is valid for some publishers, but for businesses trying to win at Answer Engine Optimization (AEO), blocking was a self-inflicted wound.

If you block the bot, you cannot be part of the answer. You are opting out of the world's largest knowledge graph.

Granular Control: The Google-Extended Token

Modern AI strategy requires nuance. You might want to be indexed by Google Search (to get clicks) but not have your data used to train Google's future models without credit.

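This is where tokens like Google-Extended come in. Google-Extended is a user-agent token you address in robots.txt to control whether your content is used for Google's AI models, and it does not affect how Googlebot indexes you for Search. A properly configured robots.txt doesn't just say "Yes" or "No"; it defines the terms of engagement. It tells the crawler where your sitemap is, which directories are public, and which API endpoints are off-limits.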
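To make those terms of engagement concrete, here is a minimal Python sketch that renders a robots.txt policy and then checks it with the standard library's urllib.robotparser. The domain example.com, the /api/ path, and the choice of which bots to allow are illustrative assumptions, not recommendations for your site or the output of any particular tool.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy for illustration: bot choices, paths, and domain are assumptions.
# Python's parser applies the first matching rule in a group, so the narrower
# Disallow line is listed before the broad Allow line.
POLICIES = {
    "GPTBot": ["Disallow: /api/", "Allow: /"],   # OpenAI's crawler may read public pages
    "Google-Extended": ["Disallow: /"],          # opt out of use by Google's AI models
    "*": ["Disallow: /api/", "Allow: /"],        # everyone else, including Googlebot
}


def build_robots_txt(policies: dict[str, list[str]], sitemap_url: str) -> str:
    """Render one User-agent group per bot, followed by a Sitemap line."""
    groups = [
        "\n".join([f"User-agent: {agent}", *rules])
        for agent, rules in policies.items()
    ]
    return "\n\n".join(groups) + f"\n\nSitemap: {sitemap_url}\n"


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Ask the standard-library parser whether user_agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


robots_txt = build_robots_txt(POLICIES, "https://example.com/sitemap.xml")
print(robots_txt)
print(is_allowed(robots_txt, "GPTBot", "https://example.com/pricing"))
print(is_allowed(robots_txt, "Google-Extended", "https://example.com/pricing"))
```

Running a check like this before you deploy is a cheap way to catch a rule that blocks more (or less) than you intended.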

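Our GEO Asset Generator builds a modern, AI-ready robots.txt file that invites the right bots in and tells the rest where they are not welcome. Keep in mind that robots.txt is a voluntary protocol: well-behaved crawlers honor it, but genuinely malicious bots ignore it and have to be blocked at the server or firewall level.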

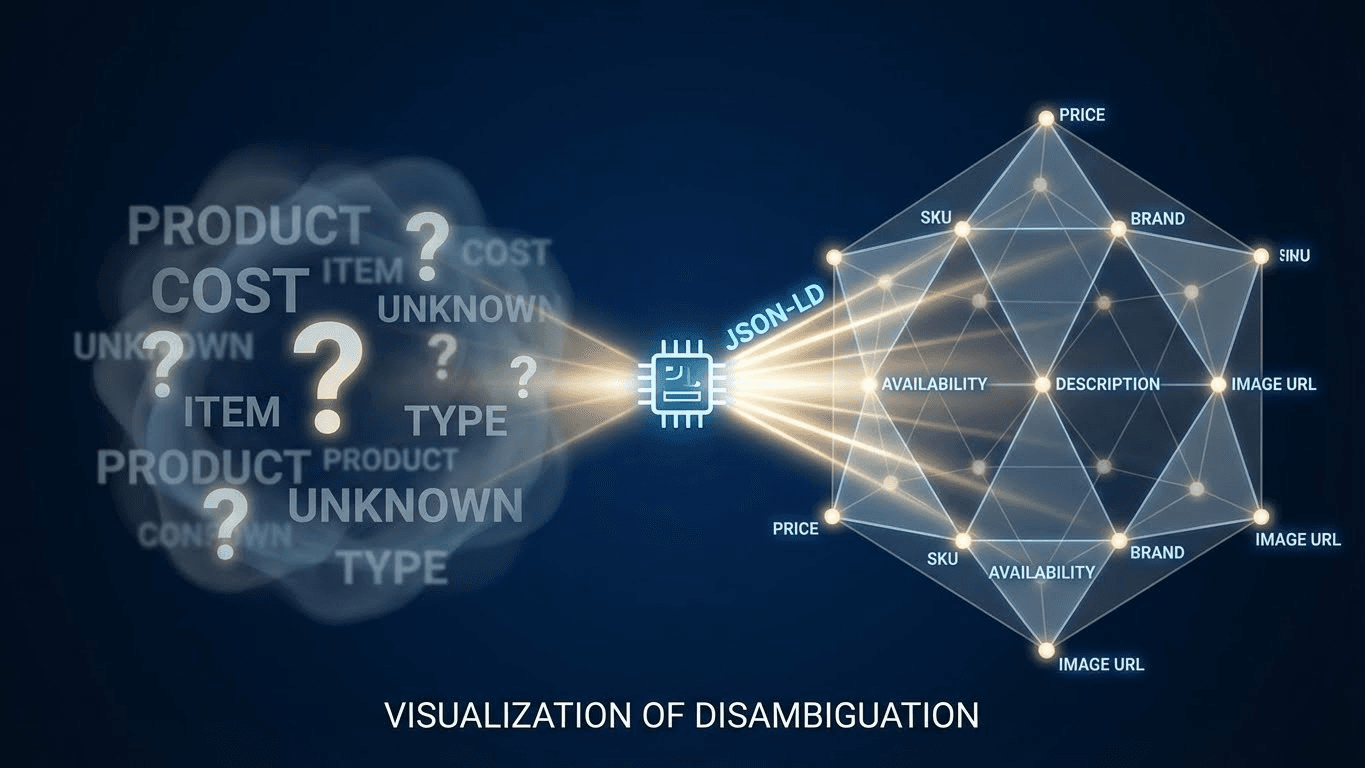

Part 2: Schema.org — The Native Tongue of LLMs

If robots.txt lets the AI into the building, Schema.org (JSON-LD) is the translator that explains what is inside.

We have written extensively about Schema before, but its importance cannot be overstated. LLMs are "probabilistic engines." When they read the word "Apple" on your site, they calculate the probability of it referring to a fruit vs. a technology company.

Schema replaces that guesswork with an explicit, machine-readable declaration.

Moving from Strings to Things

In computer science terms, this is the shift from "Unstructured Strings" to "Structured Entities."

- Without Schema: "We sell the Python." (Is it a snake? A coding language? A roller coaster?)

- With Schema: You explicitly define the entity as a Product, with the name "Python," the category "Reptile," and an offer with the price "200" and the currency "USD."
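As a rough sketch of what that looks like in practice, the Python snippet below builds the "Python the reptile" entity as a dictionary and serializes it into a JSON-LD script tag. The property names come from the Schema.org Product and Offer types; the USD currency and the helper name to_jsonld_script are assumptions made for this example.

```python
import json

# Illustrative entity: USD is an assumed currency for the "$200" price in the text.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Python",
    "category": "Reptile",
    "offers": {
        "@type": "Offer",
        "price": "200",
        "priceCurrency": "USD",
    },
}


def to_jsonld_script(entity: dict) -> str:
    """Wrap an entity in the script tag that carries JSON-LD on a web page."""
    body = json.dumps(entity, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(to_jsonld_script(product))
```

Letting json.dumps produce the text means commas, quotes, and brackets are always balanced, which is the main reason to generate this markup instead of typing it by hand.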

The "Hallucination Killer"

Hallucinations occur when an AI tries to guess a fact to fill a gap in its knowledge. By providing deep, nested JSON-LD schema, you fill those gaps with hard-coded data. You are essentially API-ifying your content.

However, writing valid JSON-LD is difficult. A missing comma or an unclosed bracket breaks the entire code block, rendering it useless to Google. The GEO Asset Generator automates this syntax, ensuring your code is valid, nested correctly, and ready for ingestion.
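To see how little it takes to break a block, here is a small Python check, offered as a sketch, that parses a JSON-LD string and reports where the first syntax error sits. It only verifies JSON syntax and the presence of a top-level @context; it is not a full Schema.org validator, and the function name check_jsonld is made up for this example.

```python
import json


def check_jsonld(raw: str) -> str:
    """Return "ok", or a short message describing the first problem found."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as err:
        return f"invalid JSON at line {err.lineno}, column {err.colno}: {err.msg}"
    # Minimal structural check only; real validation needs a Schema.org-aware tool.
    if not isinstance(data, dict) or "@context" not in data:
        return "valid JSON, but no top-level @context"
    return "ok"


good = '{"@context": "https://schema.org", "@type": "Product"}'
missing_comma = '{"@context": "https://schema.org" "@type": "Product"}'
unclosed = '{"@context": "https://schema.org", "@type": "Product"'

for sample in (good, missing_comma, unclosed):
    print(check_jsonld(sample))
```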

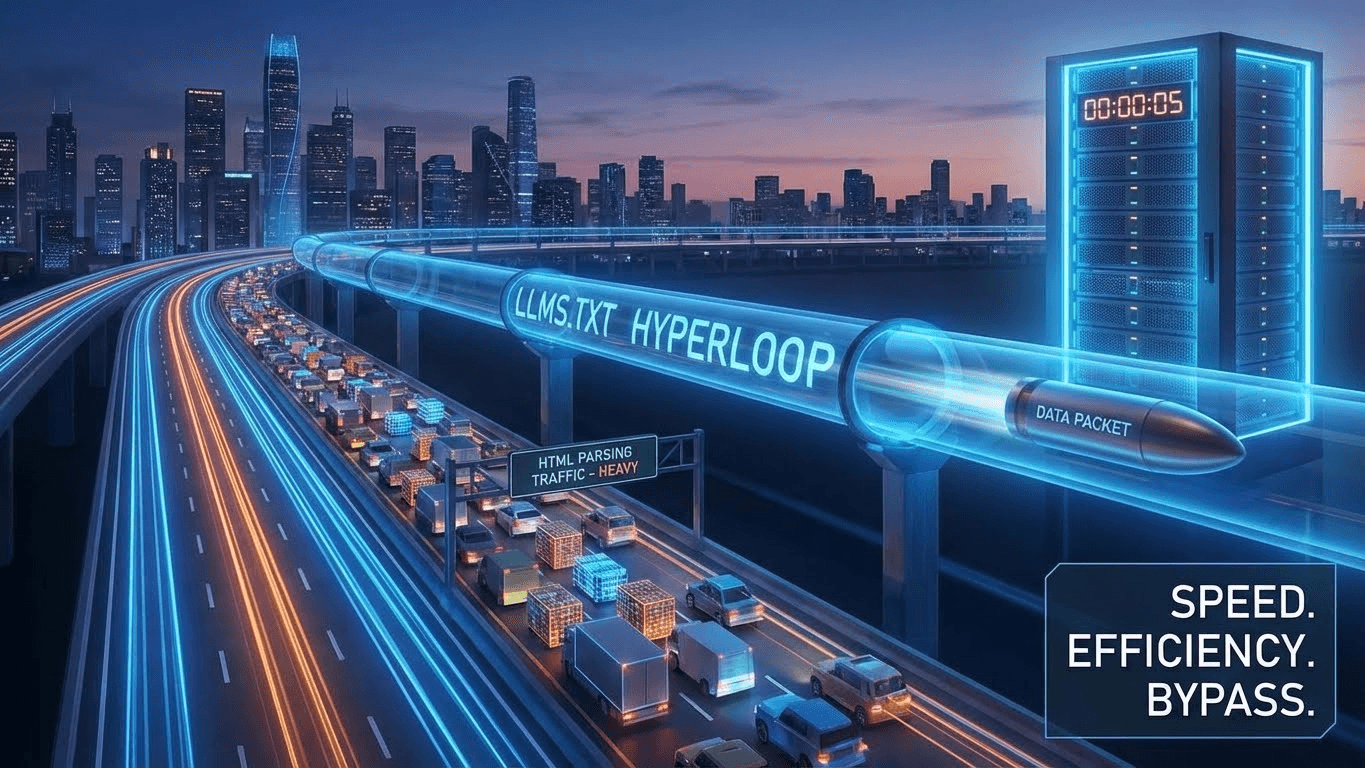

Part 3: LLMS.txt — The Fast Lane for AI Agents

This is the newest and most exciting development in the GEO landscape. While robots.txt and Schema are established standards, llms.txt is an emerging proposal designed specifically for the Agentic Web.

The Problem: HTML is Noisy

When an AI agent (like a customer service bot or a research agent) visits your website, it has to wade through megabytes of "noise." It has to parse your navigation bar, your footer links, your JavaScript trackers, and your CSS classes just to find the core text.

This burns "tokens" (the currency of AI compute) and increases the chance of error.
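A quick way to get a feel for the noise is to strip a page down to its core text and compare sizes. The sketch below uses Python's built-in html.parser on a made-up page; the list of skipped tags is an assumption, and character counts are only a rough proxy for tokens, since real token counts depend on the model's tokenizer.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect text, skipping script, style, nav, and footer content."""

    SKIPPED = {"script", "style", "nav", "footer"}  # assumed "noise" elements

    def __init__(self) -> None:
        super().__init__()
        self.depth = 0  # greater than 0 while inside a skipped element
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth == 0 and data.strip():
            self.chunks.append(data.strip())


def core_text(html: str) -> str:
    """Return the text an agent actually needs, joined with single spaces."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# Hypothetical page used only for this demonstration.
PAGE = """<html><head><style>.hero{color:red}</style>
<script>track('pageview')</script></head>
<body><nav><a href="/">Home</a><a href="/blog">Blog</a></nav>
<main><h1>Acme Pythons</h1><p>We sell ball pythons for $200.</p></main>
<footer>Copyright Acme</footer></body></html>"""

text = core_text(PAGE)
print(text)
print(f"{len(PAGE)} characters of HTML, {len(text)} characters of core text")
```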

The Solution: The Markdown Manifest

The llms.txt proposal suggests a standard file location (e.g., yourdomain.com/llms.txt) that contains a clean, Markdown-formatted summary of your website's core information.

Think of it as an "Executive Summary" written specifically for robots. It might contain:

- Who you are.

- Links to your documentation.

- Your core pricing.

- Direct paths to your most important content.
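The items above map onto a small Markdown file. Here is a hedged Python sketch that assembles one in the general shape described at llmstxt.org: a title, a one-line summary in a blockquote, and sections of annotated links. The company name, URLs, and section names are invented for illustration, and because the proposal may evolve you should check the current spec before relying on this layout.

```python
# Each link is (title, url, note); all values below are hypothetical.
Link = tuple[str, str, str]


def build_llms_txt(name: str, summary: str, sections: dict[str, list[Link]]) -> str:
    """Render a Markdown manifest: H1 title, blockquote summary, H2 link sections."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines)


llms_txt = build_llms_txt(
    name="Acme Pythons",
    summary="Breeder and retailer of captive-bred ball pythons.",
    sections={
        "Docs": [
            ("Care guide", "https://example.com/docs/care.md", "Feeding and habitat basics"),
        ],
        "Pricing": [
            ("Price list", "https://example.com/pricing.md", "Current prices in USD"),
        ],
    },
)
print(llms_txt)
```

The resulting text would be saved as llms.txt at the root of the site, next to robots.txt.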

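When an AI agent detects this file, it can skip the heavy HTML homepage and read the lightweight llms.txt instead. It is a "Fast Lane" for machines. By having this file, you are signaling to future AI agents (whether from Anthropic, OpenAI, or Google) that you are a machine-friendly entity. Because llms.txt is still a proposal, no crawler is obliged to read it yet, so treat it as a low-cost bet on where the ecosystem is heading.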

Our tool is one of the first on the market to help you generate a compliant llms.txt file, future-proofing your site for the next wave of AI crawlers.

The "Invisible" Cost of Missing Assets

What happens if you ignore these three files?

You don't get a 404 error. Your site doesn't crash. To the human eye, everything looks fine.

But to the AI ecosystem, you become high-friction data.

- Because you lack a clean robots.txt, a crawler may deprioritize you to save resources.

- Because you lack Schema, an LLM may fail to extract your pricing and fill the gap with a guess, or with a competitor's price.

- Because you lack llms.txt, AI agents may struggle to navigate your site structure and give up.
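Since nothing visibly breaks, the only way to notice these gaps is to look for them. The following Python sketch checks a snapshot of a site, represented here as a plain path-to-content dictionary, for the three assets. The paths, the sample content, and the function name missing_assets are assumptions for the example; a real audit would fetch the files over HTTP and validate their contents as well.

```python
def missing_assets(site_files: dict[str, str]) -> list[str]:
    """Return the assets that are absent from a path -> content snapshot."""
    missing = []
    if not site_files.get("/robots.txt", "").strip():
        missing.append("robots.txt")
    # JSON-LD is embedded in pages, so look for its script type on the homepage.
    if "application/ld+json" not in site_files.get("/index.html", ""):
        missing.append("Schema.org JSON-LD")
    if not site_files.get("/llms.txt", "").strip():
        missing.append("llms.txt")
    return missing


# Hypothetical snapshot: a site with a robots.txt but nothing else.
snapshot = {
    "/index.html": "<html><head><title>Acme</title></head><body>Hi</body></html>",
    "/robots.txt": "User-agent: *\nAllow: /\n",
}
print(missing_assets(snapshot))
```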

You are paying an "Invisible Tax" on every search query. You are losing citations and traffic that you don't even know exist.

The Solution: One Click to Compliance

We realized that asking business owners to hand-code JSON-LD or write a Markdown manifest for bots was unrealistic. The syntax is too specific, and the penalty for error is too high.

That is why we built the GEO Asset Generator.

How it Works:

- Input Your Details: Tell us your brand name, your key pages, and your bot preferences.

- Select Your Assets: Choose to generate Schema, Robots.txt, LLMS.txt, or all three.

- Generate & Deploy: The tool instantly writes the valid code. You simply copy and paste it into place: robots.txt and llms.txt go in your website's root directory, and the Schema JSON-LD goes in a script tag in your page's header.

Why It Matters Now

We are in the early adoption phase of the Agentic Web. Most of your competitors do not have an llms.txt file. Most of them have broken or basic Schema. By implementing this "Digital Passport" today, you gain a structural advantage. You are making your brand the path of least resistance for the world's most powerful AI models.

Don't let syntax errors keep you out of the future.

Generate your AI assets for free here.

References

- The /llms.txt Standard: The emerging proposal for a standardized markdown file to help LLMs navigate websites efficiently. Source: llmstxt.org (Proposed Standard). Link: https://llmstxt.org/

- Robots.txt Specifications: Google's official guide on controlling crawler access and the Google-Extended token. Source: Google Search Central. Link: https://developers.google.com/search/docs/crawling-indexing/robots-meta-tag

- Schema.org Vocabulary: The definitive resource for structured data types and properties used by major search engines. Source: Schema.org. Link: https://schema.org/

- OpenAI Crawler Documentation: Technical details on GPTBot and how to control its access to your site. Source: OpenAI Platform. Link: https://platform.openai.com/docs/gptbot