Most AEO advice ends at the fix. "Add JSON-LD schema." "Enable SSR." "Deploy an llms.txt file."

The instructions are clear enough. The problem is what comes after.

You implement the change. You wait. You check your traffic in GA4. Nothing moves. Or something moves, but you cannot tell if it was the fix or the algorithm update that dropped last Tuesday. The causal chain between action and outcome is permanently broken, and you are left optimizing on faith.

This is not a minor inconvenience. It is the core failure mode of most AEO programs — and it is entirely avoidable.

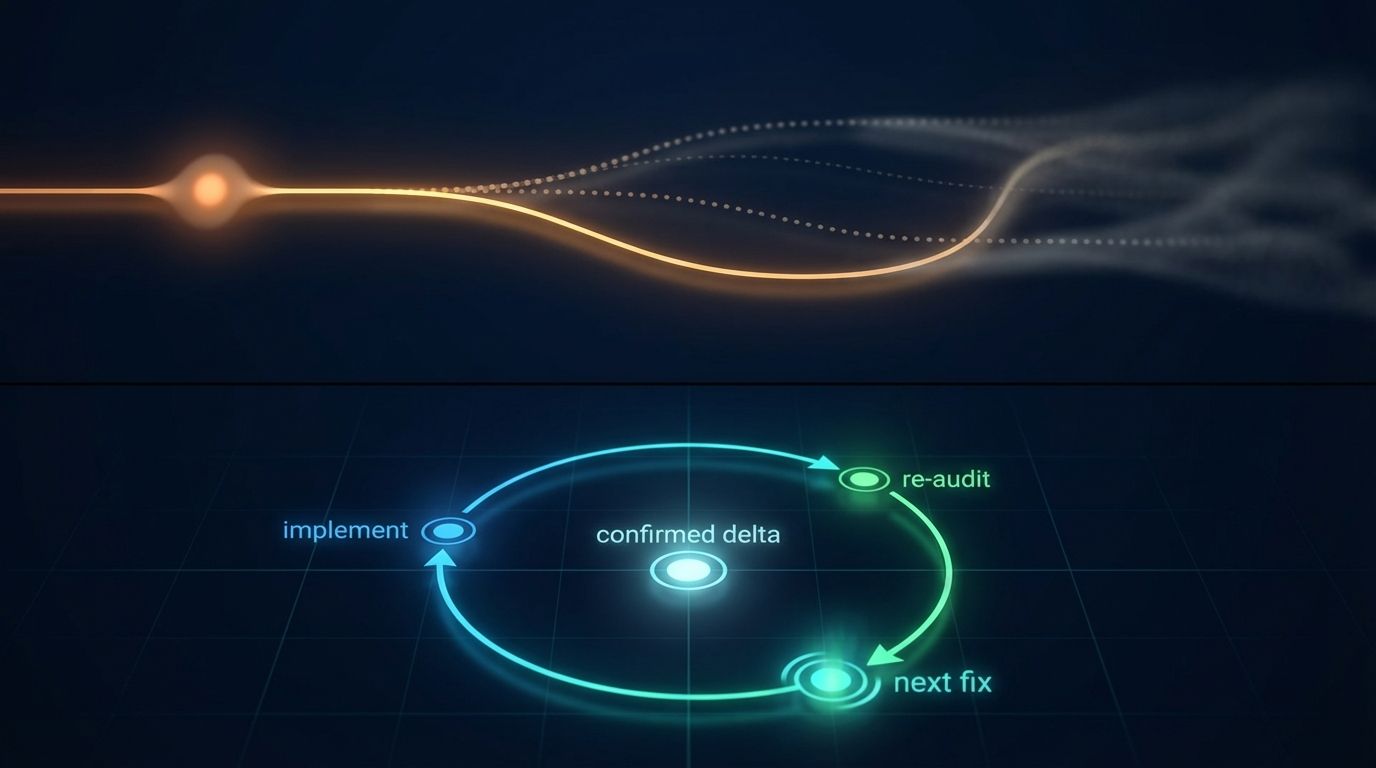

The AEO Validation Loop is the missing piece: a structured, signal-level feedback mechanism that gives you deterministic proof of whether each fix landed before you move to the next one.

1. Why "Implement and Wait" Fails AEO

Traditional SEO operates on a delayed feedback loop by design. Google's crawl schedule, re-indexing latency, and the rolling nature of algorithm updates mean that a change you make today might not affect your rankings for weeks. Practitioners have accepted this as an immutable property of the discipline.

AEO does not have to work this way. The signals that determine whether an AI system can read and retrieve your content are structural properties of your page — not judgments made by a slowly updating ranking algorithm. They are either present or they are not. A JSON-LD block either validates or it does not. Your HTML either delivers full content on the initial server response or it produces an empty shell. These are binary, testable states.
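
To make "binary, testable states" concrete, here is a minimal sketch of two such checks run against the raw HTML of a server response, using only the Python standard library. The names `RawHtmlProbe` and `probe`, and the 50-word threshold, are illustrative assumptions, not part of any audit engine. Note that `json.loads` only confirms the JSON-LD block is syntactically valid JSON; it does not check the Schema.org vocabulary.

```python
import json
from html.parser import HTMLParser


class RawHtmlProbe(HTMLParser):
    """Collects JSON-LD script bodies and visible text from raw HTML."""

    def __init__(self):
        super().__init__()
        self.jsonld_blocks = []  # raw text of each JSON-LD <script>
        self.visible_text = []   # text found outside <script>/<style>
        self._in_jsonld = False
        self._skip_depth = 0     # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
            script_type = (dict(attrs).get("type") or "").lower()
            if tag == "script" and script_type == "application/ld+json":
                self._in_jsonld = True
                self.jsonld_blocks.append("")

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._skip_depth = max(0, self._skip_depth - 1)
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.jsonld_blocks[-1] += data
        elif self._skip_depth == 0 and data.strip():
            self.visible_text.append(data.strip())


def probe(html: str, min_words: int = 50) -> dict:
    """Return pass/fail style facts about one raw HTML response."""
    parser = RawHtmlProbe()
    parser.feed(html)
    parser.close()
    parses = 0
    for block in parser.jsonld_blocks:
        try:
            json.loads(block)
            parses += 1
        except json.JSONDecodeError:
            pass  # block is present but is not valid JSON
    words = sum(len(text.split()) for text in parser.visible_text)
    return {
        "jsonld_found": len(parser.jsonld_blocks),
        "jsonld_parses": parses,
        "has_server_content": words >= min_words,  # assumed threshold
    }


# An "empty shell" page: content only appears after client-side JavaScript runs.
shell = '<html><body><div id="root"></div><script src="/app.js"></script></body></html>'
print(probe(shell))
# {'jsonld_found': 0, 'jsonld_parses': 0, 'has_server_content': False}
```

Each value in the result is either true or false for the response you fetched, which is what makes it usable as a before-and-after check.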

When you treat AEO fixes as "deploy and move on," you are carrying forward a broken model from a discipline where delayed feedback was unavoidable. In AEO, the feedback can be immediate — if you structure the process to capture it.

2. The Four Stages of the Validation Loop

The loop has a fixed structure. Skipping or reordering any stage breaks the feedback chain.

Stage 1: Baseline Audit. Before touching anything, run a full audit of the URL in its current state. Record every signal score — not just the overall number, but the individual sub-scores for rendering, schema, token efficiency, entity clarity, crawl access, and semantic structure. This is your ground truth. Without it, you have no basis for comparison after the fix.

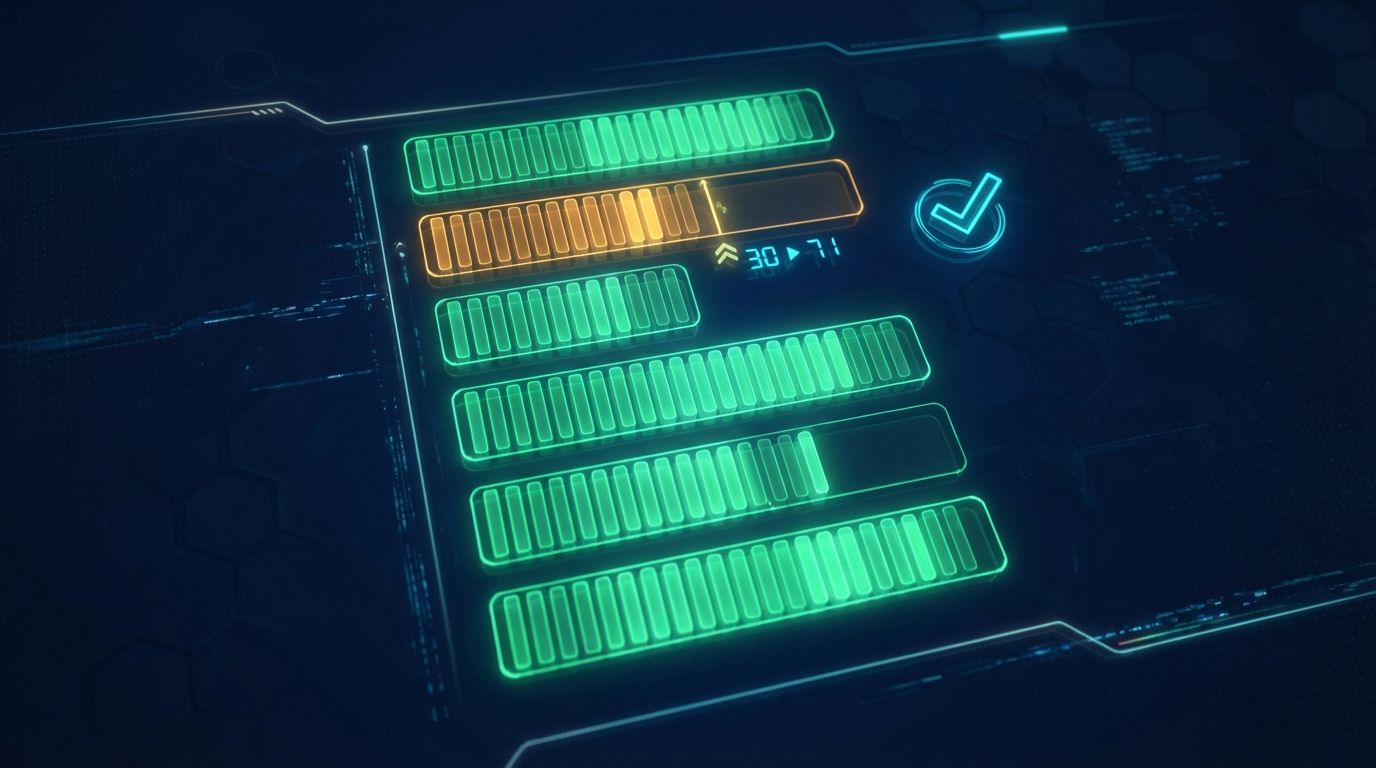

Stage 2: Single-Variable Implementation. Fix one signal category at a time. This is the most commonly violated rule in AEO optimization. If you add schema markup, fix your robots.txt, and enable SSR in the same deployment, and your score goes up by 18 points, you do not know which change was responsible. You cannot reproduce the result. You cannot prioritize the same fix across other pages. Single-variable implementation is not slow — it is the only method that produces actionable knowledge.

Stage 3: Re-Audit and Delta Capture. Submit the same URL for re-audit immediately after the fix is live. The engine runs the same six Architect checks against the same signals. The score delta — the difference between your baseline and the re-audit result — is the direct, isolated measurement of whether your fix produced the expected structural change. A rendering fix should move the rendering signal. A schema addition should move the schema signal. If the expected signal did not move, the fix did not land the way you thought it did.
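
Delta capture itself is simple arithmetic once the baseline is recorded per signal. The sketch below assumes integer scores keyed by the six signal names listed in Stage 1; the `AuditSnapshot` type, the `signal_deltas` function, and the example scores are hypothetical and stand in for whatever your audit tool exports.

```python
from dataclasses import dataclass

# The six signals named in the baseline stage (the key spelling is an assumption).
SIGNALS = (
    "rendering",
    "schema",
    "token_efficiency",
    "entity_clarity",
    "crawl_access",
    "semantic_structure",
)


@dataclass
class AuditSnapshot:
    url: str
    scores: dict  # signal name -> score


def signal_deltas(baseline: AuditSnapshot, reaudit: AuditSnapshot) -> dict:
    """Return re-audit minus baseline for every signal."""
    if baseline.url != reaudit.url:
        raise ValueError("baseline and re-audit must be for the same URL")
    return {s: reaudit.scores[s] - baseline.scores[s] for s in SIGNALS}


# Hypothetical scores before and after a schema-only deployment.
baseline = AuditSnapshot(
    "https://example.com/pricing",
    {"rendering": 80, "schema": 20, "token_efficiency": 60,
     "entity_clarity": 50, "crawl_access": 90, "semantic_structure": 70},
)
reaudit = AuditSnapshot(
    "https://example.com/pricing",
    {**baseline.scores, "schema": 55},
)

deltas = signal_deltas(baseline, reaudit)
moved = {signal: delta for signal, delta in deltas.items() if delta != 0}
print(moved)  # {'schema': 35}
```

Because only one variable changed in the deployment, the single non-zero delta can be attributed to that fix.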

Stage 4: Root Cause Analysis on Non-Movement. When a signal fails to move after a fix, the cause is almost always one of three things: the change was not actually deployed (caching, build pipeline issues), the change was deployed but targets a signal the audit does not directly measure (a common confusion between SEO and AEO signals), or the fix addressed a symptom rather than the root cause. A schema block added to a page that is still blocked by robots.txt will not improve your entity clarity score — because the entity cannot be reached by the crawler that would evaluate the schema. The audit result tells you which layer the problem sits in. Work from there.
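
One layer you can rule in or out quickly is crawl access. The sketch below uses Python's standard `urllib.robotparser` to ask whether a given user agent may fetch a URL under a given robots.txt body. The helper name `is_crawl_allowed`, the example rules, and the choice of `GPTBot` as the user agent are illustrative assumptions; substitute the crawlers and the robots.txt your server actually returns.

```python
from urllib.robotparser import RobotFileParser


def is_crawl_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check a URL against robots.txt rules supplied as text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that blocks one crawler from the product pages.
robots_txt = """\
User-agent: GPTBot
Disallow: /products/

User-agent: *
Allow: /
"""

print(is_crawl_allowed(robots_txt, "GPTBot", "https://example.com/products/widget"))  # False
print(is_crawl_allowed(robots_txt, "GPTBot", "https://example.com/blog/post"))        # True
```

If this returns `False` for the page you just added schema to, the schema is not the layer to keep working on. Run the check against the robots.txt as served in production, since a cached copy can differ from the file in your repository.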

3. What "Validated" Actually Means

A validated fix is not one that moved your overall score. It is one that moved the specific signal it was designed to address.

This distinction matters because the six audit signals are weighted differently and interact with each other. A large improvement in token efficiency might move your overall score by eight points even if your schema score stays flat. If you planned a schema fix and declare success based on the overall score movement, you may have shipped something entirely different from what you intended — and the schema problem is still live.

Signal-level validation also catches regressions. A new page template that improves your rendering score might simultaneously break your heading hierarchy, depressing the semantic structure signal. If you are looking only at the composite number, you miss the regression until it costs you retrieval quality at scale.

The rule: after every fix, the specific signal you targeted must have moved in the correct direction. If it has not, the fix is not validated regardless of what else changed.
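
The rule can be written down as a small check over per-signal deltas. This is a sketch under stated assumptions: higher scores are better, deltas are re-audit minus baseline, and the percentage weights are invented for illustration (the real weighting is not given here). The function names `validate_fix` and `composite_delta` are hypothetical.

```python
def validate_fix(deltas: dict, target: str) -> dict:
    """Apply the signal-level rule: the targeted signal must have improved."""
    if target not in deltas:
        raise KeyError(f"no delta recorded for signal {target!r}")
    return {
        "validated": deltas[target] > 0,
        # Any other signal that dropped is a regression worth investigating.
        "regressions": sorted(
            s for s, d in deltas.items() if s != target and d < 0
        ),
    }


def composite_delta(deltas: dict, weights_pct: dict) -> float:
    """Weighted change in the overall score, with weights given in percent."""
    return sum(weights_pct[s] * d for s, d in deltas.items()) / 100


# Invented weights that sum to 100; not the engine's actual weighting.
weights_pct = {
    "rendering": 20, "schema": 20, "token_efficiency": 20,
    "entity_clarity": 15, "crawl_access": 15, "semantic_structure": 10,
}

# A planned schema fix where token efficiency moved instead,
# and the heading hierarchy regressed along the way.
deltas = {
    "rendering": 0, "schema": 0, "token_efficiency": 45,
    "entity_clarity": 0, "crawl_access": 0, "semantic_structure": -10,
}

print(composite_delta(deltas, weights_pct))  # 8.0
print(validate_fix(deltas, target="schema"))
# {'validated': False, 'regressions': ['semantic_structure']}
```

The overall score rose by eight points, yet the schema fix is not validated and a regression is flagged. Judged by the composite alone, both facts would be invisible.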

4. The Cost of Skipping Validation

Unvalidated fixes compound quietly: every fix that is assumed to work but does not becomes a live problem that nobody is watching.

Consider a site with five critical AEO issues. The team implements all five fixes in a single sprint, sees the overall score rise by 22 points, and considers the project complete. Six months later, the site's AI citation rate has not improved materially. An audit reveals that three of the five fixes never took effect: a caching layer never served the updated robots.txt to crawlers, a JSON-LD block contained a syntax error that prevented it from validating, and a rendering fix worked on the homepage but was never applied to the product pages where the problem was originally detected.

All three failures would have been caught in real time by a validation re-audit immediately after each deployment. Instead, they compounded for six months while the team moved on to other priorities, believing the work was done.

Validation is not an extra step. It is the step that makes all the previous steps produce a result.

5. Applying the Loop at Scale

Single-URL validation is straightforward. Applying the loop across a large site introduces sequencing decisions.

Start with the pages most likely to generate AI citations in your category: your homepage, your primary product or service pages, and any high-traffic content that addresses informational queries in your space. These are the URLs an AI retrieval system will encounter first and evaluate most frequently.

Run the baseline audit across all target URLs before touching anything. This gives you a priority stack: the URLs with the lowest scores in the highest-impact signals get fixed first. Schema failures on your homepage outrank token efficiency issues on a low-traffic blog post.
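
One way to turn baseline audits into a priority stack is to score each (URL, signal) pair by how far the signal is from a perfect score, scaled by how much the page and the signal matter. The sketch below assumes scores on a 0 to 100 scale; the function name `priority_stack`, the weights, and the example scores are all hypothetical values you would set yourself.

```python
def priority_stack(audits: dict, page_weight: dict, signal_weight: dict) -> list:
    """Return (url, signal, priority) tuples, highest priority first."""
    rows = []
    for url, scores in audits.items():
        for signal, score in scores.items():
            deficit = 100 - score  # assumes a 0-100 scale
            priority = deficit * signal_weight[signal] * page_weight[url]
            rows.append((url, signal, priority))
    rows.sort(key=lambda row: row[2], reverse=True)
    return rows


# Hypothetical baseline scores for two URLs and two signals.
audits = {
    "https://example.com/": {"schema": 40, "token_efficiency": 80},
    "https://example.com/blog/old-post": {"schema": 70, "token_efficiency": 30},
}
page_weight = {"https://example.com/": 3, "https://example.com/blog/old-post": 1}
signal_weight = {"schema": 3, "token_efficiency": 1}

for url, signal, priority in priority_stack(audits, page_weight, signal_weight):
    print(priority, signal, url)
# 540 schema https://example.com/
# 90 schema https://example.com/blog/old-post
# 70 token_efficiency https://example.com/blog/old-post
# 60 token_efficiency https://example.com/
```

With these weights, the homepage schema failure lands at the top of the stack, well ahead of the blog post's token efficiency issue, even though the blog post has the lower raw score. Re-running the function after each re-audit keeps the stack current.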

After each fix and re-audit cycle, update your priority stack. Fixes in one signal category sometimes expose or correct adjacent signal issues — a rendering fix that now delivers clean HTML to crawlers may reveal schema markup that was previously unreachable and is now being evaluated for the first time. The stack is a living document, not a one-time triage.

6. Reference Sources

- Website AI Score Engine: Technical documentation of the six audit signals and how they are scored. https://websiteaiscore.com/blog/website-ai-score-audit-engine-explained

- Schema Markup Validator: Schema.org's tool for verifying JSON-LD and other structured data markup. https://validator.schema.org

- Google Search Central — Structured Data Testing: How structured data validation works and what common errors look like. https://developers.google.com/search/docs/appearance/structured-data

- Website AI Score Research: 1,500-site audit findings on implementation failure rates. https://websiteaiscore.com/blog/case-study-1500-websites-ai-readability-audit