The audit doesn't start when you press submit. It starts the moment our crawler requests your URL with a raw HTTP GET, strips every visual layer your browser would normally render, and begins reading your website the way a language model reads it — as a stream of tokens, structured signals, and semantic relationships.

Most website auditing tools measure what your site looks like. We measure what it means to a machine.

This is the complete technical breakdown of what happens inside the Website AI Score engine, why each signal was chosen, and how the 0-to-100 score maps to real-world AI citation probability.

1. The Audit Hypothesis: Websites Are Built for the Wrong Reader

When a user asks ChatGPT, Perplexity, or Gemini a question in 2026, the AI is not clicking a link and reading your page the way a human does. It is either working from training data that was ingested months ago, or it is running a live retrieval step where a RAG (Retrieval-Augmented Generation) pipeline fetches your content, splits it into chunks, embeds each chunk as a vector, and runs a similarity search between those vectors and the query.

In both cases, your website's ability to be cited depends on one thing: whether the raw signal your HTML produces is clean enough, structured enough, and semantically distinct enough to survive that pipeline intact.

Our audit engine is built to simulate exactly that process. Six independent "Audit Architects" each test a different layer of what we call AI Readability — the composite quality of a page as an input to an LLM pipeline, not as a visual experience.

The score is not a grade for your design. It is a diagnostic of your machine-readable infrastructure.

2. The Six Audit Architects

Each Architect tests a specific failure mode that our 1,500-site forensic study identified as a primary cause of AI invisibility. They run in parallel, and their weighted outputs sum to your final score.

Architect 1: Rendering Signal (The "Empty Shell" Test)

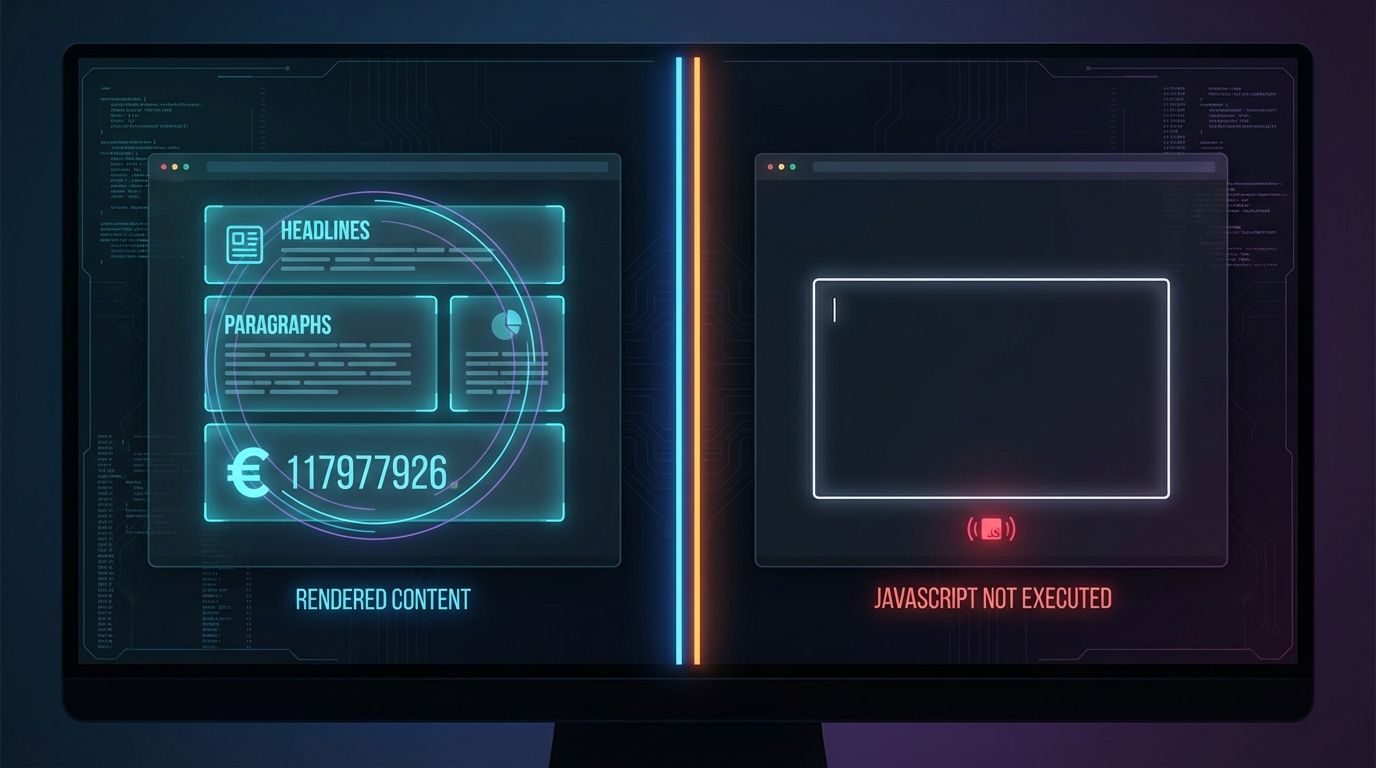

The first thing the engine checks is whether your HTML is rendered server-side or depends on client-side JavaScript hydration.

Modern JavaScript frameworks running in client-side rendering mode (Next.js in CSR mode, Vue, Angular, and similar) ship an "empty shell" in the initial HTTP response. The page looks complete in a browser because the browser executes the JavaScript and populates the DOM. An AI crawler that doesn't execute JavaScript receives a blank document.

Our engine makes two requests to your URL: one standard GET, and one that mimics the behavior of a bot that does not execute JavaScript. It then compares the token count of readable content between the two responses. A significant delta — specifically, core content like headings, body text, and pricing that only appears post-hydration — triggers a penalty.
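
As a rough illustration of the comparison described above, the sketch below measures how much readable text exists only after hydration. It is not the engine's actual implementation: the names `readable_word_count` and `hydration_gap` are hypothetical, a whitespace word count stands in for a token count, and obtaining the rendered HTML (for example from a headless browser) is assumed to happen elsewhere.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text that sits outside <script> and <style> elements."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.depth = 0
        self.words = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth == 0:
            self.words.extend(data.split())


def readable_word_count(html: str) -> int:
    """Word count of the readable text (a stand-in for a token count)."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return len(parser.words)


def hydration_gap(raw_html: str, rendered_html: str) -> float:
    """Share of readable words that exist only after JavaScript runs (0.0 to 1.0)."""
    raw = readable_word_count(raw_html)
    rendered = readable_word_count(rendered_html)
    if rendered == 0:
        return 0.0
    return max(0.0, (rendered - raw) / rendered)
```

A gap near 1.0 corresponds to the "empty shell" case, while a server-rendered page should produce a gap near 0.0. Where to draw the penalty threshold is a policy decision this sketch does not attempt to model.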

Why this matters for LLMs: Perplexity's real-time retrieval agents, Common Crawl's ingestion pipeline (which feeds GPT and Claude training data), and most RAG tooling operate below the 200ms response threshold. They do not wait for JavaScript to hydrate. If your content is locked behind hydration, it is structurally absent from most AI systems.

The fix is not to abandon your framework. It is to ensure Server-Side Rendering (SSR) or Static Site Generation (SSG) delivers the full semantic payload in the initial response.

Architect 2: Schema Validity (The Structured Data Audit)

Structured data is the closest thing to a direct API contract between your website and an AI system. When you implement Schema.org markup correctly, you are not asking the AI to infer what your page is about — you are telling it explicitly, in a machine-native language.

This Architect runs three layers of checks:

Presence check. Is any JSON-LD, Microdata, or RDFa present at all? In our 1,500-site study, 70% of pages had zero schema markup of any kind.

Type specificity check. Generic Organization or Article schema with only a name and URL provides minimal signal. The engine checks whether you are using specific, semantically rich types — TechArticle, Product, Service, FAQPage — and whether you are populating high-signal properties like knowsAbout, sameAs, mentions, and description.

Nesting integrity check. A common failure pattern we documented is "orphaned schema" — where a Product entity exists but its Offers, AggregateRating, or MerchantReturnPolicy child objects are either missing or disconnected. An orphaned entity tells the AI a product exists but cannot tell it the price, availability, or return terms. For AI buying agents, this is a complete disqualification.
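
The three checks above could be approximated along the following lines. This is a simplified sketch under stated assumptions, not the engine's code: it inspects JSON-LD only (no Microdata or RDFa), ignores `@graph` containers, treats a Product without an `offers` property as orphaned, and uses a hypothetical `GENERIC_TYPES` set to decide what counts as a generic type.

```python
import json
from html.parser import HTMLParser

# Assumed list of "generic" types; the real engine's list is not published.
GENERIC_TYPES = {"Organization", "Article", "WebPage", "Thing"}


class JsonLdExtractor(HTMLParser):
    """Collects parsed JSON-LD payloads from <script type="application/ld+json">."""

    def __init__(self):
        super().__init__()
        self.in_jsonld = False
        self.buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self.in_jsonld = True
            self.buffer = []

    def handle_data(self, data):
        if self.in_jsonld:
            self.buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self.in_jsonld:
            self.in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self.buffer)))
            except json.JSONDecodeError:
                pass  # malformed JSON-LD is treated as absent


def audit_schema(html: str) -> dict:
    """Run presence, type specificity, and a basic nesting check on JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    entities = []
    for block in parser.blocks:
        entities.extend(block if isinstance(block, list) else [block])
    entities = [e for e in entities if isinstance(e, dict)]
    types = {e.get("@type") for e in entities if isinstance(e.get("@type"), str)}
    orphaned = [
        e.get("name", "(unnamed)")
        for e in entities
        if e.get("@type") == "Product" and not e.get("offers")
    ]
    return {
        "present": bool(entities),
        "specific_types": sorted(types - GENERIC_TYPES),
        "orphaned_products": orphaned,
    }
```

A fuller check would also follow `aggregateRating` and return-policy children and resolve `@id` references between blocks, which this sketch leaves out for brevity.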

The GIST implication. Schema markup directly affects how Google's GIST (Greedy Independent Set Thresholding) algorithm evaluates your entity distinctiveness. Well-defined entities with unique sameAs associations reduce the risk of your content being classified as a near-duplicate of a larger authority and excluded from the AI's source selection.

Architect 3: Token Efficiency (The Signal-to-Noise Ratio)

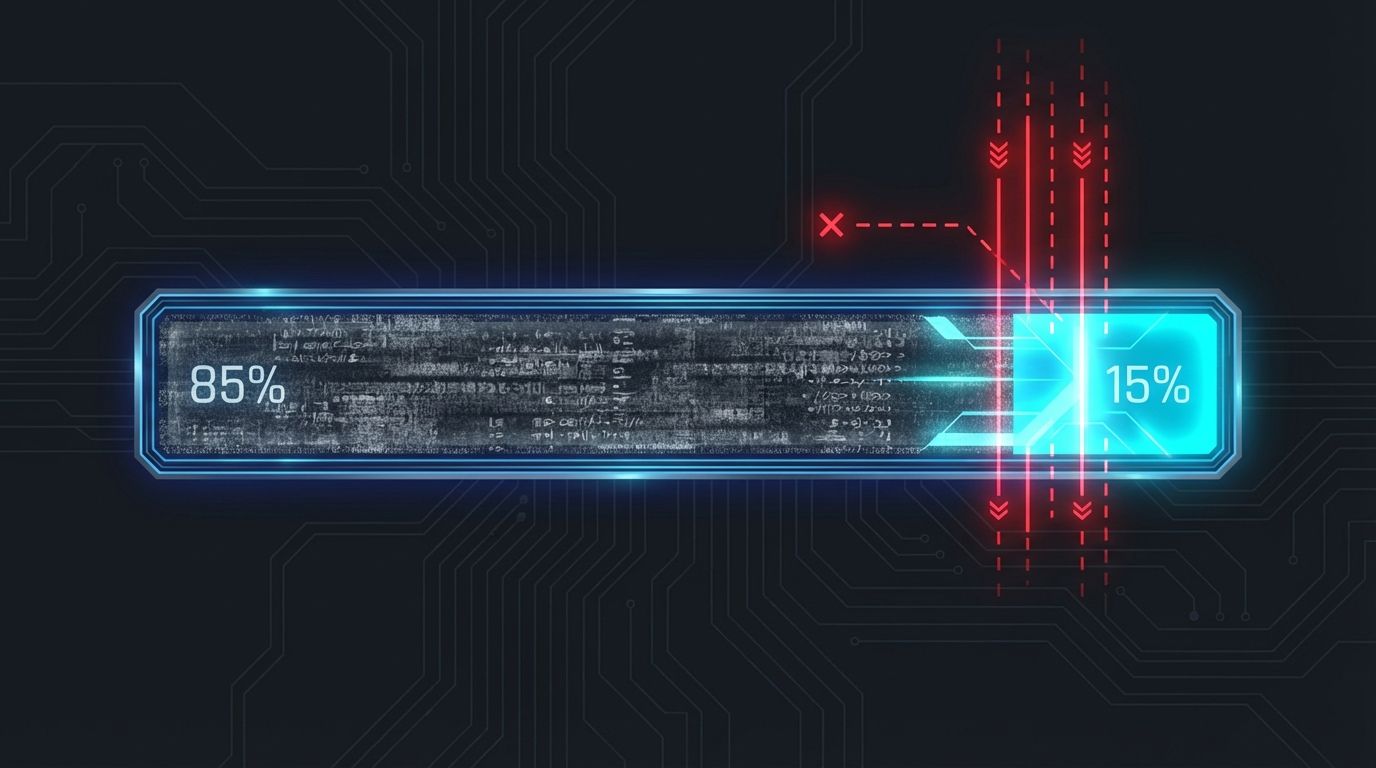

LLMs operate on token budgets. Every word, every HTML tag, every inline CSS class your page serves to a crawler costs tokens. If 80% of those tokens are structural noise — navigation elements, footer boilerplate, tracking script remnants, decorative CSS classes embedded in the DOM — the AI spends the majority of its budget before reaching your actual content.

This Architect calculates the semantic density ratio of your page: the proportion of tokens that carry extractable information versus tokens that carry structural or decorative overhead.

The calculation works like this:

- The raw HTML is stripped of <script>, <style>, <nav>, <footer>, and <header> tags.

- The remaining text content is tokenized using a BPE (Byte-Pair Encoding) approximation.

- That token count is divided by the total token count of the full raw HTML response.

- The ratio is compared against the benchmark distribution from our audit dataset.

A page that serves 150KB of HTML to display 500 words of content has a token efficiency ratio of roughly 0.03. Most RAG pipelines use chunking strategies that truncate pages after a fixed token window — typically 512 to 2,048 tokens. If your actual value proposition appears after that truncation point because of HTML overhead, it is never retrieved.
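
The ratio calculation described above can be sketched as follows. Note the substitution: instead of a real BPE tokenizer, the hypothetical `approx_tokens` helper counts words and punctuation marks, so absolute numbers will differ from any production tokenizer. The benchmark comparison step is omitted because the benchmark distribution is not published here.

```python
import re
from html.parser import HTMLParser

# Elements whose contents are excluded from the "content" token count.
STRIPPED = {"script", "style", "nav", "footer", "header"}
TOKEN_RE = re.compile(r"\w+|[^\w\s]")


def approx_tokens(text: str) -> int:
    """Crude stand-in for a BPE tokenizer: counts words and punctuation marks."""
    return len(TOKEN_RE.findall(text))


class ContentText(HTMLParser):
    """Collects text that is not nested inside any of the STRIPPED elements."""

    def __init__(self):
        super().__init__()
        self.skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in STRIPPED:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in STRIPPED and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        if self.skip_depth == 0:
            self.parts.append(data)


def token_efficiency(raw_html: str) -> float:
    """Content tokens divided by total tokens in the raw HTML response."""
    total = approx_tokens(raw_html)
    if total == 0:
        return 0.0
    parser = ContentText()
    parser.feed(raw_html)
    parser.close()
    content = approx_tokens(" ".join(parser.parts))
    return content / total
```

Because markup itself counts toward the denominator, even a lean page scores well below 1.0. The ratio is most useful for comparing pages against each other, not as an absolute number.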

The 100-Token Rule is the practical application of this signal: the first 100 tokens of your page's readable content (what an LLM ingests before any chunking boundary) must contain your entity name, your primary service or product category, and your primary differentiator. If that information appears only in the 800th token because the first 700 were navigation and boilerplate, you fail the retrieval test even if your content is excellent.

Architect 4: Entity Clarity (The Knowledge Graph Signal)

This is the most frequently misunderstood signal in AEO (Answer Engine Optimization). Entity clarity is not about whether you have written your company name correctly. It is about whether an LLM reading your page can unambiguously resolve who you are in a global knowledge graph.

A "String" is a piece of text: "Apple." An "Entity" is a node in a knowledge graph: Apple Inc., the technology company headquartered in Cupertino, California, with Wikidata identifier Q312.

If your website contains the word "Apple" in multiple contexts without disambiguation markup, an AI system has to guess which Apple you mean. In a RAG pipeline, that ambiguity can cause retrieval failures or, worse, hallucinated associations.

This Architect checks for:

- sameAs properties in your Organization schema that link to canonical authority nodes: Wikidata, LinkedIn, Crunchbase, Wikipedia.

- Author disambiguation. Are the authors of your content linked to verified Person entities? The citation_author meta tag, when properly implemented, carries your author's identity as a machine-readable signal rather than a byline string.

- "About This Result" verifiability. Google's Knowledge Panel status for your brand is a proxy for entity resolution strength. A brand that fails Google's entity verification test has a high probability of being ignored or misrepresented by LLMs.

The practical fix for most sites is implementing a single, well-structured Organization JSON-LD block in the site-wide <head>, with sameAs linking to at least three canonical external references.
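
To make the shape of that block concrete, here is a minimal sketch that builds an Organization JSON-LD tag with the three-reference minimum enforced. The function name `organization_jsonld` is hypothetical, and the company name and URLs in the usage example are placeholders, not real profiles.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Return a <script> tag holding an Organization JSON-LD block."""
    if len(same_as) < 3:
        raise ValueError("expected at least three canonical sameAs references")
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    # Placeholder identity and profile URLs, for illustration only.
    print(
        organization_jsonld(
            "Example Co",
            "https://example.com",
            [
                "https://www.wikidata.org/wiki/Q_PLACEHOLDER",
                "https://www.linkedin.com/company/example-co",
                "https://www.crunchbase.com/organization/example-co",
            ],
        )
    )
```

Generating the block from one function keeps the site-wide markup consistent. Richer properties such as `description` or `knowsAbout` could be added to the dictionary in the same way.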

Architect 5: Crawl Access (The Front Door Audit)

Your robots.txt file is the access policy for the AI economy. Get it wrong, and it does not matter how optimized everything else is — you have locked the door.

This Architect parses your robots.txt and checks for three specific conditions:

Unintentional blanket blocks. A User-agent: * / Disallow: / rule, often left over from a staging environment, blocks every bot including GPTBot, PerplexityBot, and ClaudeBot. In our study, 30% of audited sites had active blocks of this type. None of them had made a strategic decision to exclude AI crawlers. The blocks were accidental.

Selective blocking accuracy. There is a legitimate strategic distinction between training bots (CCBot, which feeds Common Crawl and, by extension, most LLM training datasets) and the crawlers AI vendors operate under their own user agents (GPTBot for OpenAI, PerplexityBot for Perplexity's real-time mode, ClaudeBot for Anthropic's indexing). A site might choose to block training ingestion while permitting real-time retrieval. This Architect validates whether your current robots.txt configuration achieves your actual intent.
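
The first two conditions can be checked with Python's standard-library robots.txt parser. The sketch below is illustrative only: the `bot_access` helper and the `AI_BOTS` list are assumptions made for this example, and the parser's matching rules may differ in edge cases from how individual crawlers interpret the file.

```python
from urllib.robotparser import RobotFileParser

# User agents named in this article; extend as needed.
AI_BOTS = ["GPTBot", "PerplexityBot", "ClaudeBot", "CCBot"]


def bot_access(robots_txt: str, site_root: str = "https://example.com/") -> dict:
    """Map each AI user agent to whether it may fetch the site root."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, site_root) for bot in AI_BOTS}


if __name__ == "__main__":
    staging_leftover = "User-agent: *\nDisallow: /\n"
    selective = "User-agent: CCBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    print(bot_access(staging_leftover))  # every bot is blocked
    print(bot_access(selective))         # only CCBot is blocked
```

Comparing the returned map against the access policy you actually intend is the core of the selective blocking check.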

llms.txt presence. The llms.txt standard (a markdown-formatted file at the root of your domain that provides AI agents with a curated map of your most valuable content) is the new sitemap.xml for the AI web. Adoption in our study was 0.2%: 3 sites out of 1,500. This represents a significant first-mover advantage for any site that implements it correctly. The Architect checks for its presence and validates its structure.

Architect 6: Semantic Structure (The HTML Hierarchy Audit)

This is the oldest signal on the list and still among the most violated. Semantic HTML is the document outline that LLMs use to understand the logical hierarchy of your content — which claims belong to which topics, how sections relate to each other, and where the primary thesis lives relative to supporting evidence.

The specific failure modes this Architect detects:

Header hierarchy abuse. Developers routinely use <h4> and <h5> tags for small decorative text because those tags render at a small font size. When a heading hierarchy jumps from <h1> to <h4>, it breaks the semantic document outline. An LLM using header tags to chunk your content will misassign supporting points to the wrong parent claims.

Chunking boundary misalignment. Fixed-size chunking strategies (splitting every 512 tokens regardless of semantic boundaries) are the default in most RAG pipelines. When your HTML hierarchy is broken, the chunking algorithm has no structural signal to use even if it is configured to respect semantic boundaries. Well-formed heading hierarchies give chunking systems a fallback boundary mechanism.

<details> and <summary> optimization. These native HTML elements, when properly used for Q&A patterns, are recognized by several AI retrieval systems as high-confidence answer candidates. Their structure explicitly signals: "This is a question. Here is its answer." Correct use increases retrieval recall for conversational query formats.
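
The header hierarchy check described above lends itself to a short sketch. The version below flags any heading that is more than one level deeper than the heading before it. The name `heading_jumps` is hypothetical, and the one-level rule is an assumption about what counts as a broken outline.

```python
import re
from html.parser import HTMLParser

HEADING_RE = re.compile(r"h([1-6])")


class HeadingLevels(HTMLParser):
    """Records heading levels (1 to 6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        match = HEADING_RE.fullmatch(tag)
        if match:
            self.levels.append(int(match.group(1)))


def heading_jumps(html: str) -> list[tuple[int, int]]:
    """Return (previous_level, current_level) pairs that skip a level downward."""
    parser = HeadingLevels()
    parser.feed(html)
    parser.close()
    jumps = []
    for prev, cur in zip(parser.levels, parser.levels[1:]):
        if cur > prev + 1:
            jumps.append((prev, cur))
    return jumps


if __name__ == "__main__":
    sample = "<h1>Pricing</h1><h4>Fine print</h4><h2>Plans</h2><h3>Pro</h3>"
    print(heading_jumps(sample))  # [(1, 4)]
```

Moving back up the outline, for example from an h3 to an h2, is not flagged, since closing a subsection is a normal part of a well-formed hierarchy.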

3. The Score: What 0 to 100 Actually Measures

The six Architects produce weighted sub-scores. Schema Validity and Token Efficiency carry the heaviest weights because they have the highest variance in our dataset and the most direct impact on retrieval quality. The final score is a composite:

| Score Range | Classification | Practical Meaning |
|---|---|---|
| 0 to 40 | Invisible | AI systems cannot reliably parse or retrieve your content. Citation probability is near zero. |
| 41 to 70 | Readable | AI can extract basic information but lacks the structured signals to use your site as a primary source. |
| 71 to 100 | Optimized | Your content is structured for reliable retrieval, entity resolution, and AI citation. |

A score of 71 or above does not guarantee citation — the quality of your actual content and its semantic distinctiveness relative to competitors remain critical. What the score does guarantee is that structural barriers to citation have been removed.
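
For readers who want to see the arithmetic, the sketch below shows one way a weighted composite and the classification bands could fit together. The weights are invented for illustration: the article states only that Schema Validity and Token Efficiency weigh the most, so every number in `WEIGHTS` is an assumption, as are the function names.

```python
# Hypothetical weights (percent). Only their ordering reflects the article:
# schema and token efficiency carry the most weight.
WEIGHTS = {
    "rendering": 15,
    "schema": 25,
    "token_efficiency": 25,
    "entity_clarity": 15,
    "crawl_access": 10,
    "semantic_structure": 10,
}


def composite_score(sub_scores: dict[str, float]) -> int:
    """Weighted average of six sub-scores, each on a 0 to 100 scale."""
    total = sum(WEIGHTS[name] * sub_scores[name] for name in WEIGHTS)
    return round(total / sum(WEIGHTS.values()))


def classify(score: int) -> str:
    """Map a 0 to 100 score onto the bands from the table above."""
    if score <= 40:
        return "Invisible"
    if score <= 70:
        return "Readable"
    return "Optimized"
```

With these assumed weights, a site that scores well on rendering and crawl access but has no schema markup would still lose a quarter of the available points. This matches the article's emphasis on structured data.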

4. The Validation Loop: Confirming Fixes Actually Work

The most important feature of the platform is not the initial audit. It is the re-audit.

SEO has always had a verification problem: you implement a change, you wait three months, and you hope traffic moves in the right direction. The causal link between the change and the outcome is almost impossible to isolate.

Our validation loop is deterministic. When you implement a fix — adding schema markup, enabling SSR, deploying an llms.txt file — you submit your URL for re-audit. The engine runs the same six Architects against the same signals. The score delta is the direct measurement of whether your fix worked. There is no waiting period, no confounding variables, no ambiguity.

This is the core product loop: Audit. Identify. Fix. Validate.

Each credit in your account represents one complete run of all six Architects against one URL. Re-auditing a URL after implementing fixes costs one additional credit, which is why the fix-validate cycle is central to how credits are consumed in active optimization workflows.

5. What the Engine Does Not Measure

Being precise about the scope of the audit matters. The Website AI Score engine measures structural AI readability. It does not measure:

Content quality. Whether your content is accurate, authoritative, or more useful than a competitor's is outside the scope of automated structural analysis. A site can score 95 and still fail to be cited because its content adds no unique information value. GIST compliance — ensuring your content is semantically distinct from existing sources — requires content strategy, not just structural fixes.

Training data inclusion. Whether your site has already been ingested by GPT-4, Claude, or Gemini's training datasets is a function of historical crawl access and timing. The audit measures your current structural readiness for future crawls and retrieval events.

Link authority. Domain authority, backlink profiles, and PageRank signals are not AEO signals. They remain relevant for traditional SERP rankings but do not directly influence whether an AI retrieval pipeline selects your content as a source.

6. Reference Sources

- Schema.org Technical Documentation: Organization, Product, and TechArticle type specifications. https://schema.org

- Common Crawl Foundation: Crawl data specifications and bot documentation. https://commoncrawl.org

- OpenAI GPTBot Documentation: Official disclosure of GPTBot user-agent and robots.txt guidance. https://platform.openai.com/docs/gptbot

- llms.txt Proposal: Jeremy Howard's specification for the llms.txt standard. https://llmstxt.org

- Website AI Score Research: 1,500-site forensic audit data. https://websiteaiscore.com/blog/case-study-1500-websites-ai-readability-audit

- GIST Algorithm Research: Reverse-engineering Google's Max-Min Diversity protocol. https://websiteaiscore.com/blog/gist-algorithm-explained