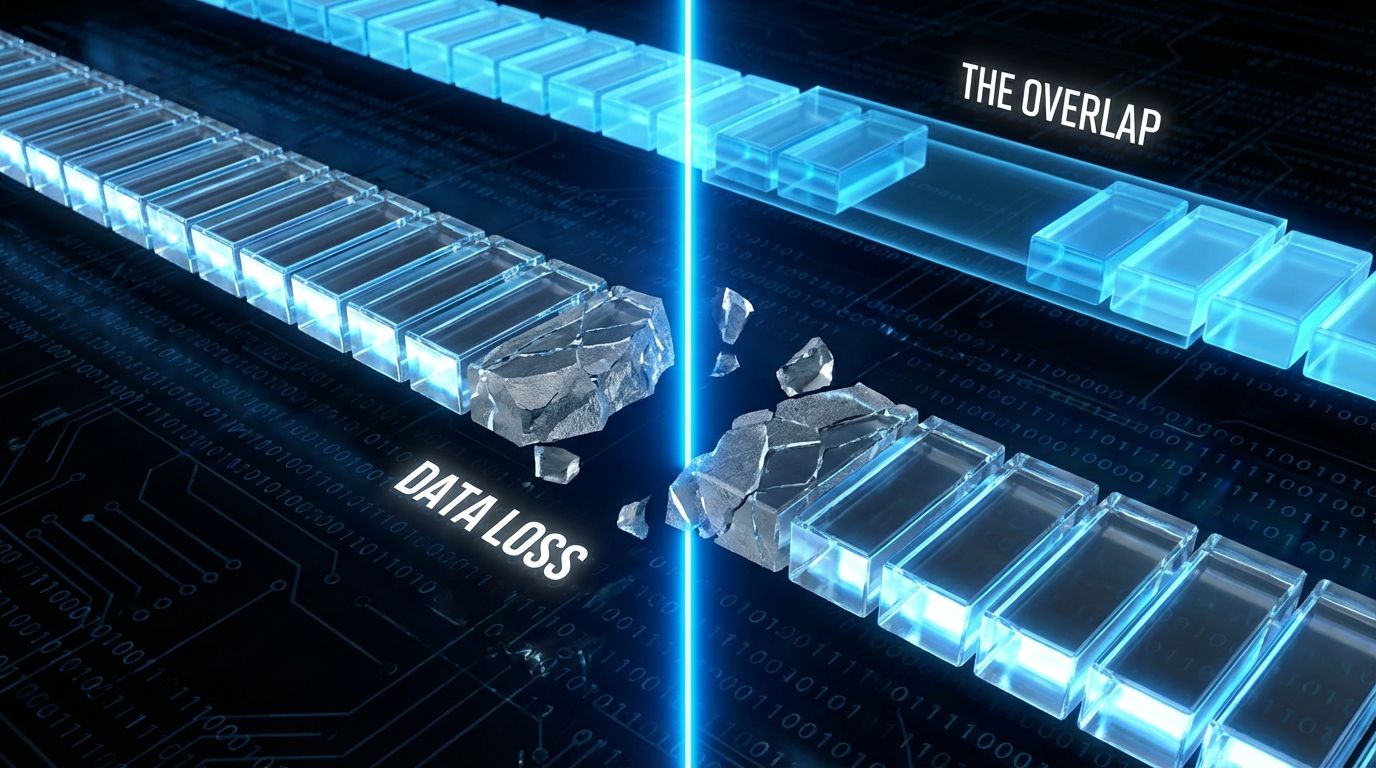

The integrity of a retrieval system is decided by where the knife falls. RAG ingestion slices documents into vector-ready segments, and the industry default of fixed-size splitting is mechanically efficient but semantically destructive: it severs the subject from the predicate or the question from the answer, creating Orphaned Vectors that produce hallucination. The fix is Sliding Window Chunking with a 10% to 20% overlap plus structure-aware segmentation, so semantic context bridges the gap between cuts and prevents the Boundary Miss, where the most relevant answer is split across two invisible partitions.

1. The Boundary Miss Effect

Fixed slicing creates hallucinations because the cut lands mid-thought. A query's answer that straddles the boundary between chunk N and chunk N+1 is never retrieved whole; each half embeds as a fragment too weak to match. Sliding the window so consecutive chunks share an overlap region keeps the bridging context intact, which is the same first-100-tokens discipline argued in the RAG inverse pyramid.
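The Boundary Miss can be reproduced in a few lines. The sketch below is a minimal illustration, not a production splitter: the function name `sliding_window` is hypothetical, and whitespace-separated words stand in for real tokenizer output, which is an assumption made to keep the example dependency-free.

```python
from typing import List, Sequence


def sliding_window(tokens: Sequence[str], chunk_size: int, overlap: int) -> List[List[str]]:
    """Split tokens into chunks of chunk_size whose neighbours share `overlap` tokens."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")
    stride = chunk_size - overlap  # how far the window advances each step
    chunks = []
    for start in range(0, len(tokens), stride):
        chunks.append(list(tokens[start:start + chunk_size]))
        if start + chunk_size >= len(tokens):
            break  # this window already reached the end of the input
    return chunks


# Whitespace "tokens" are a stand-in for a real tokenizer (assumption).
text = "Store the reagent below five degrees because it degrades quickly when warm"
tokens = text.split()

fixed = sliding_window(tokens, chunk_size=6, overlap=0)
overlapped = sliding_window(tokens, chunk_size=6, overlap=2)
```

With zero overlap, the cut falls between "degrees" and "because", so the instruction and its reason land in different chunks. With a two-token overlap, the second chunk starts at "five degrees" and carries the bridging phrase whole.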

2. The HNSW Sinkhole

Most RAG tutorials use a standard RecursiveCharacterTextSplitter at 512 tokens with zero overlap, assuming natural separators like \n\n preserve meaning. That fails at web scale. Modern pages are plagued by Vector Space Pollution from massive HTML footers and navigation menus, dense clusters of "Privacy," "Contact," and "Terms" that are identical across thousands of pages. Ingested, they create Semantic Sinkholes in the HNSW index, so a query for "privacy policy" pulls 50 generic footers instead of the actual legal document, clogging the context window with noise. The pivot is to shift from syntax-based splitting to DOM-aware ingestion: split by structural tags like <article> and <section>, and actively exorcise boilerplate before vectorization.

3. Token Mathematics: The Overlap Tax

Vector database engineers work in tokens, not words. The ratio is roughly 1 token to 0.75 words, so a 512-token chunk holds about 384 words, and you should never target 512 exactly; tokenizer variance demands a buffer, so target 480. Implementing a sliding window imposes a storage penalty: a 10% overlap on a 512-token chunk duplicates 51 tokens.
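The arithmetic above can be checked directly. This is a rough sketch under stated assumptions: the helper names are hypothetical, the 0.75 words-per-token ratio is the approximation quoted in the text, and the 100,000-token corpus is an invented figure used only to show how overlap changes the chunk count.

```python
import math


def words_per_chunk(chunk_tokens: int, words_per_token: float = 0.75) -> int:
    """Approximate word capacity of a chunk, using the ~0.75 ratio from the text."""
    return round(chunk_tokens * words_per_token)


def overlap_tokens(chunk_tokens: int, overlap_ratio: float) -> int:
    """Tokens duplicated into the next chunk, rounded down to a whole token."""
    return int(chunk_tokens * overlap_ratio)


def chunk_count(doc_tokens: int, chunk_tokens: int, overlap: int) -> int:
    """Number of sliding-window chunks needed to cover doc_tokens."""
    if doc_tokens <= chunk_tokens:
        return 1
    stride = chunk_tokens - overlap
    return 1 + math.ceil((doc_tokens - chunk_tokens) / stride)


# Hypothetical 100,000-token corpus, chunked at the 480-token target.
baseline = chunk_count(100_000, 480, overlap=0)
with_overlap = chunk_count(100_000, 480, overlap=overlap_tokens(480, 0.10))
growth = with_overlap / baseline - 1  # fractional increase in stored vectors
```

Note that the index grows slightly more than the nominal overlap ratio, because the window advances by the stride (chunk size minus overlap) rather than by the full chunk size.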

This Overlap Tax grows your vector index by roughly 10% to 20%, but Chroma research reports that it raises dense-retrieval precision by about 14.5%. The cost of storage is negligible against the cost of retrieval failure, provided the sinkholes are stripped out before the sliding window is applied.
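A structure-aware splitter along these lines might look like the sketch below. It is one possible shape, not a reference implementation: it uses only Python's standard `html.parser`, the names `ContentExtractor`, `window`, and `split_html` are hypothetical, words stand in for tokens, and it filters by tag name only, so class-based targets such as `.legal-text` would need extra attribute checks or a CSS-selector library.

```python
from html.parser import HTMLParser
from typing import List

SKIP_TAGS = {"footer", "nav", "script", "style"}  # boilerplate zones (assumed list)
BLOCK_TAGS = {"article", "section"}               # structural split points


class ContentExtractor(HTMLParser):
    """Collect words per <article>/<section>, ignoring boilerplate tags."""

    def __init__(self) -> None:
        super().__init__()
        self.skip_depth = 0                 # > 0 while inside a skipped tag
        self.blocks: List[List[str]] = []   # one word list per structural block
        self.open_blocks: List[int] = []    # stack of indexes into self.blocks

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self.skip_depth += 1
        elif tag in BLOCK_TAGS and self.skip_depth == 0:
            self.blocks.append([])
            self.open_blocks.append(len(self.blocks) - 1)

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS:
            self.skip_depth = max(0, self.skip_depth - 1)
        elif tag in BLOCK_TAGS and self.skip_depth == 0 and self.open_blocks:
            self.open_blocks.pop()

    def handle_data(self, data):
        text = data.strip()
        if text and self.skip_depth == 0 and self.open_blocks:
            # Text belongs to the innermost block that is currently open.
            self.blocks[self.open_blocks[-1]].extend(text.split())


def window(words: List[str], size: int, overlap: int) -> List[str]:
    """Sliding window over words; neighbouring chunks share `overlap` words."""
    stride = size - overlap
    out = []
    for start in range(0, len(words), stride):
        out.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break
    return out


def split_html(html: str, size: int = 480, overlap: int = 48) -> List[str]:
    """Strip boilerplate, then window each structural block separately."""
    parser = ContentExtractor()
    parser.feed(html)
    parser.close()
    chunks: List[str] = []
    for words in parser.blocks:
        chunks.extend(window(words, size, overlap))
    return chunks


page = """
<nav>Home Contact Privacy Terms</nav>
<article>
  <section>Store the reagent below five degrees because it degrades when warm</section>
</article>
<footer>Privacy Contact Terms</footer>
"""
chunks = split_html(page, size=6, overlap=2)
```

Windowing each block separately means a chunk never spans two sections, and the navigation and footer text never reaches the embedding step at all.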

4. Semantic Hoisting: Solving Context Amnesia

Standard chunking suffers from Context Amnesia. Split a long section under <h2>Safety Protocols</h2> and the second chunk might contain "Wear goggles" but lose the header, so the embedding for "Wear goggles" floats in the semantic void, disconnected from the safety topic. The fix is Header Hoisting: when splitting, identify the parent H1 or H2 and inject it into the metadata or prepended text of every child chunk, so even an isolated fragment carries the full semantic weight of its parent topic. This is the same distinctiveness principle as the Vector Exclusion Zone, ensuring every node carries a strong, unambiguous signal.
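Header Hoisting can be sketched as follows. The function name `hoist_headers` and the record layout are hypothetical, and the regex-based parsing is a deliberate simplification that assumes attribute-free `<h1>`/`<h2>` tags; real pages would call for a proper HTML parser. Overlap is omitted here to keep the focus on the hoisting step.

```python
import re
from typing import Dict, List

# Matches attribute-free <h1>/<h2> headers (a simplifying assumption).
HEADER_RE = re.compile(r"<h([12])>(.*?)</h\1>", re.IGNORECASE | re.DOTALL)
TAG_RE = re.compile(r"<[^>]+>")


def hoist_headers(html: str, chunk_words: int = 50) -> List[Dict[str, str]]:
    """Chunk the text under each H1/H2 and attach the header to every chunk."""
    # re.split with two groups yields:
    # [preamble, level, title, body, level, title, body, ...]
    parts = HEADER_RE.split(html)
    records = []
    for i in range(1, len(parts), 3):
        title = TAG_RE.sub("", parts[i + 1]).strip()
        words = TAG_RE.sub(" ", parts[i + 2]).split()
        for start in range(0, len(words), chunk_words):
            body = " ".join(words[start:start + chunk_words])
            records.append({
                "header": title,              # kept as metadata
                "text": f"{title}: {body}",   # prepended to the embedded text
            })
    return records


html = "<h2>Safety Protocols</h2><p>Read the manual first.</p><p>Wear goggles.</p>"
records = hoist_headers(html, chunk_words=4)
```

Here the second chunk embeds as "Safety Protocols: Wear goggles." rather than as a bare fragment, so it stays anchored to the safety topic even when retrieved on its own.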

5. Engineering Protocol: DOM-Aware Sliding Windows

Step 1, footer excision: before any splitting, apply CSS selectors to strip <footer>, <nav>, and .legal-text, the high-density, low-value zones that confuse HNSW.

Step 2, the calculation: size chunks to the embedding model. For instance, text-embedding-3-small accepts up to 8191 input tokens, and a reasonable starting configuration is a 1024-token chunk with a 128-token overlap (12.5%).

Step 3, the sliding window: configure the splitter with that overlap. The stride is 1024 - 128 = 896 tokens, so a sentence cut at token 1024 survives intact in the next chunk, which starts at token 896.

Step 4, agentic refinement (enterprise tier): for high-value queries, as outlined in the 2026 inference roadmap, replace rule-based splitters with Agentic Chunking, using a cheap model like GPT-4o-mini to read the text and insert <BREAK> tokens at logical conclusions. It is roughly 10x more expensive, but it can yield near-perfect retrieval precision.

The whole pipeline is an application of the token tax principle: spend tokens where they buy retrieval, never where they buy noise.
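For the agentic step, the model call itself depends on the provider and is left out here. What can be sketched is the post-processing: splitting the annotated text on the `<BREAK>` marker and checking that the model only inserted markers without rewriting the content. The function names `split_on_breaks` and `only_markers_added` are hypothetical, and the annotated string below is hand-written sample data, not real model output.

```python
from typing import List

BREAK_MARKER = "<BREAK>"  # marker string named in the protocol above


def split_on_breaks(annotated: str, marker: str = BREAK_MARKER) -> List[str]:
    """Split model-annotated text into chunks, dropping empty segments."""
    return [seg.strip() for seg in annotated.split(marker) if seg.strip()]


def only_markers_added(original: str, annotated: str, marker: str = BREAK_MARKER) -> bool:
    """True if the annotated text equals the original once markers and whitespace are ignored."""
    def normalize(s: str) -> str:
        return "".join(s.split())
    return normalize(annotated.replace(marker, "")) == normalize(original)


original = "Wear goggles in the lab. Store reagents below five degrees."
# Hand-written stand-in for what an annotating model might return (assumption).
annotated = "Wear goggles in the lab. <BREAK> Store reagents below five degrees.<BREAK>"

if only_markers_added(original, annotated):
    agentic_chunks = split_on_breaks(annotated)
else:
    agentic_chunks = [original]  # fall back rather than index rewritten text
```

The guard matters because a generative model can paraphrase or drop text while annotating; falling back to the untouched original (or to a rule-based splitter) keeps altered content out of the index.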

The contrarian point that should change how you write, not just how you ingest: the chunk boundary is an authoring problem, not only an engineering one. Engineers tune overlap and splitters downstream, but the writer who front-loads the complete answer into a single self-contained paragraph, with its own header context restated, has already won retrieval before the splitter runs. You cannot fully fix at ingestion what was written to sprawl across boundaries; the most retrievable content is composed in atomic, header-anchored blocks from the first draft.

6. Reference Sources

- Chroma Research (2024). Strategies for Effective Document Chunking in RAG. ChromaDB Docs

- OpenAI API Documentation (2025). Embeddings and Token Limits. OpenAI Platform

- Website AI Score Strategy (2026). The 2026 Roadmap: From Search to Inference.

- Website AI Score Research (2026). Optimizing for GIST: Semantic Distance & Vector Exclusion Zones.