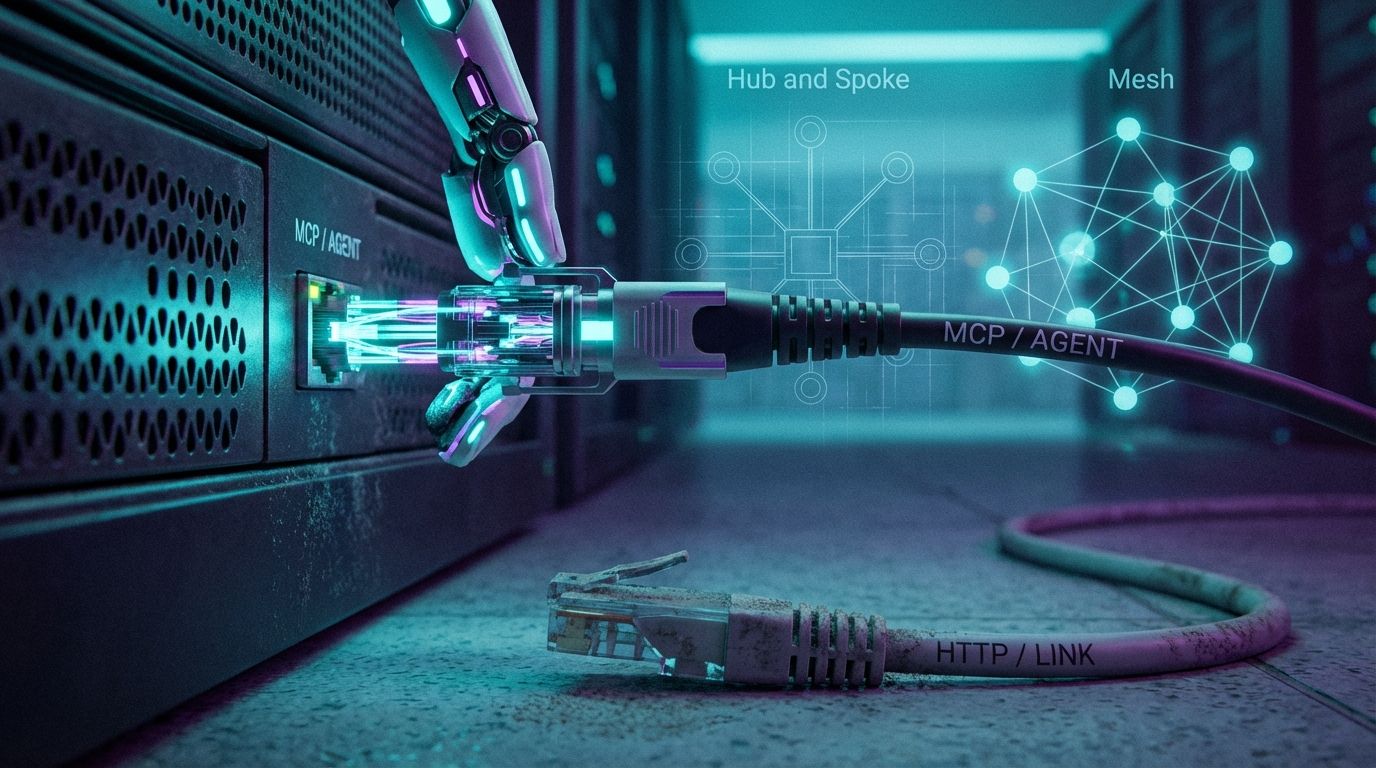

The web's "Hub-and-Spoke" architecture, where search engines route traffic to your site, has collapsed into a Terminal Architecture. In the Inference Economy, the user doesn't visit your site to find an answer; the LLM visits your vector embedding, synthesizes the answer, and serves it directly. The primary metric shifts from Click-Through Rate to Share of Model Voice. Surviving this means a dual pivot: replacing robots.txt with ai.txt to govern training rights vs inference rights, and deploying Model Context Protocol (MCP) servers as "USB-C ports" for AI so agents can plug into your data as an executable tool rather than reading it as static text.

1. The Consensus Trap: The Volume-Value Decoupling

The industry consensus is to "optimize for AI Overviews" by producing comprehensive long-form content to win the snapshot above the fold. That optimizes for a structurally decaying metric. Google retains roughly 90% of search volume, but the zero-click rate on mobile has hit 77% and AI Overviews have cut organic CTR from 15% to 8%. You're fighting for visibility in an ecosystem designed to starve you of traffic, and the Google traffic that remains is increasingly low-intent (navigation, education), converting at just 2.8%. The pivot: stop optimizing for volume and start optimizing for inference utility. Late-2025 data shows referrals from answer engines (Perplexity, ChatGPT) convert at 14.2% because those users are pre-qualified by the model's synthesis. The goal isn't to rank #1 in a directory; it's to be the primary citation in a generated answer, which is exactly what Share of Model Voice measures.
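To make the decoupling concrete, you can run the two conversion rates quoted above against each other. This is a back-of-the-envelope sketch: the rates come from the text, while the 10,000-visit figure and the function name are hypothetical and used only for illustration.

```python
import math

# Conversion rates quoted in the text above.
SEARCH_CONVERSION = 0.028         # traditional Google search traffic
ANSWER_ENGINE_CONVERSION = 0.142  # referrals from answer engines

def breakeven_referrals(search_visits: int) -> int:
    """Answer-engine referrals needed to match the conversions
    produced by `search_visits` ordinary search visits."""
    conversions = search_visits * SEARCH_CONVERSION
    return math.ceil(conversions / ANSWER_ENGINE_CONVERSION)

# Hypothetical traffic volume, for illustration only.
visits = 10_000
print(breakeven_referrals(visits))  # 1972
```

Under these rates, roughly one answer-engine referral does the work of five search visits, which is why a drop in raw volume does not have to mean a drop in revenue.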

2. The Protocol Gap: From Documents to Agents

The Great Decoupling demands a new infrastructure stack. We're moving from a web of Documents (HTML) to a web of Agents (JSON-RPC). By 2026, an estimated 40% of enterprise applications will embed task-specific AI agents, and these agents don't read blogs, they negotiate with tools. If your site doesn't speak Agent-to-Agent (A2A) protocols, you're invisible to the automated economy. The robots.txt file is insufficient because it treats all bots as crawlers; you must distinguish "Vampires" (model trainers absorbing your IP into weights for free) from "Drivers" (RAG agents that cite you), governing each through an ai.txt policy. This extends the directives covered in the llms.txt guide.
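The Vampire/Driver distinction can be sketched as a small classifier. Because ai.txt has no single settled syntax, this example keeps the policy in a Python dict rather than inventing file directives. The user-agent tokens are ones AI vendors have published, but the role assigned to each is an assumption you should verify against the vendor's current crawler documentation; the policy values are hypothetical.

```python
from enum import Enum

class BotRole(Enum):
    TRAINER = "trainer"      # "Vampires": crawl to build model weights
    RETRIEVER = "retriever"  # "Drivers": fetch pages to answer and cite
    UNKNOWN = "unknown"

# Assumed mapping from user-agent substrings to roles.
# Verify against each vendor's documentation before relying on it.
KNOWN_BOTS = {
    "GPTBot": BotRole.TRAINER,
    "CCBot": BotRole.TRAINER,
    "ChatGPT-User": BotRole.RETRIEVER,
    "OAI-SearchBot": BotRole.RETRIEVER,
    "Perplexity-User": BotRole.RETRIEVER,
}

# Hypothetical site policy: which roles may fetch content.
POLICY = {
    BotRole.TRAINER: False,
    BotRole.RETRIEVER: True,
    BotRole.UNKNOWN: True,
}

def classify(user_agent: str) -> BotRole:
    """Return the role of the first known token found in the user agent."""
    ua = user_agent.lower()
    for token, role in KNOWN_BOTS.items():
        if token.lower() in ua:
            return role
    return BotRole.UNKNOWN

def is_allowed(user_agent: str) -> bool:
    """Apply the site policy to a raw User-Agent header value."""
    return POLICY[classify(user_agent)]

print(is_allowed("Mozilla/5.0 (compatible; GPTBot/1.0)"))  # False
print(is_allowed("Mozilla/5.0 (compatible; ChatGPT-User/1.0)"))  # True
```

User-agent strings are self-reported, so a check like this expresses intent rather than enforcing it; pairing it with published IP ranges or reverse DNS is a reasonable next step.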

3. Information Gain: Identity for Agents

Most AEO strategies focus on text optimization. The missing vector is Agent Identity. In the A2A mesh, a "Manager Agent" (the orchestrator) can't hire your "Seller Agent" (your site) if it can't verify your capabilities, so you publish an Agent Card at /.well-known/agent-card.json. This file is a digital business card broadcasting your skills (check stock, book meeting) and pricing to the collaboration mesh. As the GIST report argues, algorithms favor unique signals, and an Agent Card moves you off the crowded content vector (where everyone competes) onto the sparse utility vector (where you're the only executable tool).
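Here is a minimal sketch of what such a card might contain, generated from Python so it can be written to /.well-known/agent-card.json. The field names are an assumption modeled on the general shape of A2A Agent Cards and should be checked against the current specification. The store name, URL, and skill descriptions are hypothetical. The text mentions pricing, but this sketch leaves it out rather than guess at a field name.

```python
import json

def build_agent_card(name, base_url, skills):
    """Build an Agent Card dict. Field names are assumed, not authoritative."""
    return {
        "name": name,
        "description": f"Agent endpoint for {name}",
        "url": base_url,
        "version": "1.0.0",
        "capabilities": {"streaming": False},
        "defaultInputModes": ["text/plain"],
        "defaultOutputModes": ["application/json"],
        "skills": [
            {"id": skill_id, "name": label, "description": description}
            for skill_id, label, description in skills
        ],
    }

# Hypothetical business and skills, echoing the examples in the text.
card = build_agent_card(
    "Example Store",
    "https://example.com/a2a",
    [
        ("check-stock", "Check stock", "Report units available for a SKU."),
        ("book-meeting", "Book meeting", "Reserve a slot with the sales team."),
    ],
)

# Serialize for publishing at /.well-known/agent-card.json.
print(json.dumps(card, indent=2))
```

Keeping the skill list short and concrete matters more than completeness here: each entry is a capability an orchestrator can match against a task.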

4. Implementation Protocol

Step 1, the zero-click content audit: identify your "loss leaders" (high-traffic informational pages such as definitions and basic how-tos), assume that traffic drops 50%, and rework them to include atomic fact blocks and explicit temporal-validity markers (see escaping training stasis) so RAG agents have to cite them.

Step 2, deploy the Agent Card (A2A) described in section 3 to establish identity in the mesh.

Step 3, launch an MCP server: don't just offer an API docs page; wrap your core logic in a Model Context Protocol server so tools like Claude Desktop can "install" your brand as a plugin.
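To show what "executable tool" means in Step 3, the sketch below answers two JSON-RPC requests shaped like MCP's tools/list and tools/call. It is not a compliant MCP server: a real deployment would use an official MCP SDK and handle initialization and transport. The message shapes are an assumption based on the MCP specification, and check_stock and the inventory data are hypothetical.

```python
import json

# Hypothetical inventory standing in for your core business logic.
INVENTORY = {"sku-123": 7, "sku-456": 0}

# Tool description an agent can discover before calling it.
TOOLS = [{
    "name": "check_stock",
    "description": "Return units in stock for a SKU.",
    "inputSchema": {
        "type": "object",
        "properties": {"sku": {"type": "string"}},
        "required": ["sku"],
    },
}]

def _error(req_id, code, message):
    """Build a JSON-RPC 2.0 error response."""
    return {"jsonrpc": "2.0", "id": req_id,
            "error": {"code": code, "message": message}}

def handle(request: dict) -> dict:
    """Answer one JSON-RPC 2.0 request with a result or an error."""
    req_id = request.get("id")
    method = request.get("method")
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        params = request.get("params", {})
        if params.get("name") != "check_stock":
            return _error(req_id, -32602, "Unknown tool")
        sku = params.get("arguments", {}).get("sku")
        payload = {"sku": sku, "units": INVENTORY.get(sku, 0)}
        result = {"content": [{"type": "text", "text": json.dumps(payload)}]}
    else:
        return _error(req_id, -32601, "Method not found")
    return {"jsonrpc": "2.0", "id": req_id, "result": result}

response = handle({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "check_stock", "arguments": {"sku": "sku-123"}},
})
print(response["result"]["content"][0]["text"])  # {"sku": "sku-123", "units": 7}
```

The difference from an API docs page is that the agent never has to read prose: it discovers the tool through tools/list, then calls it with structured arguments.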

The contrarian point that reframes the whole "AI is killing my traffic" panic: losing traffic volume to AI Overviews is not the disaster everyone treats it as; it's a filter doing your qualification for free. The 2.8%-converting visitor who bounced after reading your definition was never going to buy; the 14.2%-converting referral arrives already convinced by a model that synthesized your expertise. The publishers who lose are the ones optimizing to keep the low-value clicks, not the ones restructuring to win the high-value citations.

5. Reference Sources

- OpenAI (2025). Perspectives on the Future of Search and Inference. OpenAI Announcements

- Google Developers (2025). Agent-to-Agent (A2A) Protocol Specifications. Google A2A Standards

- Anthropic (2024). Model Context Protocol (MCP) Specification. modelcontextprotocol.io

- Website AI Score Strategy (2026). Optimizing for GIST: Semantic Distance & Vector Exclusion Zones.

- Website AI Score Engineering (2026). Temporal Validity: Escaping MinHash Deduplication.