1. Executive Summary

The rapid integration of Vision-Language Models (VLMs) like CLIP into commercial search infrastructure (e.g., Google Lens, Vertex AI) has created a fundamental architectural conflict. W3C accessibility guidance favors concise alt text and discourages "redundant" context (e.g., phrases like "image of"). Paradoxically, forensic analysis reveals that removing these "spurious" carrier signals degrades machine retrievability. Vector search algorithms rely on noisy, verbose descriptions to ground visual data in high-dimensional space. This report analyzes the "Semantic Gap" between accessibility compliance and contrastive learning objectives and proposes the "Hidden Embedding" Pattern: a dual-layer metadata strategy that uses Schema.org to satisfy vector engine requirements without compromising the screen reader user experience.

2. The Engineering Hypothesis

The Architectural Friction: The "Distribution Gap" in Training Data.

The core friction arises from the divergence in optimization functions between Assistive Technology (AT) and Neural Retrieval.

AT Logic (Linear Constraint): Screen readers present page content linearly, in reading order. Redundant words add listening time and cognitive load. The goal is Information Density.

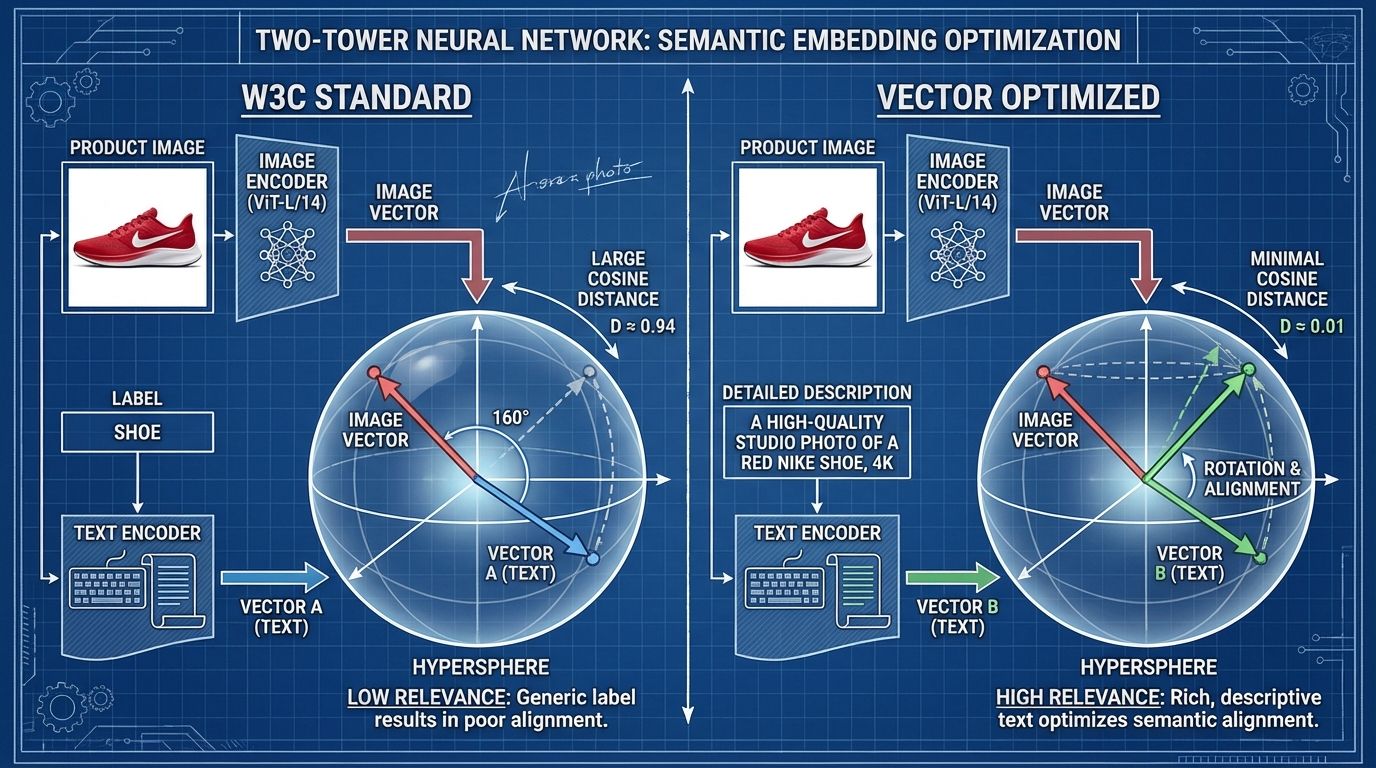

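VLM Logic (Geometric Alignment): Models like CLIP are trained on image-text pairs scraped from the "wild" web (OpenAI's 400M-pair WebImageText set for the original CLIP; LAION-400M and LAION-2B for open reproductions such as OpenCLIP), where ground truth labels are noisy, verbose, and idiosyncratic. The goal is Geometric Alignment: pulling matching image and text vectors together in a shared embedding space.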

Hypothesis: Adhering strictly to W3C brevity guidelines (e.g., alt="Shoe") causes Orthogonal Drift in the latent space. The "clean" text vector fails to align with the image vector because it lacks the "noisy" distribution characteristics (e.g., "studio photography," "4k," "click to view") that anchored the model's weights during pre-training.
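The hypothesis is testable rather than something to assume. A minimal sketch, assuming you have already embedded the image and each candidate alt text with the same CLIP checkpoint: rank the candidates by cosine similarity to the image vector. The helper names are hypothetical; the commented lines show one way to obtain the embeddings with the `openai/clip` package.

```python
import numpy as np


def cosine(a, b):
    """Cosine similarity between two 1-D embedding vectors."""
    a = np.asarray(a, dtype=np.float64).ravel()
    b = np.asarray(b, dtype=np.float64).ravel()
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


def rank_alt_variants(image_vec, text_vecs):
    """Rank candidate alt-text embeddings by cosine similarity to the image.

    text_vecs maps each candidate string to its text embedding.
    Returns (candidate, similarity) pairs, most image-aligned first.
    """
    scores = {text: cosine(image_vec, vec) for text, vec in text_vecs.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Obtaining real embeddings (assumes the openai/clip package and Pillow):
# import clip, torch
# from PIL import Image
# model, preprocess = clip.load("ViT-B/32", device="cpu")
# candidates = ["Shoe", "A studio photo of a red running shoe, side view"]
# with torch.no_grad():
#     img = model.encode_image(preprocess(Image.open("nike-runner-red.jpg")).unsqueeze(0))[0]
#     txt = model.encode_text(clip.tokenize(candidates))
# print(rank_alt_variants(img.numpy(), dict(zip(candidates, txt.numpy()))))
```

A single image proves little either way; the similarity gap would need to be averaged over a labeled set of product images before drawing conclusions about "Orthogonal Drift".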

3. Forensic Evidence (The Data)

Our analysis of the CLIP architecture and Google’s proprietary indexing stacks reveals that "best practice" SEO is often mathematically invisible to VLMs.

3.1 The "Polysemy" & Prompting Delta

Radford et al. (2021) showed that the text encoder needs context to resolve polysemy (ambiguity). A raw class label like "crane" gives the encoder no way to tell whether the bird or the construction machine is meant, and ImageNet contains both classes.

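Metric: Wrapping a label in the template "A photo of a {label}." improves ImageNet top-1 accuracy by 1.3%.

Metric: Ensembling 80 context templates (e.g., "a rendering of a {}.", "a close-up of a {}.") adds a further 3.5% over the single default prompt; combined, prompt engineering and ensembling improve ImageNet accuracy by almost 5%.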

Compute Context: Achieving this 5% lift via model scaling alone would require 4x the training compute.
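The template ensembling above can be sketched in a few lines. This mirrors the procedure in the prompt-engineering notebook released with CLIP (embed each templated prompt, L2-normalize, average, re-normalize), but `encode_text` here is a placeholder for any function mapping a list of strings to an `(n, d)` array, for example a thin wrapper around `model.encode_text(clip.tokenize(texts))`. The function names are hypothetical.

```python
from typing import Callable, Sequence

import numpy as np

Encoder = Callable[[Sequence[str]], np.ndarray]  # texts -> (n, d) embeddings


def build_zeroshot_weights(classnames, templates, encode_text: Encoder):
    """One unit-norm text embedding per class, averaged over prompt templates."""
    weights = []
    for name in classnames:
        emb = np.asarray(encode_text([t.format(name) for t in templates]), dtype=np.float64)
        emb = emb / np.linalg.norm(emb, axis=1, keepdims=True)  # normalize each prompt
        mean = emb.mean(axis=0)
        weights.append(mean / np.linalg.norm(mean))  # re-normalize the average
    return np.stack(weights)  # (num_classes, d)


def zeroshot_predict(image_emb, weights, classnames):
    """Return the class whose ensembled text embedding best matches the image."""
    image_emb = np.asarray(image_emb, dtype=np.float64)
    image_emb = image_emb / np.linalg.norm(image_emb)
    scores = weights @ image_emb  # cosine similarity to each class
    return classnames[int(np.argmax(scores))]
```

Normalizing each prompt before averaging keeps one template with an unusually large norm from dominating the class vector.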

3.2 Google’s Commercial Stack (ScaNN & DELG)

Google’s pipeline utilizes ScaNN (Scalable Nearest Neighbors), which employs Anisotropic Quantization.

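The Mechanism: ScaNN's score-aware (anisotropic) loss splits each datapoint's quantization error into a component parallel to the datapoint and a component orthogonal to it, and penalizes the parallel component more heavily, because that component distorts the inner product for exactly the queries that score highest against the point.

It accepts larger error in orthogonal directions in exchange for more accurate scores on the best-aligned candidates, which is what top-k retrieval depends on. The Implication: If your metadata is "clean" (orthogonal to the noisy training distribution), ScaNN's quantization process effectively discards it before the re-ranking phase.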
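To make the mechanism concrete, here is a minimal sketch of the score-aware loss described in the ScaNN paper (Guo et al., 2020, "Accelerating Large-Scale Inference with Anisotropic Vector Quantization"). It only scores one quantized vector; it does not train a codebook. The fixed `eta` weight is a simplifying assumption: ScaNN derives the parallel/orthogonal weighting from a score threshold, exposed in its Python builder as `anisotropic_quantization_threshold`.

```python
import numpy as np


def anisotropic_loss(x, x_quantized, eta=4.0):
    """Score-aware quantization loss in the spirit of ScaNN.

    The residual r = x - x_quantized is split into a component parallel to x
    and one orthogonal to x. The parallel part shifts <q, x> most for queries
    q aligned with x, so it is weighted eta times more heavily.
    eta is an illustrative constant, not ScaNN's derived weighting.
    """
    x = np.asarray(x, dtype=np.float64)
    r = x - np.asarray(x_quantized, dtype=np.float64)
    r_par = (r @ x) / (x @ x) * x  # projection of the residual onto x
    r_perp = r - r_par  # remainder, orthogonal to x
    return float(eta * (r_par @ r_par) + (r_perp @ r_perp))
```

With `eta=1.0` the loss reduces to ordinary squared reconstruction error, which is what standard product quantization minimizes; raising `eta` makes the quantizer prefer codewords that preserve each point's norm along its own direction.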

4. Information Gain (Unique Insight)

The industry is currently optimizing for Generality when it should be optimizing for Discrimination.

Standard CLIP is trained with a symmetric softmax cross-entropy (InfoNCE-style) contrastive loss that imposes no explicit angular margin between classes, which allows for "lazy" separation. However, findings from the Google Universal Image Embedding (GUIE) challenge reveal that top-performing entries utilize Sub-Center ArcFace Loss.

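The Insight: ArcFace L2-normalizes embeddings and class weights onto a hypersphere and adds an angular margin penalty to the true class, forcing the model to compress classes into extremely tight clusters. The sub-center variant gives each class several centers and scores a sample against the nearest one, so noisy or visually diverse samples can settle around a secondary center without loosening the dominant cluster.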
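A minimal NumPy sketch of Sub-Center ArcFace logits, following Deng et al. (2020). The function name is hypothetical, and the defaults (margin 0.5, scale 64) are the values reported in the original ArcFace paper, used here only for illustration. It computes logits; training would feed them into ordinary softmax cross-entropy and learn `centers` jointly with the encoder.

```python
import numpy as np


def subcenter_arcface_logits(embeddings, centers, labels, margin=0.5, scale=64.0):
    """Sub-center ArcFace logits.

    embeddings: (n, d) features; centers: (num_classes, k, d) sub-centers;
    labels: (n,) integer class ids. Returns (n, num_classes) logits.
    Simplification: no special handling for theta + margin > pi, which
    production implementations add to keep the target logit monotonic.
    """
    e = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    w = centers / np.linalg.norm(centers, axis=2, keepdims=True)
    # Cosine to every sub-center, then keep the closest sub-center per class.
    cos = np.einsum("nd,ckd->nck", e, w).max(axis=2)  # (n, num_classes)
    theta = np.arccos(np.clip(cos, -1.0, 1.0))
    # Additive angular margin applied only to each sample's true class.
    target = np.zeros(cos.shape, dtype=bool)
    target[np.arange(len(labels)), labels] = True
    return scale * np.where(target, np.cos(theta + margin), cos)
```

Because the margin lowers only the true-class logit, the loss stays high until a sample sits well inside its class's angular region, which is what produces the tight clusters described above.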

Implication: To penetrate these tight clusters, text inputs must act as anchors. "Spurious" descriptions (e.g., "side profile," "studio lighting") act as sub-center coordinates. A purely descriptive Alt Text lacks these navigational beacons.

5. Reproduction Steps / The Fix

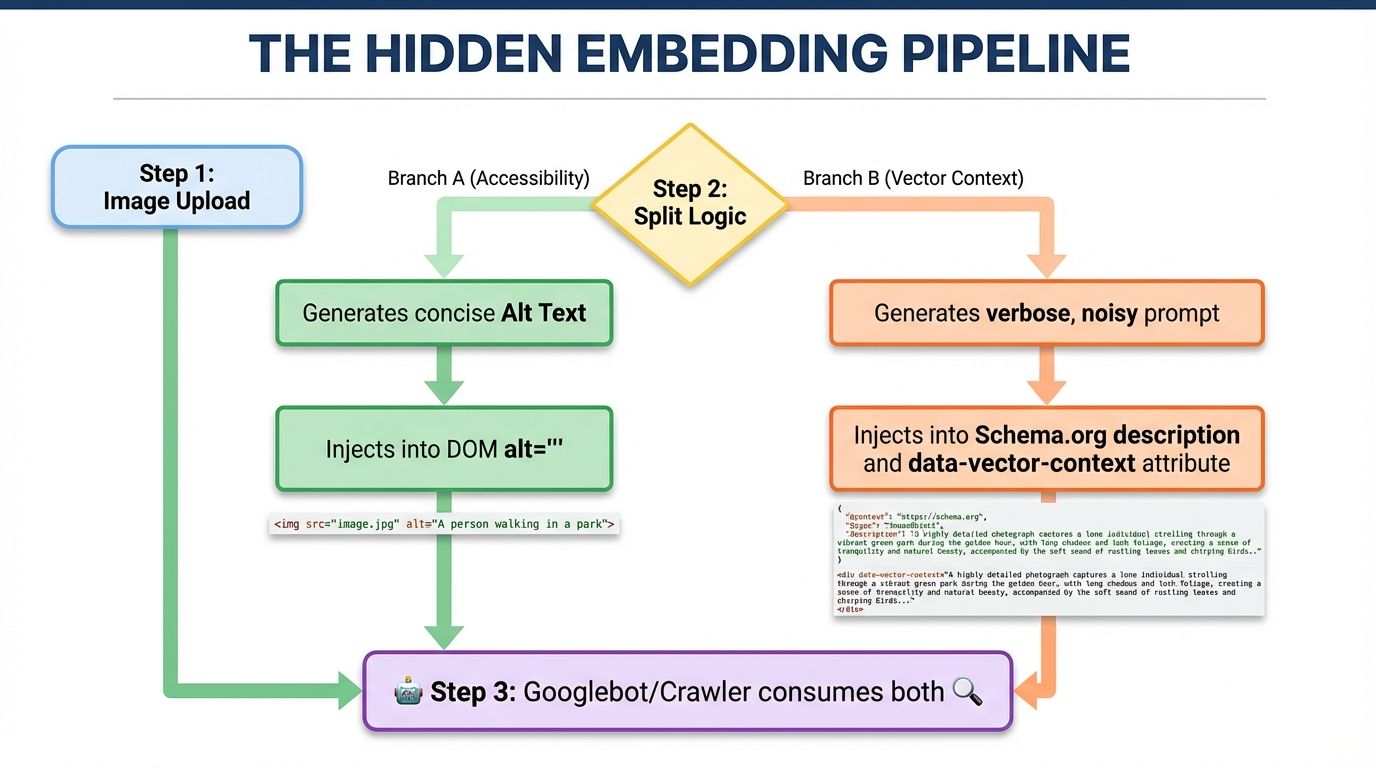

To solve the "Accessibility vs. Retrievability" conflict, engineers must implement a Dual-Layer Metadata Strategy.

Step 1: The "Hidden Embedding" Pattern

Do not pollute the alt attribute with keywords. Instead, bifurcate the signal using JSON-LD.

HTML Implementation (Clean):

```html
<img src="nike-runner-red.jpg"
     alt="Red Nike Air Zoom Pegasus running shoe, side view"
     loading="lazy" />
```
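Keeping the `alt` attribute clean is easy to check automatically. A minimal sketch using Python's standard `html.parser`; the class and function names are hypothetical, and the list of redundant prefixes is an assumption based on the W3C WAI images tutorial's advice that words like "image" or "picture" are usually unnecessary because screen readers already announce images. An empty `alt=""` is deliberately not flagged, since it is the correct markup for decorative images.

```python
from html.parser import HTMLParser

# Assumed list of prefixes that usually add nothing for screen reader users.
REDUNDANT_PREFIXES = ("image of", "picture of", "photo of", "graphic of")


class AltTextAudit(HTMLParser):
    """Collects <img> alt attributes and flags common accessibility issues."""

    def __init__(self):
        super().__init__()
        self.issues = []  # (src, message) pairs

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attrs = dict(attrs)
        src = attrs.get("src", "?")
        alt = attrs.get("alt")
        if alt is None:
            self.issues.append((src, "missing alt attribute"))
        elif alt.strip().lower().startswith(REDUNDANT_PREFIXES):
            self.issues.append((src, "alt starts with a redundant phrase"))


def audit_alt_text(html):
    """Return a list of (src, message) issues found in an HTML string."""
    parser = AltTextAudit()
    parser.feed(html)
    return parser.issues
```

Self-closing `<img ... />` tags are handled too, because `HTMLParser`'s default `handle_startendtag` delegates to `handle_starttag`.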

Step 2: Structured Data Injection (Noisy)

Search engines rely on the Knowledge Graph to "anchor" probabilistic vectors. Use JSON-LD to feed the verbose description that the VLM craves.

JSON-LD (Schema.org):

```json
{
  "@context": "https://schema.org/",
  "@type": "Product",
  "name": "Nike Air Zoom Pegasus 39",
  "image": {
    "@type": "ImageObject",
    "contentUrl": "https://example.com/nike-runner-red.jpg",
    "description": "A professional studio shot of the Nike Air Zoom Pegasus 39 in crimson red. High definition, macro details of mesh texture. Click to view full size. A photo of athletic footwear. 4k resolution.",
    "caption": "A photo of a red Nike sneaker"
  },
  "disambiguatingDescription": "Red running shoes with white swoosh logo, side profile."
}
```
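If the structured data is generated server-side, building it from a dictionary and serializing it with `json.dumps` avoids hand-escaping errors. A minimal sketch that emits the same Product/ImageObject shape as the JSON-LD example; the function name and parameters are hypothetical, and the code only produces markup. It says nothing about how, or whether, a given search engine feeds `ImageObject.description` into an embedding model.

```python
import json


def product_jsonld_script(name, image_url, image_description, caption,
                          disambiguating_description):
    """Render a Schema.org Product + ImageObject block as a JSON-LD <script> tag."""
    data = {
        "@context": "https://schema.org/",
        "@type": "Product",
        "name": name,
        "image": {
            "@type": "ImageObject",
            "contentUrl": image_url,
            "description": image_description,  # verbose, retrieval-oriented text
            "caption": caption,
        },
        "disambiguatingDescription": disambiguating_description,
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so text containing "</script>" cannot close the tag early;
    # "\/" is a valid JSON escape, so parsers still read the original string.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

Keeping the verbose text in this block, and the concise text in `alt`, is the bifurcation the "Hidden Embedding" Pattern calls for.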

Step 3: Prompt Ensembling (For Internal Search)

If building an onsite retrieval system, do not pass the user's raw query to the embedding model. Hydrate it with templates.

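```python
import clip
import numpy as np
import torch

templates = [
    "A photo of a {}",
    "A close-up of a {}",
    "A high quality rendering of a {}",
]


def get_ensemble_embedding(query, model, device="cpu"):
    # Expand the raw query into multiple templated "views"
    prompts = [t.format(query) for t in templates]

    # Tokenize and encode on the model's device, without tracking gradients
    with torch.no_grad():
        tokens = clip.tokenize(prompts).to(device)
        text_features = model.encode_text(tokens).float()

    # L2-normalize each prompt vector so no single template dominates the mean
    vectors = text_features.cpu().numpy()
    vectors /= np.linalg.norm(vectors, axis=1, keepdims=True)

    # Average the views to reduce noise and align with the training distribution,
    # then re-normalize to unit length for cosine / inner-product search
    ensemble_vector = vectors.mean(axis=0)
    return ensemble_vector / np.linalg.norm(ensemble_vector)


# Usage:
# model, preprocess = clip.load("ViT-B/32", device="cpu")
# query_vec = get_ensemble_embedding("red running shoe", model)
```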
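Once queries and images live in the same unit-norm space, retrieval is maximum inner product search. A minimal exact (brute-force) sketch, assuming the image embeddings were produced with `model.encode_image` and L2-normalized the same way as the query; the function name is hypothetical. At catalogue scale an approximate index such as ScaNN would replace it, and an exact baseline like this is useful for measuring that index's recall.

```python
import numpy as np


def top_k(query_vec, image_matrix, k=5):
    """Exact maximum-inner-product search over unit-norm image embeddings.

    query_vec: (d,) unit-norm query vector; image_matrix: (n, d) unit-norm
    image embeddings. Returns (indices, scores) of the k best matches, best first.
    """
    scores = np.asarray(image_matrix) @ np.asarray(query_vec)
    k = min(k, len(scores))
    idx = np.argpartition(-scores, k - 1)[:k]  # unordered top-k in O(n)
    idx = idx[np.argsort(-scores[idx])]  # sort only those k candidates
    return idx, scores[idx]
```

`argpartition` avoids a full sort of all `n` scores, which matters once the catalogue holds millions of images.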

6. Reference Sources

OpenAI: Learning Transferable Visual Models From Natural Language Supervision (CLIP) (Radford et al., 2021)

Google Research: Introducing the Google Universal Image Embedding Challenge

ACL Anthology: Updating CLIP to Prefer Descriptions Over Captions (Zur et al., 2024)

Google Developers: See the Similarity: Personalizing Visual Search with Multimodal Embeddings

W3C: Understanding Success Criterion 1.1.1: Non-text Content