The Inverse Pyramid for RAG: Writing for the First 100 Tokens

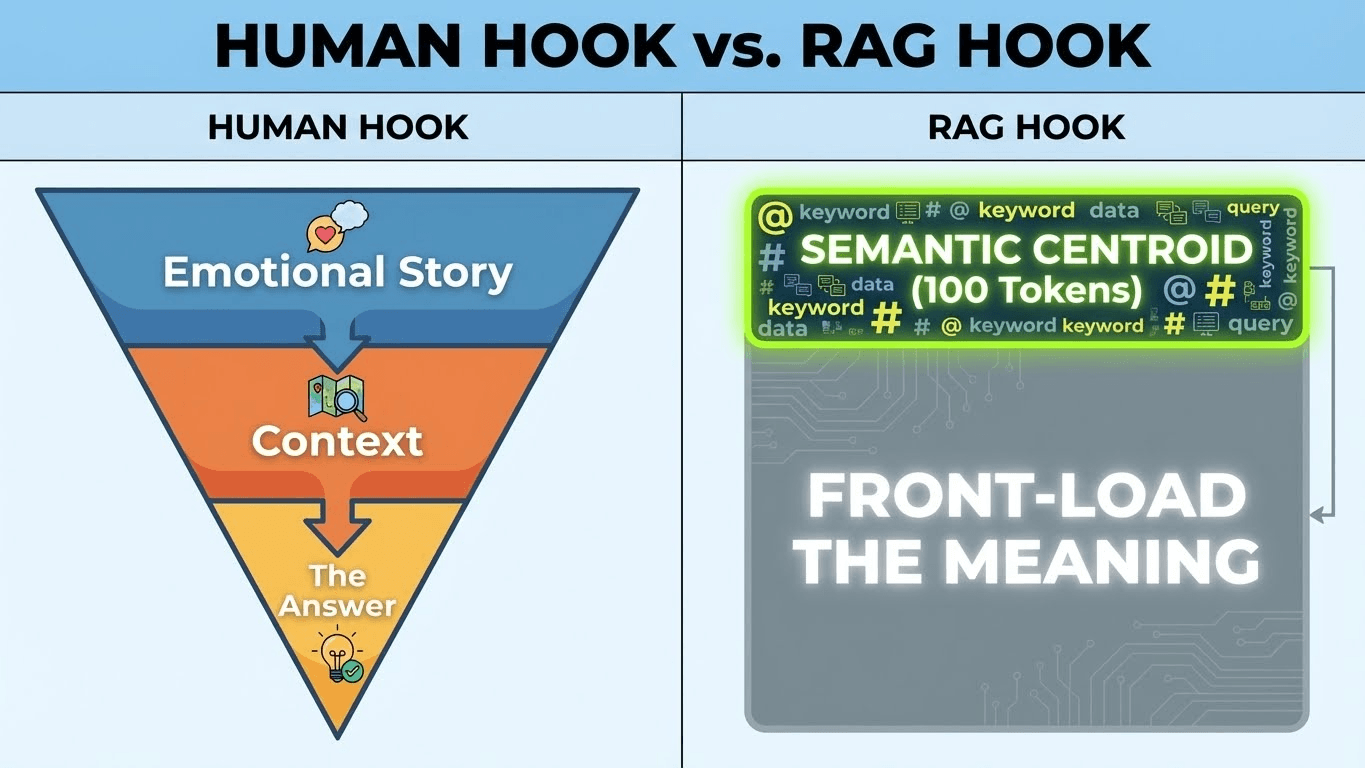

The "Inverse Pyramid" for RAG is a content structuring methodology designed specifically for Retrieval-Augmented Generation systems. Unlike narrative or feature writing, which opens with a "hook" to engage human curiosity, the RAG Inverse Pyramid places the Semantic Centroid (the core entities and direct answer) within the first 100 tokens. When an AI retrieval system chunks your content, the embedding of the opening chunk is then far more likely to match the user's query, which raises the probability of citation.

The Problem: The "Narrative Lead" Trap

For decades, writers were taught to start with a "Hook"—a story, an anecdote, or a rhetorical question to build suspense.

- Human Reader: Enjoys the buildup.

- AI Retrieval System: Sees "Semantic Drift."

Why it fails RAG: Many RAG pipelines split content into fixed-size "Chunks" (e.g., 256 or 512 tokens) before vectorizing them. Suppose your first 100 tokens are: "Imagine a world where data flows like water. It was a rainy Tuesday when I first realized..." The vector embedding for this chunk then maps to concepts like "Water," "Rain," and "Tuesday."

It does not map to the actual topic (e.g., "Data Architecture"). By the time you get to the point in paragraph 3, the opening chunk has already earned a low relevance score for the query, and the AI is likely to pass over your article in favor of a competitor who defined the term immediately. This is a core concept of Vector Engine Optimization.
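The chunking step described above can be sketched in a few lines of Python. This is a minimal illustration, not any particular pipeline's implementation: the function name `chunk_tokens` is hypothetical, whitespace splitting stands in for a real tokenizer, and the chunk size of 16 is chosen only to keep the example small.

```python
def chunk_tokens(text: str, chunk_size: int = 256) -> list[list[str]]:
    """Split text into consecutive chunks of at most chunk_size tokens.

    Assumption: whitespace-separated words approximate model tokens.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    tokens = text.split()
    return [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]


narrative = (
    "Imagine a world where data flows like water. "
    "It was a rainy Tuesday when I first realized..."
)
topic = "Data architecture defines how data is collected, stored, and accessed."
document = narrative + " " + topic

chunks = chunk_tokens(document, chunk_size=16)

# The first chunk holds only the anecdote; the topic lands in the second chunk.
print(" ".join(chunks[0]))
print("architecture" in chunks[0])  # False
print("architecture" in chunks[1])  # True
```

With the narrative lead in place, the chunk that a retriever sees first contains none of the topic vocabulary, which is the failure mode the section describes.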

The Solution: The RAG Inverse Pyramid

To optimize for the first 100 tokens, you must invert the traditional storytelling model. You are not writing a thriller; you are writing a database entry.

Layer 1: The Semantic Centroid (Tokens 0-50)

Your very first sentence must define the entity and the answer.

- Bad: "Many people struggle with understanding how search works."

- Good: "Retrieval-Augmented Generation (RAG) is a technique that optimizes LLM output by referencing an authoritative knowledge base."

This anchors the chunk's embedding to the topic from the very first tokens.

Layer 2: The Contextual Bridge (Tokens 50-150)

Once the entity is defined, establish relationships to other entities. This helps the AI understand the context of the vector.

- Example: "It resolves issues like Hallucinations and Token Limits by connecting to Vector Databases."

Layer 3: The Elaboration (Tokens 150+)

Only now can you introduce examples, metaphors, or "human" voice. The machine has already decided your content is relevant; now you can write for the user.
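To check a draft against the three layers, one option is to locate where each key entity is first mentioned. The sketch below is an illustration under stated assumptions: the names `LAYERS`, `layer_for_offset`, and `first_mention_layers` are hypothetical, whitespace words approximate tokens, and the layer boundaries are treated as half-open ranges (0 to 49, 50 to 149, 150 and up).

```python
from typing import Optional

# Assumed half-open token ranges for the first two layers; everything
# after them counts as Layer 3.
LAYERS = [
    ("Layer 1: Semantic Centroid", 0, 50),
    ("Layer 2: Contextual Bridge", 50, 150),
]


def layer_for_offset(offset: int) -> str:
    """Return the layer name that a token offset falls into."""
    for name, start, end in LAYERS:
        if start <= offset < end:
            return name
    return "Layer 3: Elaboration"


def first_mention_layers(text: str, entities: list[str]) -> dict[str, Optional[str]]:
    """Map each entity to the layer of its first mention, or None if absent."""
    # Assumption: whitespace words, lowercased and stripped of punctuation,
    # stand in for model tokens.
    tokens = [t.strip('.,;:()"').lower() for t in text.split()]
    result: dict[str, Optional[str]] = {}
    for entity in entities:
        words = entity.lower().split()
        hit = next(
            (i for i in range(len(tokens) - len(words) + 1)
             if tokens[i:i + len(words)] == words),
            None,
        )
        result[entity] = None if hit is None else layer_for_offset(hit)
    return result


intro = (
    "Retrieval-Augmented Generation (RAG) is a technique that optimizes LLM "
    "output by referencing an authoritative knowledge base. It resolves issues "
    "like Hallucinations and Token Limits by connecting to Vector Databases."
)
print(first_mention_layers(intro, ["RAG", "Vector Databases", "GraphQL"]))

# The same text buried behind 160 filler words drops to Layer 3.
buried = " ".join(["filler"] * 160) + " " + intro
print(first_mention_layers(buried, ["RAG"]))
```

An entity that first appears in Layer 3, or not at all, is a signal that the opening needs rewriting.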

Technical Mechanism: Why 100 Tokens?

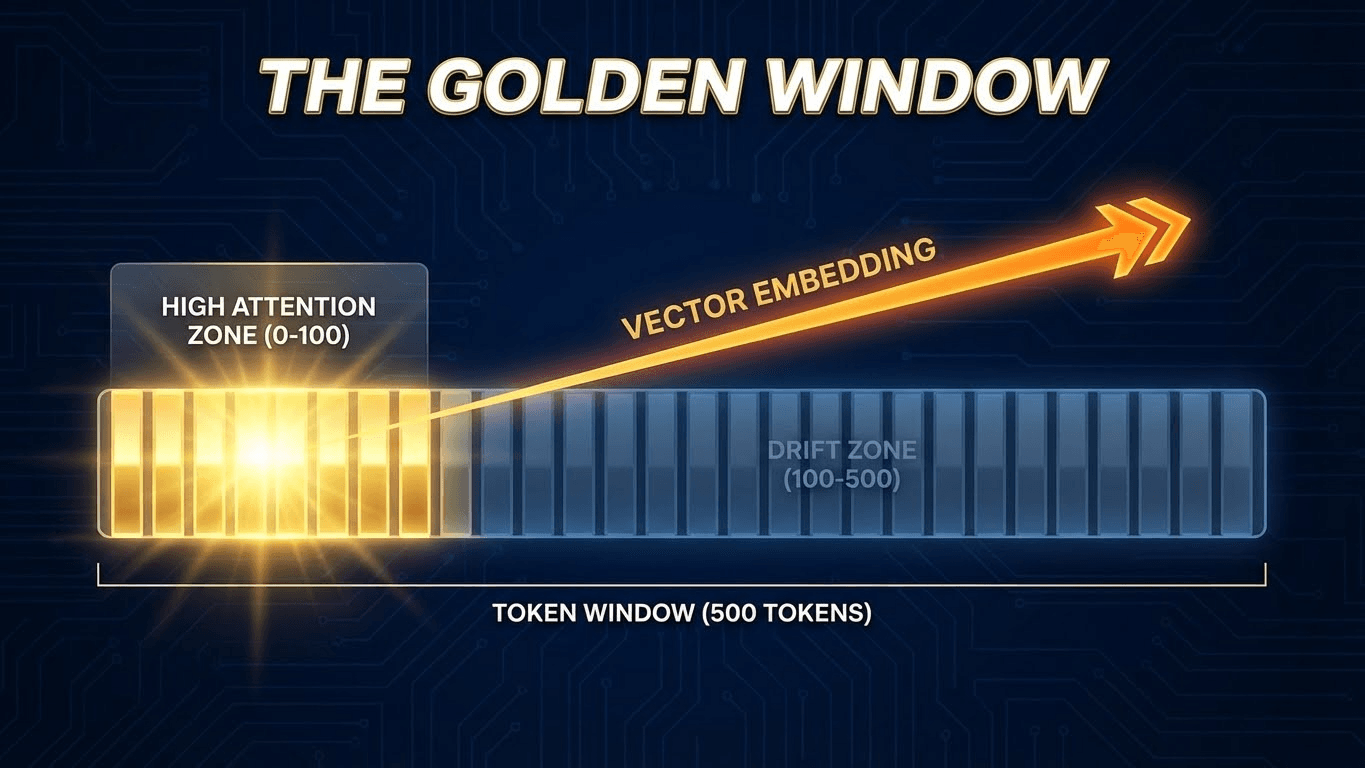

Why is the start so critical? It comes down to three factors: Mean Pooling, the "Lost in the Middle" phenomenon, and Chunk Boundaries.

- Mean Pooling: Many embedding models calculate the vector of a paragraph by averaging the vectors of all its tokens. If the first 50 tokens are "fluff" (low semantic value), they dilute the average, pulling your vector away from the target query.

- The "Lost in the Middle" Phenomenon: Research shows that LLMs use information at the beginning and end of a context window more reliably than information in the middle, which is often overlooked. This applies at the generation step, once retrieved text is placed in the model's context. By placing your core answer at the very start (Token 0), you exploit this "Primacy Bias."

- Chunk Boundaries: As detailed in our guide on RAG Chunking Mismatches, you never know exactly where the AI will slice your text. However, the start of the document is the only "Safe Zone" that is guaranteed to be the beginning of a chunk.
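The dilution effect of mean pooling can be shown with a toy calculation. The two-dimensional "embeddings" below are invented for illustration and do not come from any real model; the helper names `mean_pool` and `cosine` are likewise hypothetical.

```python
import math


def mean_pool(vectors):
    """Average token vectors component-wise into a single chunk vector."""
    dims = len(vectors[0])
    return [sum(v[d] for v in vectors) / len(vectors) for d in range(dims)]


def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


# Toy vectors (assumption): axis 0 is the on-topic direction,
# axis 1 is an unrelated "fluff" direction.
ON_TOPIC = [1.0, 0.0]
FLUFF = [0.0, 1.0]
query = [1.0, 0.0]

dense_chunk = [ON_TOPIC] * 100                    # all 100 tokens on topic
diluted_chunk = [FLUFF] * 50 + [ON_TOPIC] * 50    # 50 fluff tokens first

dense_score = cosine(mean_pool(dense_chunk), query)
diluted_score = cosine(mean_pool(diluted_chunk), query)
print(round(dense_score, 3), round(diluted_score, 3))  # 1.0 0.707
```

A plain average is order-independent, so in this sketch the fluff would dilute the vector equally wherever it sat inside the chunk. Its position matters because the opening tokens are the ones that end up in the first chunk.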

Strategy: The "Definition First" Pattern

To implement this, audit your H1s and opening paragraphs using the "Definition First" rule.

The Test: Cover up everything after the first sentence. Does the reader know exactly what the page is about?

- Fail: "Marketing is changing fast." (Vague, applies to everything).

- Pass: "Generative Engine Optimization (GEO) is the process of optimizing content for AI Answer Engines." (Specific, Entity-Dense).
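The "cover up everything after the first sentence" test can be roughly automated. The sketch below is a crude heuristic under stated assumptions: the function names are hypothetical, sentence splitting is a simple regular expression that will misfire on abbreviations, and "defines the entity" is approximated as "names the target entity and then uses is or are". It does not judge how specific the definition is.

```python
import re


def first_sentence(text: str) -> str:
    """Return the text up to the first sentence-ending punctuation mark."""
    match = re.search(r"(.+?[.!?])(\s|$)", text.strip(), flags=re.DOTALL)
    return match.group(1) if match else text.strip()


def passes_definition_first(text: str, entity: str) -> bool:
    """Heuristic check: the first sentence names the entity, then defines it."""
    sentence = first_sentence(text).lower()
    position = sentence.find(entity.lower())
    if position == -1:
        return False
    remainder = sentence[position + len(entity):]
    return re.search(r"\b(is|are)\b", remainder) is not None


target = "Generative Engine Optimization"
fail_intro = "Marketing is changing fast. GEO matters more every year."
pass_intro = (
    "Generative Engine Optimization (GEO) is the process of optimizing "
    "content for AI Answer Engines. It builds on classic SEO."
)

print(passes_definition_first(fail_intro, target))  # False
print(passes_definition_first(pass_intro, target))  # True
```

A check like this is best used to flag pages for a human review rather than as a pass/fail gate.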

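Pro Tip: Use <details> tags for the "Human Story." If you absolutely must include a long anecdote, wrap it in a <details> tag so the user can expand it while the primary visible text remains dense for the bot. Keep in mind that the collapsed text is still present in the HTML, so whether a given crawler skips it depends on how that crawler extracts text.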

Key Takeaways

- Kill the Preamble: In RAG, there is no "warming up." Start with the definition.

- Front-Load Entities: Place your target keyword and its related entities in the first sentence to anchor the vector embedding.

- Respect the Chunk: The opening of the document is the only position guaranteed to start a chunk. Don't waste the first 100 tokens on fluff.

- Audit for Density: Use our Token Efficiency Audit to strip low-value adjectives from your intro.

References & Further Reading

- Website AI Score: Vector Embeddings 101. Understanding the math behind how AI reads your first 100 tokens.

- Website AI Score: The Chunking Mismatch. Why formatting matters for retrieval.

- arXiv: Lost in the Middle: How Language Models Use Long Contexts. Research on how LLMs favor information at the beginning and end of long contexts.