The Visibility Gap No One Tells You About

You are not being penalized. There is no manual action, no algorithm flag, no notice in Search Console. Your site is indexed. Your content is live. Traffic is coming in from somewhere.

And yet — when someone asks ChatGPT to recommend a tool in your category, your name does not appear. When a potential customer asks Perplexity about solutions to the exact problem you solve, the answer names your three closest competitors. When your own brand name is typed into an AI chat window, the response is vague, partially wrong, or missing key facts about what you actually offer.

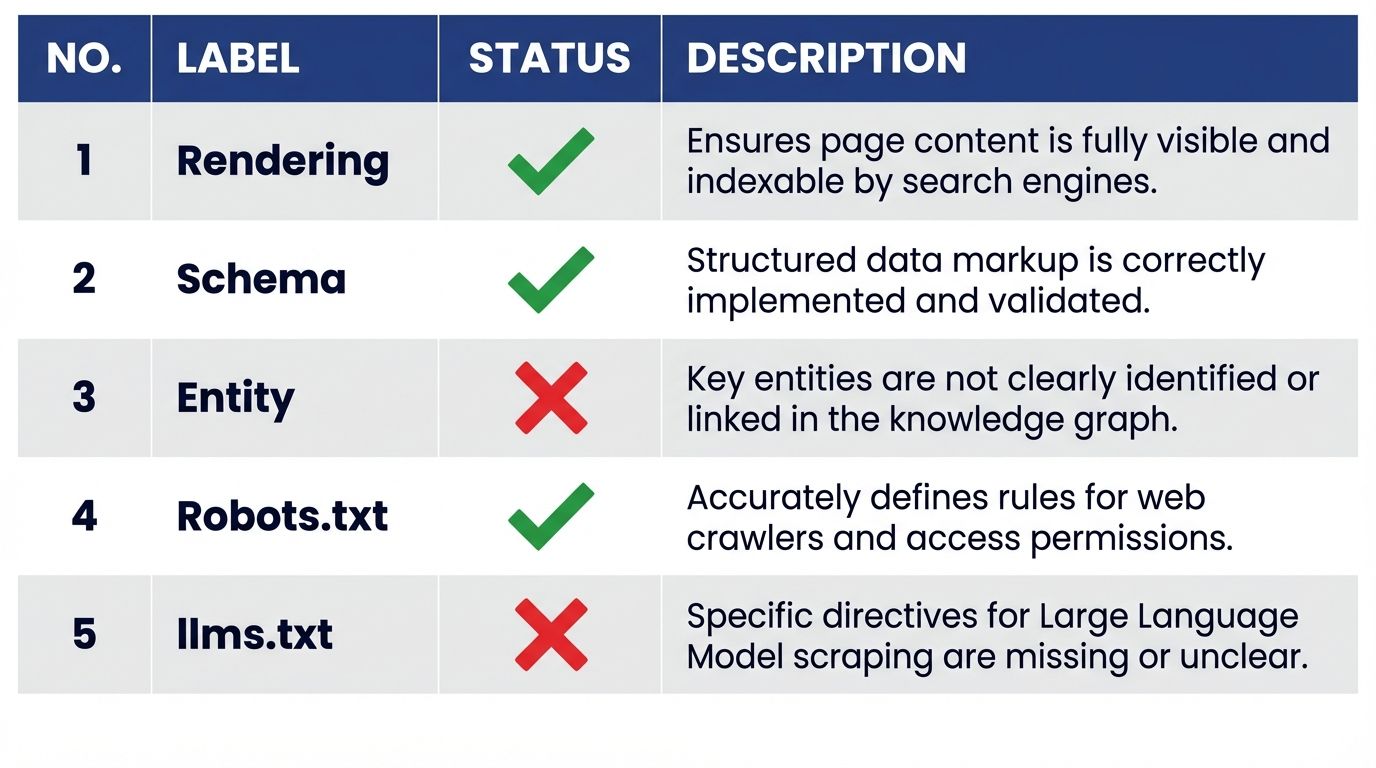

This is not a ranking problem. It is a readability problem. AI systems are not ranking your website lower — they are failing to parse it cleanly enough to include it as a trusted source. And the failure is structural, which means it is diagnosable. Here are the five most common signs that your website is being filtered out of AI answers, and the exact mechanism behind each one.

Sign 1: Your Core Content Only Loads After JavaScript Executes

Open your website in a browser. Right-click anywhere on the page and select "View Page Source." Do not use the inspector, which shows the page after JavaScript has already run; use the raw source. Search for the first paragraph of your homepage copy, your pricing information, or your product description.

If you cannot find it in the raw source, you have a rendering problem.
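If you want to script this check across several pages, here is a minimal sketch using only Python's standard library. It fetches the initial server response, without running any JavaScript, and tests whether a phrase appears in that response's text. The user-agent string and the example URL are placeholders. Some servers also return different HTML to different user agents, so treat a pass as a strong hint rather than proof.

```python
import urllib.request
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text from raw HTML, skipping <script> and <style> contents."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so formatting differences don't matter."""
    return " ".join(text.split()).lower()


def phrase_in_raw_html(html: str, phrase: str) -> bool:
    """True if `phrase` appears in the text a non-JavaScript client receives."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    # Join without separators so inline tags (<strong>, <a>) don't split phrases.
    return normalize(phrase) in normalize("".join(parser.chunks))


def fetch_raw_html(url: str, user_agent: str = "raw-html-check/0.1") -> str:
    """Fetch the initial server response only; no JavaScript is executed."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

Suppose `phrase_in_raw_html(fetch_raw_html("https://yourdomain.com/pricing"), "your pricing headline")` returns False, but the headline is visible in the browser. That pattern points to client-side rendering.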

Sites built with many modern JavaScript frameworks (Next.js in client-side rendering mode, Vue, Angular, and similar) send a nearly empty HTML shell in the initial HTTP response. The browser then executes JavaScript, which populates the page with content. Human visitors never notice, because browsers do this automatically and almost instantly.

Many AI crawlers do not behave like browsers. Perplexity's real-time retrieval agents, Common Crawl's ingestion pipeline (a major source of LLM training data, documented for example in OpenAI's GPT-3 paper), and most RAG tooling operate under tight latency budgets and generally fetch raw HTML without executing JavaScript. The empty shell they receive is, from their perspective, your entire website.

The fix is enabling Server-Side Rendering (SSR) or Static Site Generation (SSG) on your framework, ensuring the complete HTML is delivered in the initial server response. If your site already uses SSR, verify that the specific pages you care about — your homepage, pricing page, product pages — are not misconfigured to fall back to client-side rendering.
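In Next.js and similar frameworks, SSR and SSG are configuration choices rather than code you write yourself, and the exact settings depend on your framework version. The framework-agnostic toy below only illustrates what SSG means. Page content is rendered into complete HTML files at build time, so the very first HTTP response already contains it. The page data, directory layout, and function names are hypothetical.

```python
import html
from pathlib import Path
from string import Template

# Every page's content is baked into the markup; nothing depends on client-side JS.
PAGE_TEMPLATE = Template("""<!doctype html>
<html lang="en">
<head><meta charset="utf-8"><title>$title</title></head>
<body>
  <main>
    <h1>$title</h1>
    <p>$body</p>
  </main>
</body>
</html>
""")


def render_page(title: str, body: str) -> str:
    """Render one page to a complete HTML string, escaping user-supplied text."""
    return PAGE_TEMPLATE.substitute(title=html.escape(title), body=html.escape(body))


def build_site(pages: dict[str, dict[str, str]], out_dir: str) -> list[Path]:
    """Write each page to out_dir/<slug>/index.html at build time.

    `pages` maps a URL slug ("" for the homepage) to {"title": ..., "body": ...}.
    """
    written = []
    for slug, page in pages.items():
        target = Path(out_dir, slug, "index.html")
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(render_page(page["title"], page["body"]), encoding="utf-8")
        written.append(target)
    return written
```

Any static file server can then return these files as-is. A crawler that never runs JavaScript still receives the full pricing or product text.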

Sign 2: ChatGPT Gets Factual Details About You Wrong

Ask ChatGPT, Perplexity, and Gemini: "What does [your brand] do, and what does it cost?"

If any answer contains incorrect pricing, missing features, wrong descriptions, or invented capabilities you do not offer, treat it as a likely structured data failure rather than simply a knowledge cutoff issue.

AI hallucinations about specific brands most often stem from one of two sources. Either the model could not find clean, structured data on your site and filled the gap by pattern-matching against similar brands, or it found conflicting data (an old pricing page, a deprecated product description in a blog post, a PDF with outdated specs) and blended the versions together.
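You can hunt down conflicting data of this kind with a simple scan. The sketch below is a heuristic. It assumes your prices are written in US dollars with a leading "$", and that you already have each page's text, for example from a CMS export or a crawl. It flags any dollar amount that is not one of your current prices, so expect some false positives, such as discount or savings figures. The function name and sample data are hypothetical.

```python
import re

# Matches dollar amounts such as "$29", "$1299", "$1,299", or "$29.99".
PRICE_PATTERN = re.compile(r"\$\d+(?:,\d{3})*(?:\.\d{2})?")


def find_price_conflicts(pages: dict[str, str], current_prices: set[str]) -> dict[str, list[str]]:
    """Return {url: [prices not in current_prices]} for pages quoting stale prices."""
    conflicts: dict[str, list[str]] = {}
    for url, text in pages.items():
        stale: list[str] = []
        for price in PRICE_PATTERN.findall(text):
            if price not in current_prices and price not in stale:
                stale.append(price)
        if stale:
            conflicts[url] = stale
    return conflicts
```

For example, scanning a current pricing page alongside an old launch post would flag only the post's outdated "$29" if your current prices are "$49" and "$1,299".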

The structural fix is two-part. First, your pricing, features, and key facts must be marked up in Schema.org JSON-LD so the AI has a machine-readable, unambiguous source to anchor on. Second, any outdated content that contradicts your current facts must either be updated with explicit date stamps or removed. A blog post from two years ago describing your old pricing is actively working against your brand safety in every AI interaction.
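Here is a minimal sketch of the first part. It assumes a Schema.org Product with one Offer per plan; a SaaS product might use SoftwareApplication instead, since both accept offers. The helper name, plan data, and URL are hypothetical. Validate the output with Google's Rich Results Test or the Schema Markup Validator before shipping it.

```python
import json


def product_jsonld(name: str, description: str, url: str,
                   plans: list[tuple[str, str, str]]) -> str:
    """Build a Schema.org Product JSON-LD <script> tag.

    `plans` is a list of (plan_name, price, ISO 4217 currency code) tuples,
    e.g. ("Pro", "49.00", "USD"). Prices are strings so they are emitted exactly.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "url": url,
        "offers": [
            {
                "@type": "Offer",
                "name": plan_name,
                "price": price,
                "priceCurrency": currency,
                "url": url,
            }
            for plan_name, price, currency in plans
        ],
    }
    # Escape "</" so a value containing "</script>" cannot end the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

The escaped `<\/` sequence is still valid JSON, and parsers read `\/` as a plain slash. Generating the tag from one source of truth also makes it easier to keep every page's prices consistent.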

Sign 3: You Cannot Find Your Brand in Google's "About This Result"

Go to Google and search for your brand name. Click the three-dot icon next to your homepage in the results. Select "About this result."

If Google shows "We don't have information about this website," you have an entity clarity problem. If it shows a generic description rather than a Knowledge Panel entry tied to your brand, the problem is less severe but still present.

This test matters for AI visibility because Google's entity resolution and the knowledge LLMs rely on draw on much of the same public data, such as Wikipedia, Wikidata, and authoritative profile pages. A brand that does not exist as a disambiguated entity in Google's graph is therefore also likely to be poorly resolved when ChatGPT, Perplexity, and Gemini construct answers.
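Wikidata is one public knowledge graph you can query directly to see how unambiguous your brand name is. The sketch below calls Wikidata's `wbsearchentities` API action. The contact address in the User-Agent header is a placeholder; Wikimedia asks API clients to identify themselves. No results, or several same-named entities with no description that points to your company, suggests the disambiguation problem described above. A clean match here does not guarantee that any particular AI model resolves your brand correctly.

```python
import json
import urllib.parse
import urllib.request

WIKIDATA_API = "https://www.wikidata.org/w/api.php"


def wikidata_search_url(brand: str, language: str = "en") -> str:
    """Build a wbsearchentities query URL for a brand name."""
    params = {"action": "wbsearchentities", "search": brand,
              "language": language, "format": "json"}
    return WIKIDATA_API + "?" + urllib.parse.urlencode(params)


def candidate_entities(response: dict) -> list[tuple[str, str, str]]:
    """Extract (entity id, label, description) from a wbsearchentities response."""
    return [(hit.get("id", ""), hit.get("label", ""), hit.get("description", ""))
            for hit in response.get("search", [])]


def search_wikidata(brand: str) -> list[tuple[str, str, str]]:
    """Query Wikidata live (network access required)."""
    request = urllib.request.Request(
        wikidata_search_url(brand),
        headers={"User-Agent": "entity-check/0.1 (contact: you@example.com)"},
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return candidate_entities(json.load(response))
```

If you find the right entity, its `https://www.wikidata.org/wiki/<id>` page is the URL to use as a sameAs reference.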

The fix is implementing an Organization JSON-LD block in your site-wide <head> with sameAs properties linking to at least three canonical external references — your LinkedIn company page, your Crunchbase profile, and a Wikidata entry if one exists. Each sameAs link tells the knowledge graph: this brand name resolves unambiguously to this specific entity.
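Here is a minimal sketch of that Organization block, generated in Python so the same values can be reused across templates. The guard requiring at least three sameAs links mirrors the recommendation above. The function name and all URLs are hypothetical placeholders for your own profiles.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> str:
    """Build a Schema.org Organization JSON-LD <script> tag with sameAs links."""
    # Recommendation above: at least three canonical external references.
    if len(same_as) < 3:
        raise ValueError("expected at least three sameAs references")
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": list(same_as),
    }
    # Escape "</" so no value can close the <script> tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

Paste the returned tag into the shared layout that renders every page's `<head>`, so the same entity definition appears site-wide.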

Sign 4: Your Robots.txt File Was Last Edited Before 2023

Check your robots.txt file by navigating to yourdomain.com/robots.txt.

If it was set up during a site migration, a platform switch, or by a security plugin before 2023 — before GPTBot, ClaudeBot, and PerplexityBot existed as named user-agents — there is a meaningful probability it contains rules that block AI crawlers either intentionally (for a reason that no longer applies) or accidentally.

In our forensic audit of 1,500 websites, 30% of active sites were blocking AI crawlers. None of these businesses had made an active strategic decision to opt out of the AI economy. The blocks were legacy artifacts.

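The practical check: search your robots.txt for a Disallow: / rule under User-agent: *. If one exists and there are no separate groups for GPTBot, PerplexityBot, and ClaudeBot, you are blocking the entire AI crawler class, most likely by accident. The fix is adding a dedicated group for each AI user-agent (for example, User-agent: GPTBot followed by Allow: /). Crawlers that follow the robots.txt standard (RFC 9309) obey the most specific group that names them and ignore the User-agent: * group. These groups therefore take effect wherever they appear in the file; placing them above the blanket disallow simply keeps the file readable.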
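You can sanity-check this logic with Python's built-in `urllib.robotparser`. The sketch below parses a robots.txt string and reports whether each AI user-agent may fetch a given URL. The two sample files show a legacy blanket block and the fixed version with dedicated groups. The standard-library parser is simpler than RFC 9309: within a group, it applies the first matching rule rather than the longest match. Use it as a quick check, not a definitive verdict.

```python
from urllib.robotparser import RobotFileParser

# AI crawler user-agent tokens named in this article.
AI_USER_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def ai_crawler_access(robots_txt: str, url: str = "/") -> dict[str, bool]:
    """Report whether each AI user-agent may fetch `url` under `robots_txt`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_USER_AGENTS}


# A legacy file that blocks every crawler, AI crawlers included.
LEGACY_ROBOTS = """\
User-agent: *
Disallow: /
"""

# The fix: a dedicated group per AI crawler. Crawlers follow the group that
# names them, so the blanket disallow below no longer applies to them.
FIXED_ROBOTS = """\
User-agent: GPTBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: *
Disallow: /
"""
```

`ai_crawler_access(LEGACY_ROBOTS)` reports False for all three crawlers, while `ai_crawler_access(FIXED_ROBOTS)` reports True. To check a live file instead, `RobotFileParser` can load it directly with `set_url(...)` followed by `read()`.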

Sign 5: Your Site Has No llms.txt File

Navigate to yourdomain.com/llms.txt. If you get a 404, you are missing one of the highest-leverage, lowest-effort AI visibility signals available.

The llms.txt proposal is a markdown-formatted file at the root of your domain that points language models to the pages containing your most valuable, authoritative content. For any AI agent that reads it, it is the difference between crawling your site semi-randomly and being directed straight to your best material.

In our 1,500-site study, 0.2% of sites had implemented an llms.txt file: three sites out of fifteen hundred. The early-mover advantage here is substantial and will shrink as awareness spreads.

The minimum viable implementation is a markdown file with your site name, a one-sentence description, and a curated list of your most important URLs, each with a brief annotation. Detailed implementation guidance is in our llms.txt guide.
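Here is a minimal generator for that structure, following the layout described at llmstxt.org. It produces an H1 with the site name, a blockquote summary, and H2 sections containing annotated markdown links. The function name is hypothetical, and the site name, sections, and URLs passed to it are your own.

```python
def build_llms_txt(site_name: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render an llms.txt body.

    `sections` maps an H2 heading to (title, url, note) tuples; an empty note
    produces a bare link.
    """
    lines = [f"# {site_name}", "", f"> {summary}"]
    for heading, links in sections.items():
        lines += ["", f"## {heading}", ""]
        for title, url, note in links:
            link = f"- [{title}]({url})"
            lines.append(f"{link}: {note}" if note else link)
    return "\n".join(lines) + "\n"
```

Save the result as llms.txt and serve it from your domain root, for example with `Path("llms.txt").write_text(build_llms_txt(...), encoding="utf-8")`, using `pathlib.Path`.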

What to Do If You Recognize More Than Two of These Signs

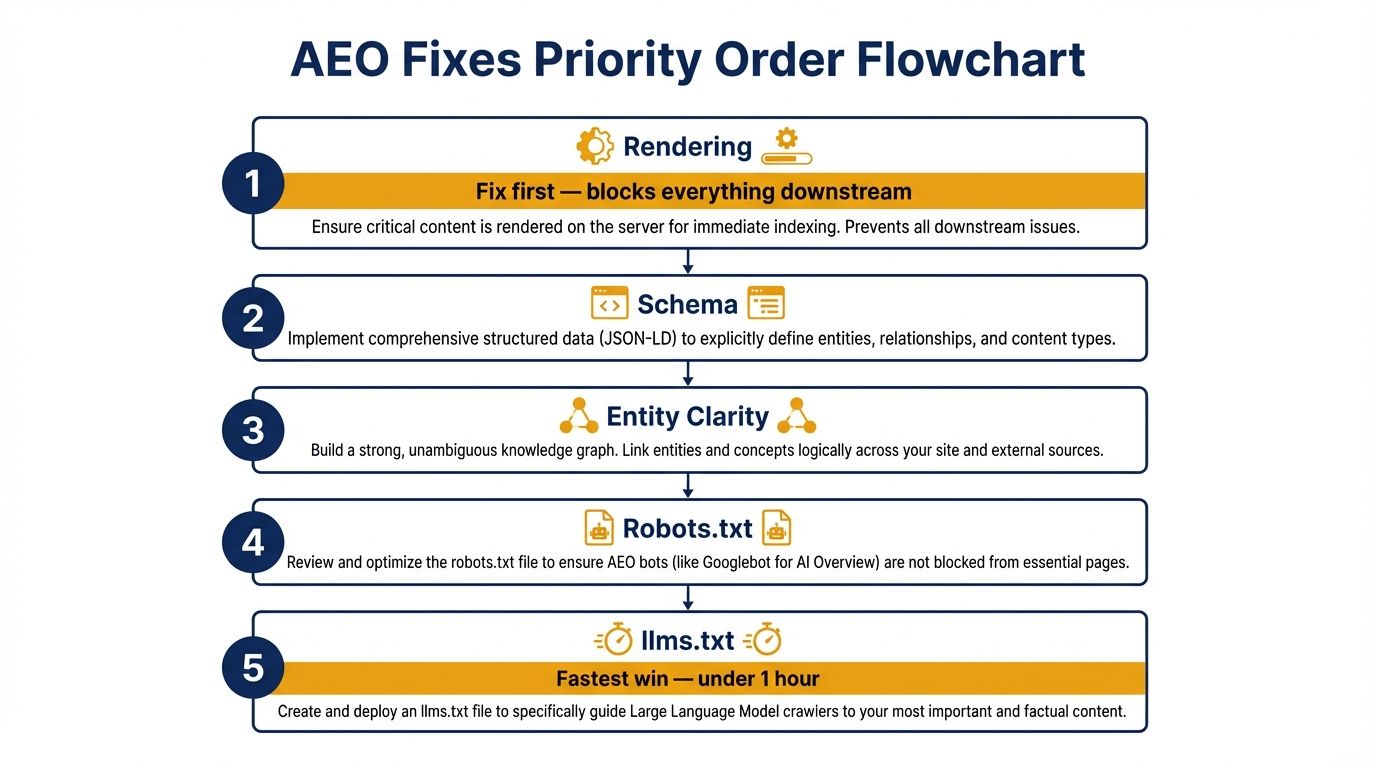

Each sign maps to a discrete structural fix. None of them require rebuilding your site. Most can be addressed in a single development sprint.

The priority order matters: rendering issues block everything downstream, so fix those first. Schema and entity clarity are the highest-impact signals for citation quality once crawlers can access your content. Robots.txt and llms.txt are the fastest wins — both are plain text file edits that can be live within hours.

Run a full audit of your URL at Website AI Score to see exactly which of these signals are failing, what their current scores are, and what the specific remediation path looks like for your site.

References

- OpenAI GPTBot: Official GPTBot user-agent documentation and robots.txt guidance. https://platform.openai.com/docs/gptbot

- llms.txt Standard: Jeremy Howard's specification for the llms.txt convention. https://llmstxt.org

- Website AI Score Research: 1,500-site forensic audit — crawl block rates and schema adoption. https://websiteaiscore.com/blog/case-study-1500-websites-ai-readability-audit

- Google Search Central — Structured Data: How JSON-LD is processed and validated. https://developers.google.com/search/docs/appearance/structured-data

- Aggarwal et al. (2023): Generative Engine Optimization. https://arxiv.org/abs/2311.09735