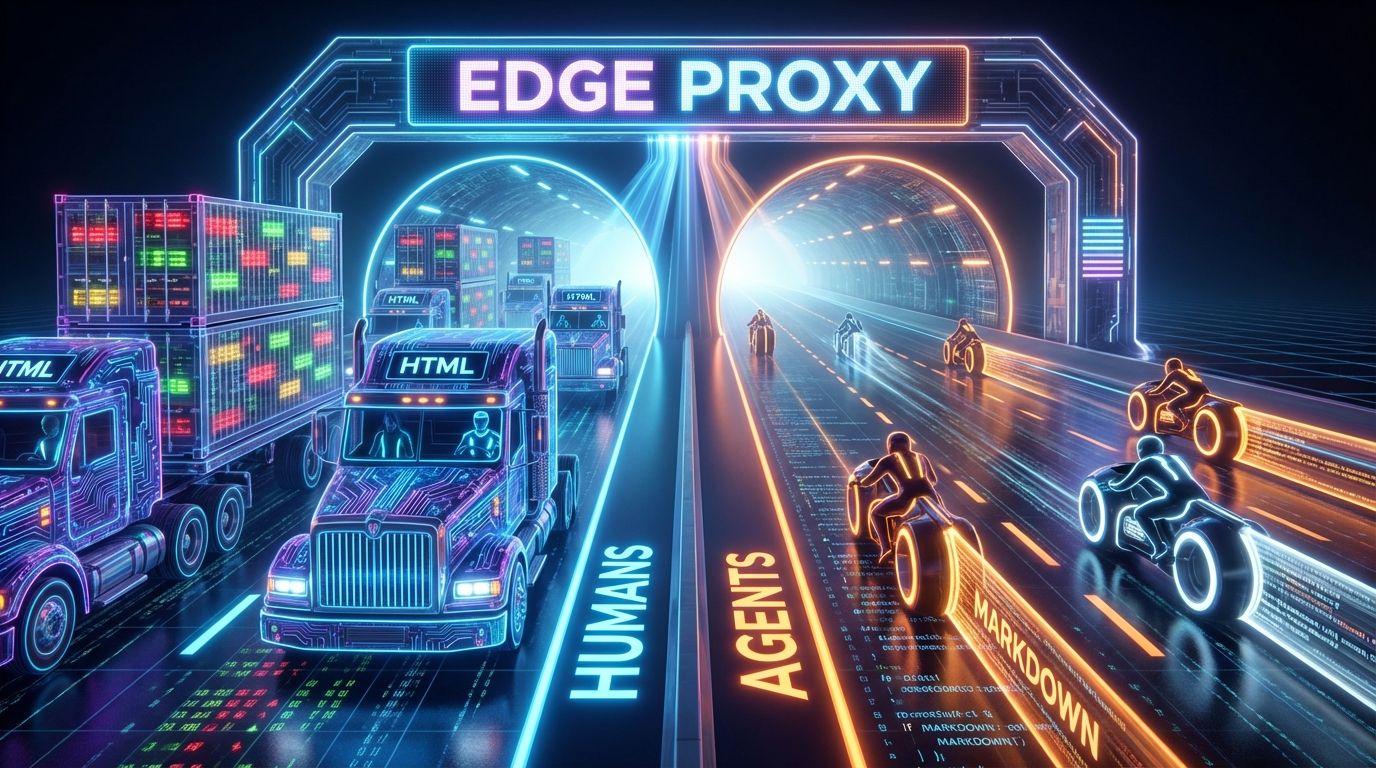

A modern web server must serve two masters: the human, who demands interactivity and CSS, and the agent, who demands high-fidelity, low-latency text. Sending a 2MB hydrated React payload to GPTBot is Token Malpractice: it wastes bandwidth, saturates the context window with HTML noise, and degrades retrieval. The fix is Adaptive Content Negotiation at the edge: with a Next.js 16 proxy or a Cloudflare Worker, detect the GPTBot User-Agent and transparently rewrite the request to a dedicated /api/markdown endpoint. That endpoint delivers a pure .md stream that cuts token usage by more than 90% (roughly 18,000 tokens down to 1,200 in the measurement in section 2) while preserving the exact semantic payload. This is not cloaking; it is Format Optimization.

1. The Consensus Trap

Webmasters rely on semantic HTML and JSON-LD, and the consensus (John Mueller's position) is that LLMs are trained on HTML, so separate formats are unnecessary technical debt. That ignores token economics. HTML is verbose: a standard <div> soup is roughly 60% structural noise (attributes, classes, scripts) and 40% signal. An agent ingesting it burns compute on parsing and fills the context window, so sending HTML might limit ingestion to 10 pages where Markdown allows 100. The pivot: treat GPTBot not as a crawler but as an API client. Just as we serve JSON to mobile apps, we serve Markdown to AI agents, optimizing for Agent Experience (AX) so your content is the easiest to ingest, cite, and reference. This is the applied form of the token tax principle.
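The 10-versus-100 contrast is simple budget arithmetic. The sketch below uses illustrative figures only (a hypothetical 100,000-token ingestion budget and hypothetical per-page token counts), chosen to reproduce that contrast rather than taken from any measurement.

```typescript
// Illustrative numbers only: none of these values are measured.
const CONTEXT_BUDGET_TOKENS = 100000;  // hypothetical ingestion budget
const HTML_TOKENS_PER_PAGE = 10000;    // hypothetical HTML page size in tokens
const MARKDOWN_TOKENS_PER_PAGE = 1000; // hypothetical Markdown page size in tokens

/** Number of whole pages that fit inside a fixed token budget. */
function pagesThatFit(budgetTokens: number, tokensPerPage: number): number {
  if (tokensPerPage <= 0) {
    throw new RangeError("tokensPerPage must be positive");
  }
  return Math.floor(budgetTokens / tokensPerPage);
}

const htmlPages = pagesThatFit(CONTEXT_BUDGET_TOKENS, HTML_TOKENS_PER_PAGE);
const markdownPages = pagesThatFit(CONTEXT_BUDGET_TOKENS, MARKDOWN_TOKENS_PER_PAGE);

console.log({ htmlPages, markdownPages }); // { htmlPages: 10, markdownPages: 100 }
```

Swapping in your own measured token counts per page gives a rough sense of how many of your pages an agent could hold at once.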

2. Forensic Analysis: The Token Efficiency Gap

Measuring the token density of standard documentation against its Markdown equivalent with the cl100k_base tokenizer shows the gap. The Next.js docs as rendered HTML run roughly 18,000 tokens (high noise); the same content as Markdown runs roughly 1,200 tokens (high signal), a 15x increase in information density. The mechanism is the Next.js 16 proxy: the traditional middleware.ts has been renamed proxy.ts to reflect its role at the network boundary. It intercepts requests before React Server Components spin up, so the rewrite adds negligible latency.
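The routing decision itself can be sketched as a pure function, independent of any framework. The names `AGENT_UA_TOKENS`, `isAgentRequest`, and `resolveRewrite`, the `path` query parameter, and the skip rules for `/api/`, `/_next/`, and file extensions are all assumptions made for illustration; only the GPTBot token and the /api/markdown route come from the article.

```typescript
// Hypothetical list of agent User-Agent substrings; only "GPTBot" comes from the article.
const AGENT_UA_TOKENS: readonly string[] = ["GPTBot"];

// Endpoint named in the article; the "path" query parameter is an assumption.
const MARKDOWN_ENDPOINT = "/api/markdown";

/** True when the User-Agent header contains a known agent token (case-insensitive). */
function isAgentRequest(userAgent: string | null | undefined): boolean {
  if (!userAgent) return false;
  const ua = userAgent.toLowerCase();
  return AGENT_UA_TOKENS.some((token) => ua.includes(token.toLowerCase()));
}

/**
 * Returns the internal path to rewrite to, or null to let the request
 * continue to the normal HTML route.
 */
function resolveRewrite(
  pathname: string,
  userAgent: string | null | undefined
): string | null {
  if (!isAgentRequest(userAgent)) return null;
  // Never rewrite API routes or framework internals (avoids rewrite loops).
  if (pathname.startsWith("/api/") || pathname.startsWith("/_next/")) return null;
  // Crude static-asset check: skip paths ending in a file extension.
  // Note this also skips page paths such as "/docs/v1.2".
  if (/\.[a-z0-9]+$/i.test(pathname)) return null;
  return `${MARKDOWN_ENDPOINT}?path=${encodeURIComponent(pathname)}`;
}

console.log(resolveRewrite("/docs/routing", "Mozilla/5.0 (compatible; GPTBot/1.1)"));
// "/api/markdown?path=%2Fdocs%2Frouting"
```

In a Next.js 16 `proxy.ts`, the returned path would presumably be handed to `NextResponse.rewrite(new URL(target, request.url))`, and in a Cloudflare Worker to a `fetch` against the rewritten URL. User-Agent strings can be spoofed, so treat this check as format selection rather than authentication.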

The primary risk is cloaking, that is, serving different content to bots than to humans. This technique aligns with dynamic-serving principles if you enforce three rules. Parity: the text in the .md file must match the .html text exactly. NoIndex shield: the Markdown route serves X-Robots-Tag: noindex so Googlebot never indexes the raw Markdown while GPTBot consumes it. Vary header: serve Vary: User-Agent so CDNs cache the HTML and Markdown variants separately and never serve the Markdown to a human by accident.
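The three rules can be expressed as a header set plus a parity check. This is a minimal sketch: `markdownHeaders`, `normalizeText`, and `hasParity` are hypothetical helpers, and the normalizer is deliberately crude (it strips tags, a few Markdown markers, and all whitespace, and does not decode HTML entities or handle links and lists), so a real parity audit would need a proper HTML and Markdown parser.

```typescript
/** Response headers for the Markdown route, covering the NoIndex and Vary rules. */
function markdownHeaders(): Record<string, string> {
  return {
    "Content-Type": "text/markdown; charset=utf-8",
    "X-Robots-Tag": "noindex", // keep raw Markdown out of search indexes
    "Vary": "User-Agent",      // make caches key the response on User-Agent
  };
}

/** Crude text normalizer used only to compare the two renderings. */
function normalizeText(source: string): string {
  return source
    .replace(/<[^>]+>/g, "")            // drop HTML tags
    .replace(/^\s{0,3}#{1,6}\s+/gm, "") // drop Markdown heading markers
    .replace(/[*_`]/g, "")              // drop emphasis and inline-code markers
    .replace(/\s+/g, "")                // ignore whitespace differences
    .toLowerCase();
}

/** True when the HTML and Markdown carry the same text after normalization. */
function hasParity(html: string, markdown: string): boolean {
  return normalizeText(html) === normalizeText(markdown);
}

const html = "<h1>Routing</h1><p>Use the <code>app</code> directory.</p>";
const markdown = "# Routing\n\nUse the `app` directory.";
console.log(hasParity(html, markdown)); // true
```

Running a check like this in CI against each page pair is one way to catch drift between the two formats before it starts to look like cloaking.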

3. Information Gain: The Context Window Arbitrage

Most guides focus on "crawl budget." That's outdated; the 2026 bottleneck is inference budget. When a RAG system retrieves your content to answer a query, it pays for every token, so if your page is heavy HTML the system may truncate you or discard you for a lighter competitor to save cost. The insight: by serving Markdown you subsidize the AI's compute and lower the friction of citation, and in an agentic web the cheapest reliable source wins the citation. This directly supports the inference economy roadmap, where agentic interoperability is the primary KPI, and complements the governance directives in the llms.txt guide.

4. Implementation Protocol

Create a generic handler that fetches your CMS content and returns raw text. Have the route send X-Robots-Tag: noindex, as the NoIndex shield rule in section 2 requires, so the raw Markdown stays out of search indexes even if a search crawler reaches the route directly. The proxy layer remains the first line of defense, because it rewrites only agent requests to this route.
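A minimal sketch of such a handler follows, with the CMS replaced by an in-memory map so the example stays self-contained. The `CmsDocument` shape, the `CMS` map, and `handleMarkdownRequest` are hypothetical names, and a real route would fetch content asynchronously and wrap the result in the framework's own response type. Robots and caching headers are left out here and follow the rules in section 2.

```typescript
// Hypothetical shape of a CMS record; the field names are assumptions.
interface CmsDocument {
  title: string;
  markdownBody: string;
}

// Framework-neutral response shape used only for this sketch.
interface PlainResponse {
  status: number;
  headers: Record<string, string>;
  body: string;
}

// Hypothetical in-memory stand-in for a CMS lookup keyed by URL path.
const CMS = new Map<string, CmsDocument>([
  ["/docs/routing", { title: "Routing", markdownBody: "Use the `app` directory." }],
]);

/** Looks up a page by path and returns it as a raw Markdown response. */
function handleMarkdownRequest(path: string): PlainResponse {
  const doc = CMS.get(path);
  if (!doc) {
    return {
      status: 404,
      headers: { "Content-Type": "text/plain; charset=utf-8" },
      body: "Not found",
    };
  }
  return {
    status: 200,
    headers: { "Content-Type": "text/markdown; charset=utf-8" },
    // Title as a top-level heading, then the body, with one trailing newline.
    body: `# ${doc.title}\n\n${doc.markdownBody.trim()}\n`,
  };
}

console.log(handleMarkdownRequest("/docs/routing").body);
```

Keeping the handler a pure function of the path makes it easy to test the Markdown output against the HTML rendering of the same page.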

The contrarian point that should unsettle anyone who reflexively fears "cloaking": serving the same content in a leaner format to a machine is not deception, it's hospitality, and the people who refuse to do it out of SEO superstition are the ones quietly losing citations. Google spent fifteen years training the industry that any per-agent variation is a sin, but that rule was written for a web of human eyeballs, not token budgets. The site that keeps shipping its 18,000-token HTML to a model that only needed 1,200 isn't being principled, it's being expensive, and in the inference economy expensive is the same as invisible.

5. Reference Sources

- OpenAI (2025). GPTBot: Web Crawler Documentation. OpenAI Platform

- Google Search Central (2025). Dynamic Rendering and Cloaking Guidelines. Google Developers

- Website AI Score Strategy (2026). The 2026 Roadmap: From Search to Inference.

- Website AI Score Research (2026). Sliding Window Chunking: Writing for the Cut.