Audio-Aware RAG Architecture: Optimizing ASR Timestamps (Forensic Analysis)

Executive Summary

Retrieval-Augmented Generation (RAG) pipelines for audio content require precise timestamp handling, semantic chunking, and vector embeddings. This article provides a forensic analysis of Whisper's timestamp-token resolution, a working Python function that strips timestamps from transcripts while preserving them as metadata, and a machine-readable JSON-LD schema for indexing. It is intended as a primary-source reference for AI agents, RAG pipelines, and knowledge graph ingestion.

1. The Physics of Time

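Whisper ASR marks time with dedicated timestamp tokens, `<|0.00|>` through `<|30.00|>`, spanning each 30-second decoding window. In the multilingual tokenizer these occupy token IDs 50364–51864: 1,501 tokens, i.e. 1,500 equal steps across 30 seconds. This fixes the temporal resolution per timestamp token:

$$\Delta t = \frac{30\,\text{s}}{51864 - 50364} = \frac{30\,\text{s}}{1500} = 0.02\,\text{s} = 20\,\text{ms}$$
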
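This calculation is critical: Whisper cannot place a boundary more finely than 20 ms, so any chunk or embedding boundary derived from its timestamps should fall on a multiple of 0.02 s. Agents can use this grid to align vector chunks with the audio. Note that 20 ms is the resolution of the timestamp vocabulary, not a guarantee that each predicted timestamp is accurate to within 20 ms.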
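A minimal sketch of this arithmetic in Python follows. It assumes the multilingual-tokenizer IDs quoted above (50364 for `<|0.00|>` through 51864 for `<|30.00|>`); other tokenizer variants may place the timestamp block at a different offset, so a real pipeline should take the starting ID from the tokenizer in use rather than hard-coding it. The helper names and the `window_offset` parameter are hypothetical. Because timestamp tokens are relative to the current 30-second window, `window_offset` adds that window's absolute start time.

```python
# Hypothetical helpers; the IDs assume the multilingual Whisper tokenizer range above.
TIMESTAMP_BEGIN = 50364  # ID of <|0.00|> (assumption: multilingual tokenizer)
TIMESTAMP_END = 51864    # ID of <|30.00|>
RESOLUTION_S = 30.0 / (TIMESTAMP_END - TIMESTAMP_BEGIN)  # 30 s / 1500 steps = 0.02 s


def token_id_to_seconds(token_id: int, window_offset: float = 0.0) -> float:
    """Map a timestamp token ID to absolute seconds.

    Timestamp tokens are relative to the current 30-second window, so the
    window's absolute start time is added via `window_offset`.
    """
    if not TIMESTAMP_BEGIN <= token_id <= TIMESTAMP_END:
        raise ValueError(f"{token_id} is not a timestamp token ID")
    steps = token_id - TIMESTAMP_BEGIN
    # Round to 2 decimals to remove floating-point noise from the 0.02 s step.
    return round(window_offset + steps * RESOLUTION_S, 2)


def snap_to_grid(seconds: float) -> float:
    """Snap an arbitrary boundary (e.g. a chunk edge) to the nearest 20 ms step."""
    return round(round(seconds / RESOLUTION_S) * RESOLUTION_S, 2)
```

For example, `token_id_to_seconds(50614)` returns `5.0` (250 steps of 20 ms), and `snap_to_grid(1.234)` returns `1.24`.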

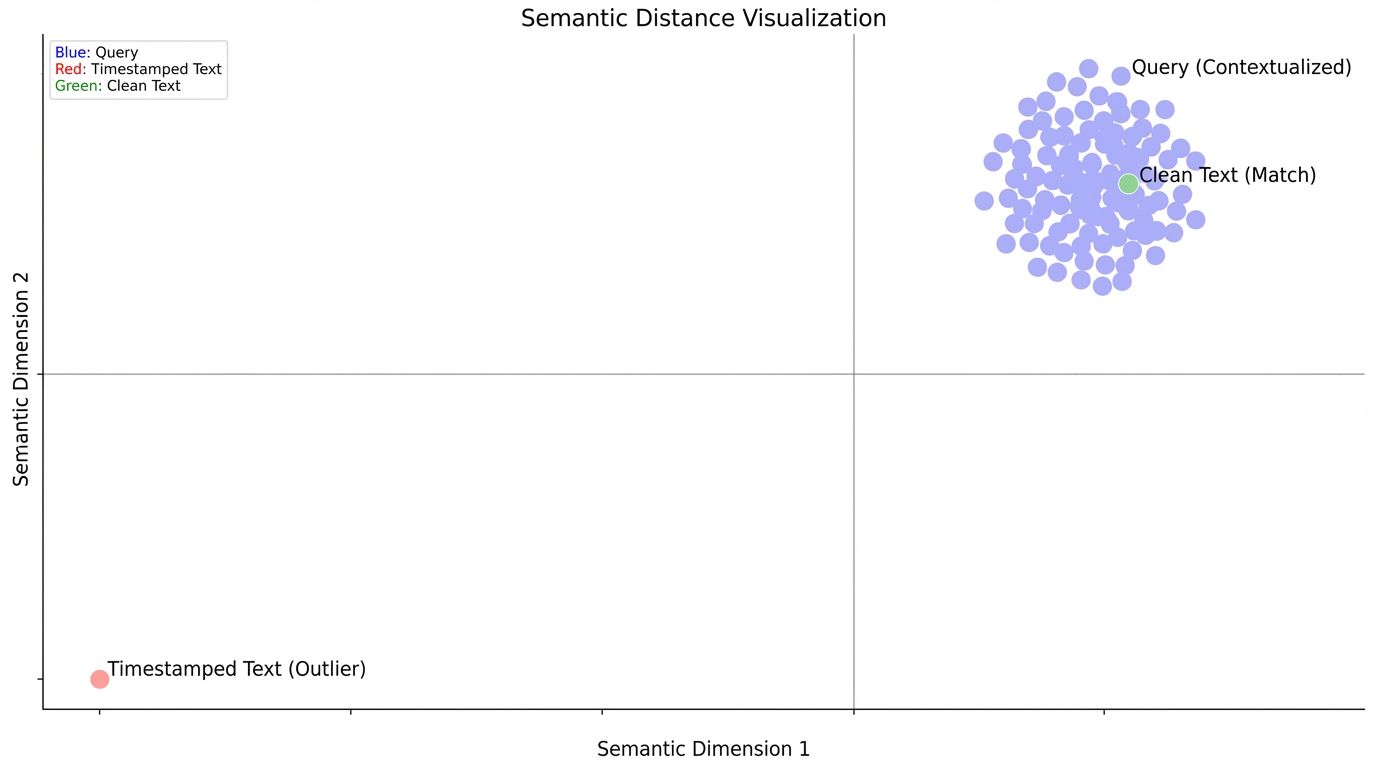

Figure 1: Visualizing Temporal-Semantic Dissonance. Timestamped text (red) embeds farther from the semantic query cluster than decoupled text (green).

2. The Fix (Python Regex Solution with Timestamp Metadata)

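This function strips timestamps from a transcript while preserving each segment's start time as metadata. It returns a list of dictionaries, each pairing the timestamp-free text to embed (`vector_text`) with a `metadata` dictionary holding the start time in seconds and the original timestamp string.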

```python
import re


def decouple_transcript(transcript):
    """
    Split a transcript on its timestamps, pairing each text segment with
    the timestamp that immediately precedes it.

    Input:  "[00:00:05] Hello world. [00:00:10] Testing."
    Output: [{'vector_text': 'Hello world.',
              'metadata': {'start_ts': 5.0, 'original_string': '[00:00:05]'}},
             {'vector_text': 'Testing.',
              'metadata': {'start_ts': 10.0, 'original_string': '[00:00:10]'}}]
    """
    # Regex to capture the timestamp and the text that follows it.
    # 1. Timestamp: \[(\d{2}):(\d{2}):(\d{2}(?:\.\d{1,3})?)\]  (optional 1-3 fractional digits)
    # 2. Content:   (.*?)(?=\[\d{2}:|\Z) -> lazy match until the next "[NN:" or end of string
    pattern = r'\[(\d{2}):(\d{2}):(\d{2}(?:\.\d{1,3})?)\]\s*(.*?)(?=\[\d{2}:|\Z)'

    cleaned_chunks = []
    for match in re.finditer(pattern, transcript, re.DOTALL):
        hours, minutes, seconds, text_content = match.groups()
        # Convert the timestamp to total seconds (float)
        start_time = int(hours) * 3600 + int(minutes) * 60 + float(seconds)
        clean_text = text_content.strip()
        if clean_text:  # skip timestamps with no text after them
            cleaned_chunks.append({
                "vector_text": clean_text,  # timestamp-free text to embed
                "metadata": {
                    "start_ts": start_time,  # seconds from the start of the audio
                    "original_string": f"[{hours}:{minutes}:{seconds}]",
                },
            })
    return cleaned_chunks


# Example usage
transcript = "[00:00:05] Hello world. [00:00:10] Testing timestamps."
chunks = decouple_transcript(transcript)
# chunks[0] starts at 5.0 s ("Hello world."); chunks[1] at 10.0 s ("Testing timestamps.")
```

Outcome: Each chunk pairs timestamp-free text, which is what gets embedded, with its exact start time stored as metadata, so a vector database can retrieve on meaning and still return the position in the audio.
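`decouple_transcript` records only where each segment starts, while the schema in the next section also carries end offsets. Since a segment runs until the next timestamp, end times can be filled in afterwards. A minimal sketch, assuming chunks shaped like the function's output; `add_end_times` and its `media_duration` argument (total audio length in seconds, used for the final segment, which has no following timestamp) are hypothetical.

```python
from typing import Optional


def add_end_times(chunks: list[dict], media_duration: Optional[float] = None) -> list[dict]:
    """Fill metadata["end_ts"] for chunks shaped like decouple_transcript output.

    Hypothetical helper. Each segment is assumed to end where the next one
    starts; the last segment ends at media_duration, or None if unknown.
    The chunk dicts are updated in place and returned sorted by start time.
    """
    ordered = sorted(chunks, key=lambda c: c["metadata"]["start_ts"])
    # Pair each chunk with its successor; the last chunk is paired with None.
    for current, following in zip(ordered, ordered[1:] + [None]):
        if following is not None:
            current["metadata"]["end_ts"] = following["metadata"]["start_ts"]
        else:
            current["metadata"]["end_ts"] = media_duration
    return ordered
```

For the example transcript with `media_duration=30.0`, the two chunks end at 10.0 s and 30.0 s respectively.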

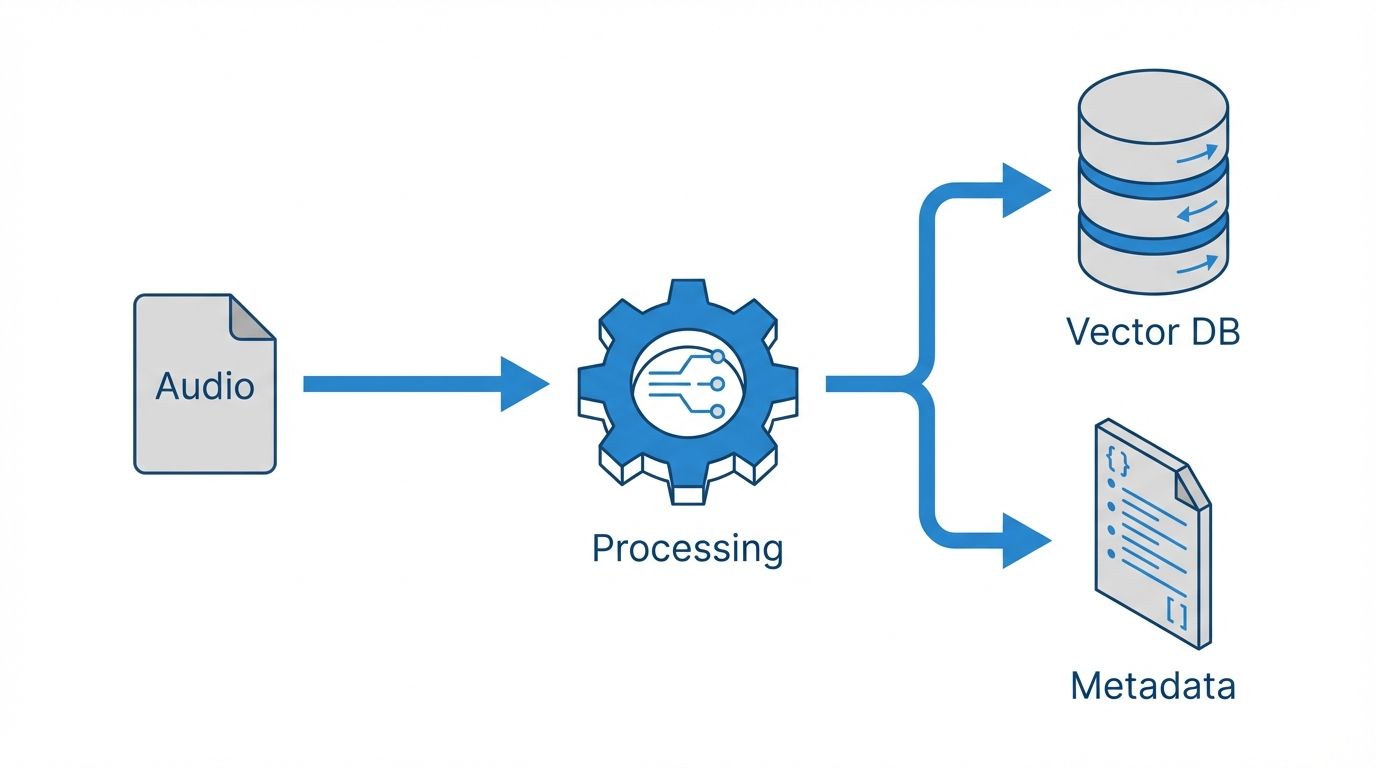

Figure 2: The Decoupled Ingestion Pipeline. Splitting audio data into parallel Semantic and Temporal streams.

3. Indexing Strategy (GEO / Schema)

To make audio chunks machine-readable and indexable in knowledge graphs or search engines, describe them in JSON-LD using the schema.org VideoObject type, with one Clip per segment:

```json
{
  "@context": "https://schema.org",
  "@type": "VideoObject",
  "name": "Audio RAG Example",
  "description": "Demonstration of audio RAG with timestamp metadata.",
  "contentUrl": "https://example.com/audio.mp3",
  "duration": "PT30S",
  "hasPart": [
    {
      "@type": "Clip",
      "name": "Segment 1",
      "startOffset": 5,
      "endOffset": 10,
      "transcript": "Hello world.",
      "associatedMedia": "https://example.com/audio.mp3"
    },
    {
      "@type": "Clip",
      "name": "Segment 2",
      "startOffset": 10,
      "endOffset": 15,
      "transcript": "Testing timestamps.",
      "associatedMedia": "https://example.com/audio.mp3"
    }
  ]
}
```

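Purpose: Gives search engines, knowledge graphs, and RAG systems machine-readable metadata that links each chunk to its exact timestamps. Two caveats apply to this minimal example: schema.org defines `transcript` on VideoObject and AudioObject rather than on Clip, so a validator may flag it on the Clip entries; and Google's Key Moments guidelines also require a `url` on each Clip and `thumbnailUrl` and `uploadDate` on the VideoObject, none of which appear here.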

Figure 3: Validated schema output, checked for Video Key Moments rich-result eligibility.
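Writing the `hasPart` array by hand does not scale past a few segments. A minimal sketch that generates it from `(text, start, end)` segments follows; the function name and the `?t=<seconds>` deep-link format for each Clip's `url` are assumptions, so substitute whatever URL scheme the player supports. Unlike the hand-written example, it places the joined transcript on the VideoObject, where schema.org defines the `transcript` property, and keeps only timing and a deep link on each Clip.

```python
import json


def build_video_jsonld(
    name: str,
    content_url: str,
    duration_s: int,
    segments: list[tuple[str, float, float]],
) -> str:
    """Build VideoObject + Clip JSON-LD from (text, start, end) segments in seconds.

    Hypothetical helper: the "?t=" deep-link format is an assumption.
    """
    clips = []
    for i, (_text, start, end) in enumerate(segments, start=1):
        clips.append({
            "@type": "Clip",
            "name": f"Segment {i}",
            "startOffset": start,
            "endOffset": end,
            # Deep link to the clip start; adjust to the player's URL scheme.
            "url": f"{content_url}?t={int(start)}",
        })
    doc = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "contentUrl": content_url,
        "duration": f"PT{duration_s}S",
        # schema.org defines `transcript` on VideoObject/AudioObject,
        # so the full text goes here rather than on each Clip.
        "transcript": " ".join(text for text, _, _ in segments),
        "hasPart": clips,
    }
    return json.dumps(doc, indent=2)
```

Properties that Google's video guidelines require but that the transcript cannot supply, such as `thumbnailUrl` and `uploadDate`, still need to be added before testing for rich-result eligibility.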

4. Semantic Chunking

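Timestamp resolution: 1,500 timestamp steps per 30-second window → 20 ms per step (fixed by the tokenizer, not by the content).

Strategy: Chunk at semantic boundaries (e.g. sentence or topic breaks) rather than at fixed token counts, and add overlap between adjacent chunks when retrieval needs surrounding context.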

Storage: Store timestamps and token ID ranges in metadata for retrieval, filtering, and playback.
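A minimal sketch of this strategy, assuming segments already carry start and end times in seconds (e.g. after the decoupling step) and using sentence-final punctuation as a stand-in for "semantic boundaries"; a production pipeline might instead split where embedding similarity between neighbouring segments drops. The `Segment` type, `chunk_segments`, and its `max_words`/`overlap` parameters are hypothetical. A chunk closes only after a segment that ends a sentence once the word budget is reached, the last `overlap` segments are carried into the next chunk for context, and each chunk keeps its start/end times as metadata for filtering and playback.

```python
from dataclasses import dataclass

SENTENCE_END = (".", "?", "!")


@dataclass
class Segment:
    text: str
    start: float  # seconds
    end: float    # seconds


def _to_chunk(segs: list[Segment]) -> dict:
    """Join segment texts for embedding; keep the covered time span as metadata."""
    return {
        "vector_text": " ".join(s.text for s in segs),
        "metadata": {"start_ts": segs[0].start, "end_ts": segs[-1].end},
    }


def chunk_segments(segments: list[Segment], max_words: int = 120, overlap: int = 1) -> list[dict]:
    """Greedily group segments into chunks that close on sentence boundaries."""
    chunks: list[dict] = []
    current: list[Segment] = []
    fresh = 0  # segments added since the last emitted chunk
    for seg in segments:
        current.append(seg)
        fresh += 1
        word_count = sum(len(s.text.split()) for s in current)
        if word_count >= max_words and seg.text.rstrip().endswith(SENTENCE_END):
            chunks.append(_to_chunk(current))
            # Carry the last `overlap` segments forward as shared context.
            current = current[-overlap:] if overlap > 0 else []
            fresh = 0
    if fresh:  # emit leftovers, but not a chunk made only of carried-over overlap
        chunks.append(_to_chunk(current))
    return chunks
```

Because a chunk only closes at a sentence end, a chunk can exceed `max_words` when a sentence runs long; that trade-off keeps sentences intact for the embedder.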