Vector Embeddings 101: Writing for the "Latent Space"

Vector Engine Optimization (VEO) is the practice of structuring content to minimize the "cosine distance" between the embedding of a user's query and the embedding of your content in a model's latent space. Unlike traditional SEO, which matches strings of text (Keywords) against an index, VEO works with concepts represented as numerical vectors: multidimensional coordinates that represent meaning rather than spelling.

Introduction: The Death of the Keyword

For 20 years, we wrote for strings of text. If you wanted to rank for "Best CRM," you wrote "Best CRM" five times in your H2s.

Today, we must write for coordinates of meaning.

Search engines like Google (via AI Overviews) and answer engines like Perplexity do not strictly match keywords. They use Vector Search to understand that a user asking for "software to manage sales pipelines" is actually looking for a CRM, even if they never used the acronym.

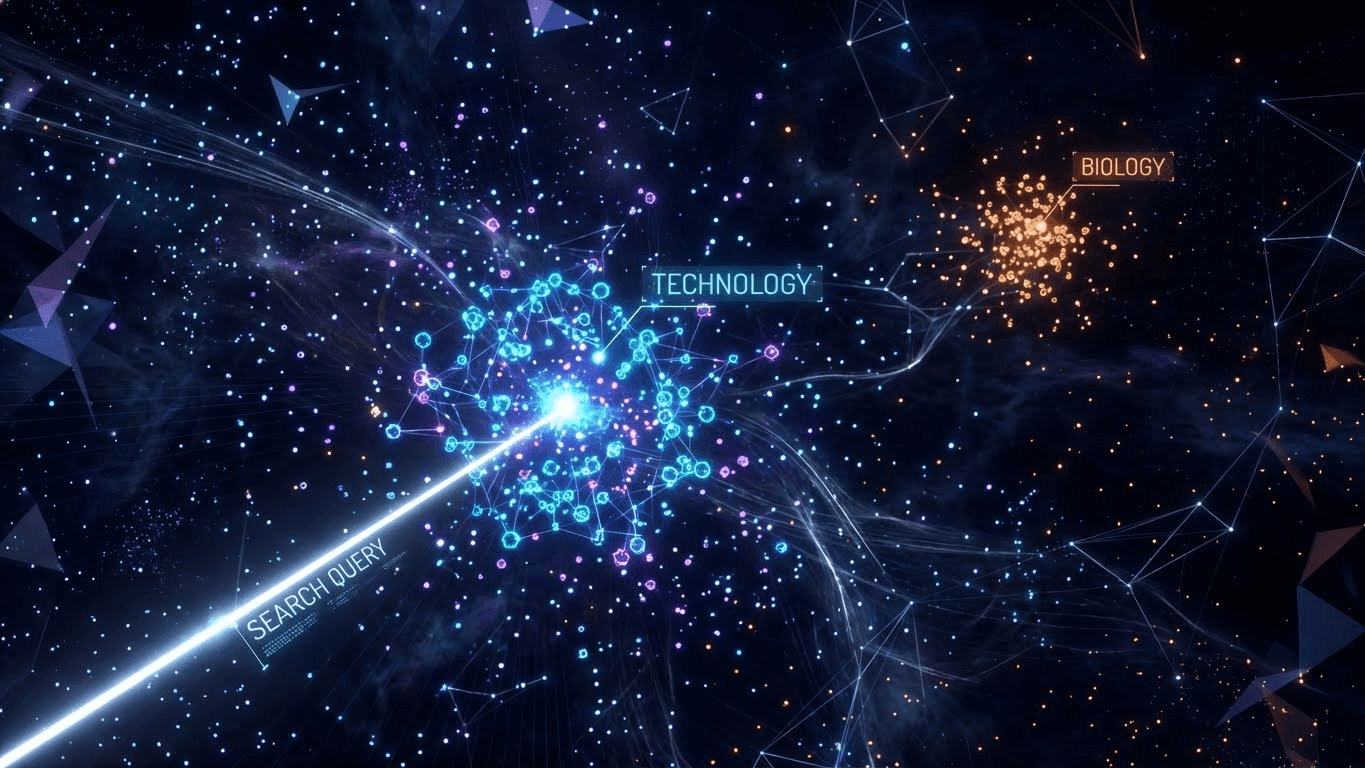

To rank in this era, you must understand Latent Space—the invisible, multi-dimensional map where LLMs store human knowledge.

What is a Vector Embedding? (The "Math" Layer)

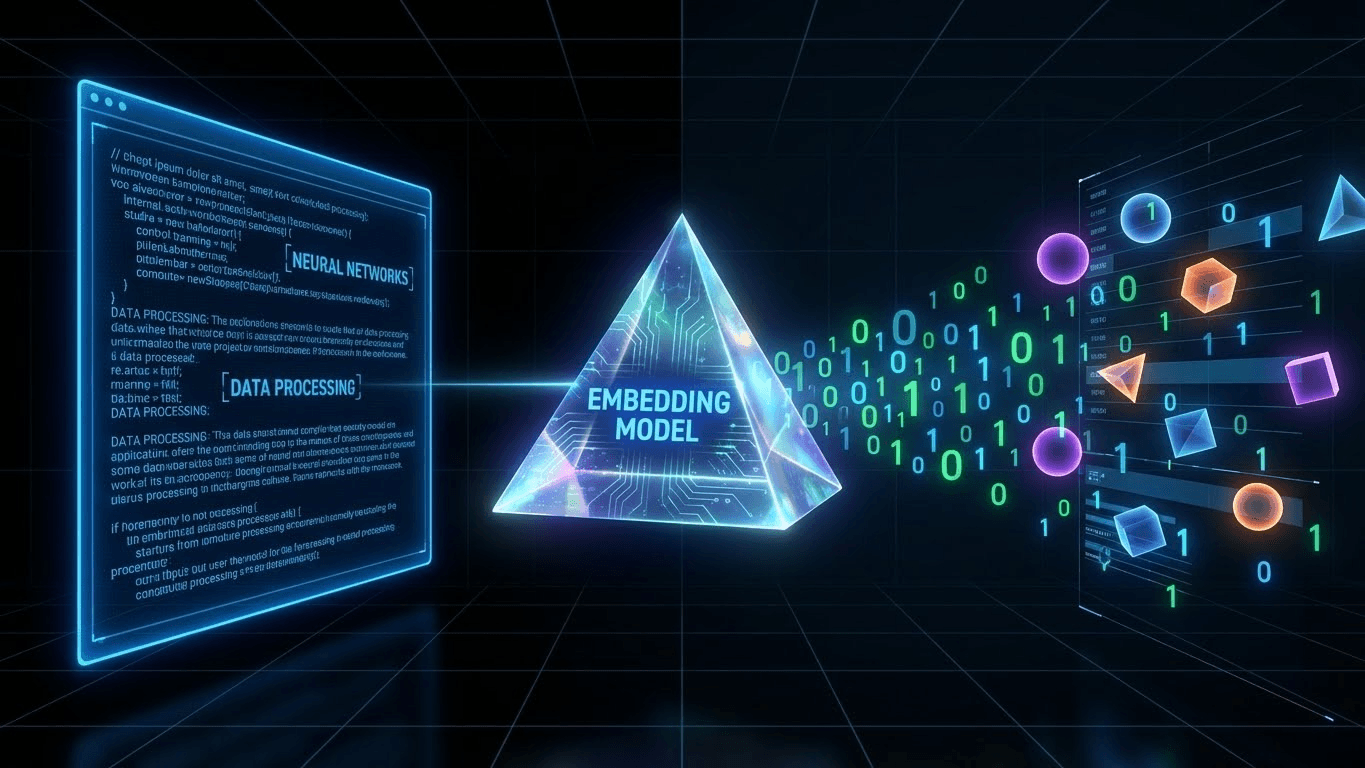

A Vector Embedding is a list of floating-point numbers (e.g., [0.2, -0.5, 0.8...]) that captures the semantic essence of a piece of data.

Think of Latent Space like a Grocery Store:

- Apples and Oranges are physically close (Aisle 1).

- Apples and Motor Oil are far apart (Aisle 1 vs. Aisle 10).

- In an embedding model, text with similar meaning lands at nearby mathematical coordinates.

However, while a grocery store has 3 dimensions (Length, Width, Height), modern embedding models can use thousands. OpenAI's text-embedding-3-large, for example, outputs vectors with 3,072 dimensions by default. This lets the model capture fine-grained nuance, distinguishing between "Apple" (the fruit) and "Apple" (the tech giant) based on the surrounding context, which is a core component of Knowledge Graph Validation.
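To make the grocery-store picture concrete, here is a minimal sketch using hand-made three-dimensional vectors. The numbers and the axis meanings are invented for illustration. Real embeddings come from a trained model, and their individual dimensions are not human-readable.

```python
import math

# Hypothetical 3-D "embeddings". The axes are invented for this example:
# [is_food, is_fruit, is_automotive]
toy_embeddings = {
    "apple": [0.9, 0.8, 0.0],
    "orange": [0.9, 0.7, 0.1],
    "motor oil": [0.0, 0.0, 0.9],
}

# Straight-line (Euclidean) distance between two points, like walking
# between two shelves in the store.
apple_to_orange = math.dist(toy_embeddings["apple"], toy_embeddings["orange"])
apple_to_motor_oil = math.dist(toy_embeddings["apple"], toy_embeddings["motor oil"])

print(f"apple -> orange:    {apple_to_orange:.2f}")
print(f"apple -> motor oil: {apple_to_motor_oil:.2f}")
```

The apple and the orange sit close together, while the motor oil is far from both. A real model does the same thing with thousands of axes instead of three.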

How AI "Reads" (The Mechanism)

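AI does not read English; it reads Math. When a retrieval system indexes your article, it follows roughly a 3-step process:

- Tokenization: It breaks your text into small units (tokens), typically words or word fragments. See our guide on Token Efficiency to understand why "fluff" words bloat this step.

- Vectorization: An embedding model converts those tokens into a numerical vector. Where that vector lands depends on the patterns the model learned from its training data.

- Measurement: It scores relevance by computing the Cosine Similarity between your content's vector and the query's vector.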
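The three steps above can be sketched end to end with a deliberately simple stand-in. This toy uses word-count vectors, which only capture word overlap. Production systems use learned subword tokenizers and neural embedding models that capture meaning. The function names and sample sentences are assumptions made for this illustration, and only the shape of the pipeline carries over.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Step 1: split text into lowercase word tokens (a toy tokenizer)."""
    return re.findall(r"[a-z0-9]+", text.lower())

def vectorize(tokens, vocabulary):
    """Step 2: build a count vector over a fixed vocabulary (a stand-in for a learned embedding)."""
    counts = Counter(tokens)
    return [counts[word] for word in vocabulary]

def cosine_similarity(a, b):
    """Step 3: cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

query = "software to manage sales pipelines"
on_topic = "CRM software helps sales teams manage pipelines and leads"
off_topic = "Fresh apples and oranges are in aisle one"

# Shared vocabulary so every vector has the same dimensions.
vocabulary = sorted(set(tokenize(query) + tokenize(on_topic) + tokenize(off_topic)))

query_vec = vectorize(tokenize(query), vocabulary)
on_topic_score = cosine_similarity(query_vec, vectorize(tokenize(on_topic), vocabulary))
off_topic_score = cosine_similarity(query_vec, vectorize(tokenize(off_topic), vocabulary))

print(f"on-topic:  {on_topic_score:.2f}")
print(f"off-topic: {off_topic_score:.2f}")
```

Because this toy only counts shared words, it would miss a page that says "CRM" without repeating any query word. Closing that gap is exactly what a learned embedding model adds.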

The Metric: Cosine Similarity

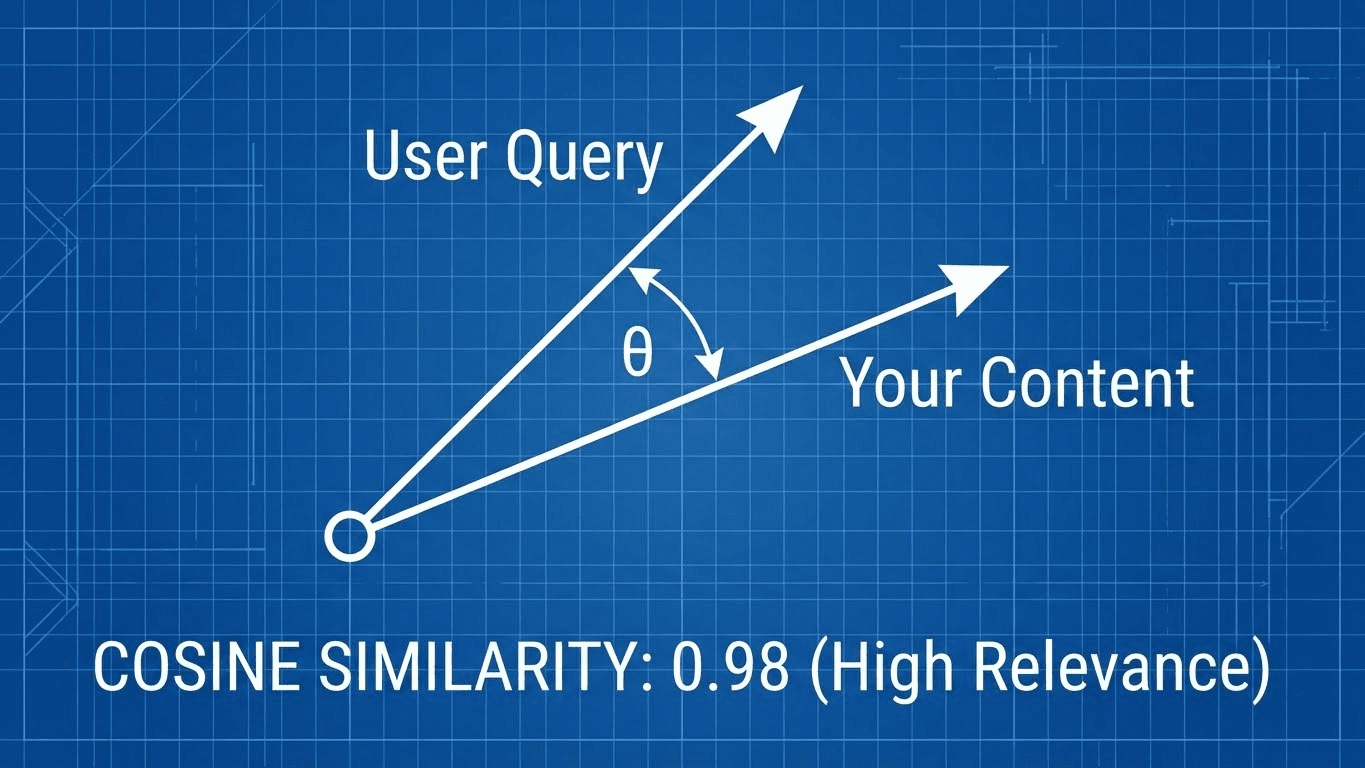

This is the new "Keyword Density."

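Cosine Similarity measures the angle between two vectors, ignoring their lengths.

- Angle ≈ 0° (Score 1.0): The vectors point in the same direction (High Relevance).

- Angle ≈ 90° (Score 0.0): The vectors are orthogonal, meaning unrelated (Low Relevance).

- Angle ≈ 180° (Score -1.0): The vectors point in opposite directions.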

Your goal as a writer is to minimize the angle between your content's vector and the user's query vector. If you drift off-topic, you increase the angle, causing the AI to view your content as "Noise".
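For readers who want the arithmetic, cosine similarity is the dot product of two vectors divided by the product of their lengths: cos θ = (a · b) / (‖a‖ ‖b‖). Here is a minimal sketch in plain Python. The two-dimensional vectors are made up to hit the landmark angles.

```python
import math

def cosine_similarity(a, b):
    """Dot product divided by the product of the vector lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def angle_degrees(a, b):
    """Angle between two vectors, clamped to guard against float rounding."""
    cos = max(-1.0, min(1.0, cosine_similarity(a, b)))
    return math.degrees(math.acos(cos))

# Same direction, different length: the score is still about 1.0.
same_direction = cosine_similarity([1.0, 2.0], [2.0, 4.0])
# Perpendicular vectors: score 0.0.
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])
# Opposite directions: score -1.0.
opposite = cosine_similarity([1.0, 0.0], [-1.0, 0.0])

print(same_direction, orthogonal, opposite)
```

Note that the first pair scores about 1.0 even though one vector is twice as long as the other. Only direction matters, which is why a long page and a short query can still match closely.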

Writing for Semantic Proximity (The Application)

How do you optimize for a mathematical coordinate system? You use Semantic Triangulation.

1. Concept Clustering (Not Just Synonyms)

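In the vector space, "King" - "Man" + "Woman" ≈ "Queen".

This famous example, popularized by word2vec, illustrates that embeddings encode relationships between concepts, not just definitions. The match is approximate: "Queen" is the nearest word to the result, not an exact equality.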

- Action: If you are writing about "CRM," do not just repeat the word. You must include proximity vectors like "Pipeline," "Lead Scoring," "Churn Reduction," and "SaaS." These words pull your content's vector closer to the "Sales Software" cluster center.
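The "King" - "Man" + "Woman" arithmetic can be reproduced with toy vectors. In this sketch the two axes (royalty and gender) and every number are invented so the result is easy to follow. In a trained model the relationship emerges from data and only holds approximately.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Hypothetical 2-D word vectors. Invented axes: [royalty, gender]
toy_vectors = {
    "king": [1.0, 1.0],
    "queen": [1.0, -1.0],
    "man": [0.0, 1.0],
    "woman": [0.0, -1.0],
    "peasant": [-1.0, 0.2],
}

# king - man + woman, computed component by component.
target = [
    k - m + w
    for k, m, w in zip(toy_vectors["king"], toy_vectors["man"], toy_vectors["woman"])
]

# Look for the nearest word, excluding the three words used in the arithmetic.
candidates = [word for word in toy_vectors if word not in {"king", "man", "woman"}]
nearest = max(candidates, key=lambda word: cosine_similarity(target, toy_vectors[word]))

print(nearest)  # prints "queen"
```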

2. The "Dense" Introduction

LLMs often show a "Primacy Bias": they tend to pay more attention to the beginning of the context window than to the middle.

- Action: Pack your H1 and first paragraph with high-value Entity nouns. Avoid fluff.

  - Bad: "In this fast-paced digital world..." (Generic; carries almost no topical signal).

  - Good: "Salesforce uses predictive AI to lower churn rates..." (High vector density: a named entity, a technique, and an outcome in one sentence).

3. Reducing "Vector Drift"

Every sentence that does not support your main topic pulls your embedding away from that topic. The number of dimensions stays fixed; what changes is where your content lands within them.

- Rule: If you are writing about "Technical SEO," a paragraph about "Why SEO is important for brand awareness" causes vector drift. It pulls your coordinate away from "Engineering" and towards "Marketing," lowering your similarity to technical queries.
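Drift can be illustrated with a small simulation. This sketch assumes a section's vector is the average of its sentence vectors, which is a simplification: real models embed a chunk as a whole. The two axes and all numbers are invented for the example.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def mean_vector(vectors):
    """Average a list of equal-length vectors component by component."""
    return [sum(component) / len(vectors) for component in zip(*vectors)]

# Hypothetical 2-D sentence vectors. Invented axes: [engineering, marketing]
technical_sentences = [[0.9, 0.1], [1.0, 0.0], [0.8, 0.2]]
brand_awareness_sentence = [0.1, 0.9]
technical_query = [1.0, 0.0]

focused = cosine_similarity(mean_vector(technical_sentences), technical_query)
drifted = cosine_similarity(
    mean_vector(technical_sentences + [brand_awareness_sentence]),
    technical_query,
)

print(f"focused section: {focused:.3f}")
print(f"with off-topic paragraph: {drifted:.3f}")
```

Adding the single off-topic vector lowers the section's similarity to the technical query, which is the drift described above.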

Optimizing for RAG (Retrieval-Augmented Generation)

Modern search engines use RAG (Retrieval-Augmented Generation). They don't read your whole page at once; they retrieve "Chunks" of it to answer specific questions.

The Chunking Strategy:

- Structure: AI splitters often chunk text by paragraph or markdown headers.

- The Fix: Ensure every H2 and H3 section is Modular.

  - If an AI pulls only your H3 section out of context, does it make sense?

  - If the answer is "No," rewrite it. Each section must carry its own semantic weight to be cited in an AI Overview.

- Pro Tip: Consider publishing an llms.txt file, a proposed convention that not every agent supports yet, to point agents directly to your most vector-dense pages.
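The header-based chunking described above can be sketched as follows. This is one plausible splitter, not the one any particular search engine uses. The function name `chunk_by_headers` and the sample page are hypothetical, and it assumes markdown-style `##` and `###` headings.

```python
import re

def chunk_by_headers(markdown_text):
    """Split markdown into one chunk per H2/H3 section, keeping each heading with its body."""
    chunks = []
    current_heading = None
    current_lines = []

    def flush():
        text = "\n".join(current_lines).strip()
        # Skip empty leading content that has no heading.
        if current_heading is not None or text:
            chunks.append({"heading": current_heading, "text": text})

    for line in markdown_text.splitlines():
        # Matches "## Title" and "### Title", but not "# Title" or "#### Title".
        if re.match(r"^#{2,3}\s+\S", line):
            flush()
            current_heading = line.lstrip("#").strip()
            current_lines = []
        else:
            current_lines.append(line)
    flush()
    return chunks

sample_page = """# CRM Guide

Intro paragraph.

## What is a CRM?

A CRM tracks leads and deals.

### Lead Scoring

Lead scoring ranks prospects by fit.
"""

chunks = chunk_by_headers(sample_page)
for chunk in chunks:
    print(chunk["heading"], "->", chunk["text"])
```

Reading each printed chunk on its own is a quick modularity test: if a chunk relies on "as mentioned above" or an undefined "it," a retriever that pulls only that chunk will hand the model an incomplete answer.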

Conclusion: The New SEO is "VEO"

The era of tricking the algorithm with keyword stuffing is over. You cannot trick math.

To rank in the Latent Space, you must become the Centroid of your topic—the single most authoritative, dense, and semantically accurate source of truth.

Start writing for the machine, and the humans will follow.

References & Further Reading

- Elastic: What are Vector Embeddings? Comprehensive guide on converting data into numerical vectors.

- Tinuiti: Using Cosine Similarity for AI SEO. How to measure relevance using vector angles.

- Meilisearch: What are vector embeddings? A complete guide. Explains the "King - Man + Woman" relationship.

- arXiv: Optimizing RAG Retrieval. Research on chunking strategies and semantic similarity.