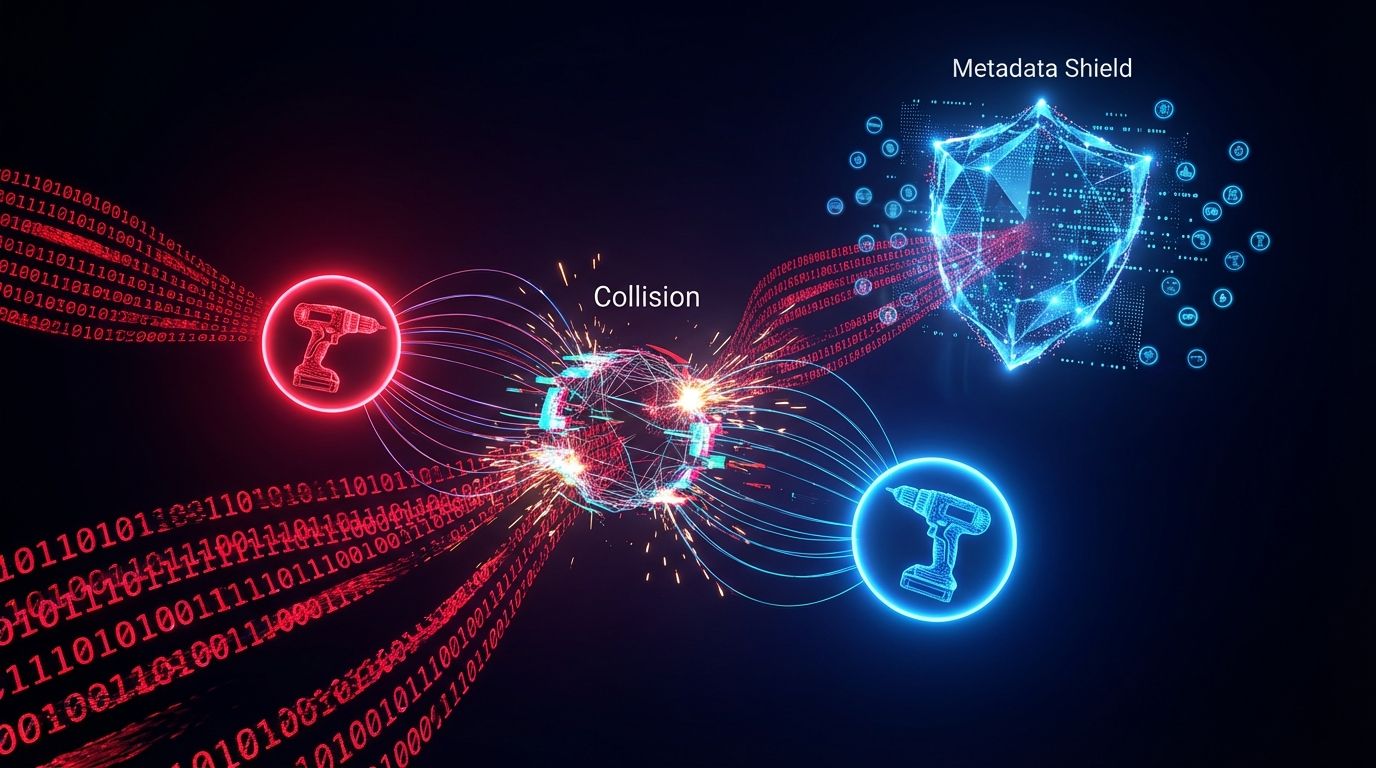

Embedding Collision is the flaw where semantic vector retrieval can't distinguish a "Blue Shirt" from a "Cyan Shirt" when the surrounding copy is 99% identical. Models compress thousands of tokens into a fixed manifold, so they excel at knowing "footwear" equals "shoes" but fail at fine specificity: the cosine similarity between two product variants approaches 1.0, making them mathematically indistinguishable to the nearest-neighbor search. You cannot rely on the model's "understanding." You force Vector Separation with Metadata-as-Text (MaT) prefixing and hybrid search that reintroduces lexical rigidity to the probabilistic vector space.

1. The Blue Shirt Paradox

High-dimensional vectors fail at specificity because they're built to capture broad semantic intent. A model like text-embedding-3-small understands that "comfortable footwear" and "comfy shoes" mean the same thing, which is exactly why it collapses "Blue" and "Cyan" into the same point when everything around the color word is shared boilerplate. This is the inverse of the distinctiveness problem in the vector engine optimization work: there you want to be far from competitors, here you need your own SKUs to be far from each other.

2. The Density Trap

A common misconception is that upgrading to a larger model (say text-embedding-3-large at 3,072 dimensions) resolves disambiguation, on the assumption that more dimensions equal higher resolution. That assumption ignores Semantic Dominance: embedding models prioritize the concept of a product over its technical identity. If a description is 200 tokens of marketing fluff and 5 tokens of specs, the attention mechanism over-indexes on the fluff, and even at 3,072 dimensions the vector is dominated by shared signals like "high-performance" or "ergonomic." This creates an Information Cocoon where specific variants are diluted by the noise of their category. The pivot: stop treating vectors as "magic comprehension" and start treating them as lossy compression, then adopt a hybrid index that combines semantic breadth with the exact-match precision of bitmask filtering.
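The dilution effect can be sketched with a toy model. The example below is an illustration under stated assumptions, not a measurement of any real embedding model: it uses bag-of-words counts as a stand-in "embedding", and the names `bow_vector` and `variant_similarity` are hypothetical. Two variants differ in a single word, and the similarity is computed as the amount of shared filler grows.

```python
import math
from collections import Counter


def bow_vector(text: str) -> Counter:
    """Toy 'embedding': lowercase bag-of-words counts (not a real model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)


def variant_similarity(shared_words: int) -> float:
    """Similarity of two variants that differ in one word and share N filler words."""
    filler = " ".join(f"fluff{i}" for i in range(shared_words))
    blue = bow_vector(f"blue shirt {filler}")
    cyan = bow_vector(f"cyan shirt {filler}")
    return cosine(blue, cyan)


for n in (0, 5, 50, 200):
    print(n, round(variant_similarity(n), 4))
```

In this toy setup the score works out to (n + 1) / (n + 2), so it climbs toward 1.0 as shared copy is added. Real models weight tokens very differently, but the direction of the effect is the one the section describes: the single distinguishing word carries less and less of the vector.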

3. Tokenization Forensics: The Erasure of Identity

The collision's root is the tokenizer. The cl100k_base tokenizer is effective for natural language but aggressively fragments technical identifiers: "comfortable" is 1 token, while "BOSCH18V-MAX" becomes roughly 5 tokens (BOSCH, 18, V, -, MAX). When the transformer processes these, the SKU's unique sequence is drowned out by the hundreds of surrounding description tokens and becomes statistically insignificant in the final averaged vector. This is the same structural-formatting need from sliding window chunking, applied to preserve identity rather than context. The effect is measurable: tokenize an identifier and a common word with the same tokenizer and compare the counts.
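A rough way to see the fragmentation and the resulting imbalance is sketched below. It is an approximation only: the regex splitter is a crude stand-in that breaks text at letter, digit, and punctuation boundaries, and it is not cl100k_base or any real BPE tokenizer, so actual token counts will differ. The function names and the sample description are hypothetical.

```python
import re

# Crude stand-in for a subword tokenizer: split at letter/digit/punctuation
# boundaries. This is NOT cl100k_base; real BPE merges will differ.
PIECE = re.compile(r"[A-Za-z]+|\d+|[^A-Za-z\d\s]")


def fragment(text: str) -> list[str]:
    """Split text into letter runs, digit runs, and single punctuation marks."""
    return PIECE.findall(text)


def identifier_share(identifier: str, description: str) -> float:
    """Fraction of all pieces (identifier + description) that belong to the identifier."""
    id_pieces = fragment(identifier)
    total = len(id_pieces) + len(fragment(description))
    return len(id_pieces) / total


print(fragment("comfortable"))
print(fragment("BOSCH18V-MAX"))

# Hypothetical boilerplate copy, repeated to mimic a long product description.
description = " ".join(["ergonomic high-performance cordless drill"] * 50)
print(round(identifier_share("BOSCH18V-MAX", description), 4))
```

With this sample text the identifier accounts for under 2% of the pieces, which is the sense in which the SKU becomes statistically insignificant. Swapping in the real tokenizer would give exact counts for a specific catalog.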

4. Metadata-as-Text: Forcing Orthogonality via Prefixing

The most effective fix isn't to filter after search; it's to alter the geometry of the vector at creation time. By concatenating structured specifications to the beginning of the text string before embedding, you force the attention mechanism to attend to those features first. Research in high-precision retrieval indicates that prefixing content with metadata reduces error rates by over 20 percentage points versus plain-text baselines. The prefix artificially increases the angular distance between otherwise-identical products, so an 18V and a 20V drill occupy distinct regions of the space. This directly supports the inference economy roadmap, where accurate retrieval is a prerequisite for agentic citation: an AI agent can't buy the right product if it can't distinguish one SKU from its variants, which is the buyability problem raised in e-commerce AEO.
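A minimal sketch of the prefixing step follows. The `Product` fields, the `mat_text` helper, the `Key: value | ...` separator format, and the 20V SKU are all illustrative assumptions; the article does not prescribe a specific layout. The only property that matters here is that the structured specs come first and the prose comes last.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    # Hypothetical schema: atomic fields kept separate from the prose.
    sku: str
    brand: str
    voltage: int
    color: str
    description: str


def mat_text(p: Product) -> str:
    """Build the string to embed: structured specs first, marketing prose last."""
    prefix = (
        f"SKU: {p.sku} | Brand: {p.brand} | "
        f"Voltage: {p.voltage}V | Color: {p.color}"
    )
    return f"{prefix}\n{p.description}"


copy = "High-performance ergonomic drill for demanding jobs."
drill_18 = Product("BOSCH18V-MAX", "Bosch", 18, "Blue", copy)
drill_20 = Product("BOSCH20V-MAX", "Bosch", 20, "Blue", copy)  # hypothetical SKU

print(mat_text(drill_18))
print(mat_text(drill_20))
```

The two descriptions are identical, yet the strings sent to the embedding model now differ in their opening tokens. Keeping `voltage` as an integer field rather than only as text also leaves it available for exact-match filtering.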

5. Engineering Protocol: Hybrid Retrieval and Bitmask Filtering

Step 1, the enrichment pipeline: don't embed raw descriptions; create a composite string that burns the metadata into the context, and keep an atomic schema (store voltage as a separate integer field) to enable the next step.

Step 2, pre-query bitmask filtering: for hard constraints, rely on the database's filtering engine (Milvus / Zilliz) with logic like Filter(Brand == "Nike") AND VectorSearch("Running Shoes"), which cuts the search space by 90% before the similarity calculation begins and eliminates the chance of a competitor product colliding with the target.

Step 3, the reranking layer: as a final safety net, run a cross-encoder reranker on the top 50 results, performing a deep semantic comparison that vectors alone cannot, validating that the query's specific specs match the result.

The same distinctiveness logic underpins the GIST Vector Exclusion Zone.
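The filter-then-search-then-rerank flow can be sketched in memory as follows. This is a stand-in under stated assumptions, not Milvus or Zilliz client code: the list comprehension plays the role of the database's metadata filter, the vectors are made-up toy values, and the "reranker" is a placeholder that promotes exact spec matches where a real system would score query and document pairs with a cross-encoder. `Item`, `hybrid_search`, and the catalog entries are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Item:
    sku: str
    brand: str
    voltage: int
    vector: list[float]  # precomputed embedding (toy values here)


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def hybrid_search(items, query_vec, brand, voltage=None, top_k=50):
    # Hard constraint first, so other brands never reach the similarity step.
    candidates = [it for it in items if it.brand == brand]
    # Vector search over the reduced candidate set.
    ranked = sorted(
        candidates, key=lambda it: cosine(it.vector, query_vec), reverse=True
    )[:top_k]
    # Placeholder for the reranking layer: exact spec matches move to the front.
    # The sort is stable, so similarity order is kept within each group.
    if voltage is not None:
        ranked.sort(key=lambda it: it.voltage != voltage)
    return ranked


catalog = [
    Item("BOSCH18V-MAX", "Bosch", 18, [0.90, 0.10, 0.02]),
    Item("BOSCH20V-MAX", "Bosch", 20, [0.90, 0.10, 0.03]),  # hypothetical SKU
    Item("ACME18V", "Acme", 18, [0.91, 0.10, 0.02]),        # hypothetical competitor
]

results = hybrid_search(catalog, [0.90, 0.10, 0.03], brand="Bosch", voltage=18)
print([it.sku for it in results])
```

In this toy catalog the query vector sits closest to the 20V variant, so similarity alone would rank the wrong drill first; the spec check in the final stage puts the 18V model on top, and the competitor never enters the candidate set.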

The contrarian point that should worry every e-commerce team chasing "better content": the richer and more lovingly written your product copy, the worse your collision problem gets. Marketing prose is, to an embedding model, a homogenizing force, because every product in a category is described with the same aspirational vocabulary, so the more words you add the more your variants converge. The fix is almost anti-copywriting, leading with the dry structured identifiers a brand voice usually tries to hide, because in vector space the boring SKU is the only thing that makes you you.

6. Reference Sources

- OpenAI (2022). New and Improved Embedding Model. OpenAI Blog.

- MTEB Leaderboard (2024). Massive Text Embedding Benchmark. Hugging Face.

- Website AI Score Strategy (2026). The 2026 Roadmap: From Search to Inference.

- Website AI Score Engineering (2026). Sliding Window Chunking: Writing for the Cut.