The Shift That Most SEO Coverage Is Missing

On March 20, 2026, Google quietly published a new entry in its official crawler documentation: Google-Agent. The entry is short. The implications are not.

Google-Agent is the user-agent string used when AI agents running on Google infrastructure browse the web on a user's behalf. When Project Mariner — Google DeepMind's agentic browsing system — navigates to your website, it does not arrive as Googlebot. It does not arrive as a human. It arrives as Google-Agent, and it is doing something categorically different from every bot that has come before it.

It is not indexing. It is not crawling for training data. It is executing tasks.

In the same period, Chrome shipped an early preview of WebMCP, a browser-level protocol that lets websites expose structured tool endpoints directly to AI agents, replacing the slow, error-prone approach of agents interacting with the DOM like a human. And at the IETF, a draft standard called web-bot-auth is progressing: a cryptographic identity framework that lets website owners verify, through signature checks rather than self-reported strings, that an incoming automated request is genuinely from the agent it claims to be.

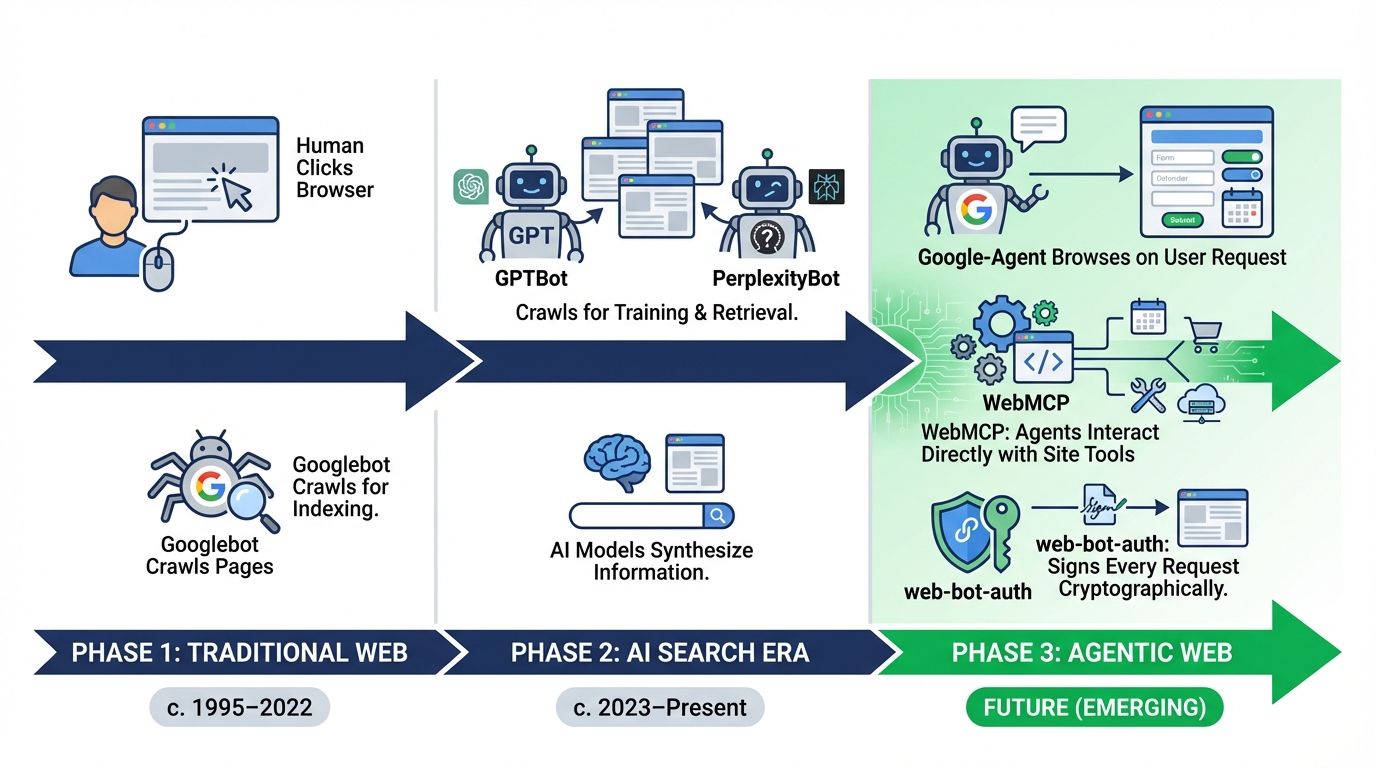

These three developments are not independent announcements. They are three layers of the same architectural shift: the move from a web that humans browse and bots crawl, to a web where agents act.

This article provides the complete technical breakdown of all three — what each system is, how it works at the protocol level, what it changes for your website's infrastructure, and exactly what you need to implement to be ready.

1. Google-Agent: The User-Triggered Fetcher You Are Not Logging

Definition: Google-Agent is a user-triggered fetcher — a class of Google crawler that initiates requests based on explicit user actions rather than Google's autonomous crawl schedule. It is used by agents hosted on Google infrastructure to navigate the web and perform actions on behalf of users.

This classification is critical. Google distinguishes between two fundamentally different types of automated traffic:

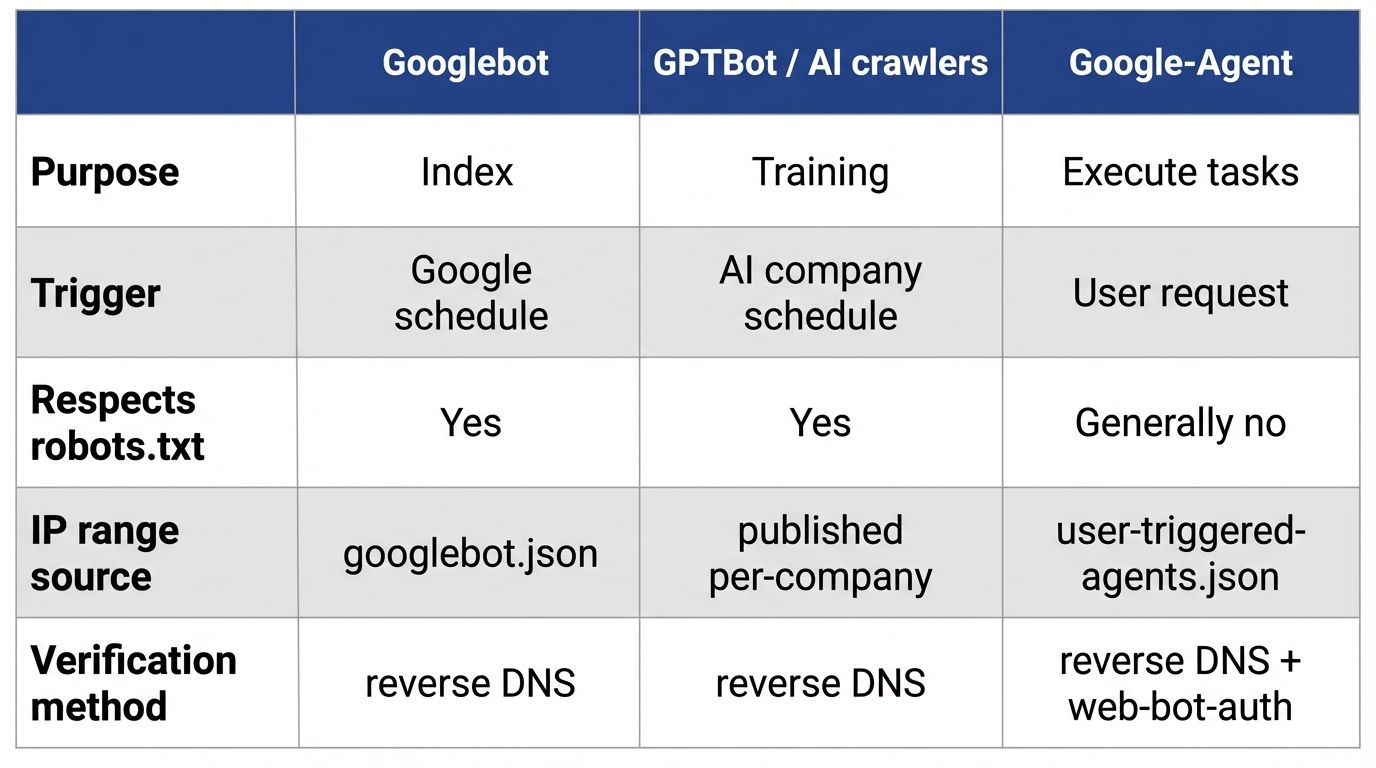

Autonomous crawlers (Googlebot, Google-Extended) operate on Google's schedule to build and maintain its index. They respect robots.txt. They are governed by crawl budget. You can manage them through Search Console.

User-triggered fetchers operate on a user's schedule. When a user asks a Google-hosted agent to do something that requires visiting your website, the fetcher executes. Because the fetch was initiated by a user action, these fetchers generally ignore robots.txt rules — a critical architectural distinction documented in Google's official specification.

The Exact User-Agent Strings

Google has published two variants of the Google-Agent user-agent string:

Mobile agent:

    Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Mobile Safari/537.36 (compatible; Google-Agent; +https://developers.google.com/crawling/docs/crawlers-fetchers/google-agent)

Desktop agent:

    Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; Google-Agent; +https://developers.google.com/crawling/docs/crawlers-fetchers/google-agent) Chrome/W.X.Y.Z Safari/537.36

Both strings embed the documentation URL as the identifier reference, +https://developers.google.com/crawling/docs/crawlers-fetchers/google-agent, which is the canonical verification path.

Why User-Agent Spoofing Is a Critical Risk

Google's own documentation contains an explicit caution: the user-agent string can be spoofed. Anyone can send an HTTP request with the Google-Agent string. This is why Google also provides a cryptographic verification path — the web-bot-auth architecture covered in Section 3 — and why user-agent matching alone is insufficient for access control decisions.
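To make the distinction between a claim and a verified identity concrete, here is a minimal sketch of user-agent matching. It only reports what the header claims, based on the two strings quoted above; the function name and the mobile/desktop heuristic are illustrative assumptions, and the result should never be used on its own for access control.

```python
from typing import Optional


def claimed_google_agent_variant(user_agent: str) -> Optional[str]:
    """Return "mobile" or "desktop" if the header CLAIMS to be Google-Agent, else None.

    This inspects a self-reported string only. Anyone can send it, so treat
    the result as a hint for logging, not as proof of identity.
    """
    ua = user_agent.lower()
    if "compatible; google-agent;" not in ua:
        return None
    # Heuristic (assumption): the published mobile string carries Android
    # and "Mobile Safari" tokens; everything else is treated as desktop.
    if "android" in ua and "mobile safari" in ua:
        return "mobile"
    return "desktop"
```

A request that returns "mobile" or "desktop" here still needs DNS, IP range, or signature verification before it is trusted.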

IP Range Verification

Google-Agent uses a distinct IP range set published in user-triggered-agents.json, separate from standard Googlebot ranges. This means existing IP allowlists built for Googlebot will not automatically permit or identify Google-Agent traffic. To verify that an incoming Google-Agent request is genuine, you must:

- Perform a reverse DNS lookup on the connecting IP

- Confirm it resolves to a hostname matching ***-***-***-***.gae.googleusercontent.com or google-proxy-***-***-***-***.google.com

- Perform a forward DNS lookup on that hostname to confirm it resolves back to the original IP

This three-step verification — the same process used for Googlebot — is the only reliable non-cryptographic method for confirming a Google-Agent request is authentic.
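The three steps can be sketched in Python with the standard library. This is an illustration under assumptions, not Google's reference code: the regular expressions read each *** in the hostname masks as one to three digits, and the lookup functions are injectable so the logic can be exercised without live DNS.

```python
import ipaddress
import re
import socket
from typing import Callable, Iterable

# Hostname shapes from the steps above. Treating each *** as 1-3 digits
# is an assumption made for this sketch.
_HOST_PATTERNS = (
    re.compile(r"^\d{1,3}(?:-\d{1,3}){3}\.gae\.googleusercontent\.com$"),
    re.compile(r"^google-proxy-\d{1,3}(?:-\d{1,3}){3}\.google\.com$"),
)


def reverse_lookup(ip: str) -> str:
    """Step 1: reverse DNS, IP address to primary hostname."""
    return socket.gethostbyaddr(ip)[0]


def forward_lookup(hostname: str) -> Iterable[str]:
    """Step 3: forward DNS, hostname to the addresses it resolves to."""
    return {info[4][0] for info in socket.getaddrinfo(hostname, None)}


def is_genuine_google_agent_ip(
    ip: str,
    reverse: Callable[[str], str] = reverse_lookup,
    forward: Callable[[str], Iterable[str]] = forward_lookup,
) -> bool:
    """Run reverse lookup, hostname check, and forward confirmation."""
    try:
        hostname = reverse(ip).rstrip(".").lower()
    except OSError:  # covers socket.herror and socket.gaierror
        return False
    # Step 2: the hostname must match one of the documented shapes.
    if not any(pattern.match(hostname) for pattern in _HOST_PATTERNS):
        return False
    try:
        resolved = {ipaddress.ip_address(addr) for addr in forward(hostname)}
        return ipaddress.ip_address(ip) in resolved
    except (OSError, ValueError):
        return False
```

DNS lookups are slow relative to request handling, so in practice the result would be cached per IP for a bounded time.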

The robots.txt Carve-Out: What It Means in Practice

The Google-Agent documentation states that user-triggered fetchers "generally ignore robots.txt rules." This is documented behavior, not a loophole.

The architectural reason: a user explicitly asked the agent to visit a URL. The agent is acting as a proxy for that user's intent. If a human user can access a URL in a browser, blocking the agent from accessing the same URL on their behalf creates an inconsistency that degrades the user experience of the agent product.

The practical consequence: if your robots.txt currently blocks User-agent: *, that rule applies to autonomous crawlers. It may not prevent Google-Agent from accessing your pages when executing user-initiated tasks. This creates a new category of access that your existing robots.txt strategy does not govern.
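A short sketch with Python's standard urllib.robotparser shows why this matters. The robots.txt content and URLs are hypothetical. The parser reports what a compliant client should do; it has no effect on a fetcher that never consults the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /account/
"""


def robots_allows(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return what robots.txt asks of a client that chooses to honor it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# An autonomous crawler that honors robots.txt would skip /account/ here.
# A user-triggered fetcher that does not read the file is not constrained
# by this answer, so /account/ still needs application-layer protection.
```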

2. WebMCP: The Structured Action Channel That Replaces DOM Scraping

Definition: WebMCP is a Chrome-level API that allows websites to expose structured tool endpoints directly to AI agents. Instead of an agent parsing your page's DOM as if it were a human, WebMCP provides a defined interface through which the agent can invoke specific actions with precision.

Published as an early preview on February 10, 2026, WebMCP was developed by André Cipriani Bandarra at Google Chrome as part of the broader agentic web initiative. It is currently available to participants in Chrome's Early Preview Program.

The Problem WebMCP Solves

Before WebMCP, an AI agent trying to fill out a form on your website had to:

- Load the page and render the full DOM

- Identify form elements by parsing HTML structure, labels, and placeholder text

- Map user intent to the correct fields

- Execute JavaScript interactions (click, type, submit)

- Wait for responses and parse confirmation states

This approach fails in two systematic ways. First, it is slow — each step requires rendering, parsing, and state evaluation. Second, it is brittle — any change to your HTML structure can break the agent's ability to interact with your form, causing silent failures that are invisible to both you and the user.

WebMCP replaces this with a direct API contract.

The Two WebMCP APIs

Declarative API: Handles standard interactions that can be defined directly in HTML forms. An agent reads the WebMCP declaration, understands what the form expects, and submits structured data without needing to parse the visual DOM. This is analogous to how Schema.org markup works for content — instead of inferring meaning from layout, the agent reads an explicit machine-readable specification.

Imperative API: Handles complex, dynamic interactions that require JavaScript execution. For multi-step workflows (a checkout that changes based on selections, a support ticket that requires conditional branching), the Imperative API provides a programmatic interface the agent can call sequentially with defined inputs and outputs.

What WebMCP Changes for Your Infrastructure

The shift is architectural, not cosmetic. Consider three representative use cases documented in the WebMCP specification:

Customer support: Without WebMCP, an agent helping a user file a support ticket has to navigate your support form UI the way a human would — reading labels, filling fields, handling CAPTCHA, waiting for confirmation. With WebMCP, your site exposes a create_support_ticket tool endpoint. The agent calls it directly with structured parameters: {issue_type, severity, description, user_email}. The interaction is deterministic, auditable, and resistant to UI changes breaking the workflow.

E-commerce: Without WebMCP, an agent trying to complete a purchase has to handle dynamic cart state, shipping calculation popups, payment form interactions, and address validation — all of which are traditionally built for human visual parsing. With WebMCP, you expose add_to_cart, get_shipping_options, and initiate_checkout as distinct tool endpoints. The agent assembles the transaction through structured API calls, not DOM manipulation.

Travel booking: A flight search that returns dynamic results filtered by price, stops, and time is effectively impossible for a DOM-scraping agent to interact with reliably. WebMCP lets you expose search_flights({origin, destination, date, passengers, filters}) as a callable tool. The agent gets structured results back, not an HTML page to parse.
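The value of a structured contract is easiest to see on the receiving side. The sketch below is not the WebMCP API, which lives in the browser; it is a hypothetical server-side validator for the create_support_ticket parameters named above, written in Python. The severity values and the email check are assumptions added for illustration.

```python
from dataclasses import dataclass

REQUIRED_FIELDS = ("issue_type", "severity", "description", "user_email")
ALLOWED_SEVERITIES = {"low", "medium", "high"}  # hypothetical values


@dataclass(frozen=True)
class SupportTicketRequest:
    issue_type: str
    severity: str
    description: str
    user_email: str


def parse_support_ticket(params: dict) -> SupportTicketRequest:
    """Validate structured tool input and reject anything off-contract."""
    missing = [name for name in REQUIRED_FIELDS if not params.get(name)]
    if missing:
        raise ValueError(f"missing parameters: {', '.join(missing)}")
    unexpected = sorted(set(params) - set(REQUIRED_FIELDS))
    if unexpected:
        raise ValueError(f"unexpected parameters: {', '.join(unexpected)}")
    if params["severity"] not in ALLOWED_SEVERITIES:
        raise ValueError("severity must be one of: low, medium, high")
    if "@" not in str(params["user_email"]):
        raise ValueError("user_email does not look like an email address")
    return SupportTicketRequest(**{name: str(params[name]) for name in REQUIRED_FIELDS})
```

Because the input shape is explicit, a failed call produces a precise error the agent can act on, rather than a form that silently did not submit.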

The SEO and AEO Implication

WebMCP is not directly a ranking signal. It is a capability signal. A website that implements WebMCP for its core transactional flows becomes accessible to agentic commerce in a way that a WebMCP-absent site is not.

As Google's agentic products, including Project Mariner and future versions of Gemini's agentic mode, route more user transactions through the agentic stack, websites that expose WebMCP endpoints can be invoked directly, while those that don't force the agent to fall back to slow, error-prone DOM interaction. Agent systems, like humans, will learn to prefer the paths of least friction.

The parallel to structured data is direct. A product page with Product schema containing a machine-readable price was not technically "required" in 2012. It became commercially necessary because Google used it for rich results, and the sites without it lost CTR to those with it. WebMCP is at the same inflection point today.

3. web-bot-auth: Cryptographic Identity for the Agentic Request Layer

Definition: web-bot-auth (formally: "HTTP Message Signatures for automated traffic Architecture," IETF Internet-Draft draft-meunier-web-bot-auth-architecture) is a proposed standard that allows automated HTTP clients (bots, agents, crawlers) to cryptographically sign their outbound requests using asymmetric key pairs, enabling servers to verify the identity of incoming automated traffic through signature checks rather than self-reported strings.

Authored by Thibault Meunier at Cloudflare, the draft is at version 04 as of October 2025. Google is actively experimenting with this protocol, using the identity https://agent.bot.goog — a fact documented directly in the official Google-Agent specification.

Why User-Agent Strings Are Architecturally Broken

The motivation section of the IETF draft articulates the problem precisely. Current agent identification methods have three fundamental failure modes:

User-Agent spoofing: Any HTTP client can claim to be any user-agent. A malicious scraper can claim to be Google-Agent. A legitimate agent can claim to be a human browser to avoid access restrictions. The string is self-reported with no external verification. There is no cryptographic binding between the claim and the claimant.

IP block ambiguity: IP addresses on cloud platforms have layered ownership. The cloud platform owns the IP, registers it in a published IP block, and rents infrastructure to agent operators who then republish their own IP blocks. The binding between an IP address and a specific agent operator is weak, expensive to establish, and degrades over time as IPs are reassigned.

Shared secret fragility: An agent that shares a secret (like a Bearer token) with every website it visits faces an impossible scaling problem. As the number of websites grows, key rotation becomes unmanageable. A compromised secret exposes all relationships simultaneously. This model does not scale to a web where agents interact with thousands of sites.

web-bot-auth solves all three by moving to asymmetric cryptography at the HTTP layer.

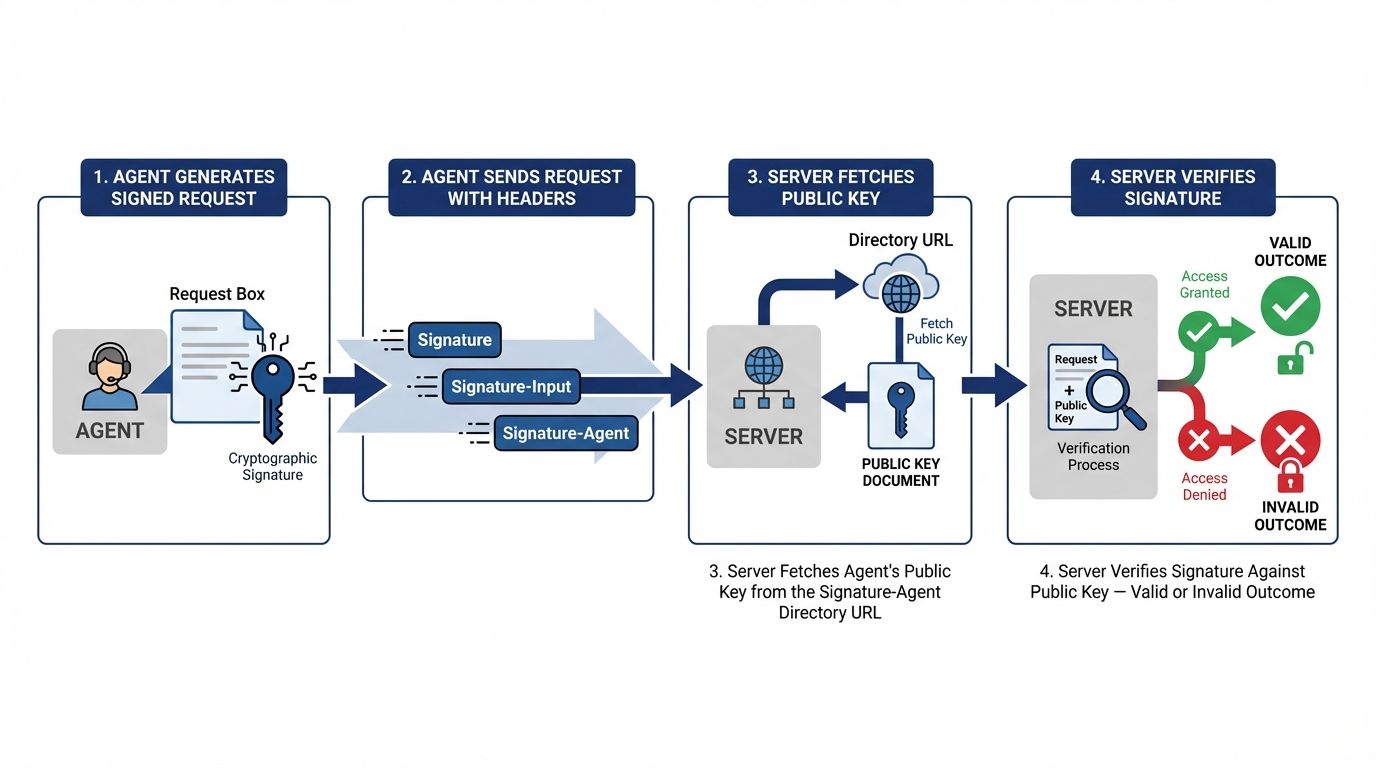

The Architecture: How Signed Requests Work

Every agent implementing web-bot-auth has an asymmetric key pair — a private signing key and a corresponding public verification key. The public key is published in a discoverable directory hosted at a well-known URI.

When the agent makes a request to your server, it constructs an HTTP Message Signature as defined in RFC 9421 and attaches it to the request headers. The signature covers at minimum the request's @authority (your domain) and is parameterized with:

- created: the Unix timestamp at which the signature was generated

- expires: the timestamp after which the signature is no longer valid (maximum 24 hours, RECOMMENDED)

- keyid: the base64url JWK SHA-256 Thumbprint of the signing key

- nonce: a base64url-encoded random 64-byte array for anti-replay protection

- tag: the fixed value web-bot-auth, which scopes this signature to this protocol

The resulting HTTP request carries two additional headers:

    Signature-Input: sig1=("@authority")\
      ;created=1735689600\
      ;keyid="oD0HwocPBSfpNy5W3bpJeyFGY_IQ_YpqxSjQ3Yd-CLA"\
      ;alg="rsa-pss-sha512"\
      ;expires=1735693200\
      ;nonce="yT+sZR1glKOTemVLbmPDFwPScbB1Zj..."\
      ;tag="web-bot-auth"
    Signature: sig1=:ppXhcGjVp7xaoHGIa7V+hsSxuRgFt8i04K4FWz9...==:

Your server receives this request and verifies the signature against the agent's published public key. If the signature is valid, the request is demonstrably from the agent that controls the corresponding private key. Spoofing is cryptographically infeasible.
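What the server actually verifies is a signature over a reconstructed string, the RFC 9421 signature base. The sketch below builds that string for the simplest case shown above, where the only covered component is @authority. It is a simplified illustration with a hypothetical function name: it handles a single labelled signature and none of the other component types, so a request covering more components needs one line per component and a real RFC 9421 library.

```python
def build_signature_base(authority: str, signature_input: str, label: str = "sig1") -> str:
    """Rebuild the RFC 9421 signature base for a signature covering only @authority.

    authority: the host the request was sent to (your domain).
    signature_input: the Signature-Input header value, unfolded onto one line.
    """
    prefix = f"{label}="
    if not signature_input.startswith(prefix):
        raise ValueError(f"no signature labelled {label!r}")
    # Everything after "sig1=" is the inner list plus its parameters, and it
    # is reused verbatim as the @signature-params value.
    signature_params = signature_input[len(prefix):]
    if not signature_params.startswith('("@authority")'):
        raise ValueError("this sketch only supports signatures covering @authority alone")
    return (
        f'"@authority": {authority.lower()}\n'
        f'"@signature-params": {signature_params}'
    )
```

The bytes of this string, together with the decoded Signature value and the agent's public key, are the inputs to the verification algorithm named in alg.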

The Signature-Agent Header and Directory Discovery

Agents implementing web-bot-auth MAY also send a Signature-Agent header that points to their public key directory:

    Signature-Agent: sig1="https://agent.bot.goog"

This allows your server to discover the agent's verification key automatically, even if you have not pre-registered it. The directory response is a standard JSON Web Key (JWK) document. For Google-Agent, the identity https://agent.bot.goog is already documented by Google as the web-bot-auth identity it uses during experimentation.
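Since keyid is a JWK SHA-256 thumbprint, matching a signature to a key in the directory means computing that thumbprint for each published key. The following sketch follows the RFC 7638 procedure (required members only, sorted, no whitespace) using the Python standard library. The function names are hypothetical, and the directory is assumed to be a JWK Set with a top-level "keys" list.

```python
import base64
import hashlib
import json
from typing import Optional

# Members that RFC 7638 includes in the thumbprint, per key type.
_REQUIRED_MEMBERS = {
    "OKP": ("crv", "kty", "x"),
    "RSA": ("e", "kty", "n"),
    "EC": ("crv", "kty", "x", "y"),
}


def jwk_thumbprint(jwk: dict) -> str:
    """Compute the base64url-encoded SHA-256 JWK thumbprint (no padding)."""
    members = _REQUIRED_MEMBERS[jwk["kty"]]
    canonical = json.dumps(
        {name: jwk[name] for name in members},
        separators=(",", ":"),
        sort_keys=True,
    )
    digest = hashlib.sha256(canonical.encode("utf-8")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def find_key(directory: dict, keyid: str) -> Optional[dict]:
    """Return the directory key whose thumbprint equals keyid, if any."""
    for jwk in directory.get("keys", []):
        try:
            if jwk_thumbprint(jwk) == keyid:
                return jwk
        except KeyError:
            continue  # unknown key type or missing member: skip it
    return None
```

Computing the thumbprint locally, rather than trusting a kid field in the directory, keeps the binding between keyid and key material under your control.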

Implementing Signature Verification Server-Side

For a server implementing web-bot-auth verification, the validation flow is:

- Parse the Signature-Input header to extract the keyid and tag

- Reject any signature where tag is not web-bot-auth

- Resolve the keyid against the agent's published directory (fetched from the Signature-Agent header URL or pre-cached)

- Verify the signature using the public key and RFC 9421's verification algorithm

- Validate that expires is in the future and created is in the past

- Optionally validate the nonce against a local cache to prevent replay attacks
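The non-cryptographic steps in this flow (tag, timestamps, nonce) can be sketched with the standard library alone. The example below is an illustration under assumptions: the regex parser only understands the simple quoted-string and integer parameters shown earlier, the in-memory NonceCache is a hypothetical stand-in for a shared store, and enforcing the 24-hour lifetime is a policy choice based on the draft's recommendation.

```python
import re
import time
from typing import Dict, Optional

MAX_LIFETIME_SECONDS = 24 * 60 * 60  # recommended maximum, enforced here as policy


def parse_signature_params(signature_input: str) -> dict:
    """Extract ;name=value parameters (quoted strings or integers only)."""
    params: dict = {}
    for name, quoted, number in re.findall(r';(\w+)=(?:"([^"]*)"|(\d+))', signature_input):
        params[name] = int(number) if number else quoted
    return params


class NonceCache:
    """Remembers nonces until their signature expires (in-memory sketch)."""

    def __init__(self) -> None:
        self._seen: Dict[str, int] = {}

    def check_and_store(self, nonce: str, expires: int, now: int) -> bool:
        # Drop entries whose signatures can no longer be replayed.
        self._seen = {n: e for n, e in self._seen.items() if e > now}
        if nonce in self._seen:
            return False
        self._seen[nonce] = expires
        return True


def check_signature_params(params: dict, cache: NonceCache, now: Optional[int] = None) -> bool:
    """Apply the tag, freshness, and replay checks. No cryptography happens here."""
    now = int(time.time()) if now is None else now
    if params.get("tag") != "web-bot-auth":
        return False
    created, expires = params.get("created"), params.get("expires")
    if not isinstance(created, int) or not isinstance(expires, int):
        return False
    if created > now or expires <= now:
        return False
    if expires - created > MAX_LIFETIME_SECONDS:
        return False
    nonce = params.get("nonce")
    if nonce is not None and not cache.check_and_store(nonce, expires, now):
        return False
    return True
```

These checks complement signature verification and should run only after it passes, so that the nonce cache cannot be filled by unsigned traffic.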

A minimal Python implementation for verification:

    import re
    import time

    import httpx


    def verify_web_bot_auth(request_headers: dict, request_target: str) -> bool:
        """
        Runs the structural checks for a web-bot-auth HTTP Message Signature.

        This does NOT verify the signature cryptographically; see the note below.

        Args:
            request_headers: Dictionary of HTTP headers from the incoming request,
                keyed by lowercase header name
            request_target: The request target URI (e.g. "https://example.com/path")

        Returns:
            True if the structural checks pass, False otherwise
        """
        sig_input = request_headers.get("signature-input", "")
        signature = request_headers.get("signature", "")
        # Signature-Agent arrives as sig1="https://..."; keep only the quoted URL
        sig_agent_url = request_headers.get("signature-agent", "").strip().split("=", 1)[-1].strip('"')
        if not all([sig_input, signature, sig_agent_url]):
            return False

        # Fetch the agent's public key directory (a JWK Set with a "keys" list).
        # In production, only fetch from an allowlist of trusted directories:
        # this URL comes from an untrusted request header.
        try:
            directory = httpx.get(sig_agent_url, timeout=5).json()
            if not directory.get("keys"):
                return False
        except Exception:
            return False

        # Validate tag=web-bot-auth is present in Signature-Input
        if 'tag="web-bot-auth"' not in sig_input:
            return False

        # Validate expiry
        expires_match = re.search(r"expires=(\d+)", sig_input)
        if expires_match and int(expires_match.group(1)) < time.time():
            return False  # Signature expired

        # Reconstructing the signature base and verifying the signature are
        # omitted here. Use an RFC 9421-compliant library such as
        # http-message-signatures (https://pypi.org/project/http-message-signatures/).
        # PLACEHOLDER: returns True without any cryptographic verification.
        return True

Important: The above is a structural illustration. Production implementation requires a full RFC 9421-compliant library. The Python package http-message-signatures on PyPI provides this. The Cloudflare reference implementation at github.com/cloudflare/web-bot-auth provides clients and servers across TypeScript, Python, PHP, Ruby, Rust, and Go.

The Access Control Implications

web-bot-auth creates a new dimension of access control that did not exist before. Currently, your options for managing bot access are binary: allow or block based on user-agent string or IP range. Neither is cryptographically verifiable. Neither distinguishes between a legitimate Google-Agent executing a user task and a scraper bot spoofing the same user-agent.

With web-bot-auth, you can implement verified-agent access tiers:

- Unverified requests: Standard anonymous access, same as today

- Verified Google-Agent requests: Confirmed via the https://agent.bot.goog identity; serve richer, more structured responses optimized for agent consumption

- Verified third-party agents: Partner agents with pre-registered keys given specific API-level access

- Unverifiable requests claiming to be agents: Treat as unverified anonymous traffic

This tiered model is the access control architecture of the agentic web.
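As a rough sketch, the tiers above reduce to a small classification function. The enum, function name, and partner set are hypothetical; the important property is that the user-agent string plays no part in the decision, so an unverifiable claim lands in the same tier as anonymous traffic.

```python
from enum import Enum
from typing import Optional, Set

GOOGLE_AGENT_IDENTITY = "https://agent.bot.goog"


class AccessTier(Enum):
    UNVERIFIED = "unverified"
    VERIFIED_GOOGLE_AGENT = "verified_google_agent"
    VERIFIED_PARTNER = "verified_partner"


def classify_request(
    signature_valid: bool,
    signature_agent: Optional[str],
    partner_identities: Set[str],
) -> AccessTier:
    """Map a signature verification result to an access tier.

    signature_valid must come from full RFC 9421 verification.
    signature_agent is the identity URL the signature was verified against.
    """
    if not signature_valid or not signature_agent:
        # Includes requests that merely claim to be an agent.
        return AccessTier.UNVERIFIED
    identity = signature_agent.rstrip("/")
    if identity == GOOGLE_AGENT_IDENTITY:
        return AccessTier.VERIFIED_GOOGLE_AGENT
    if identity in partner_identities:
        return AccessTier.VERIFIED_PARTNER
    # Validly signed, but by an identity you have no policy for.
    return AccessTier.UNVERIFIED
```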

4. The Protocol Stack: How Google-Agent, WebMCP, and web-bot-auth Interact

These three systems are not redundant — they operate at distinct layers and serve complementary functions. Understanding their relationship is essential for planning implementation priority.

Google-Agent operates at the identity layer. It tells your server what class of visitor is arriving and what its behavioral contract is (user-triggered, generally ignores robots.txt, IP-verifiable). It is the "who."

web-bot-auth operates at the authentication layer. It lets you verify the identity claim made by Google-Agent (or any other agent) cryptographically, without relying on spoofable strings. It is the "prove it."

WebMCP operates at the capability layer. It defines what the agent can do on your site through structured, declared tool endpoints, rather than by scraping the DOM. It is the "what."

A complete agentic architecture serves all three:

| Layer | Protocol | Your implementation |
|---|---|---|
| Identity | Google-Agent user-agent | Log and differentiate Google-Agent traffic in your server logs |
| Authentication | web-bot-auth (RFC 9421 signatures) | Verify Signature + Signature-Input headers; check https://agent.bot.goog directory |
| Capability | WebMCP Declarative/Imperative API | Expose structured tool endpoints for your key transactional flows |

A site that implements identity logging but not authentication is vulnerable to spoofing. A site that implements authentication but not capability is verifying agents it cannot serve well. A site that implements capability but not identity or authentication has no way to audit or control which agents are using its tools.

5. The AEO Audit Implications: New Signals, New Failures

The emergence of Google-Agent, WebMCP, and web-bot-auth creates new structural failure modes that the existing six-signal AEO audit framework must account for.

Failure mode 1: No Google-Agent logging. If your server logs do not differentiate Google-Agent from other traffic, you have no visibility into agentic interaction with your site. You cannot tell whether agents are visiting, what pages they are accessing, what actions they are attempting, or whether they are succeeding or failing. The fix is a server log filter for compatible; Google-Agent in the user-agent field.
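If you already have access logs, a first pass needs no server changes. The sketch below assumes logs in the common "combined" format and tallies requests whose user-agent claims to be Google-Agent by path and status code. The function name is hypothetical, and the counts reflect claimed traffic only, since the user-agent can be spoofed.

```python
import re
from collections import Counter
from typing import Iterable

# Combined log format:
# ip - user [time] "METHOD path PROTO" status bytes "referer" "user-agent"
_COMBINED = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def summarize_google_agent_hits(lines: Iterable[str]) -> Counter:
    """Count (path, status) pairs for lines whose user-agent claims Google-Agent."""
    hits: Counter = Counter()
    for line in lines:
        match = _COMBINED.match(line)
        if match and "compatible; google-agent" in match.group("ua").lower():
            hits[(match.group("path"), match.group("status"))] += 1
    return hits
```

Paths with a high share of 4xx or 5xx statuses in this tally are the first places to look for agent tasks that are failing.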

Failure mode 2: robots.txt misconfiguration for user-triggered fetchers. Because Google-Agent generally ignores robots.txt, any access control you have implemented through robots.txt does not govern this traffic class. If you have pages that should not be accessed by agents on users' behalf (authenticated user areas, private data, rate-limited API endpoints), these must be protected at the application layer — authentication, rate limiting, session validation — not through robots.txt.

Failure mode 3: JavaScript-dependent transactional pages. If your checkout, booking, or lead generation flows are fully JavaScript-rendered with no server-side fallback, an agent that cannot fully execute your JavaScript may fail to complete transactions. This is the same rendering problem that can keep JS-heavy pages from being indexed reliably by Googlebot, applied now to agent-driven transactions rather than content indexing. The fix is the same: SSR or SSG for critical flow pages, or WebMCP implementation for the transactional layer.

Failure mode 4: No web-bot-auth verification. Without signature verification, your server cannot distinguish a genuine Google-Agent request from a spoofed one. Any access policies you build for verified agents are unenforceable. The fix is implementing RFC 9421 signature verification in your request pipeline.

Failure mode 5: No WebMCP tool declaration. Agents interacting with your site via DOM scraping will fail or degrade on complex flows. Without declared WebMCP tool endpoints, you have no reliable channel for agent-driven transactions. The fix is implementing WebMCP declarative forms and imperative endpoints for your highest-value transactional pages.

6. What to Implement First: A Priority Stack

Not all of these protocols are equally mature or immediately deployable. Here is a realistic implementation roadmap ordered by availability and impact.

Immediate (implement now):

Google-Agent server log differentiation. Parse your Nginx or Apache access logs for Google-Agent in the user-agent field. Create a separate log stream for this traffic class. This costs nothing to implement and gives you immediate visibility into how agents are already interacting with your site.

    # Nginx: Log Google-Agent traffic to a separate file.
    # Place this inside a location block: access_log is only permitted
    # in an "if" that sits inside a location.
    if ($http_user_agent ~* "Google-Agent") {
        access_log /var/log/nginx/google-agent-access.log combined;
    }

IP range validation against user-triggered-agents.json. Download https://developers.google.com/static/crawling/ipranges/user-triggered-agents.json and validate claimed Google-Agent requests against this range. Implement the reverse DNS verification loop.
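The range check itself is a few lines with Python's ipaddress module. This sketch assumes the file uses the same shape as Google's other published range files, a top-level "prefixes" list whose entries carry either ipv4Prefix or ipv6Prefix; confirm that against the downloaded file before relying on it. The function names are hypothetical.

```python
import ipaddress
import json
from typing import List, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]


def load_prefixes(range_file_json: str) -> List[Network]:
    """Parse the range file contents into network objects.

    Assumed shape: {"prefixes": [{"ipv4Prefix": "..."}, {"ipv6Prefix": "..."}]}
    """
    data = json.loads(range_file_json)
    networks: List[Network] = []
    for entry in data.get("prefixes", []):
        cidr = entry.get("ipv4Prefix") or entry.get("ipv6Prefix")
        if cidr:
            networks.append(ipaddress.ip_network(cidr))
    return networks


def ip_in_ranges(ip: str, networks: List[Network]) -> bool:
    """Return True if ip falls inside any published prefix."""
    try:
        address = ipaddress.ip_address(ip)
    except ValueError:
        return False
    # Membership is False when address and network versions differ.
    return any(address in network for network in networks)
```

The file changes over time, so refresh the parsed prefixes on a schedule rather than loading them once at deploy time.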

Application-layer protection for sensitive paths. Audit any path that should not be accessible to user-proxied agents and ensure it is protected by authentication or rate limiting at the application layer, not solely by robots.txt.
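A minimal sketch of what application-layer protection means in code: a session check for protected paths plus a sliding-window rate limit, both applied regardless of what the user-agent claims. The path prefixes, limits, and function names are hypothetical, and a real deployment would hook this into the framework's own authentication and a shared rate-limit store.

```python
from collections import defaultdict, deque
from typing import Deque, Dict

PROTECTED_PREFIXES = ("/account", "/api/private")  # hypothetical paths


class SlidingWindowLimiter:
    """Allow at most max_requests per client within window_seconds."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, client_id: str, now: float) -> bool:
        hits = self._hits[client_id]
        # Discard timestamps that have aged out of the window.
        while hits and hits[0] <= now - self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


def decide(path: str, has_valid_session: bool, client_id: str,
           limiter: SlidingWindowLimiter, now: float) -> int:
    """Return the HTTP status to serve: 401, 429, or 200."""
    protected = any(path == p or path.startswith(p + "/") for p in PROTECTED_PREFIXES)
    if protected and not has_valid_session:
        return 401  # robots.txt is irrelevant here; the session is the gate
    if not limiter.allow(client_id, now):
        return 429
    return 200
```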

Short-term (implement within 30 days):

web-bot-auth signature verification. Implement RFC 9421 signature parsing in your request middleware. Initially run it in logging mode only — verify signatures and log the result without acting on failures. This builds your baseline for what percentage of claimed agent traffic is actually cryptographically verified.

    # Middleware pseudo-code: log web-bot-auth verification results
    import logging

    logger = logging.getLogger("agent_auth")

    def agent_auth_middleware(request, call_next):
        if "google-agent" in request.headers.get("user-agent", "").lower():
            verified = verify_web_bot_auth(request.headers, request.url)
            logger.info(f"Google-Agent request, verified: {verified}, path: {request.path}")
        # Logging mode only: the request proceeds whatever the result
        return call_next(request)

WebMCP declarative forms for lead generation pages. If you have contact forms, demo request forms, or any other structured lead capture, adding WebMCP declarative markup to these is the lowest-effort WebMCP implementation with the highest near-term relevance as Google-Agent begins executing user tasks that involve form submission.

Medium-term (as WebMCP reaches general availability):

WebMCP imperative API for transactional flows. Implement the Imperative API for checkout, booking, and complex multi-step interactions. Sign up for Chrome's Early Preview Program at developer.chrome.com/docs/ai/join-epp to access current documentation and demos.

Tiered access control based on verified agent identity. Once you have baseline data from web-bot-auth logging, implement differentiated response behavior for verified vs. unverified agent traffic. Verified Google-Agent requests can receive richer structured responses; unverified requests claiming to be agents can be rate-limited or logged for review.

7. The robots.txt and llms.txt Update Requirement

Given that Google-Agent is a user-triggered fetcher that generally ignores robots.txt, the standard llms.txt + robots.txt guidance that governs AI crawler access requires a new layer.

Your llms.txt file — the markdown document at your domain root that curates your most valuable content for AI systems — remains relevant for training-oriented crawlers and RAG retrieval systems that do read it. But it does not govern user-triggered agent behavior in the same way.

What llms.txt should now additionally communicate for agent-aware sites:

    # Website Name

    > One-sentence description of what this site does.

    ## Crawlable content

    - [Page 1 Title](https://example.com/page1): Description
    - [Page 2 Title](https://example.com/page2): Description

    ## Agent capabilities (WebMCP)

    This site exposes structured tool endpoints for AI agents via WebMCP.
    Available tools: [list key endpoints and their purpose]

    ## Authentication

    This site supports web-bot-auth (RFC 9421 HTTP Message Signatures).
    Verified agents may access [specific capabilities or rate limit tiers].

This extension of llms.txt is not an official standard; it is a forward-compatible pattern that communicates your agent readiness to any system that reads it, including future iterations of AI indexing systems that understand the agentic web architecture.

8. Summary: The Three Signals Your Site Is Now Being Judged On

The agentic web is not a future state. Google-Agent is documented and live. WebMCP is in early preview with production availability planned. web-bot-auth is at version 04 of its IETF draft, with Google actively experimenting with the https://agent.bot.goog identity.

The three questions your site must be able to answer:

Can agents identify themselves when they visit? If you are not logging Google-Agent traffic separately and verifying it against the published IP ranges, you have no visibility into this traffic class. Implement log differentiation now.

Can you verify that an agent is who it claims to be? Without web-bot-auth signature verification, any agent identity claim on your site is unverifiable. A site that cannot distinguish a verified Google-Agent from a spoofed one cannot make informed access control decisions. Implement RFC 9421 verification in logging mode.

Can agents interact with your site's tools directly? If your transactional flows require DOM scraping to navigate, agents will fail on them — silently, at scale, with no error surface visible to you or your users. WebMCP declarative forms for lead capture are implementable today. Full imperative API implementation is the medium-term mandate.

The websites that answer all three questions correctly will function correctly in the agentic web. The ones that do not will degrade silently as agent traffic grows — not in rankings, not in indexed pages, but in completed transactions driven by users who asked an AI to do something on their behalf and received a failure instead.

Reference Sources

- Google Crawling Documentation — Google-Agent: Official user-agent specification, IP range files, and verification guidance. Updated 2026-03-20. https://developers.google.com/crawling/docs/crawlers-fetchers/google-user-triggered-fetchers#google-agent

- Chrome for Developers — WebMCP Early Preview: Official announcement of the WebMCP API, use cases, and Early Preview Program registration. Published 2026-02-10. https://developer.chrome.com/blog/webmcp-epp

- IETF Internet-Draft — web-bot-auth (draft-meunier-web-bot-auth-architecture-04): Complete specification for HTTP Message Signatures for automated traffic. Author: Thibault Meunier (Cloudflare). https://datatracker.ietf.org/doc/draft-meunier-web-bot-auth-architecture/

- RFC 9421 — HTTP Message Signatures: The underlying IETF standard that web-bot-auth is built upon. https://www.rfc-editor.org/rfc/rfc9421

- Google Developers Blog — Developer's Guide to AI Agent Protocols: Google's overview of MCP, A2A, UCP, A2UI, and AG-UI. Published 2026-03-18. https://developers.googleblog.com/developers-guide-to-ai-agent-protocols/

- Google DeepMind — Project Mariner: The Google agentic browsing system that uses the Google-Agent user-agent. https://deepmind.google/models/project-mariner/

- Cloudflare web-bot-auth Reference Implementation: Open-source clients and servers across TypeScript, Python, PHP, Ruby, Rust, and Go. https://github.com/cloudflare/web-bot-auth

- Google User-Triggered Agents IP Range File: Machine-readable JSON of IP ranges used by Google-Agent. https://developers.google.com/static/crawling/ipranges/user-triggered-agents.json

- Website AI Score — Rendering Signal Documentation: How the empty-shell rendering failure applies to agent-facing content. https://websiteaiscore.com/blog/website-ai-score-audit-engine-explained

- Website AI Score — Crawl Access Signal: robots.txt and llms.txt audit methodology and current adoption data. https://websiteaiscore.com/blog/aeo-scoring-signals-complete-guide