Executive Summary

Document ID: CS-AEO-20260316-001

Classification: Forensic Audit / AEO Architecture

Subject: B2B SaaS Platform

Category: AI Tooling

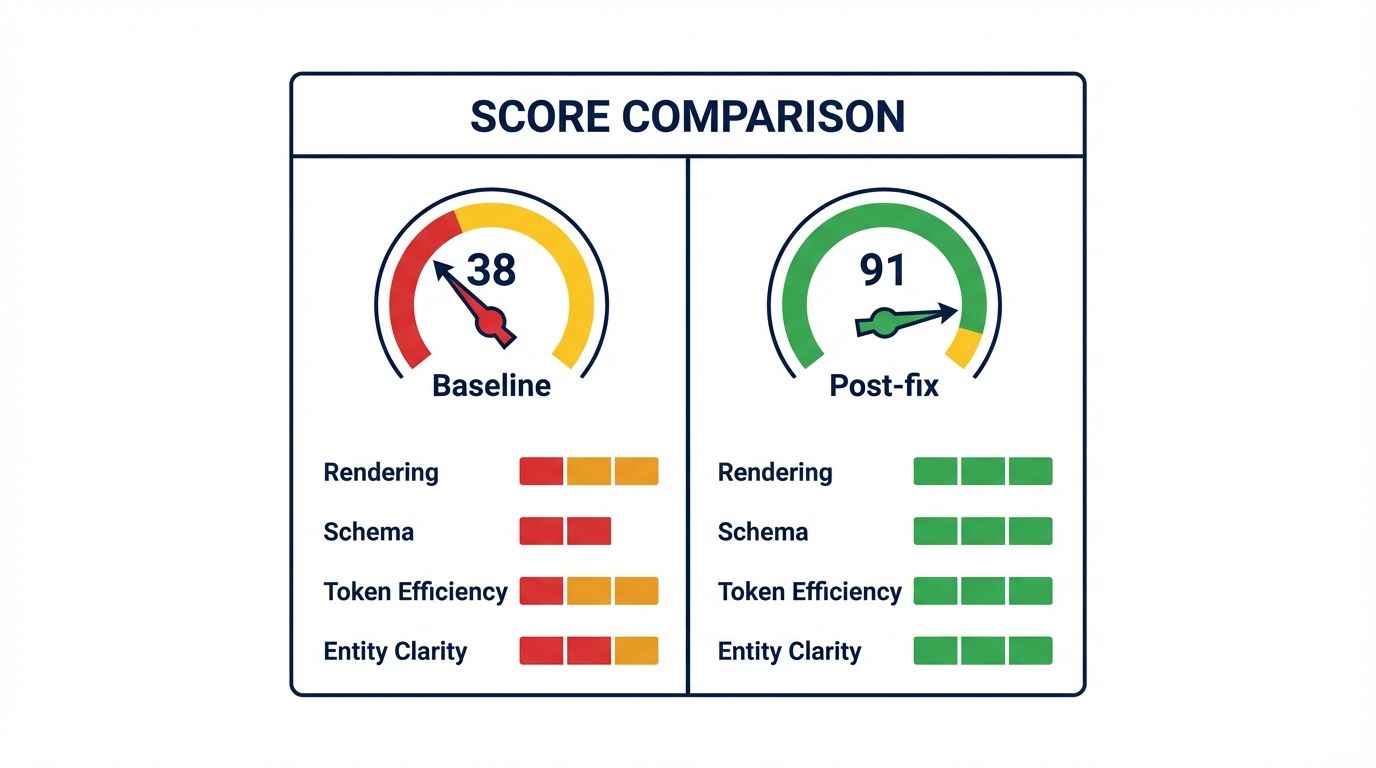

The audit request came in with a score of 38.

The site was not technically broken. It ranked on page one for several category keywords. It had a live blog, a product page, a pricing page, and an active customer base. By every traditional SEO metric, the site was performing adequately.

But when the team searched for their product category in ChatGPT, three competitors appeared. When they searched their own brand name, the AI's description was three versions out of date and missing their primary differentiating feature. When a prospect asked Perplexity which tools were worth considering in their space, the response cited a content aggregator — not the actual product.

Score 38. The site was firmly in the Invisible tier.

1. Baseline Audit: The Four Failures

The initial audit identified four signal failures. Two were in the Fail tier. Two were in the Warn tier. Together they explained why the site's content was inaccessible to most AI retrieval pipelines despite being crawlable by Google.

Rendering Signal: Fail (Score 14/100). The site was built as a React SPA with client-side rendering. The raw HTML response delivered to non-JavaScript crawlers consisted of a single empty <div></div> root element. Every heading, every product description, and every pricing table existed exclusively in the post-hydration DOM. To Common Crawl, GPTBot, and any RAG retrieval agent that fetches raw HTML under a latency budget instead of executing JavaScript, the site was a blank white page.
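A rough way to reproduce the no-JavaScript view is to take the raw HTML response and strip everything a non-rendering crawler cannot read. The sketch below does this with regular expressions on a string. `visibleText` is a hypothetical helper, not part of any crawler, and the two sample responses are invented; a production check would use a real HTML parser.

```typescript
// Minimal sketch: approximate what a crawler that does NOT execute JavaScript
// can read from a raw HTML response. Regex stripping is a simplification;
// a real audit would use a proper HTML parser.
function visibleText(rawHtml: string): string {
  return rawHtml
    // Drop <script> and <style> blocks entirely: their contents are not page copy.
    .replace(/<(script|style)\b[^>]*>[\s\S]*?<\/\1>/gi, " ")
    // Drop HTML comments.
    .replace(/<!--[\s\S]*?-->/g, " ")
    // Remove every remaining tag, keeping only the text between tags.
    .replace(/<[^>]+>/g, " ")
    // Collapse whitespace runs.
    .replace(/\s+/g, " ")
    .trim();
}

// Hypothetical client-side-rendered shell: all copy lives in the JS bundle.
const spaShell = `<!doctype html>
<html><head><title></title><script src="/static/js/main.js"></script></head>
<body><div id="root"></div></body></html>`;

// Hypothetical server-rendered response: the copy is in the HTML itself.
const ssrPage = `<!doctype html>
<html><head><title>Pricing</title></head>
<body><main><h1>Pricing</h1><p>Plans start at $49 per month.</p></main></body></html>`;

console.log(JSON.stringify(visibleText(spaShell))); // ""
console.log(visibleText(ssrPage)); // Pricing Pricing Plans start at $49 per month.
```

An empty string for the SPA shell is the code-level equivalent of the "blank white page" described above.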

Schema Validity: Fail (Score 22/100). The site had a single Organization schema block in the site-wide header with three properties: name, url, and logo. No product schema. No service schema. No article schema on blog posts. No sameAs references. The AI had no machine-readable data about what the product actually did, who it was for, or what it cost. Everything had to be inferred from raw text — which it could not access due to the rendering failure.
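A schema inventory like the one above can be approximated by pulling every JSON-LD block out of the raw HTML and listing the schema.org `@type` values it declares. The sketch is an assumption about how such a check might work, not the audit engine's implementation; `jsonLdTypes`, the brand name "Example Co", and the URLs are hypothetical. The sample input mirrors the baseline header block (Organization with name, url, and logo only).

```typescript
// Minimal sketch: list the schema.org @type values declared in JSON-LD blocks
// of a raw HTML response, including nested and @graph nodes.
function jsonLdTypes(rawHtml: string): string[] {
  const blockPattern =
    /<script[^>]*type=["']application\/ld\+json["'][^>]*>([\s\S]*?)<\/script>/gi;
  const types: string[] = [];

  // Walk arrays and objects recursively, recording every @type found.
  const collect = (node: unknown): void => {
    if (Array.isArray(node)) {
      node.forEach(collect);
      return;
    }
    if (node === null || typeof node !== "object") return;
    const record = node as Record<string, unknown>;
    const declared = record["@type"];
    if (typeof declared === "string") types.push(declared);
    Object.values(record).forEach(collect);
  };

  let match: RegExpExecArray | null;
  while ((match = blockPattern.exec(rawHtml)) !== null) {
    try {
      collect(JSON.parse(match[1]));
    } catch {
      types.push("(invalid JSON-LD)"); // Unparseable blocks are reported, not skipped.
    }
  }
  return types;
}

// Hypothetical baseline header: Organization with name, url, and logo only.
const baselineHeader = `<head><script type="application/ld+json">
{"@context":"https://schema.org","@type":"Organization","name":"Example Co",
 "url":"https://example.com","logo":"https://example.com/logo.png"}
</script></head>`;

const found = jsonLdTypes(baselineHeader);
console.log(JSON.stringify(found)); // ["Organization"]
const wanted = ["SoftwareApplication", "TechArticle"];
console.log(JSON.stringify(wanted.filter((t) => !found.includes(t))));
// ["SoftwareApplication","TechArticle"]
```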

Token Efficiency: Warn (Score 58/100). Even on pages where text content was eventually accessible through Google's JavaScript rendering pipeline, the signal-to-noise ratio was poor. The homepage delivered 94KB of JavaScript bundle overhead before the product value proposition appeared. The 100-Token Rule test failed: the first readable content an AI encountered was the navigation menu.
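The 100-Token Rule is not defined formally here, so the sketch below rests on two labeled assumptions: the rule is read as "the brand name and category must appear within the first 100 tokens of readable text", and tokens are approximated by whitespace-separated words (real LLM tokenizers usually produce more tokens than words). `passesHundredTokenRule` and both sample texts are hypothetical.

```typescript
// Minimal sketch of a "100-Token Rule" check.
// ASSUMPTIONS: every required term must appear within the first `limit` tokens,
// and a token is approximated by a whitespace-separated word.
function passesHundredTokenRule(
  readableText: string,
  requiredTerms: string[],
  limit = 100,
): boolean {
  const head = readableText
    .split(/\s+/)
    .filter(Boolean)
    .slice(0, limit)
    .join(" ")
    .toLowerCase();
  return requiredTerms.every((term) => head.includes(term.toLowerCase()));
}

// Hypothetical homepage text: 120 words of navigation before the product copy.
const navMenu = "Home Products Solutions Pricing Docs Blog Careers Login ";
const navFirst = navMenu.repeat(15) + "Example Co is an AI tooling platform.";
// The same content with a definitional lede first.
const ledeFirst = "Example Co is an AI tooling platform. " + navMenu.repeat(15);

const terms = ["Example Co", "AI tooling"];
console.log(passesHundredTokenRule(navFirst, terms)); // false
console.log(passesHundredTokenRule(ledeFirst, terms)); // true
```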

Entity Clarity: Warn (Score 51/100). The brand name was a common two-word phrase with no disambiguation. The sameAs array in the schema block was absent. A LinkedIn company page existed but was not referenced anywhere in the site's structured data. Wikidata had no entry. When an AI tried to resolve the brand as an entity, it had only the domain name as a disambiguation signal — insufficient to distinguish the company from other entities sharing similar naming.

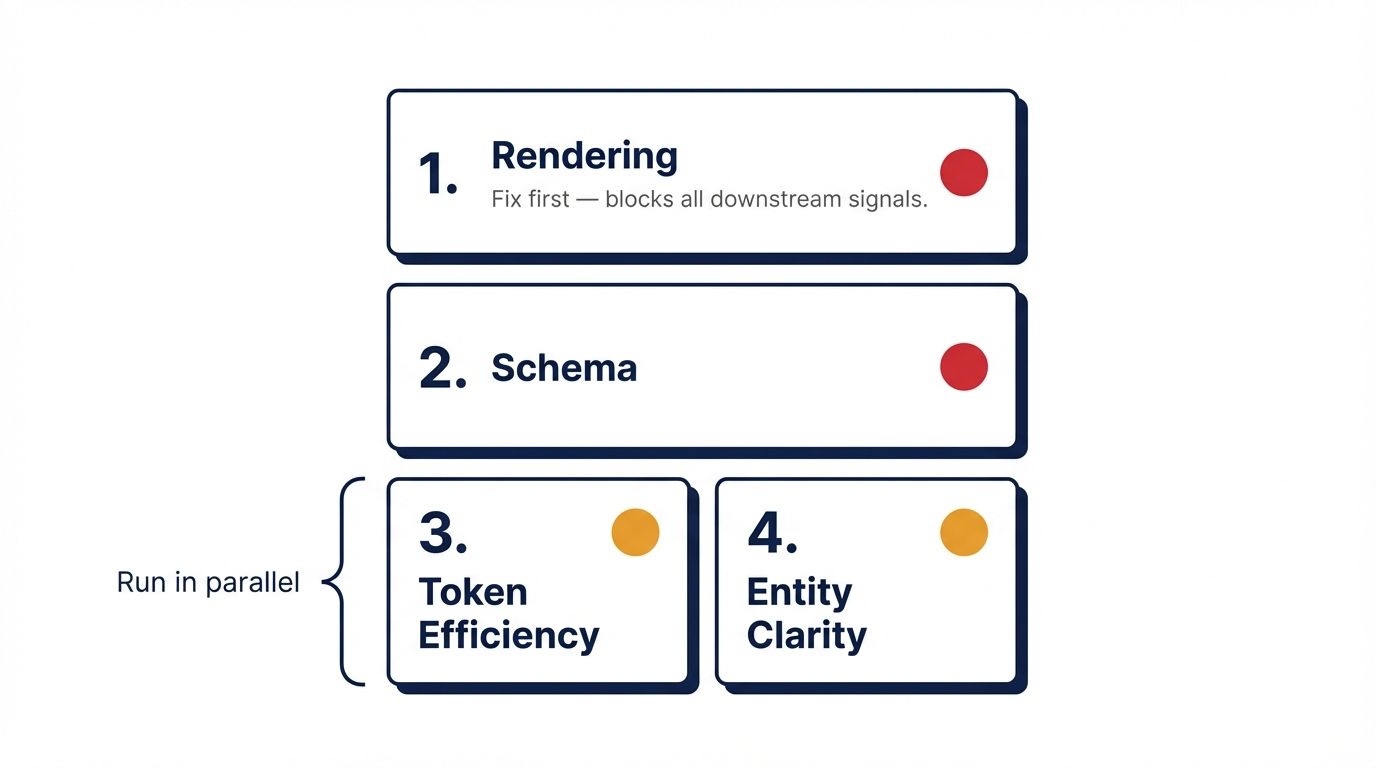

2. Priority Stack: Sequencing the Fixes

With four underperforming signals (two Fail, two Warn), sequencing was critical. Fixing them in the wrong order wastes effort.

Fix 1: Rendering (Priority 1). Rendering failures block everything downstream. Schema on a page that delivers an empty shell to crawlers is never evaluated. Token efficiency improvements on an uncrawlable page are irrelevant. Rendering goes first, always.

Fix 2: Schema (Priority 2). Once the page is crawlable, AI systems need structured data to interpret what they are reading. On a newly crawlable page, schema is typically the highest-impact fix for AI citation quality.

Fix 3: Entity Clarity and Token Efficiency (Priority 3, parallel). These were addressed in the same sprint, after the first two fixes were validated. Entity clarity depended on external profiles (LinkedIn, Crunchbase, Wikidata), which run on their own timelines. Token efficiency was a template-level change that could proceed in parallel.
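The priority stack is a dependency ordering: a fix starts only after the fixes it depends on have been validated. The sketch encodes the three priorities as a small dependency graph and groups the fixes into waves with a layered topological sort (Kahn's algorithm), so fixes in the same wave can run in parallel. The edges are read from the priority stack above; `FixPlan` and `fixWaves` are illustrative names.

```typescript
// Each fix lists the fixes that must be validated before it can start.
type FixPlan = Record<string, string[]>;

const plan: FixPlan = {
  "Rendering": [],
  "Schema": ["Rendering"],
  "Entity Clarity": ["Rendering", "Schema"],
  "Token Efficiency": ["Rendering", "Schema"],
};

// Layered Kahn's algorithm: each wave holds every fix whose dependencies all
// sit in earlier waves. Throws on a cycle or a dependency missing from the plan.
function fixWaves(deps: FixPlan): string[][] {
  const remaining = new Map<string, Set<string>>(
    Object.entries(deps).map(([fix, d]): [string, Set<string>] => [fix, new Set(d)]),
  );
  const waves: string[][] = [];
  while (remaining.size > 0) {
    const ready = Array.from(remaining)
      .filter(([, d]) => d.size === 0)
      .map(([fix]) => fix);
    if (ready.length === 0) throw new Error("cycle or unknown dependency in fix plan");
    waves.push(ready);
    ready.forEach((fix) => remaining.delete(fix));
    // Validated fixes no longer block anything downstream.
    Array.from(remaining.values()).forEach((d) => ready.forEach((fix) => d.delete(fix)));
  }
  return waves;
}

console.log(JSON.stringify(fixWaves(plan)));
// [["Rendering"],["Schema"],["Entity Clarity","Token Efficiency"]]
```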

3. Implementation and Validation

Fix 1 — Rendering: Next.js SSR Migration

The React SPA was migrated to Next.js, with server-side rendering via getServerSideProps on all primary landing pages; the homepage, product page, and pricing page were prioritized. Blog posts were converted to static generation using getStaticProps.

Re-audit result: the rendering signal moved from 14 to 88. The content delta between the JavaScript and no-JavaScript crawls collapsed from 94% to 3%. The remaining 3% was a client-side cookie consent banner, which carries no semantic weight.
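One way to express a content delta is the share of rendered copy that is missing from the raw HTML. The sketch assumes the delta is computed from word counts of the two crawls; the audit engine's exact formula is not given here and may differ. `contentDelta` and the sample page are hypothetical.

```typescript
// Minimal sketch of a JS vs no-JS content delta.
// ASSUMPTION: delta = 1 - (words in raw HTML / words after JS rendering).
function wordCount(text: string): number {
  return text.split(/\s+/).filter(Boolean).length;
}

function contentDelta(noJsText: string, jsText: string): number {
  const rendered = wordCount(jsText);
  if (rendered === 0) return 0; // Nothing can be missing if the rendered page is empty.
  // Clamp at 0: raw HTML with extra text should not yield a negative delta.
  return Math.max(0, 1 - wordCount(noJsText) / rendered);
}

// Hypothetical page: 97 server-rendered words plus a 3-word consent banner
// injected client-side after hydration.
const serverCopy = Array.from({ length: 97 }, (_, i) => `word${i}`).join(" ");
const hydratedCopy = `${serverCopy} Accept all cookies`;

console.log(contentDelta("", hydratedCopy).toFixed(2)); // 1.00 (empty SPA shell)
console.log(contentDelta(serverCopy, hydratedCopy).toFixed(2)); // 0.03
```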

Fix 2 — Schema: TechArticle and SoftwareApplication Markup

A SoftwareApplication JSON-LD block was added to the product and pricing pages, populating name, description, applicationCategory, operatingSystem, offers (a nested Offer with price, priceCurrency, and priceValidUntil), and featureList. Blog posts received TechArticle schema with verified author Person entities.
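The markup described above can be sketched as plain objects serialized into a script tag during server rendering. Property names follow schema.org; every value (product name, price, dates, author) is a hypothetical placeholder, and `toJsonLdScript` is an illustrative helper rather than a Next.js API.

```typescript
// Minimal sketch of the SoftwareApplication and TechArticle JSON-LD.
// All values are hypothetical placeholders; property names follow schema.org.
type JsonLd = Record<string, unknown>;

const softwareApp: JsonLd = {
  "@context": "https://schema.org",
  "@type": "SoftwareApplication",
  name: "Example App",
  description: "Example App is an AI tooling platform for support teams.",
  applicationCategory: "BusinessApplication",
  operatingSystem: "Web",
  offers: {
    "@type": "Offer", // Nested object, not a flat price string.
    price: "49.00",
    priceCurrency: "USD",
    priceValidUntil: "2026-12-31",
  },
  featureList: ["Feature one", "Feature two"],
};

const blogPost: JsonLd = {
  "@context": "https://schema.org",
  "@type": "TechArticle",
  headline: "Example post title",
  author: {
    "@type": "Person",
    name: "Jane Doe",
    url: "https://example.com/authors/jane-doe",
  },
};

// Serialize for embedding in server-rendered HTML. Escaping "<" keeps a stray
// "</script>" inside a string value from closing the tag early.
function toJsonLdScript(data: JsonLd): string {
  const json = JSON.stringify(data).replace(/</g, "\\u003c");
  return `<script type="application/ld+json">${json}</script>`;
}

console.log(toJsonLdScript(softwareApp));
console.log(toJsonLdScript(blogPost));
```

Because the block is emitted in the server response, it is visible to the same non-JavaScript crawlers that the rendering fix targeted.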

Re-audit result: schema signal moved from 22 to 84. The nesting integrity check passed. The pricing data was now machine-readable at the structured data layer rather than locked inside a CSS-styled pricing table.
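The nesting integrity check is not specified in detail here. The sketch below assumes it means "nested properties are typed objects carrying their own required fields" rather than flat strings; the rules table and `nestingProblems` are illustrative, not the audit engine's rule set.

```typescript
// Minimal sketch of a nesting check.
// ASSUMPTION: nested properties must be objects with the expected @type
// and required fields. The rules below are illustrative only.
type JsonObject = Record<string, unknown>;

// property -> [expected nested @type, required properties on that object]
const nestingRules: Record<string, [string, string[]]> = {
  offers: ["Offer", ["price", "priceCurrency"]],
  author: ["Person", ["name"]],
};

function isObject(value: unknown): value is JsonObject {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

function nestingProblems(node: JsonObject): string[] {
  const problems: string[] = [];
  for (const [prop, [expectedType, required]] of Object.entries(nestingRules)) {
    if (!(prop in node)) continue; // Only validate properties that are present.
    const raw = node[prop];
    const values = Array.isArray(raw) ? (raw as unknown[]) : [raw];
    for (const value of values) {
      if (!isObject(value)) {
        problems.push(`${prop}: expected a nested ${expectedType} object`);
        continue;
      }
      if (value["@type"] !== expectedType) {
        problems.push(`${prop}: @type should be ${expectedType}`);
      }
      for (const key of required) {
        if (!(key in value)) problems.push(`${prop}.${key}: missing`);
      }
    }
  }
  return problems;
}

// Hypothetical inputs: price as a flat string vs. a properly nested Offer.
const flat = { "@type": "SoftwareApplication", name: "Example App", offers: "$49/month" };
const nested = {
  "@type": "SoftwareApplication",
  name: "Example App",
  offers: { "@type": "Offer", price: "49.00", priceCurrency: "USD" },
};
console.log(JSON.stringify(nestingProblems(flat))); // ["offers: expected a nested Offer object"]
console.log(nestingProblems(nested).length); // 0
```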

Fix 3 — Entity Clarity: sameAs Implementation

The existing LinkedIn company page was verified and a Crunchbase profile was created. Both canonical URLs were added to the sameAs array of the site-wide Organization schema. A Wikidata entry was also created, recording the brand's founding date, category, and website URL.
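A sketch of the site-wide Organization block after this fix, with a pre-deploy sanity check on the sameAs entries. The brand name and both profile URLs are hypothetical placeholders for the real canonical URLs, and the check rules (absolute https URLs, not on the brand's own domain, no duplicates) are assumptions about what a reasonable check looks like.

```typescript
// Minimal sketch: Organization JSON-LD with sameAs. Name and URLs are placeholders.
const organization = {
  "@context": "https://schema.org",
  "@type": "Organization",
  name: "Example Co",
  url: "https://example.com",
  logo: "https://example.com/logo.png",
  sameAs: [
    "https://www.linkedin.com/company/example-co",
    "https://www.crunchbase.com/organization/example-co",
  ],
};

// Returns a URL object, or null if the string is not an absolute URL.
function tryParseUrl(link: string): URL | null {
  try {
    return new URL(link);
  } catch {
    return null;
  }
}

// Each sameAs entry should be an absolute https URL on an external host,
// with no duplicates. These rules are illustrative assumptions.
function sameAsProblems(org: { url: string; sameAs: string[] }): string[] {
  const ownHost = new URL(org.url).hostname;
  const seen = new Set<string>();
  const problems: string[] = [];
  for (const link of org.sameAs) {
    const parsed = tryParseUrl(link);
    if (parsed === null) {
      problems.push(`not a valid URL: ${link}`);
      continue;
    }
    if (parsed.protocol !== "https:") problems.push(`not https: ${link}`);
    if (parsed.hostname === ownHost) problems.push(`points at own domain: ${link}`);
    if (seen.has(parsed.href)) problems.push(`duplicate: ${link}`);
    seen.add(parsed.href);
  }
  return problems;
}

console.log(sameAsProblems(organization).length); // 0
```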

Re-audit result: entity clarity signal moved from 51 to 79. The brand was now resolvable as a distinct entity with three corroborating external references.

Fix 3b — Token Efficiency: Template Restructure

The homepage template was restructured so the <main> element opened with a definitional lede — brand name, category, and primary value proposition in the first sentence — before any navigation, promotional banners, or secondary content. JavaScript bundle splitting was implemented to reduce the inline script overhead in the initial response.
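The template change can be sketched as a render function that always emits the definitional lede as the first child of <main>, ahead of any promotional or secondary markup. `renderMain`, the `LedeContent` fields, and the sample copy are hypothetical; a real Next.js page would express the same ordering in JSX.

```typescript
// Minimal sketch of the restructured <main>: definitional lede first.
// Field names and copy are hypothetical.
interface LedeContent {
  brand: string;
  category: string;
  valueProposition: string;
  secondaryHtml: string; // Promotional banners and other secondary markup.
}

function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

function renderMain(c: LedeContent): string {
  // Brand, category, and value proposition in one opening sentence.
  const lede = `${escapeHtml(c.brand)} is ${escapeHtml(c.category)} that ${escapeHtml(c.valueProposition)}.`;
  // The lede is the first child of <main>, so it precedes everything else in source order.
  return `<main><p>${lede}</p>${c.secondaryHtml}</main>`;
}

const mainHtml = renderMain({
  brand: "Example Co",
  category: "an AI tooling platform",
  valueProposition: "helps support teams draft answers faster",
  secondaryHtml: `<section class="promo">Spring offer</section>`,
});
console.log(mainHtml);
// <main><p>Example Co is an AI tooling platform that helps support teams draft answers faster.</p><section class="promo">Spring offer</section></main>
```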

Re-audit result: token efficiency moved from 58 to 76. The 100-Token Rule test passed for the first time.

4. Final Score: 91

Composite score after all four fixes were validated: 91 — Optimized.

| Signal | Baseline | Post-Fix | Delta |
|---|---|---|---|
| Rendering | 14 | 88 | +74 |
| Schema Validity | 22 | 84 | +62 |
| Token Efficiency | 58 | 76 | +18 |
| Entity Clarity | 51 | 79 | +28 |
| Crawl Access | 71 | 74 | +3 |
| Semantic Structure | 66 | 69 | +3 |
| Overall | 38 | 91 | +53 |

Crawl access and semantic structure were not targeted in this sprint and moved only marginally — the small gains came from the SSR migration improving how crawlers interacted with the page structure. Both remain on the roadmap for the next optimization cycle.

5. Outcome Context

Structural optimization removes the barriers to AI citation. It does not guarantee citation — that depends on content quality and semantic distinctiveness relative to existing sources in the category.

What changed immediately after reaching a score of 91: the brand's product description in ChatGPT updated to reflect the current feature set (the accurate, schema-marked data was retrievable at last). Perplexity's real-time retrieval began including the product page as a source in category queries. The brand began appearing in AI-generated comparison articles sourced from the web.

What did not change immediately: Share of Model in high-competition category queries. The site was now in the retrieval pool. Whether it gets selected from that pool for specific queries depends on content strategy — a separate discipline from structural AEO.

The audit-fix-validate loop ran four times over three weeks. Total development time across all fixes: approximately 14 hours.

Reference Sources

- Website AI Score Engine: Technical specification of the six scoring signals. https://websiteaiscore.com/blog/website-ai-score-audit-engine-explained

- Next.js SSR Documentation: getServerSideProps and getStaticProps implementation. https://nextjs.org/docs/pages/building-your-application/data-fetching

- Schema.org SoftwareApplication: Type specification and property definitions. https://schema.org/SoftwareApplication

- Wikidata: Entity creation and external corroboration for brand disambiguation. https://www.wikidata.org

- AEO Validation Loop: How to structure fix-and-validate cycles. https://websiteaiscore.com/blog/aeo-validation-loop-confirm-fixes-worked