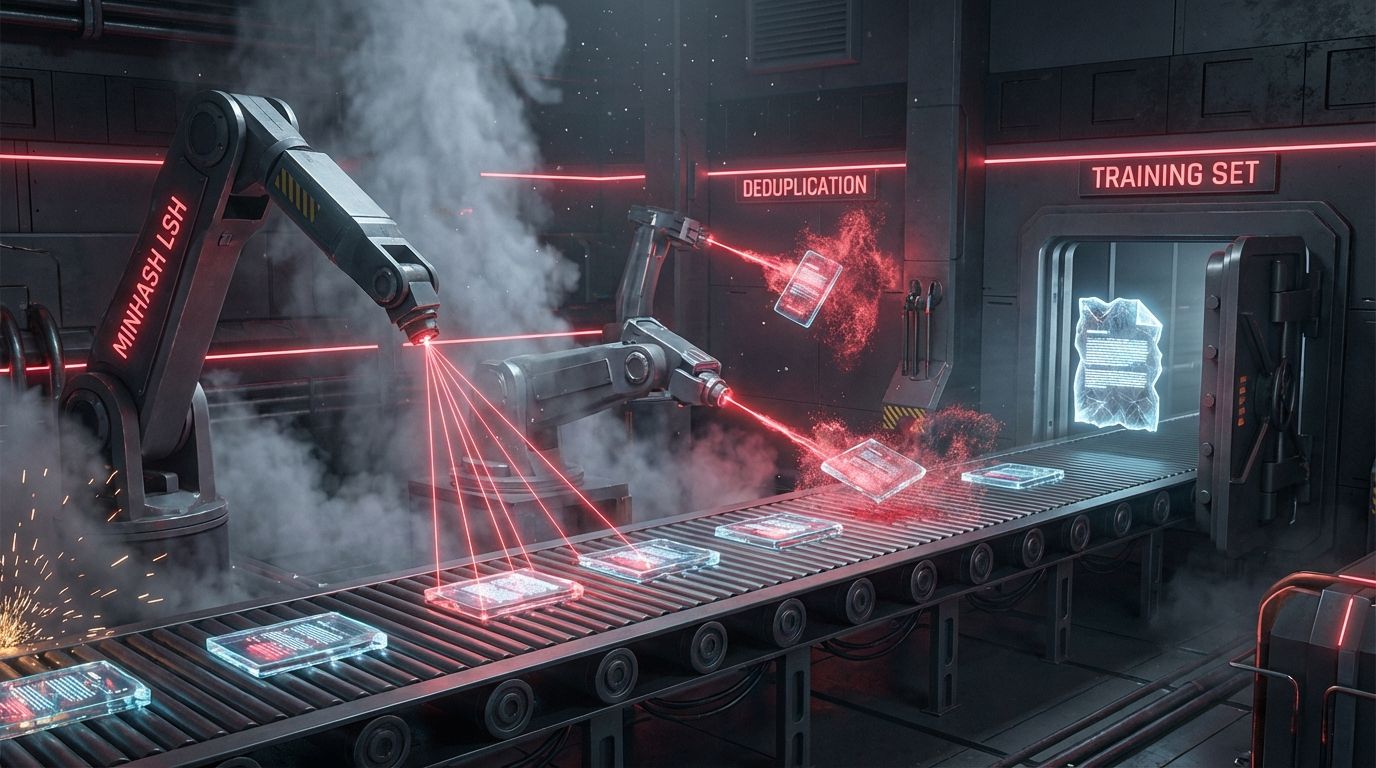

The "freshness" signal of traditional SEO (<lastmod>) is functionally obsolete for LLM training. CCBot may fetch your updated URL, but downstream ingestion pipelines (RefinedWeb, Dolma, RedPajama) deduplicate the web aggressively, typically with MinHash LSH. If your update shares a Jaccard similarity above 0.8 with a version already in the corpus, it is flagged as a near-duplicate and discarded to save compute. To force a knowledge update into a frontier model you must trigger Hash Drift: structurally alter more than 30% of the token sequence to clear the dedup filter, and define temporal boundaries with validThrough schema rather than the ambiguous dateModified.

1. The Consensus Trap

The industry relies on the Sitemap Protocol: meticulously update the <lastmod> tag and assume it tells AI crawlers to refresh. That conflates crawling with ingestion. CCBot might respect the sitemap (it often ignores it as noise), but the training pipeline does not. Datasets like RefinedWeb (Falcon) and C4 (T5) prioritize canonical stability: they don't overwrite old records, they deduplicate against them. The gap between a crawl and a training run is often 6 to 12 months, so your "breaking news" update sits in an S3 bucket, invisible to the model, while the model hallucinates from older weights. The pivot is to move from optimizing for recency (a sorting signal) to optimizing for validity (a logic signal). Don't ask the model "what is new?"; structure data so it can reason "is this fact still true?"

2. Forensic Analysis: The Mathematics of Erasure

The mechanism deleting your updates is MinHash LSH. To save trillions of tokens, pipelines shingle your text into n-grams, hash the shingles, and build a signature. If Jaccard(Signature_New, Signature_Old) > 0.8, the new version is dropped. Minor updates (changing a price, a CEO name, a date) rarely alter the signature enough to fall below the threshold, so the update is invisible. This aligns with the "nostalgia bias" from the GIST Vector Exclusion Zone analysis, where models prefer the dense center of their training distribution over sparse recent signals. Before publishing, test whether an update is significant enough to survive the deduplication wall.
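A minimal Python sketch of that test, assuming word-level 5-gram shingles and the 0.8 cutoff quoted above. Real pipelines differ in tokenization, shingle size, and number of hash functions, and the function names here (`shingles`, `minhash_signature`, `survives_dedup`) are illustrative, not any pipeline's API. It computes the exact Jaccard similarity of the shingle sets plus a MinHash estimate of the same quantity.

```python
import hashlib
import re


def shingles(text: str, n: int = 5) -> set[str]:
    """Lowercase the text, split into word tokens, return the set of word n-grams."""
    tokens = re.findall(r"\w+", text.lower())
    if len(tokens) < n:
        return {" ".join(tokens)} if tokens else set()
    return {" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def jaccard(a: set[str], b: set[str]) -> float:
    """Exact Jaccard similarity: |A ∩ B| / |A ∪ B|."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def minhash_signature(items: set[str], num_perm: int = 128) -> list[int]:
    """Keep the minimum hash per seeded hash function (seeds stand in for permutations)."""
    if not items:
        raise ValueError("cannot build a signature from an empty shingle set")
    signature = []
    for seed in range(num_perm):
        salt = seed.to_bytes(8, "big")  # a different salt gives a different hash function
        signature.append(min(
            int.from_bytes(
                hashlib.blake2b(s.encode("utf-8"), digest_size=8, salt=salt).digest(),
                "big",
            )
            for s in items
        ))
    return signature


def estimated_jaccard(sig_a: list[int], sig_b: list[int]) -> float:
    """Fraction of signature positions that agree; approximates the exact Jaccard."""
    if not sig_a or len(sig_a) != len(sig_b):
        raise ValueError("signatures must be non-empty and of equal length")
    return sum(x == y for x, y in zip(sig_a, sig_b)) / len(sig_a)


def survives_dedup(old: str, new: str, threshold: float = 0.8, n: int = 5) -> bool:
    """True if the new version is NOT above the assumed near-duplicate threshold."""
    return jaccard(shingles(old, n), shingles(new, n)) <= threshold
```

Run it on the old and new page text before publishing. If `survives_dedup` returns `False`, the edit is probably too small under these assumptions.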

3. Information Gain: Contextual Recency and "Lost in the Middle"

Even if your content survives ingestion, models exhibit a U-shaped attention bias. RAG systems suffer the "Lost in the Middle" phenomenon: information at the start of the context window (primacy) and the end (recency) is weighted heavily, while information in the middle (positions 5 to 15 in a 20-document retrieval) is frequently ignored.

If your evergreen update is buried in paragraph 4 of a 10-paragraph article, it falls into the dead zone. The fix is to architect Atomic Fact Blocks. This mirrors the AI readability audit, where content density at the edges of the DOM correlated with higher extraction. Restructure into an inverted pyramid for RAG: the assertion (valid 2026) at the very top for primacy, the context in the middle, and a re-assertion summarized at the bottom for recency.
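The inverted pyramid can be sketched as a small rendering helper. This is an illustration only: `AtomicFactBlock`, its field names, and the "In summary" wording are assumptions, not a format any retriever requires. The point is that the dated assertion appears both first and last, with context between.

```python
from dataclasses import dataclass


@dataclass
class AtomicFactBlock:
    assertion: str       # the single fact the page exists to state
    context: list[str]   # supporting paragraphs, placed in the middle
    valid_as_of: str     # absolute ISO 8601 date, never "recently"

    def render(self) -> str:
        """Assertion first (primacy), context in the middle, re-assertion last (recency)."""
        dated = f"{self.assertion} (valid as of {self.valid_as_of})"
        return "\n\n".join([dated, *self.context, f"In summary: {dated}"])
```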

4. Implementation Protocol

Step 1, the validity meta-layer (JSON-LD): stop using dateModified as your primary signal; use validThrough to define the temporal scope of the fact, which is critical for dynamic data as covered in the e-commerce AEO guide.
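A hedged sketch of Step 1, generating the JSON-LD in Python. In schema.org, validThrough is defined on types such as Offer and PriceSpecification, so this example attaches it to an Offer nested in a Product. The function name `offer_jsonld` and its parameters are illustrative assumptions.

```python
import json
from datetime import date


def offer_jsonld(name: str, price: float, currency: str,
                 valid_from: date, valid_through: date) -> str:
    """Build Product/Offer JSON-LD that states when the price is valid."""
    if valid_through < valid_from:
        raise ValueError("valid_through must not be earlier than valid_from")
    doc = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            # Explicit validity window instead of relying on dateModified alone
            "validFrom": valid_from.isoformat(),
            "validThrough": valid_through.isoformat(),
        },
    }
    return json.dumps(doc, indent=2)
```

The returned string would go inside a `<script type="application/ld+json">` element on the page.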

Step 2, HTML5 hard anchors: LLMs hallucinate the "current time" because they lack an internal clock, so anchor relative terms ("currently," "recently") to absolute timestamps. Bad: <span>Updated recently</span>. Good: <time datetime="2026-01-27" itemprop="validFrom">January 27, 2026</time>.

Step 3, force the hash (the 30% rule): when updating an evergreen page, don't just change the single data point; rewrite the introduction and methodology sections too. The aim is to drop the Jaccard similarity below 0.7, safely under the 0.8 cutoff, so the pipeline treats the page as a new document rather than a duplicate variant.
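Steps 2 and 3 can be sketched as two small helpers. Both are assumptions for illustration: `time_anchor` reproduces the "Good" markup above, and `changed_token_fraction` approximates "share of the token sequence altered" as one minus difflib's similarity ratio, which is only one of several reasonable ways to measure it.

```python
import difflib
import re
from datetime import date

MONTHS = ("January", "February", "March", "April", "May", "June", "July",
          "August", "September", "October", "November", "December")


def time_anchor(d: date, itemprop: str = "validFrom") -> str:
    """Render an absolute, machine-readable date in place of 'recently'."""
    label = f"{MONTHS[d.month - 1]} {d.day}, {d.year}"
    return f'<time datetime="{d.isoformat()}" itemprop="{itemprop}">{label}</time>'


def changed_token_fraction(old: str, new: str) -> float:
    """Approximate share of the token sequence that differs between two versions."""
    old_tokens = re.findall(r"\w+", old.lower())
    new_tokens = re.findall(r"\w+", new.lower())
    matcher = difflib.SequenceMatcher(None, old_tokens, new_tokens, autojunk=False)
    return 1.0 - matcher.ratio()


def meets_30_percent_rule(old: str, new: str, minimum: float = 0.30) -> bool:
    """True if more than `minimum` of the token sequence changed (assumed cutoff)."""
    return changed_token_fraction(old, new) > minimum
```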

The contrarian point that breaks the "update your old posts" advice every SEO repeats: the small surgical edit is the worst possible update. Changing one number and bumping the date feels efficient and responsible, but to the ingestion pipeline the result is indistinguishable from the version it already has. The update gets thrown away, and the model keeps citing your stale figure. Counterintuitively, the way to make a fact stick is to rewrite far more than the fact, because the corpus rewards difference, not correctness.

5. Reference Sources

- Common Crawl Foundation (2024). Common Crawl Architecture and Usage Examples. commoncrawl.org

- Liu, N. F., Lin, K., Hewitt, J., et al. (2023). Lost in the Middle: How Language Models Use Long Contexts. arXiv:2307.03172

- Penedo, G., et al. (2023). The RefinedWeb Dataset for Falcon LLM. arXiv:2306.01116

- Website AI Score Strategy (2026). Optimizing for GIST: Semantic Distance & Vector Exclusion Zones.

- Website AI Score Audits (2025). Case Study: The State of AI Readability.