It is the nightmare scenario for any modern business owner.

A potential customer goes to ChatGPT or Gemini and asks, "What is the pricing for [Your Brand]?"

The AI answers instantly, with total confidence: "The Starter plan costs $29/month."

There is just one problem: you discontinued the $29 plan two years ago. Your current entry price is $99.

The customer clicks through to your site, sees the real price, feels like the victim of a bait-and-switch, and leaves. Or worse, the AI tells them your product supports an integration that it doesn't. When they find out the truth, they don't blame the AI; they blame you.

This phenomenon is known as AI hallucination. For years, brands worried about "Fake News" on social media. Now, they must also worry about "Fake Facts" generated by some of the most trusted algorithms on earth.

In this guide, we will explore why AI models lie about your business, the reputational cost of these fabrications, and the specific "grounding" strategies you can use to force these models to stick to the truth.

The Mechanics of the Lie: Why AI "Invents" Facts

To stop AI from lying, you must first understand how it thinks.

Large Language Models (LLMs) are not databases. When you query a traditional database (for example, with SQL), it looks up specific rows and returns exactly the data stored there. If the data is missing, it returns nothing; it does not make up a plausible value.

LLMs, however, are probabilistic prediction engines. They do not "know" facts; they predict the next likely word in a sentence based on patterns they learned during training.

If an AI has not been trained on your specific, up-to-date pricing page, it often will not say "I don't know." Instead, it draws on the pricing patterns of similar companies in your industry and produces a number that looks statistically probable. It isn't lying out of malice; it is "hallucinating" in an attempt to be helpful.

Fig 1: A comparison of exact database retrieval versus the probabilistic guessing of an LLM, which can lead to hallucinations.
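To make the contrast concrete, here is a deliberately simplified Python sketch. The plan names, prices, and the "peer pricing" list are hypothetical, and a real LLM predicts tokens with a neural network rather than taking a majority vote. The toy only shows the difference between a lookup that can come back empty and a guesser that always produces an answer.

```python
from collections import Counter
from typing import Optional

# Hypothetical "database": the real, current price list.
PRICING_DB = {"Pro": 99, "Enterprise": 499}


def db_lookup(plan: str) -> Optional[int]:
    """Exact retrieval: return the stored price, or None if no such row exists."""
    return PRICING_DB.get(plan)


# Hypothetical "training data": entry-level prices seen for similar companies.
PEER_ENTRY_PRICES = [29, 29, 19, 29, 49, 29, 19]


def pattern_guess(plan: str) -> int:
    """Toy stand-in for an LLM: it never checks whether `plan` exists and
    always answers with the most common price it has seen."""
    most_common_price, _count = Counter(PEER_ENTRY_PRICES).most_common(1)[0]
    return most_common_price


print(db_lookup("Starter"))      # None: the row does not exist
print(pattern_guess("Starter"))  # 29: confident, plausible, and wrong
```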

This behavior is intrinsic to how these models work. As noted by IBM's analysis of AI hallucinations, these errors often stem from "overfitting, training data bias," and the model's drive to complete a pattern rather than retrieve a fact.

The Cost of Fiction: Brand Safety in the AI Era

The impact of these hallucinations goes far beyond a confused customer. It attacks the very foundation of your brand's reputation.

- Customer Support Overload: Your support team is forced to answer tickets from users demanding features or pricing that don't exist, simply because "ChatGPT said you had it."

- Erosion of Trust: When a user spots a discrepancy between the AI's answer and your website, they perceive your brand as inconsistent or disorganized.

- Competitor Displacement: In some cases, an AI might hallucinate that your product is a subsidiary of a competitor, or conversely, attribute your unique features to them.

According to a recent report on AI Hallucinations and Brand Reputation, these "confident but wrong" interpretations can "weaken customer relationships and diminish brand integrity" before a user even lands on your website.

The Solution: "Grounding" and the Knowledge Graph

You cannot manually edit ChatGPT's training data. However, you can influence a process known as grounding.

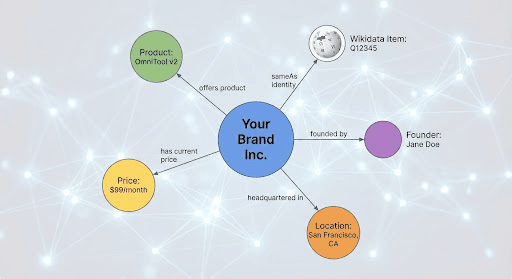

Grounding is the process of anchoring an AI's generation to verifiable facts. To do this, you must speak to the AI in a language it trusts. You must inject your brand into the Knowledge Graph.

The Knowledge Graph is a massive, structured web of "entities" (people, places, companies) and the relationships between them. Google draws on its Knowledge Graph for facts about entities (it powers the Knowledge Panels in search results), and AI systems that pull from search can inherit those facts. If your brand is clearly defined there, AI answers about you are far less likely to be hallucinated.

Fig 2: A visual representation of a corporate Knowledge Graph, showing how a brand entity is connected to verifiable facts like products, pricing, and leadership.
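A knowledge graph can be modeled as a set of subject-predicate-object "triples." The Python sketch below is a toy in-memory version. It is not how Google stores or queries its graph, and the brand "ExampleCo," its facts, and the predicate names are all hypothetical. Notice the three possible outcomes of a check: confirmed, contradicted, or unknown. The unknown case is the gap an AI fills by guessing.

```python
from typing import NamedTuple, Optional


class Triple(NamedTuple):
    subject: str
    predicate: str
    obj: str


# Hypothetical facts about a hypothetical brand.
GRAPH = [
    Triple("ExampleCo", "offers", "ExampleCo Pro"),
    Triple("ExampleCo Pro", "priceUSDPerMonth", "99"),
    Triple("ExampleCo", "foundingDate", "2015"),
    Triple("ExampleCo", "founder", "Jane Doe"),
]


def objects(subject: str, predicate: str) -> list[str]:
    """Return every object linked to `subject` by `predicate`."""
    return [t.obj for t in GRAPH if t.subject == subject and t.predicate == predicate]


def verify(subject: str, predicate: str, claimed: str) -> Optional[bool]:
    """True if the graph confirms the claim, False if it contradicts it,
    None if the graph has no fact to check against."""
    known = objects(subject, predicate)
    if not known:
        return None
    return claimed in known


print(verify("ExampleCo Pro", "priceUSDPerMonth", "99"))      # True
print(verify("ExampleCo Pro", "priceUSDPerMonth", "29"))      # False
print(verify("ExampleCo Starter", "priceUSDPerMonth", "29"))  # None
```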

Strategy 1: The "SameAs" Protocol

One of the strongest signals you can send to an AI is proof that you are who you say you are. You do this using the sameAs property in your Schema.org markup.

In your website's structured data (JSON-LD), you shouldn't just list your company name. You must explicitly link your website to your other "identities" across the web. This tells the AI that your website, your LinkedIn profile, your Crunchbase listing, and your Wikipedia page are all the same entity.

The concept: Connect the dots so the AI can triangulate the truth.

"Google uses structured data that it finds on the web to understand the content of the page... and to gather information about the web and the world in general." — Google Search Central
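Here is a minimal sketch in Python. It builds an Organization block from the real Schema.org properties name, url, logo, sameAs, and contactPoint, then wraps it in the script tag that holds JSON-LD. Every URL, the phone number, and the Wikidata ID are placeholders, so swap in your own profiles. Generating the markup from one set of data, rather than hand-editing HTML, is one way to keep it in sync with your other profiles.

```python
import json


def organization_jsonld(name: str, url: str, logo: str,
                        same_as: list[str], telephone: str) -> str:
    """Return a Schema.org Organization block wrapped in a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        # Every other "identity" of the same entity across the web.
        "sameAs": same_as,
        "contactPoint": {
            "@type": "ContactPoint",
            "telephone": telephone,
            "contactType": "customer service",
        },
    }
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


# All values below are hypothetical placeholders.
snippet = organization_jsonld(
    name="ExampleCo",
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    same_as=[
        "https://www.linkedin.com/company/exampleco",
        "https://www.crunchbase.com/organization/exampleco",
        "https://www.wikidata.org/wiki/Q00000000",
    ],
    telephone="+1-555-010-0000",
)
print(snippet)
```

You would paste the printed output into your homepage's HTML and check it with a validator such as Google's Rich Results Test or the Schema Markup Validator.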

Strategy 2: Influence the "Source of Truth" (Wikidata & Crunchbase)

LLMs tend to weight certain sources more heavily than others, and Wikipedia and Wikidata are widely treated as "high-trust" sources. If your pricing or product data is correct on your website but wrong on a high-trust aggregator like Crunchbase or a major industry directory, the AI may believe the third party over you.

The Audit:

- Search for your brand on Wikidata.org. Does an item exist? Is it accurate?

- Check your profiles on Crunchbase, LinkedIn, and Bloomberg.

- Ensure your "About Us" page is not just marketing fluff, but contains hard, factual statements about your founding date, location, and core offering.

By aligning these external sources, you create a "consensus of facts" that leaves the AI far less room to guess.
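Once you have collected what each profile says, you can partly automate the audit. The Python sketch below assumes you have already gathered those facts by hand into a dictionary. It shows no scraping or API access, and all values are hypothetical. It treats your website as the canonical source and lists every field where another profile disagrees.

```python
# Hypothetical snapshot of what each profile currently says, gathered by hand.
SOURCES = {
    "website": {"founded": "2015", "hq": "Austin, TX", "entry_price": "$99/mo"},
    "wikidata": {"founded": "2015", "hq": "Austin, TX"},
    "crunchbase": {"founded": "2014", "hq": "Austin, TX", "entry_price": "$29/mo"},
    "linkedin": {"founded": "2015", "hq": "Austin, Texas"},
}


def audit(sources: dict[str, dict[str, str]],
          canonical: str = "website") -> list[tuple[str, str, str]]:
    """Return (field, source, value) for every fact that disagrees with the canonical source."""
    truth = sources[canonical]
    issues = []
    for source, facts in sources.items():
        if source == canonical:
            continue
        for field, value in facts.items():
            # Only compare fields the canonical source actually states.
            if field in truth and value != truth[field]:
                issues.append((field, source, value))
    return issues


for field, source, value in audit(SOURCES):
    print(f"{source}: {field} = {value!r} (website says {SOURCES['website'][field]!r})")
```

Note that "Austin, Texas" versus "Austin, TX" is flagged too. This is intentional, because even cosmetic differences are worth standardizing.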

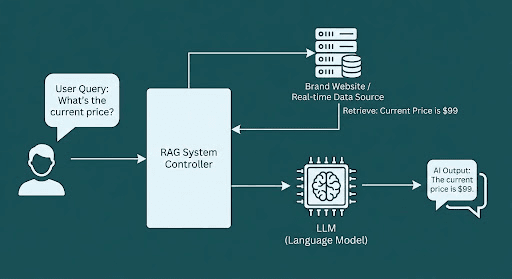

Strategy 3: Retrieval-Augmented Generation (The Future of Search)

The most advanced AI search experiences (like Perplexity and Google's AI Overviews) are moving toward RAG (Retrieval-Augmented Generation).

In a RAG system, the AI does not just rely on its memory. It actively "retrieves" fresh data from your website before generating an answer. This is where the technical work from our previous article (Server-Side Rendering) pays off.

If your site is readable and rich with structured data, the RAG system can pull your exact current pricing and feed it into the model's context window before it generates an answer. As explained by Google Cloud's guide to RAG, this process allows the model to "cite sources" and significantly reduces hallucination by "grounding" the response in your real-time data.

Fig 3: The Retrieval-Augmented Generation (RAG) process, showing how an AI system fetches real-time data from your website to answer a user's query accurately.
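The retrieve-then-generate loop can be sketched in a few lines of Python. This is a toy built on clear assumptions. The page contents are hypothetical. Retrieval is simple word overlap, whereas production systems typically use vector embeddings and a search index. The call to a language model is left out, so the sketch stops at the grounded prompt that would be sent to one.

```python
import re

# Hypothetical pages crawled from your site: URL -> visible text.
PAGES = {
    "https://www.example.com/pricing": "Pro plan: $99 per month. Enterprise plan: $499 per month.",
    "https://www.example.com/about": "ExampleCo was founded in 2015 and is based in Austin, TX.",
    "https://www.example.com/integrations": "Native integrations: Slack, Salesforce.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase word set; keeps '$' so prices like '$99' stay intact."""
    return set(re.findall(r"[a-z0-9$]+", text.lower()))


def retrieve(query: str, k: int = 1) -> list[str]:
    """Rank pages by how many query words they share and return the top k URLs."""
    q = tokenize(query)
    ranked = sorted(PAGES, key=lambda url: len(q & tokenize(PAGES[url])), reverse=True)
    return ranked[:k]


def build_grounded_prompt(query: str, k: int = 1) -> str:
    """Paste the retrieved text into the prompt so the model answers from it and cites it."""
    sources = retrieve(query, k)
    context = "\n".join(f"[{i + 1}] {url}\n{PAGES[url]}" for i, url in enumerate(sources))
    return (
        "Answer using ONLY the sources below and cite them by number. "
        "If they do not contain the answer, say you don't know.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


print(build_grounded_prompt("How much does the ExampleCo plan cost per month?"))
```

The main design choice is that the answer is built from text retrieved when the question is asked. Updating your pricing page therefore updates the answer without retraining anything.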

Actionable Checklist: Vaccinate Your Brand Against Lies

To protect your brand from AI hallucinations, you must move from being a "passive" subject of search to an "active" architect of your data.

- Implement "Organization" Schema: Ensure your homepage has deep JSON-LD markup that includes your logo, contactPoint, and sameAs links to all social profiles.

- Standardize Your "NAP": Ensure your Name, Address, and Phone number are identical across every directory and platform. Inconsistencies send conflicting signals to the probabilistic models that try to match your listings to a single entity.

- Create a "Facts" Page: Consider creating a page specifically designed for bots (e.g., /brand-facts) that lists your current pricing tiers, active features, and company history in simple, bulleted lists.

- Monitor Your Knowledge Panel: Search for your brand on Google. Do you have a Knowledge Panel on the right side? If so, claim it. Once verified, you can suggest edits to the facts Google shows about you.
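An exact string comparison is too strict for a NAP check. "Suite" versus "Ste" does no harm, but a different phone number does. The Python sketch below normalizes each listing before comparing. The abbreviation table, the listings, and the assumption of North American phone numbers are all hypothetical, so adapt them to your own data.

```python
import re

# Hypothetical abbreviation table; extend it with the variants you actually see.
ABBREVIATIONS = {"street": "st", "suite": "ste", "avenue": "ave", "texas": "tx"}


def normalize_text(text: str) -> str:
    """Lowercase, drop punctuation, and collapse common abbreviations."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return " ".join(ABBREVIATIONS.get(word, word) for word in words)


def normalize_phone(phone: str) -> str:
    """Keep digits only; assumes North American numbers with an optional leading 1."""
    digits = re.sub(r"\D", "", phone)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits


def nap_key(name: str, address: str, phone: str) -> tuple[str, str, str]:
    return (normalize_text(name), normalize_text(address), normalize_phone(phone))


# Hypothetical listings as they appear on each platform: (name, address, phone).
LISTINGS = {
    "website": ("ExampleCo Inc.", "100 Main Street, Suite 5, Austin, TX", "+1 (555) 010-0000"),
    "google": ("ExampleCo Inc", "100 Main St, Ste 5, Austin, Texas", "555-010-0000"),
    "yelp": ("Example Co", "100 Main St, Ste 5, Austin, TX", "555.010.0001"),
}


def inconsistent_listings(listings: dict[str, tuple[str, str, str]],
                          reference: str = "website") -> list[str]:
    """Return the platforms whose normalized NAP differs from the reference listing."""
    ref = nap_key(*listings[reference])
    return [src for src, nap in listings.items() if src != reference and nap_key(*nap) != ref]


print(inconsistent_listings(LISTINGS))  # ['yelp']
```

In this example "google" passes because its differences are cosmetic. "yelp" is flagged for a different name and phone number. Even so, the safest goal is still the one in the checklist: make the listings literally identical.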

Conclusion

In the age of AI, truth is not just about being honest; it is about being structured.

You cannot stop LLMs from trying to answer questions about your brand. But by building a robust Knowledge Graph presence and ensuring your data is structurally accessible, you can make them far more likely to answer correctly.

Don't let an algorithm define your reality. Feed it the facts, and it will speak your truth.

References

- Understanding AI Hallucinations (IBM Research Topics): a technical breakdown of why large language models generate false information and the distinction between prediction and fact. https://www.ibm.com/think/topics/ai-hallucinations

- Impact on Brand Reputation (Addlly AI Research): an analysis of the risks hallucinations pose to brand integrity and customer trust. https://addlly.ai/blog/how-ai-hallucinations-impact-brand-reputation/

- Google Knowledge Graph & Panels (Google Search Help): official documentation on how Google constructs its Knowledge Graph and how brands can manage their Knowledge Panels. https://support.google.com/knowledgepanel/answer/9163198?hl=en

- Retrieval-Augmented Generation (RAG) (Google Cloud): an overview of how RAG systems improve accuracy by fetching real-time data. https://cloud.google.com/use-cases/retrieval-augmented-generation