Legal & Compliance: Ensuring AI Reads Your Disclaimers

Definition

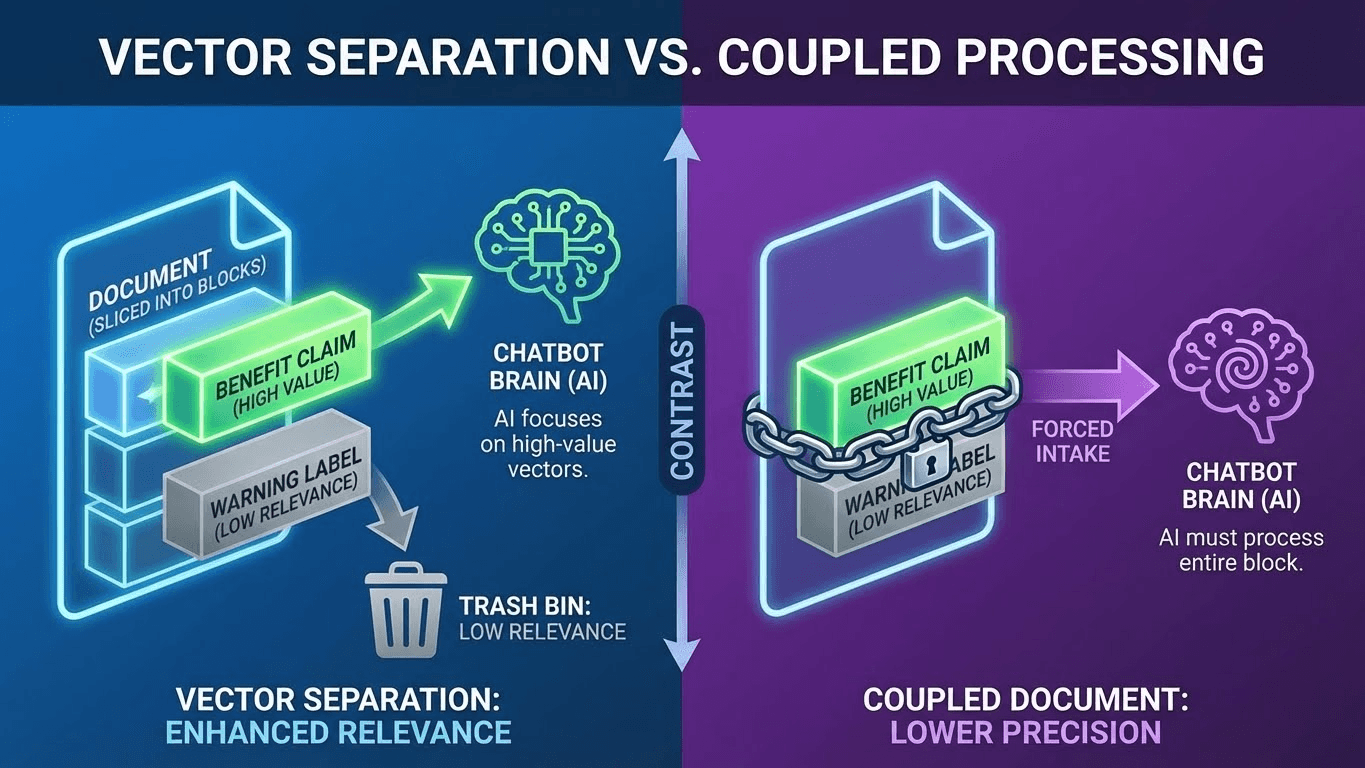

Compliance AEO (Agent Optimization) is the engineering discipline of coupling legal disclaimers, medical warnings, and financial disclosures directly to the primary content claims in a way that forces Large Language Models (LLMs) to ingest them as an indivisible unit. In traditional web design, disclaimers are often relegated to the footer or small print. In the AI era, this "visual distance" causes Retrieval Separation, where an AI agent cites your benefit claim ("This supplement boosts energy") while ignoring the safety warning ("Not for heart patients"), creating massive liability for regulated brands.

The Problem: The "Fine Print Paradox"

For decades, compliance teams have been satisfied if a disclaimer exists somewhere on the page. Humans understand that the asterisk (*) next to a claim corresponds to the text at the bottom.

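AI models do not follow asterisks. The character survives extraction, but the visual link between the claim and the footnote it points to does not.

When a RAG (Retrieval-Augmented Generation) system scrapes your page, it splits the text into chunks and embeds each chunk as a vector.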

- Chunk A: "Product X returns 15% APY." (High relevance to user query).

- Chunk B: "Past performance is not indicative of future results." (Low relevance, visually distant).

The Risk:

The AI retrieves Chunk A because it answers the user's question perfectly. It ignores Chunk B because it looks like generic footer noise.

Result: The AI generates an answer that reads as illegal financial advice or dangerous medical guidance, citing you as the source. You may carry the liability for that hallucination because your content structure allowed the separation.
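To make the separation concrete, here is a minimal sketch of a fixed-size word chunker. It is an assumption for illustration only: real RAG pipelines use their own splitters, and the 200-word chunk size and the 1,000 words of placeholder body copy are hypothetical.

```python
def chunk_words(text: str, size: int) -> list[str]:
    """Split text into consecutive chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def chunks_containing(chunks: list[str], phrase: str) -> list[int]:
    """Return the indexes of every chunk that contains `phrase` verbatim."""
    return [i for i, chunk in enumerate(chunks) if phrase in chunk]


def is_coupled(page: str, claim: str, disclaimer: str, size: int = 200) -> bool:
    """True if at least one chunk holds both the claim and the disclaimer.

    A phrase that straddles a chunk boundary is not found by this check.
    """
    chunks = chunk_words(page, size)
    shared = set(chunks_containing(chunks, claim)) & set(
        chunks_containing(chunks, disclaimer)
    )
    return bool(shared)


CLAIM = "Product X returns 15% APY."
DISCLAIMER = "Past performance is not indicative of future results."
BODY_COPY = " ".join(["filler"] * 1000)  # placeholder for 1,000 words of body text

# Footer layout: claim at the top, disclaimer after the body copy.
footer_layout = f"{CLAIM} {BODY_COPY} {DISCLAIMER}"
# Adjacent layout: disclaimer immediately after the claim.
adjacent_layout = f"{CLAIM} {DISCLAIMER} {BODY_COPY}"

print(is_coupled(footer_layout, CLAIM, DISCLAIMER))
print(is_coupled(adjacent_layout, CLAIM, DISCLAIMER))
```

With the footer layout the claim lands in the first chunk and the disclaimer in the last one, so a retriever that returns only the best-matching chunk never sees the warning.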

The Solution: Semantic Coupling

To ensure safety, you must move from "Visual Compliance" to "Semantic Compliance." You cannot rely on layout; you must rely on Proximity and Code Structure.

1. The "Disclaimer-First" Pattern

Do not bury warnings. Place them immediately before or after the claim in the HTML structure.

- Bad: Claim ... [1000 words] ... Disclaimer.

- Good: Disclaimer ... Claim. Or Claim ... (Immediate Disclaimer).
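One rough way to audit this pattern is to count the words that sit between a claim and its disclaimer in the extracted page text. The sketch below assumes both strings appear verbatim in the page; the `MAX_GAP_WORDS` threshold is a hypothetical value, not a published limit.

```python
MAX_GAP_WORDS = 50  # hypothetical threshold; tune it to your own chunk size


def word_gap(page: str, claim: str, disclaimer: str) -> int:
    """Count the words between the claim and the disclaimer, in either order.

    Raises ValueError if either string is missing from the page.
    """
    claim_at = page.index(claim)
    disclaimer_at = page.index(disclaimer)
    if claim_at <= disclaimer_at:
        between = page[claim_at + len(claim):disclaimer_at]
    else:
        between = page[disclaimer_at + len(disclaimer):claim_at]
    return len(between.split())


def passes_proximity(page: str, claim: str, disclaimer: str) -> bool:
    """True if the disclaimer sits within MAX_GAP_WORDS of the claim."""
    return word_gap(page, claim, disclaimer) <= MAX_GAP_WORDS


claim = "This supplement boosts energy."
disclaimer = "Not for heart patients."
bad_page = f"{claim} {' '.join(['filler'] * 1000)} {disclaimer}"
good_page = f"{disclaimer} {claim}"

print(word_gap(bad_page, claim, disclaimer), passes_proximity(bad_page, claim, disclaimer))
print(word_gap(good_page, claim, disclaimer), passes_proximity(good_page, claim, disclaimer))
```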

2. The <aside> Injection

Use semantic HTML tags to signal the relationship.

- Wrap the claim and the disclaimer in the same <section> or <article> parent.

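- Use <aside> for the disclaimer, but place it inside the same parent block as the claim, directly after the claim's paragraph, not outside the block. (HTML does not allow an <aside> inside a <p> element, so it must be a sibling of the paragraph.)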
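The nesting rule can be checked mechanically. The following sketch uses Python's standard `html.parser` to render a claim with its warning and to verify that every `<aside>` has a `<section>` or `<article>` ancestor. The function and class names are hypothetical, and the check is deliberately simple: it does not confirm that the claim itself is in the same parent.

```python
from html import escape
from html.parser import HTMLParser


def render_coupled(claim: str, warning: str) -> str:
    """Render a claim and its warning as siblings inside one <section>."""
    return (
        "<section>\n"
        f"  <p>{escape(claim)}</p>\n"
        f"  <aside>{escape(warning)}</aside>\n"
        "</section>"
    )


class AsideChecker(HTMLParser):
    """Records, for each <aside>, whether it has a <section>/<article> ancestor."""

    def __init__(self) -> None:
        super().__init__()
        self.stack: list[str] = []
        self.asides: list[bool] = []

    def handle_starttag(self, tag, attrs):
        if tag == "aside":
            self.asides.append(any(t in ("section", "article") for t in self.stack))
        self.stack.append(tag)

    def handle_endtag(self, tag):
        # Pop back to the matching open tag, tolerating unclosed elements.
        if tag in self.stack:
            while self.stack.pop() != tag:
                pass


def asides_are_wrapped(html: str) -> bool:
    """True if the page has at least one <aside> and all of them are wrapped."""
    checker = AsideChecker()
    checker.feed(html)
    return bool(checker.asides) and all(checker.asides)


good = render_coupled("This supplement boosts energy.", "Not for heart patients.")
bad = (
    "<section><p>This supplement boosts energy.</p></section>"
    "<footer><aside>Not for heart patients.</aside></footer>"
)
print(asides_are_wrapped(good), asides_are_wrapped(bad))
```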

3. Robots.txt for Liability

As discussed in our Robots.txt Strategy Guide, if a specific section of your site is high-risk (e.g., experimental features), you may want to block GPTBot from that specific directory entirely to prevent any risk of misinterpretation.
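Before shipping a rule like this, it is worth confirming that it blocks what you expect. The sketch below parses a robots.txt body with Python's standard `urllib.robotparser`; the `/labs/` directory and the `example.com` URLs are hypothetical placeholders.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep GPTBot out of the high-risk directory only.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /labs/

User-agent: *
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if `user_agent` may fetch `url` under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_allowed(ROBOTS_TXT, "GPTBot", "https://example.com/labs/beta-feature"))
print(is_allowed(ROBOTS_TXT, "GPTBot", "https://example.com/products/"))
```

Keep in mind that robots.txt is a request to crawlers, so this check confirms the rule you wrote, not the behavior of any particular bot.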

Technical Implementation: The ContentWarning Schema

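You can use Schema.org markup to explicitly label text as a warning or disclaimer. The label here is assembled from existing types such as MedicalWebPage and MedicalContraindication. This gives the AI a machine-readable "label" that can persist even if the prose itself is summarized.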

JSON

    <script type="application/ld+json">
    {
      "@context": "https://schema.org",
      "@type": "MedicalWebPage",
      "name": "Energy Supplement X",
      "audience": {
        "@type": "Audience",
        "audienceType": "Adults"
      },
      "reviewedBy": {
        "@type": "Person",
        "name": "Dr. Jane Doe"
      },
      "hasPart": [
        {
          "@type": "WebPageElement",
          "text": "Energy Supplement X may increase heart rate."
        },
        {
          "@type": "WebPageElement",
          "name": "Heart Condition Warning",
          "text": "Do not take this product if you have a history of arrhythmia.",
          "encodingFormat": "text/plain",
          "about": {
            "@type": "MedicalContraindication",
            "name": "Heart Condition Warning"
          }
        }
      ]
    }
    </script>

Developer Note:

For financial sites, use the FinancialProduct schema and set feesAndCommissionsSpecification directly on the product object, alongside the claim. This creates an explicit data link between the offer and its disclosure that is much harder for an AI to drop.
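As a minimal sketch of that note, the helper below builds the JSON-LD in Python so the claim and the disclosure are always emitted together. `FinancialProduct`, `description`, and `feesAndCommissionsSpecification` are Schema.org terms; the function name and the example fee text are hypothetical.

```python
import json


def financial_product_jsonld(name: str, claim: str, fees_disclosure: str) -> str:
    """Return a JSON-LD <script> tag that carries the claim and its disclosure."""
    data = {
        "@context": "https://schema.org",
        "@type": "FinancialProduct",
        "name": name,
        "description": claim,
        "feesAndCommissionsSpecification": fees_disclosure,
    }
    # Escape "</" so text inside the JSON cannot close the script element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical values for illustration only.
tag = financial_product_jsonld(
    name="Product X",
    claim="Product X returns 15% APY.",
    fees_disclosure="0.5% annual management fee. Returns are not guaranteed.",
)
print(tag)
```

Generating the markup from one function call, rather than hand-editing two separate fields, makes it harder to publish the claim without its disclosure.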

The "Token Budget" of Compliance

Legal teams love long disclaimers. "This text must be 500 words long."

From an AEO perspective, this is dangerous.

As we analyzed in our Token Efficiency Audit, if your disclaimer is 1,000 tokens long, it might consume the AI's entire "context budget" for that section. The AI might summarize it as "Terms apply"—which is legally insufficient.

The Fix:

- Summarize for Robots: Create a concise, 50-word "AI Summary" of the disclaimer (e.g., "Not for use by pregnant women. Returns not guaranteed.").

- Inject via Meta: Place this summary in a <meta name="ai-disclaimer" content="..."> tag (a custom, non-standard meta name, so treat it as a supplement rather than a guarantee) or inject it into the abstract schema field. This increases the chance the AI repeats the core warning.
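The two steps above can be sketched as a small helper that enforces the word limit and renders the tag. It assumes word count is an acceptable stand-in for tokens, which is only an approximation, and the `ai-disclaimer` name is the custom one used in this article, not a recognized standard.

```python
from html import escape

MAX_SUMMARY_WORDS = 50  # the article's target length for the "AI Summary"


def ai_disclaimer_meta(summary: str, max_words: int = MAX_SUMMARY_WORDS) -> str:
    """Render the summary as a custom meta tag, rejecting overlong text."""
    word_count = len(summary.split())
    if word_count > max_words:
        raise ValueError(f"summary has {word_count} words, limit is {max_words}")
    # quote=True also escapes double quotes so the attribute stays well-formed.
    return f'<meta name="ai-disclaimer" content="{escape(summary, quote=True)}">'


print(ai_disclaimer_meta("Not for use by pregnant women. Returns not guaranteed."))
```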

Comparison: Human vs. AI Compliance

| Feature | Human Compliance | AI Compliance |
| --- | --- | --- |
| Placement | Footer / Bottom of Page | Immediate Proximity (Next to Claim) |
| Visual Cue | Asterisk (*) or Small Font | Semantic Grouping (HTML/JSON) |
| Length | Comprehensive (Legalese) | Concise (Token Efficient) |
| Parsing | Visual Scan | Vector Chunking |
| Risk | User ignores fine print | AI decouples warning from claim |

Strategic Advantage: The "Safety Wrapper"

Regulated industries (Pharma, Finance, Legal) are terrified of AI. Many are blocking bots entirely.

This is an opportunity.

If you can prove that your site is "AI Safe"—that your warnings are architecturally inseparable from your claims—you can confidently allow bots.

- Result: You become the only visible source in your niche because your competitors are hiding behind firewalls.

- The "Safety Wrapper" Strategy: Use the LLMs.txt Standard to serve a special version of your content to bots where every claim is explicitly wrapped with its warning in a single Markdown block.
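A bot-facing version like this can be generated rather than written by hand. The sketch below is one possible layout, assumed for illustration: each claim and its warning are emitted as a single Markdown blockquote so a splitter that breaks on blank lines keeps them together. The function names and the blockquote format are hypothetical, not part of any llms.txt specification.

```python
def wrap_claim(claim: str, warning: str) -> str:
    """Return one Markdown block that holds a claim and its warning together."""
    return f"> **Claim:** {claim}\n> **Warning:** {warning}"


def safety_wrapped_markdown(title: str, pairs: list[tuple[str, str]]) -> str:
    """Build a bot-facing Markdown page from (claim, warning) pairs."""
    blocks = [wrap_claim(claim, warning) for claim, warning in pairs]
    return f"# {title}\n\n" + "\n\n".join(blocks) + "\n"


page = safety_wrapped_markdown(
    "Energy Supplement X",
    [
        ("This supplement boosts energy.", "Not for heart patients."),
        ("Product X returns 15% APY.",
         "Past performance is not indicative of future results."),
    ],
)
print(page)
```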

Key Takeaways

- Kill the Footer: For AI, the footer doesn't exist. If a warning is crucial, move it into the main body content, adjacent to the claim it modifies.

- Semantic Locking: Use HTML (<section>) and JSON-LD (hasPart) to programmatically bind the claim and the disclaimer. Don't rely on visual layout alone.

- Conciseness is Safety: A 50-word warning is more likely to be cited than a 500-word block of legalese. Write a "Bot Version" of your T&Cs.

- Schema is Evidence: If you get sued because an AI hallucinated advice, having MedicalContraindication schema helps document that you took reasonable technical steps to warn the machine.

- Test the Chunking: Run your page through a chunking and embedding pipeline (for example, a text splitter followed by an embedding model such as OpenAI's). See if the claim and the warning end up in the same "chunk." If they are split, redesign the page.

References & Further Reading

- Schema.org: MedicalEntity. Specialized schema for health warnings and contraindications.

- FTC: Dot Com Disclosures. Regulatory guidance on digital clarity, which parallels AI requirements.

- Website AI Score: Robots.txt Strategy. Managing bot access for high-liability zones.